FTC Alumni in AI Startups: Steering Future Tech Policy?

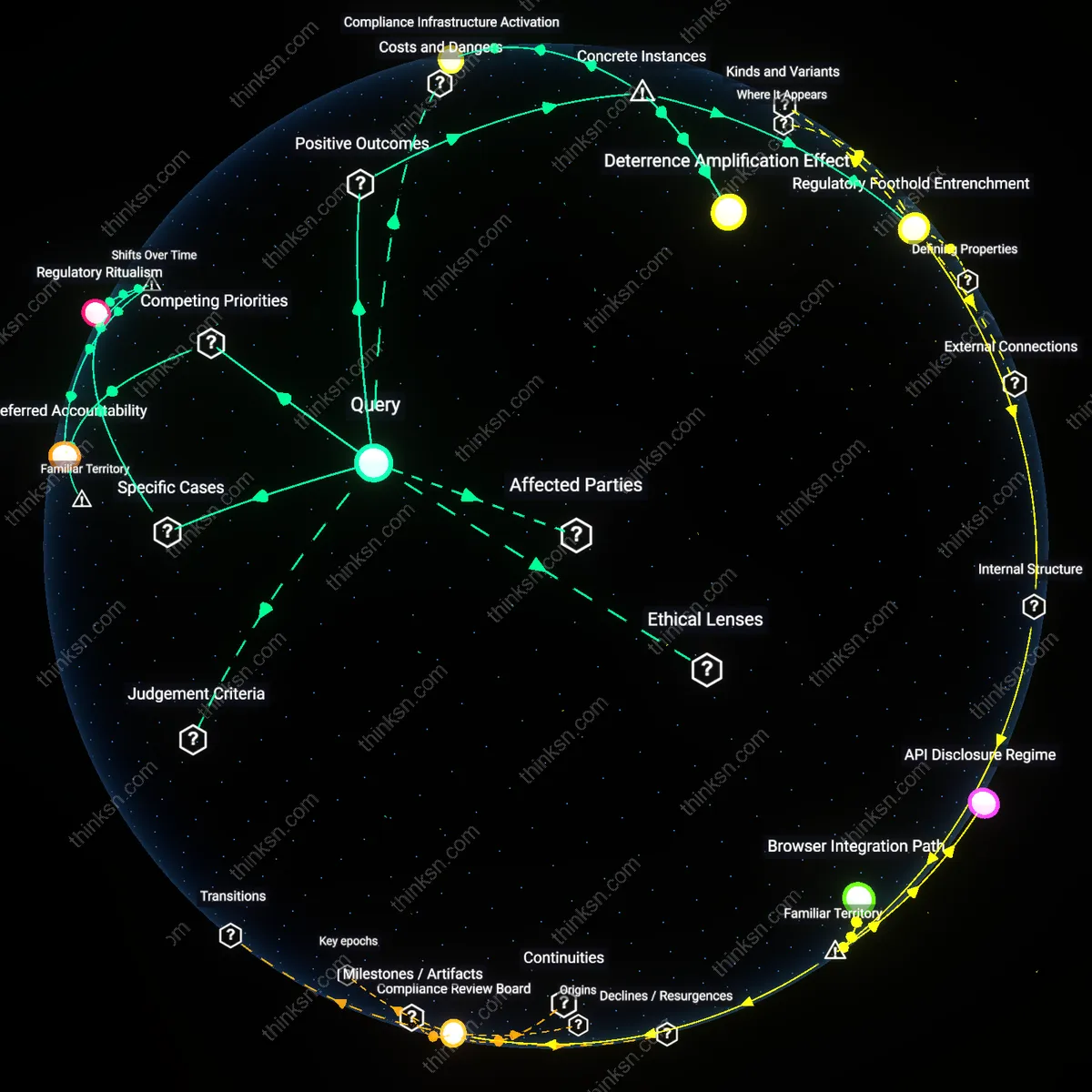

Analysis reveals 5 key thematic connections.

Key Findings

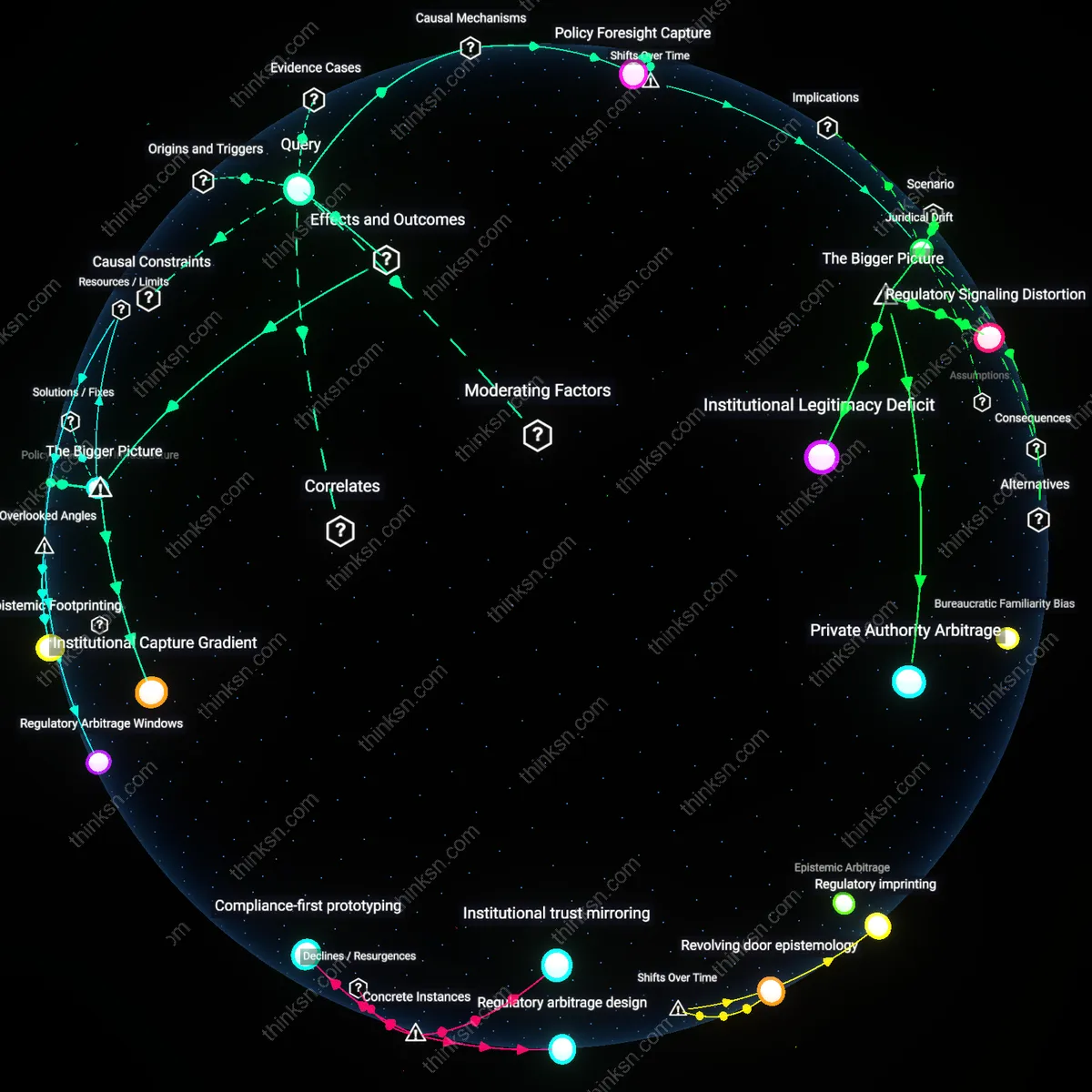

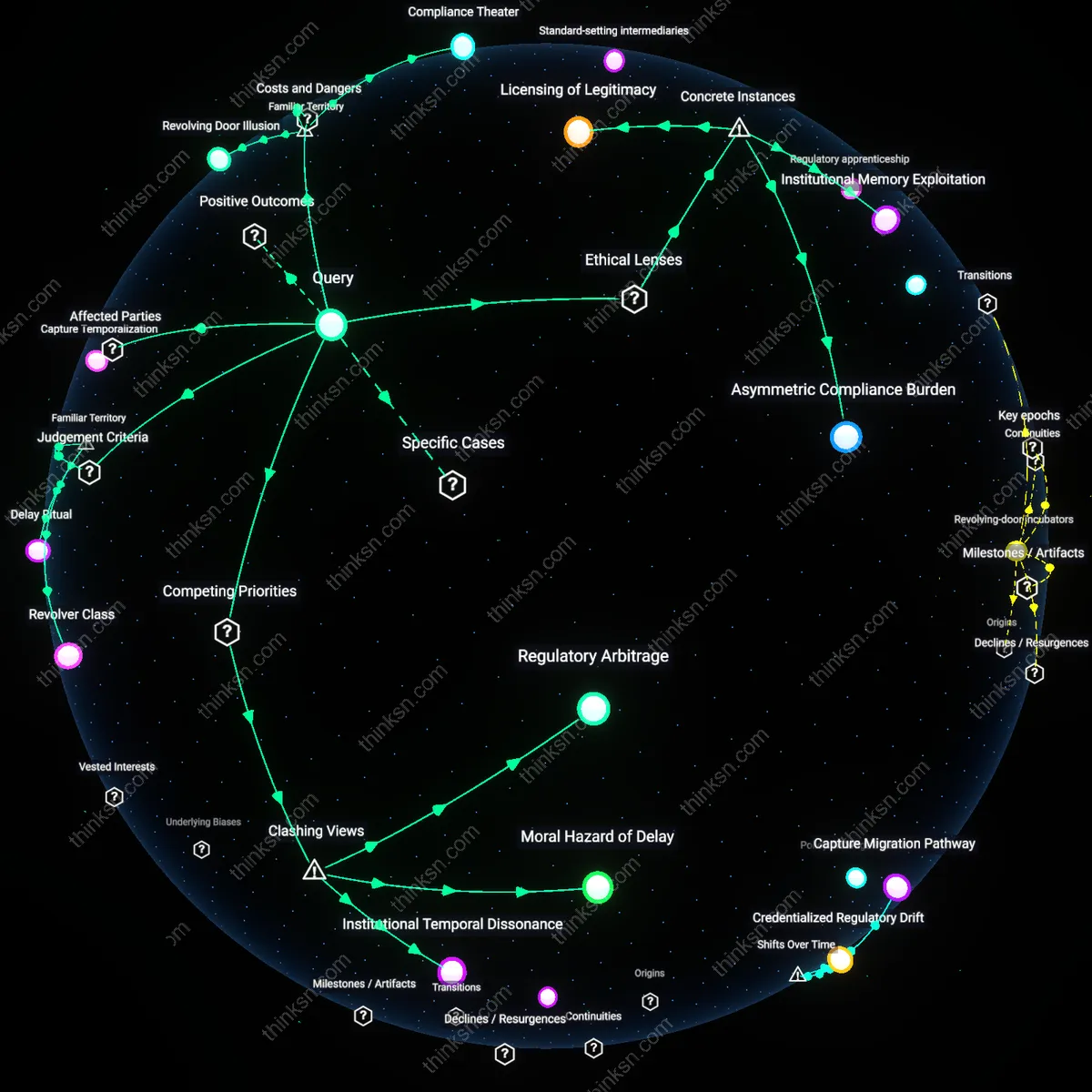

Regulatory Apprenticeship

The movement of former FTC commissioners into AI startups since the late 2010s reveals that regulatory experience has become a commodified asset in private AI development, signaling a shift from adversarial to anticipatory industry-state relations. The causal mechanism is a transfer of insider knowledge: commissioners who shaped enforcement doctrines during the post-2008 expansion of consumer protection in digital markets now apply their understanding of rulemaking thresholds to help startups design compliance into products preemptively, transforming former regulatory constraints into competitive advantages. The significance lies in how this trajectory, distinct from earlier revolving-door patterns focused on lobbying or penalties, institutionalizes regulatory logic within product development cycles, making compliance not a response but a precondition for market entry.

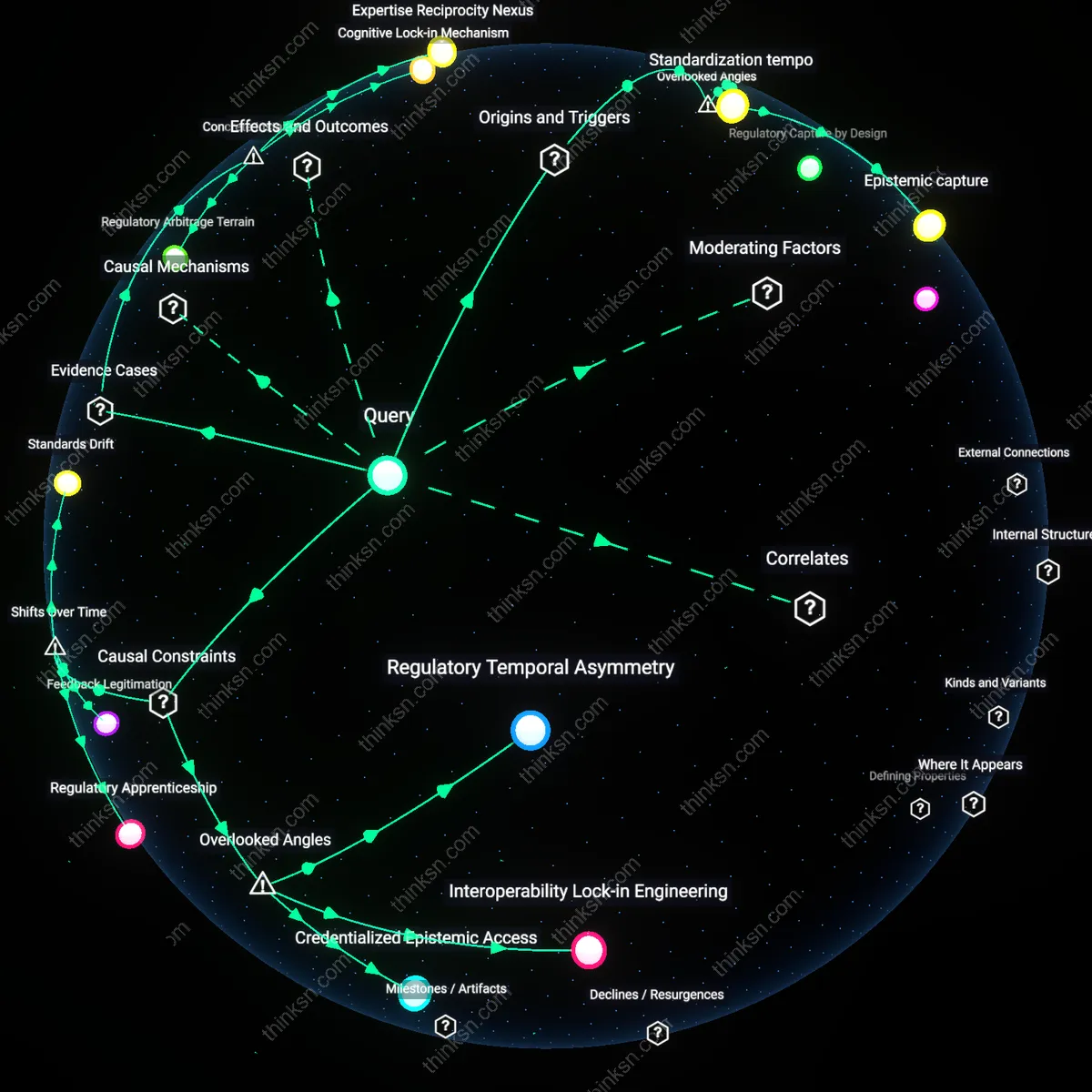

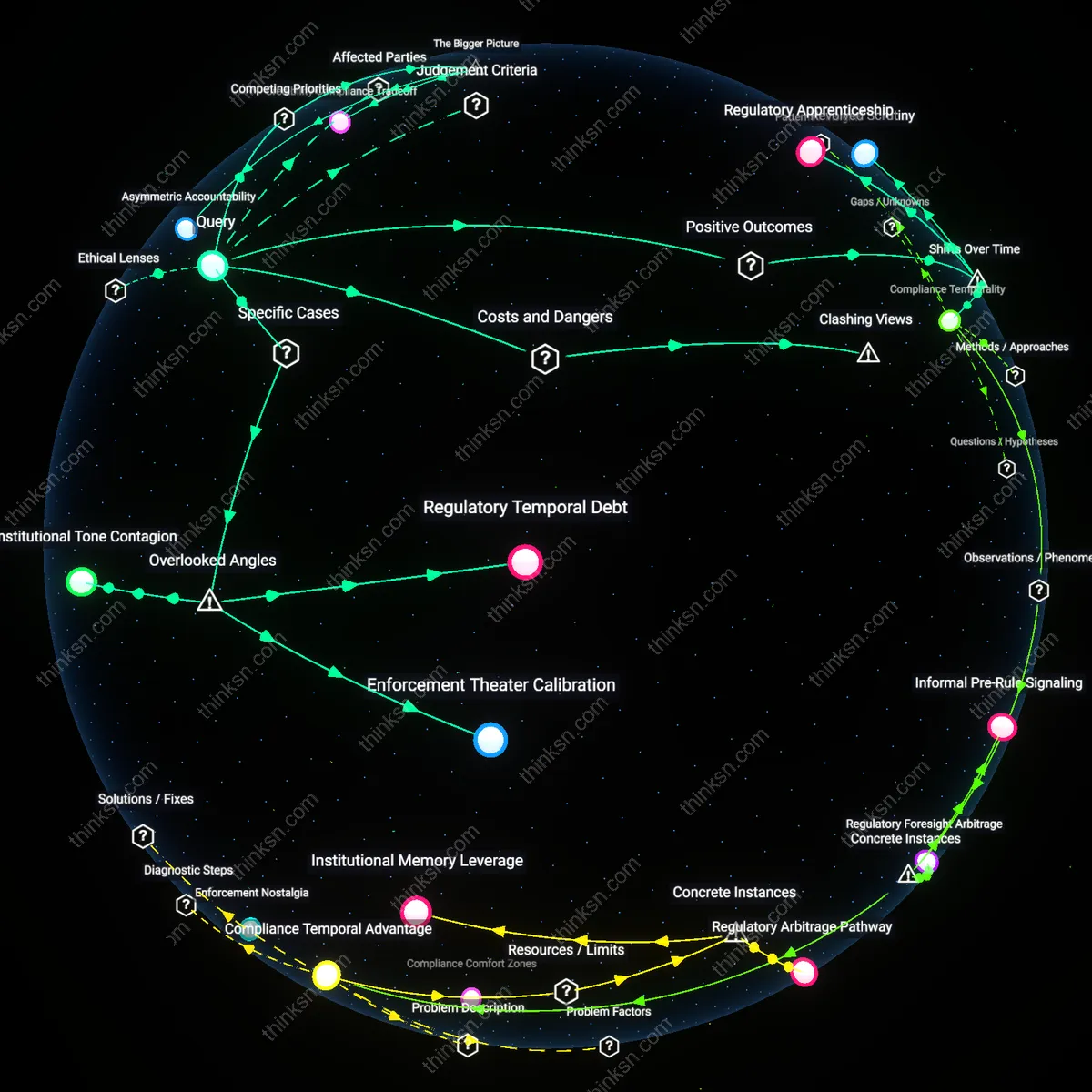

Policy Foresight Capture

The movement of FTC officials into AI firms after 2020 indicates that private actors now recruit regulatory personnel not for past connections but for their capacity to simulate future rulemaking scenarios, reflecting a shift from reactive legal navigation to proactive norm engineering. This operates through the internalization of once-public deliberative processes—such as risk-tiering or algorithmic impact assessment—into corporate strategy teams, where former commissioners act as embedded predictors of regulatory possibility spaces. What is non-obvious is that the temporal shift from enforcement-era expertise (circa 2010s) to foresight modeling (2020s) means regulation is no longer just influenced after the fact but is effectively staged in parallel to policymaking, distorting the democratic horizon of technology governance.

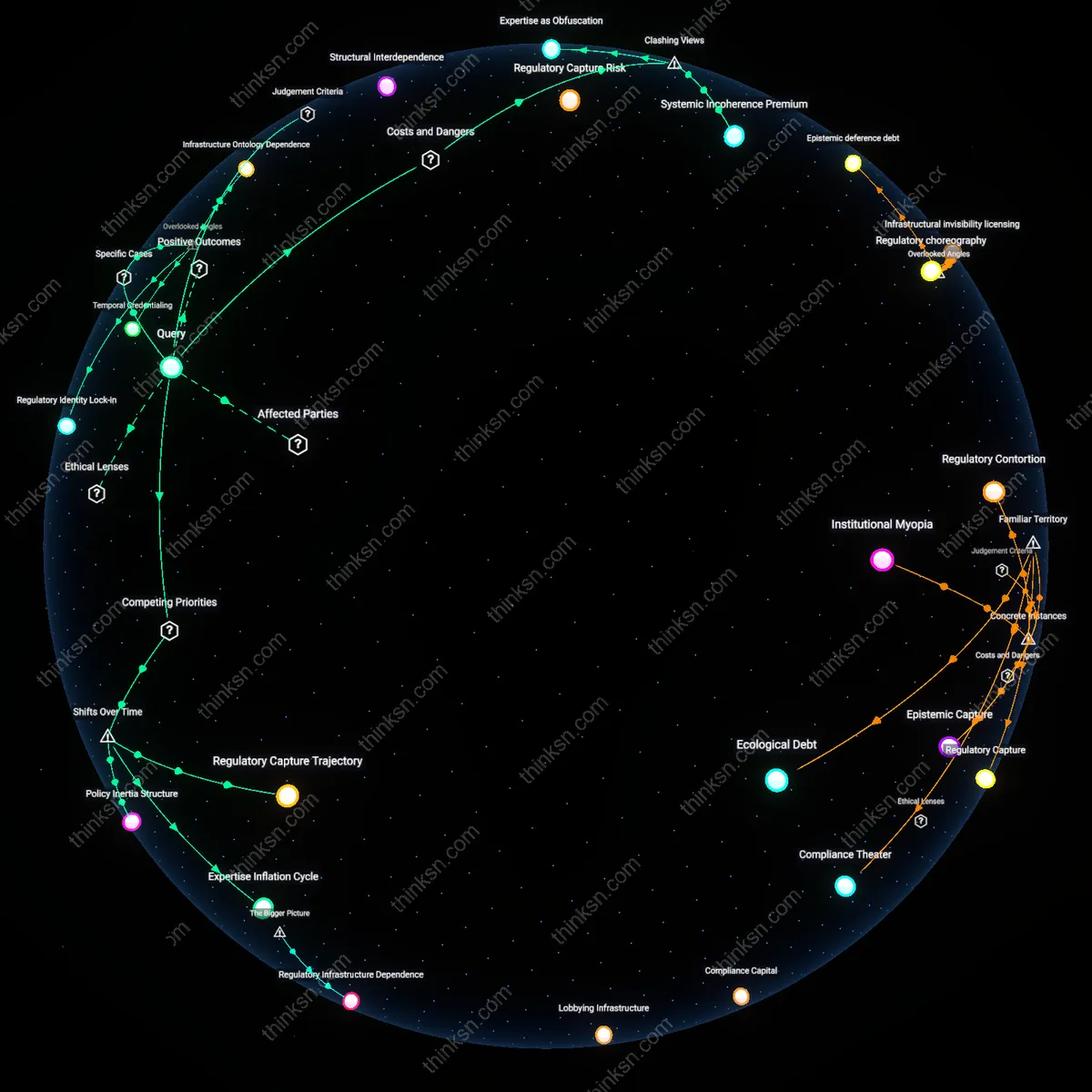

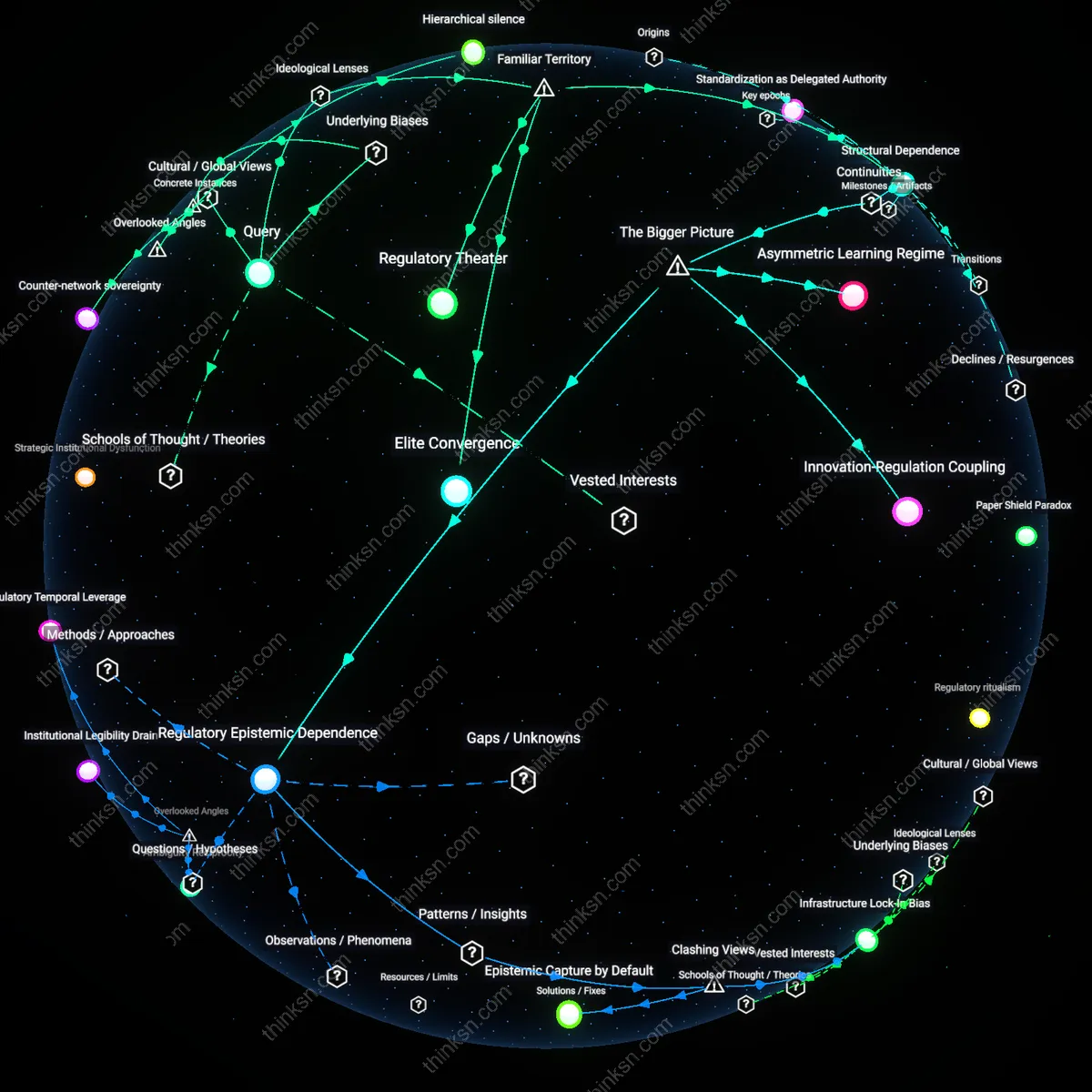

Juridical Drift

The recent pattern of ex-FTC leaders entering AI startups marks a transition from discrete acts of regulatory arbitrage to the systemic migration of legal interpretation norms from public institutions into private technical architectures. The mechanism is that former commissioners, socialized in pre-2010s antitrust and privacy doctrines, carry interpretive dispositions (such as the standard for deceptive practices or unfair data use) into the design of AI governance frameworks, subtly aligning internal compliance systems with their own past adjudicative reasoning rather than with evolving democratic mandates. The underappreciated consequence is that the continuity of an individual legal worldview over time, now embedded in code and corporate policy, produces a slow doctrinal drift in which technology policy is increasingly shaped by the personal jurisprudence of former regulators rather than by institutional or legislative evolution.

Policy Anticipation Infrastructure

The hiring of former FTC commissioners by AI startups catalyzes the formation of private policy anticipation infrastructure, wherein regulatory experience is repurposed to simulate future enforcement environments and pressure-test product roadmaps against likely governance trajectories. These individuals function as living predictive models, using their firsthand experience with bureaucratic incentives, inter-agency dynamics, and political risk tolerance to forecast which regulatory approaches are likely to materialize and which will stall. This shifts the balance of power in standard-setting processes, as startups backed by such expertise can act on forecasts rather than react to rules, for example by delaying transparency measures or resisting audits in the confidence that regulatory consensus will lag. The underappreciated dynamic is that this does not just accelerate corporate adaptation but actively distorts the evolution of technology policy by making it easier for private actors to exploit the time-value of regulatory uncertainty.

Institutional Capture Gradient

The migration of FTC commissioners into AI startups reveals an institutional capture gradient, where the movement of personnel follows asymmetries in resources, agility, and long-term vision between public and private sectors. Unlike traditional lobbying or campaign financing, this form of influence operates through embodied capital—the tacit knowledge, networks, and decision-making heuristics these individuals carry with them—allowing startups to reverse-engineer regulatory constraints from within the logic of the regulator. This gradient intensifies because AI policy remains fragmented and anticipatory, making experiential insight more valuable than formal regulations, which are scarce or unenforced. The non-obvious outcome is that regulatory bodies lose not only personnel but epistemic authority, as their former leaders begin shaping the very technologies they were meant to oversee—effectively turning public stewardship into a feedstock for private innovation.