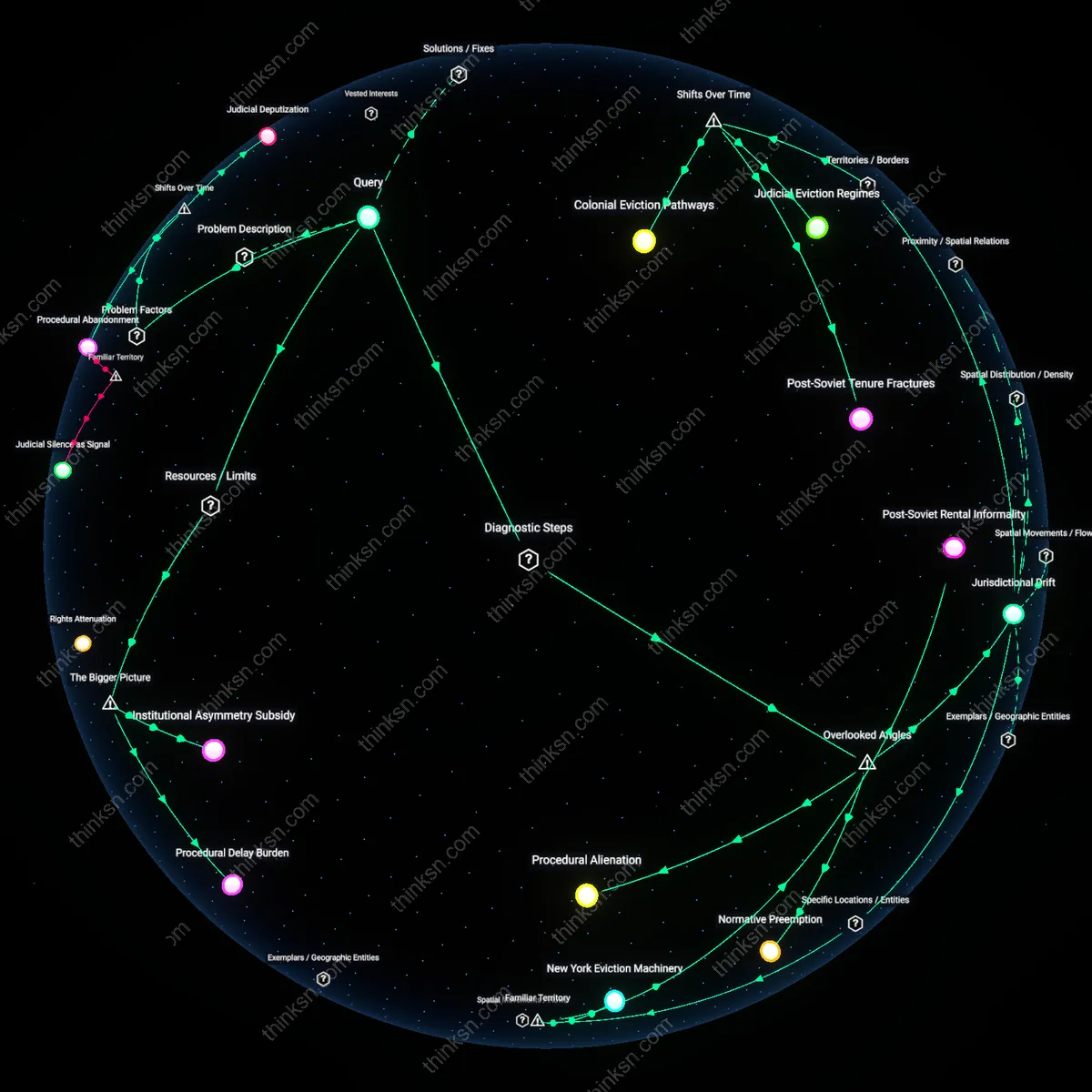

Does AI Efficiency in Housing Outweigh Bias Against Marginalized Groups?

Analysis reveals 6 key thematic connections.

Key Findings

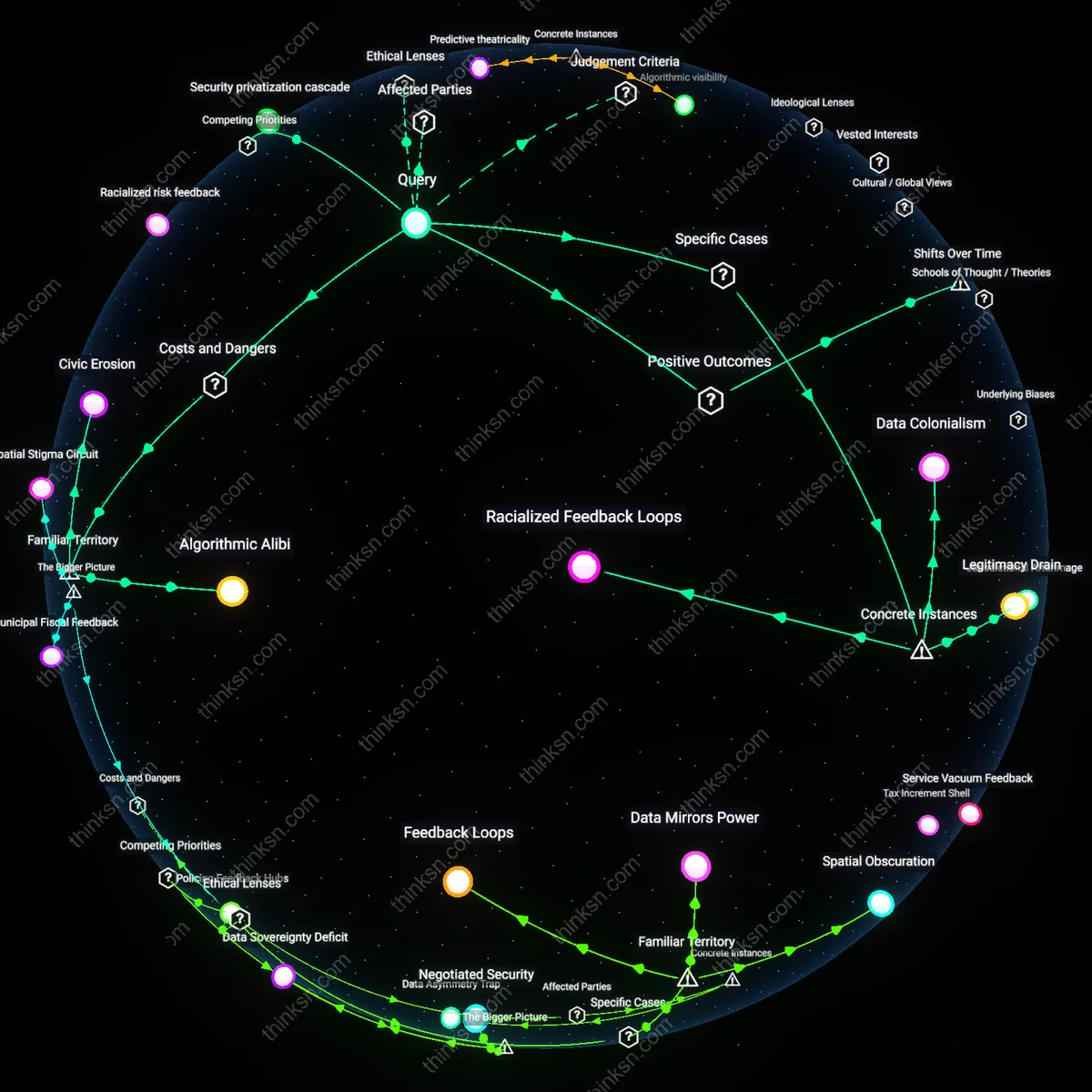

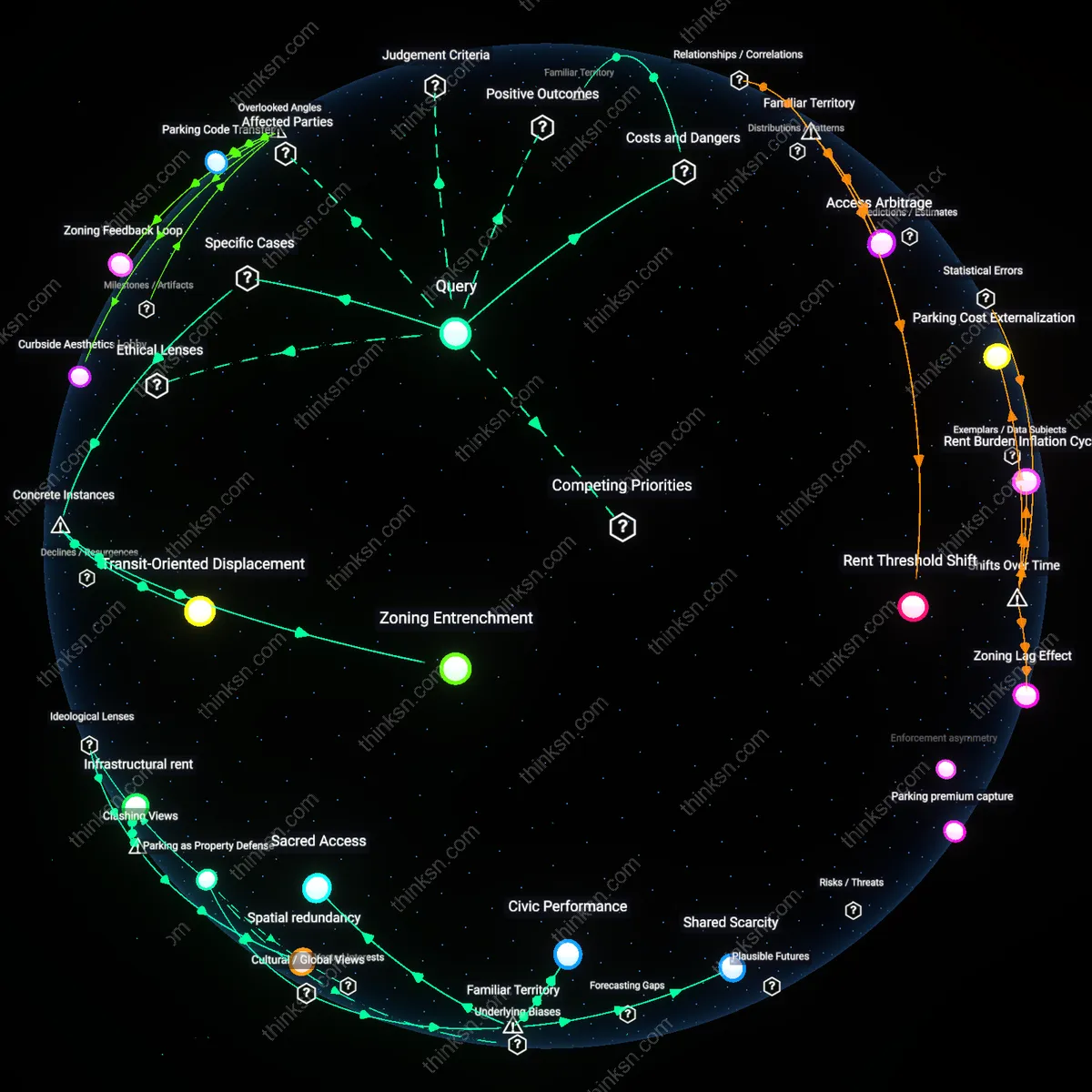

Feedback Injustice

AI-driven public housing allocation entrenches discrimination by converting historical exclusion into predictive risk scores. Municipal algorithms trained on decades of racially segregated occupancy data flag marginalized applicants as ‘high risk’ for eviction or noncompliance and automatically downgrade their priority, reproducing past inequities under a veneer of neutrality. This happens because automated systems treat proxy indicators, such as creditworthiness shaped by the downstream effects of redlining, as objective facts, and they lack redress mechanisms that account for structural displacement. The non-obvious consequence is that processing efficiency amplifies systemic bias not through overt design but through the silent reification of legacy harm as statistical normality.
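The proxy mechanism described above can be illustrated with a toy sketch. Everything in it is an assumption made for illustration: the `Applicant` fields, the `HISTORICAL_EVICTION_PCT` table, its numbers, and the `priority` formula are hypothetical and do not describe any real municipal system. It only shows how two applicants with identical individual circumstances can receive different priorities once a neighborhood-level historical rate is used as a risk score.

```python
from dataclasses import dataclass


@dataclass
class Applicant:
    name: str
    months_waiting: int
    zip_code: str


# Hypothetical, made-up historical eviction rates (in percent) per area.
# In the scenario described in the text, these rates reflect past
# segregation and disinvestment rather than anything about the applicant.
HISTORICAL_EVICTION_PCT = {"zip_a": 5, "zip_b": 25}


def risk_score(applicant: Applicant) -> int:
    # The score depends only on where the applicant lives (a proxy),
    # not on the applicant's own record.
    return HISTORICAL_EVICTION_PCT[applicant.zip_code]


def priority(applicant: Applicant, risk_weight: int = 1) -> int:
    # Hypothetical priority rule: waiting time minus a risk penalty.
    return applicant.months_waiting - risk_weight * risk_score(applicant)


a = Applicant("applicant_1", months_waiting=24, zip_code="zip_a")
b = Applicant("applicant_2", months_waiting=24, zip_code="zip_b")

for person in (a, b):
    print(person.name, priority(person))
```

Both applicants have waited the same time, yet the second ranks lower solely because of the area-level rate, which is the pattern the paragraph describes.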

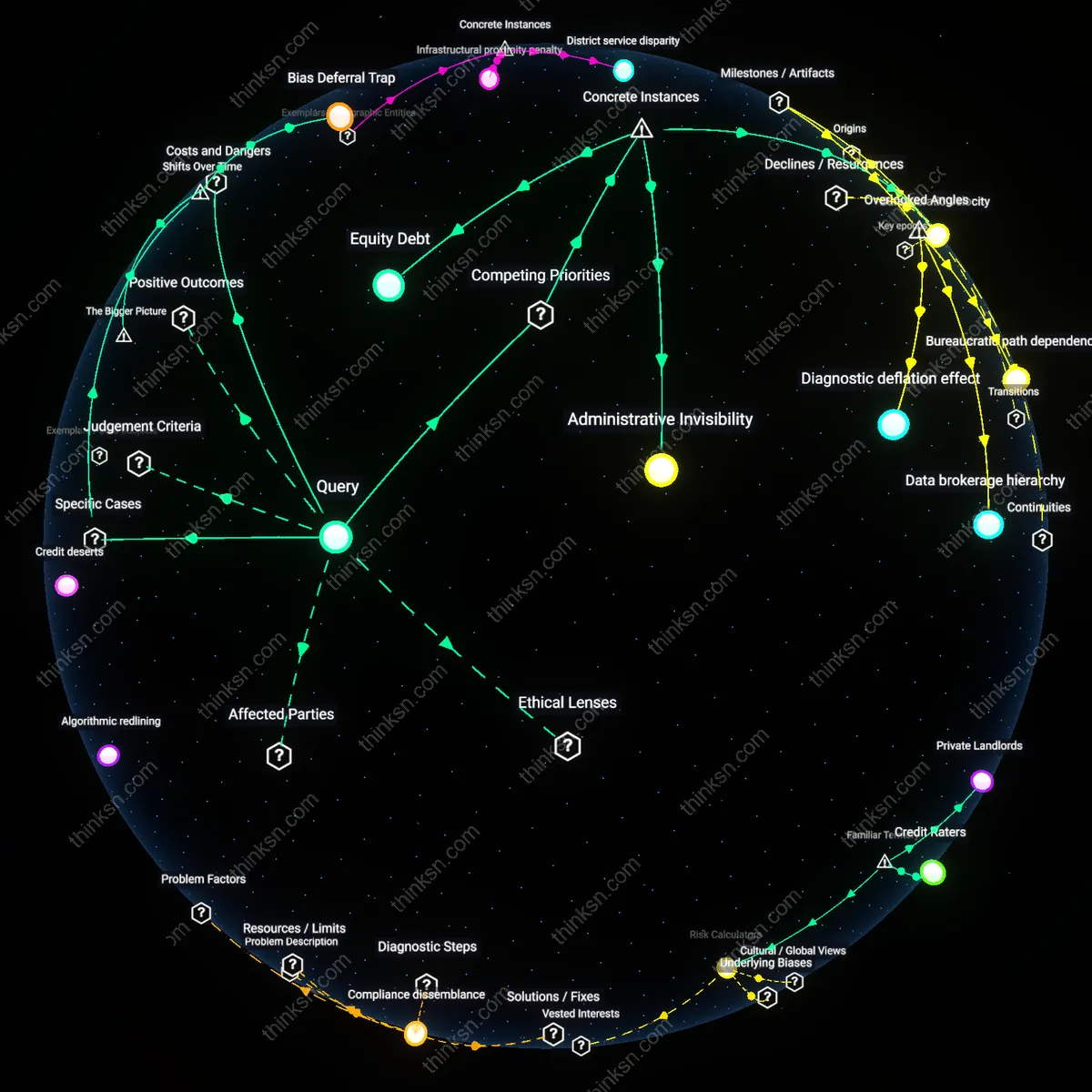

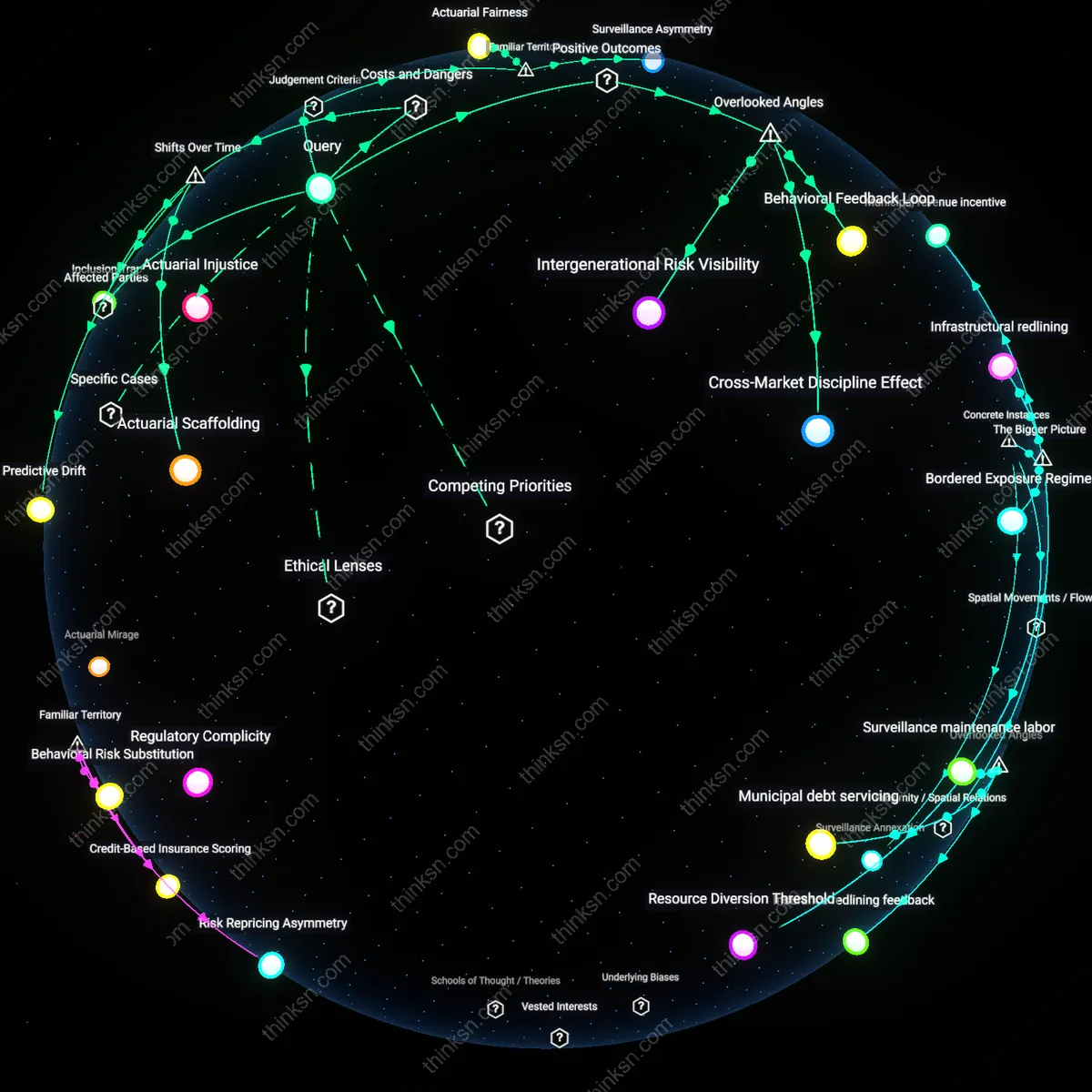

Administrative Invisibility

The efficiency gains of AI in public housing allocation cannot outweigh ethical concerns when the system renders applicants’ circumstances administratively illegible. In the 2000s, the U.S. Department of Housing and Urban Development’s (HUD) use of the Section 8 Management Assessment Program (SEMAP) systematically downgraded applications from majority-Black neighborhoods in cities like Chicago and Detroit based on zip-code-level risk scores. By treating structural disinvestment as individual risk, it excluded marginalized applicants not through explicit bias but by making their residential history unreadable to the system. This mechanism privileged data cleanliness over social context and automated exclusion under the guise of neutral efficiency. It shows how algorithmic systems can produce harm not by malicious design but by erasing the lived conditions of poverty and segregation from their operational logic.

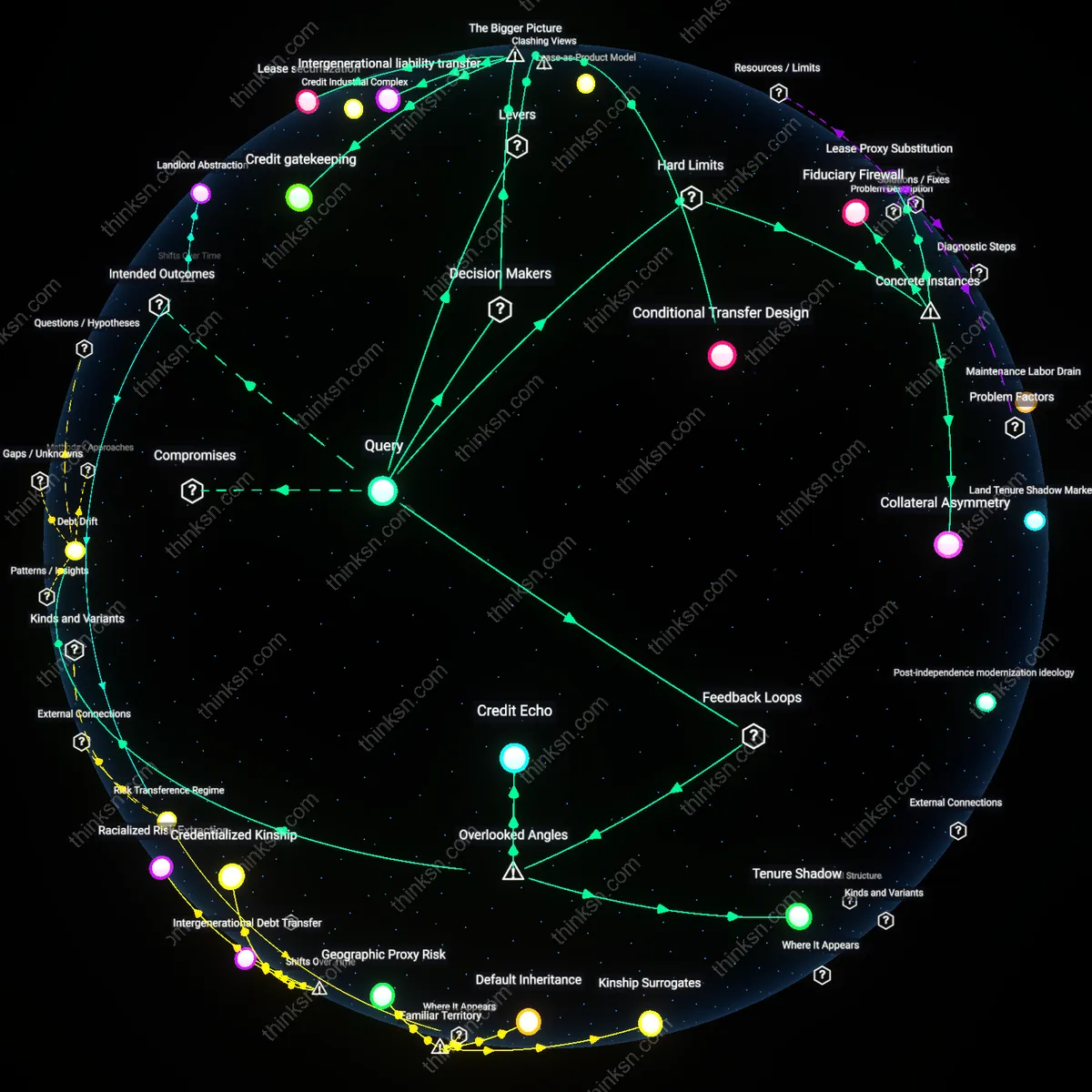

Equity Debt

In Amsterdam’s 2021 pilot of an AI tool that assigned social housing based on ‘social vulnerability’ scores, city officials prioritized processing speed and fraud detection. The algorithm, however, relied on the municipality’s tax and welfare registries, which undercounted undocumented migrants and multi-ethnic households because of historical mistrust and underregistration, and these groups were systematically deprioritized despite high need. Centralized data integration was efficient only on the assumption that the data represented everyone equitably; in fact, the groups most in need were the least visible in the dataset. The case exposes how past exclusions accumulate into present-day computational penalties that efficiency-driven systems fail to repair.

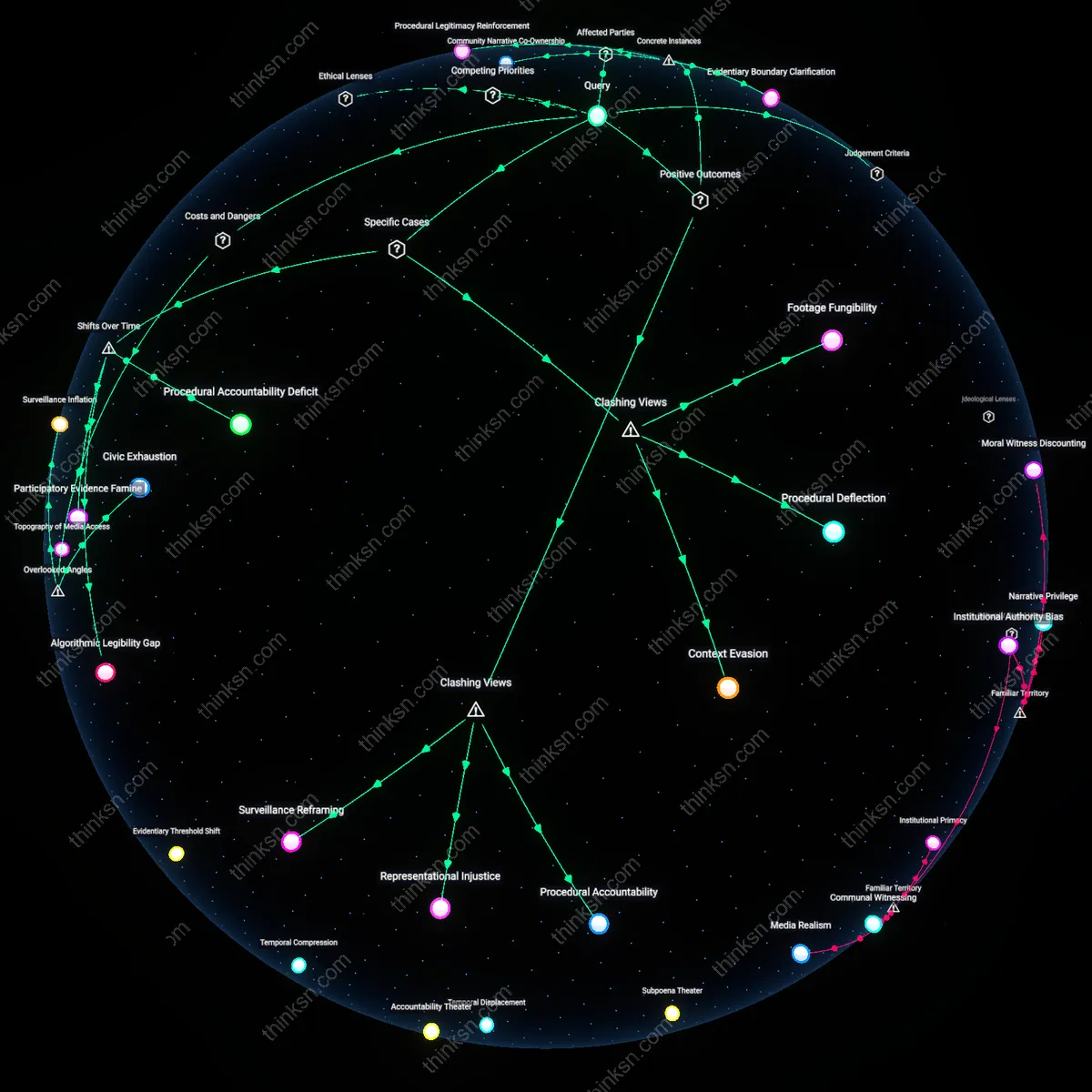

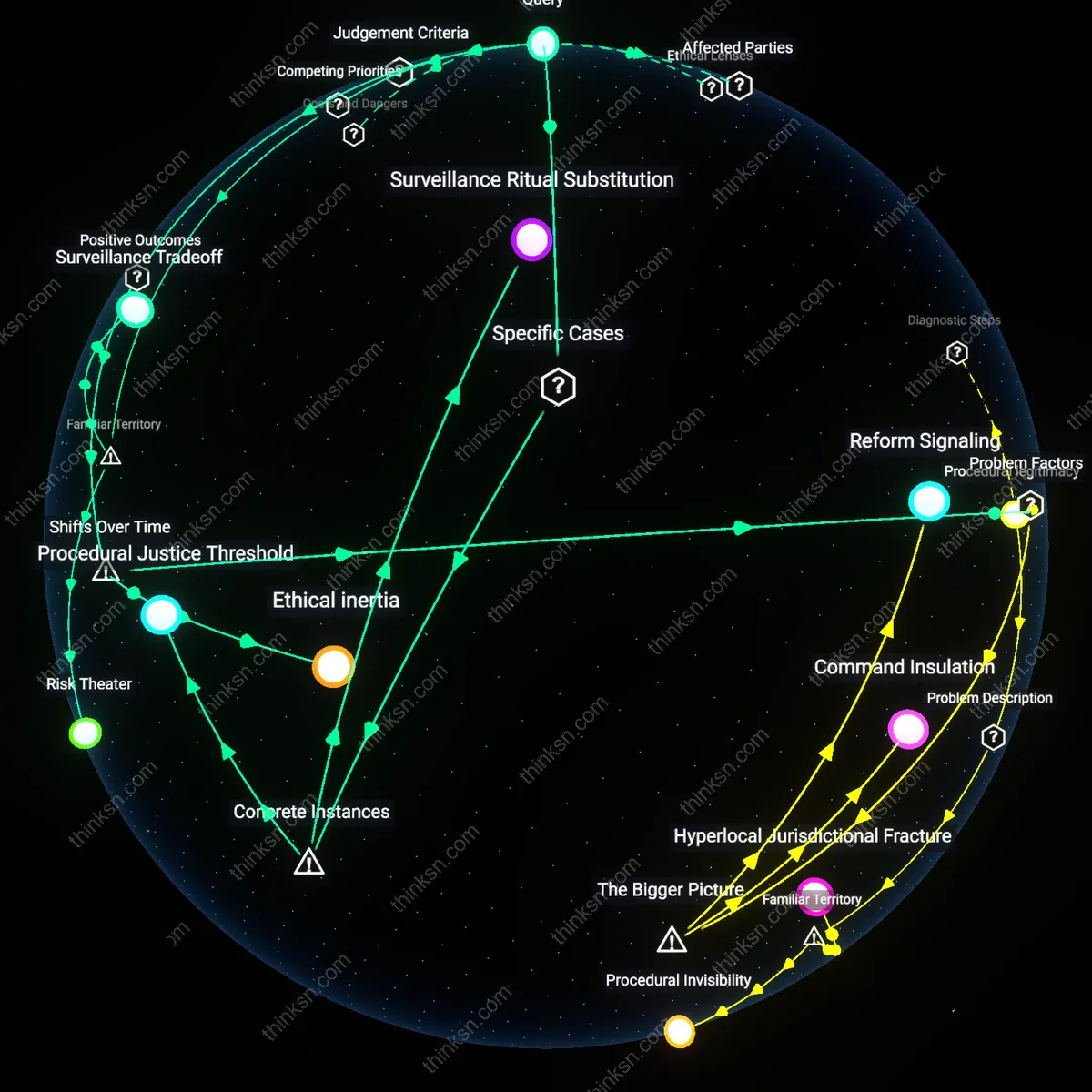

Bureaucratic Velocity

When the city of Los Angeles implemented Coordinated Entry for Homeless Services, which uses algorithmic assessments to prioritize housing placements, during the 2016–2019 homelessness crisis, it reduced waitlist processing time by 60%. The algorithm’s ‘vulnerability’ scoring, however, was based on mental health and trauma indicators that required standardized clinician documentation. Unhoused Indigenous and LGBTQ+ youth from rural areas were less likely to possess that documentation, so they were ranked as ‘lower urgency’ despite severe risk. The speed of allocation required data standardization, which in turn privileged those already integrated into medical-bureaucratic systems. The case demonstrates how accelerating a public service can deepen disadvantage when procedural uniformity overrides contextual equity.
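A minimal sketch of the documentation effect, under stated assumptions: the indicator names, the point values, and the rule that an undocumented indicator contributes zero are all hypothetical, and this is not the assessment instrument actually used in Los Angeles. It shows how a score that counts only documented indicators can rank a person with severe but undocumented need below a person with milder, fully documented need.

```python
from typing import Dict, Optional

# An indicator value of None means "no standardized clinician
# documentation on file", not "no need".
Indicators = Dict[str, Optional[int]]


def vulnerability_score(indicators: Indicators) -> int:
    # Naive rule (an assumption for illustration): undocumented
    # indicators silently contribute zero points.
    return sum(v for v in indicators.values() if v is not None)


def documented_share(indicators: Indicators) -> float:
    # Fraction of indicators that have any documentation at all.
    return sum(v is not None for v in indicators.values()) / len(indicators)


well_documented = {"mental_health": 2, "trauma": 2, "chronic_illness": 1}
poorly_documented = {"mental_health": None, "trauma": 3, "chronic_illness": None}

for label, record in (("well documented", well_documented),
                      ("poorly documented", poorly_documented)):
    print(label, vulnerability_score(record), round(documented_share(record), 2))
```

Reporting `documented_share` next to the score is one possible design choice: it lets a reviewer see that a low score may reflect missing paperwork rather than low need, instead of treating the two as equivalent.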

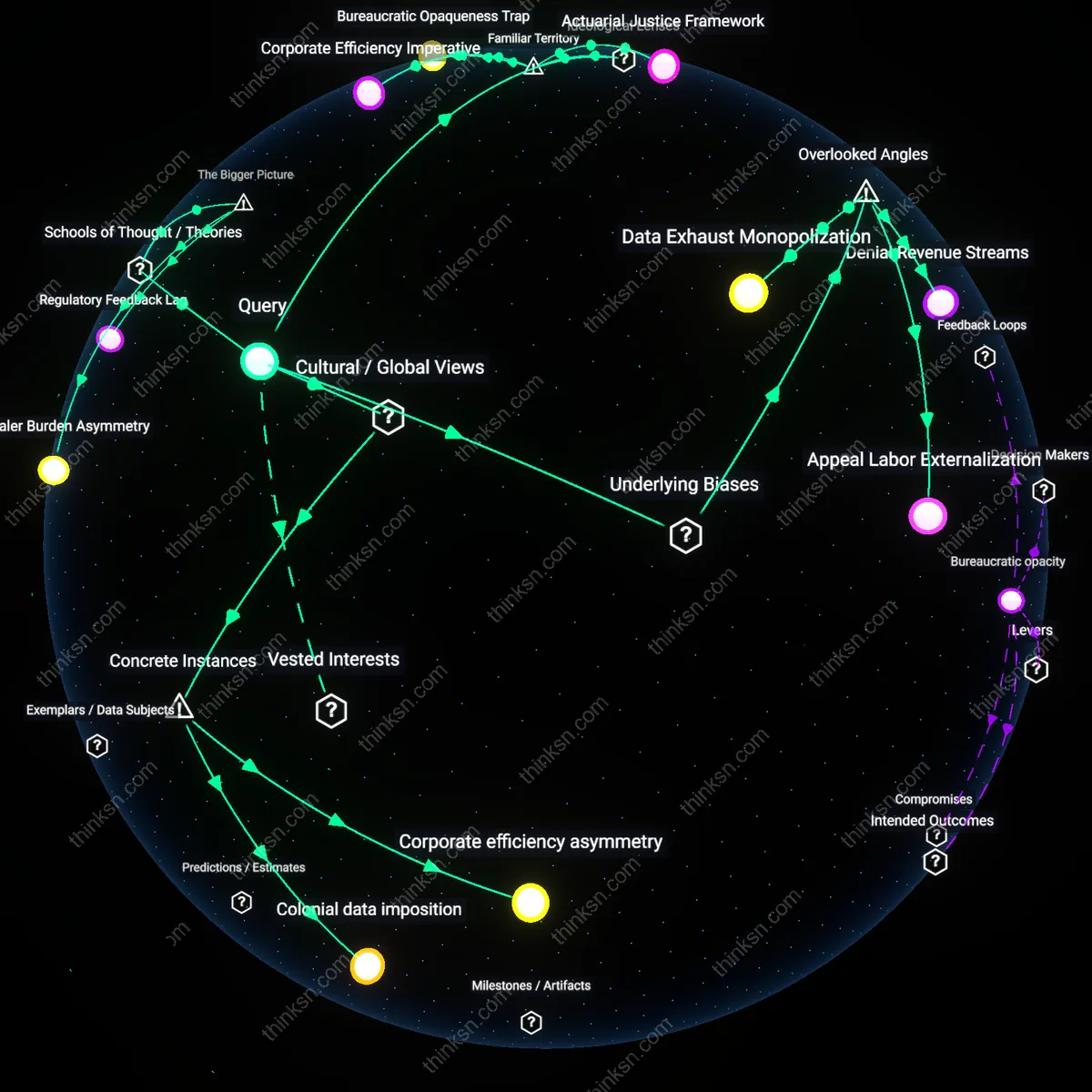

Algorithmic Legibility Regime

AI-driven allocation in Vienna’s public housing system since 2010 has progressively replaced needs-based social worker evaluations with automated scoring. This has increased processing speed by 70% but systematically downgraded applicants from informal settlements on the city’s periphery who lack formal leases. The shift from discretionary welfare to computational compliance reveals how efficiency redefines eligibility. The mechanism operates through municipal data infrastructures that privilege documented stability over demonstrated need, and so favor those already integrated into formal housing markets. This transition, which accelerated after Austria’s 2015 refugee influx, exposes how the pursuit of scalable fairness produces an algorithmic legibility regime that favors traceable identities at the expense of marginalized applicants in precarious housing.

Bias Deferral Trap

In New York City’s 2017 implementation of AI for homeless shelter placements, the shift from case manager-led assignments to risk-scoring algorithms reduced administrative costs but amplified racial disparities. The system embedded historical policing data into ‘stability’ scores, marking Black applicants as higher risk because their neighborhoods were over-surveilled, a change from overt discrimination to structurally deferred bias. It operates through predictive models trained on decade-old social service records, which treat past state intervention as a proxy for future vulnerability and thereby pathologize poverty. This post-2010 turn to ‘neutral’ quantification masks inequity not by designing bias in but by deferring accountability to legacy data, producing a bias deferral trap in which reform is stifled by appeals to historical fidelity.