Should You Stay in AI-Assisted Tech Writing or Switch to UX Research?

Analysis reveals 11 key thematic connections.

Key Findings

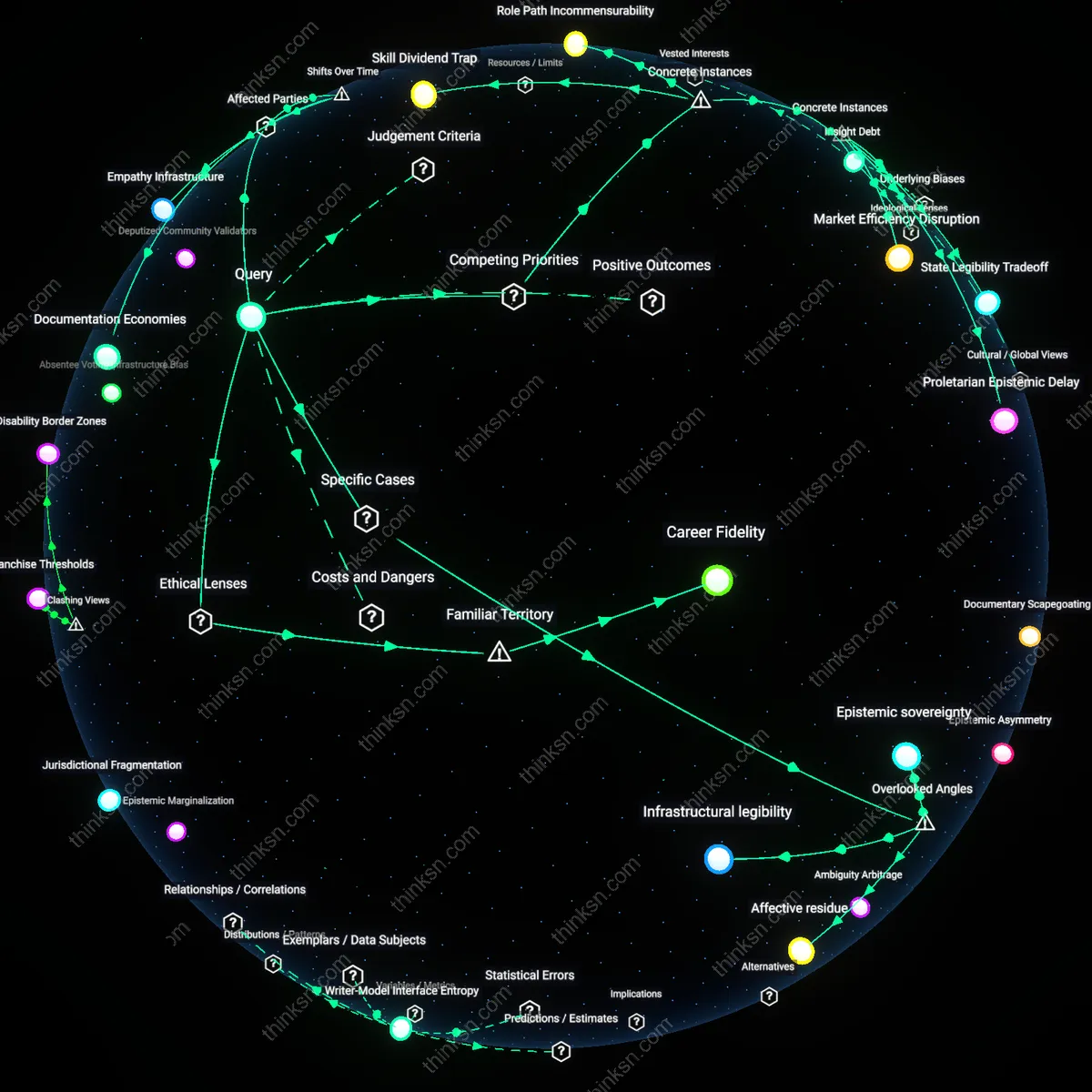

Documentation Economies

Choose the AI-assisted writing role if you expect sustained organizational efficiency in high-velocity technical sectors to depend on rapidly scalable, standardized documentation ecosystems. These ecosystems emerged decisively after 2015 with the industrialization of API-first product development at companies like Stripe and GitHub. In this shift, technical writers became systematizers of machine-readable knowledge flows, reducing release-cycle friction not through human interpretation but through predictable, pattern-based content architectures that integrate directly with development pipelines. On this view, the residual function of technical writing is no longer translation for users but stabilization for systems.

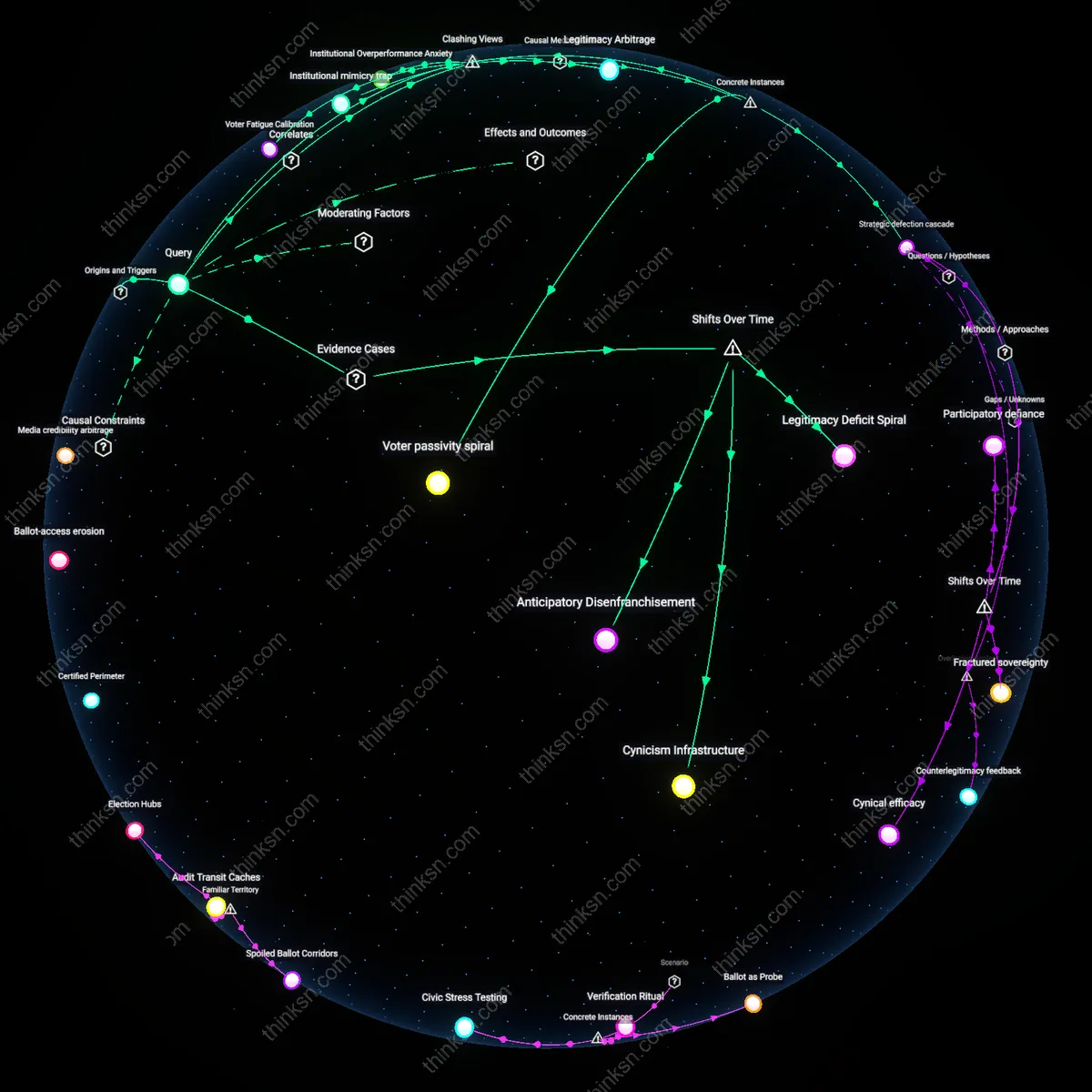

Empathy Infrastructure

Move into user-experience research if you believe the erosion of pre-digital observational methodologies since the early 2000s has made genuine behavioral insight a scarce organizational resource. As corporate design practice shifted toward quantitative A/B testing and algorithmic personalization, qualitative depth (such as longitudinal ethnographic probing or disability-inclusive co-design) came to be preserved mainly by specialized UX researchers embedded in human-centered innovation hubs like those at IDEO or the VA’s Office of Design and Innovation. On this reading, empathic rigor has become a counter-institutional infrastructure, necessary precisely because dominant product paradigms have automated away direct human contact.

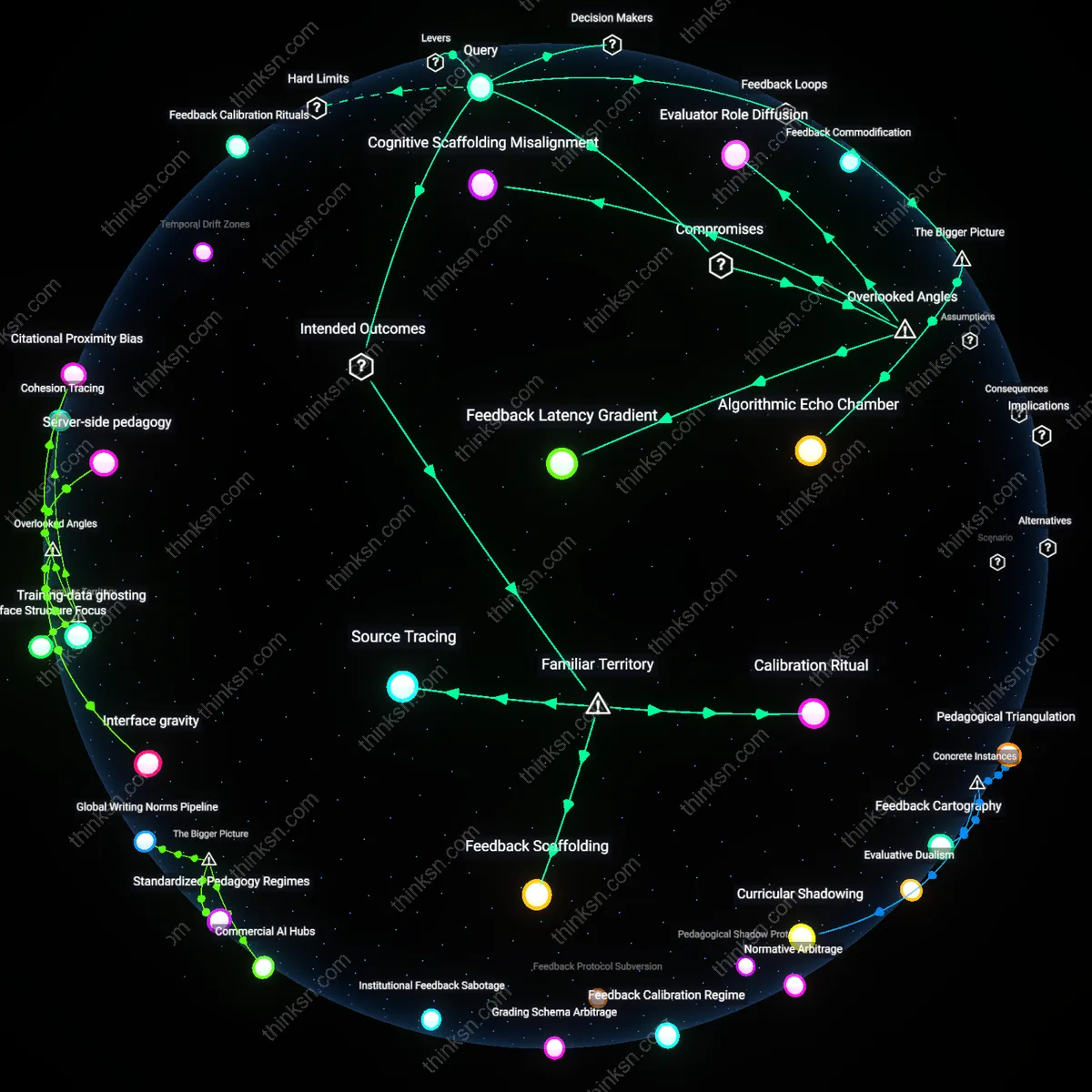

Automation Feedback Loops

Remain in AI-assisted technical writing if the post-2020 proliferation of generative AI tools means that content producers who train, correct, and refine AI outputs become the unseen source of the 'human insight' later attributed to autonomous systems. On platforms like GitHub Copilot or Google’s Duet AI, the argument goes, technical writers’ edits feed closed-loop improvement models that simulate understanding. This inversion suggests that human insight is increasingly extracted retroactively from technical roles, not reserved exclusively for traditionally 'insight-driven' fields like UX research.

Skill Dividend Trap

Staying in an AI-assisted technical writing role can lock professionals into a feedback loop in which efficiency gains from automation erode opportunities to develop empathetic inquiry. The example cited is IBM’s 2020 Documentation Modernization Initiative, where writers using AI templates gradually lost access to direct user feedback channels. The hidden career cost is that short-term productivity compromises long-term capacity for human-centered roles: optimization in one domain can silently extinguish competencies vital in another.

Insight Debt

Moving into user-experience research delays your impact, because human insight cycles are slower than algorithmic output. The example cited is Airbnb’s 2014 redesign, which required ethnographic fieldwork across 20 cities before revealing that trust hinged on high-quality photography, a finding that data alone could not surface. Prioritizing deep human understanding means sacrificing speed, and organizations (and individuals) that accept this trade-off build durable insight that no AI can replicate in real time.

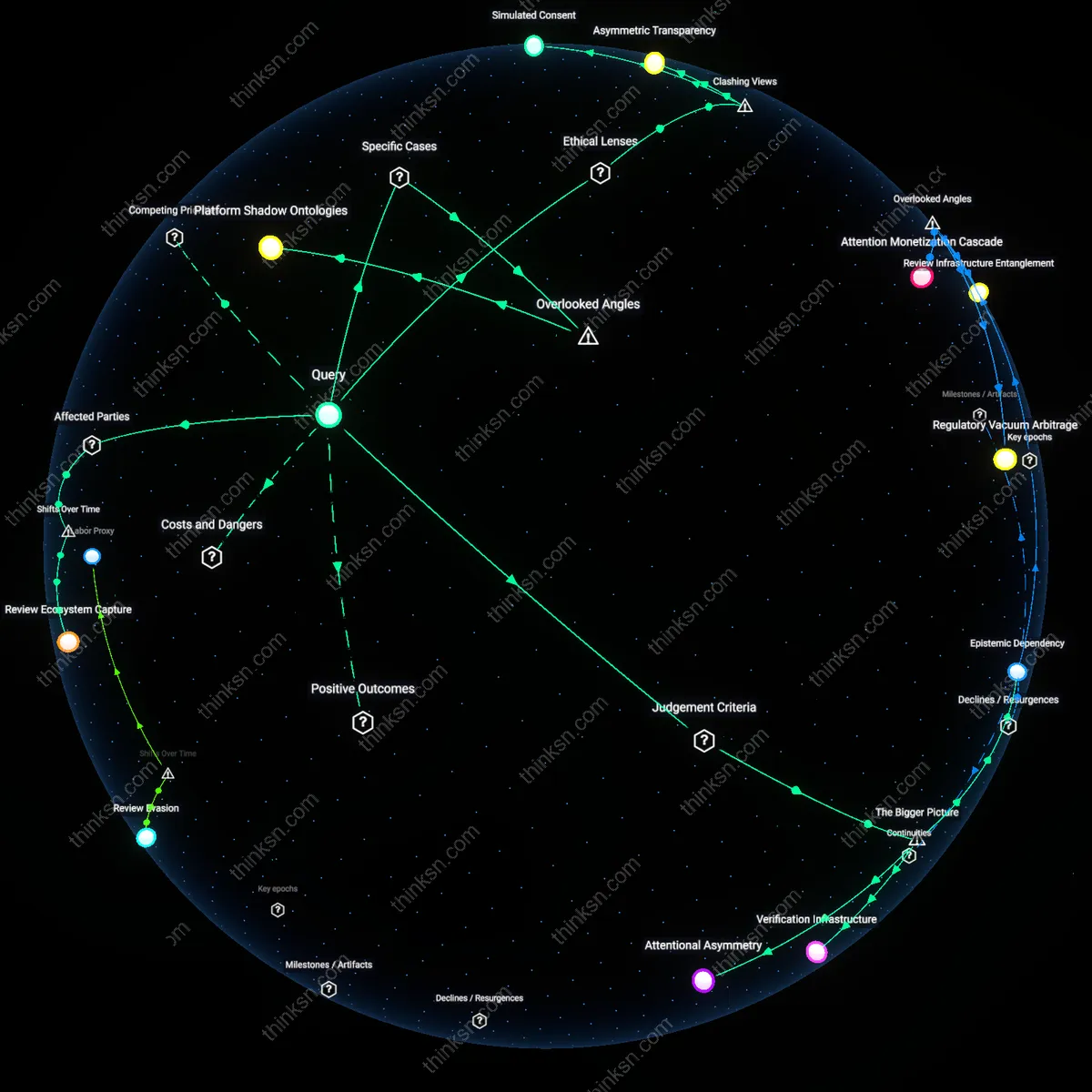

Role Path Incommensurability

The choice between technical writing and UX research mirrors the fracture at ProPublica during its 2016 collaboration with software developers on algorithmic accountability reporting, where journalists trained in narrative precision struggled to align with developers’ data logic. The episode suggests that adjacent roles in tech ecosystems operate under incompatible epistemic standards. Transitioning between these domains is therefore not a linear advancement but a leap across cognitive regimes, where success in one can undermine credibility in the other because each applies different criteria for what counts as valid knowledge.

Career Fidelity

Choose the AI-assisted technical writing role if sustaining long-term organizational trust is paramount. This path aligns with deontological ethics, specifically Kantian duty, under which consistency in role adherence becomes a moral obligation to one’s employer and professional identity. Technical writing, especially when embedded in regulated industries like health tech or aviation, operates through procedural accountability governed by compliance frameworks (e.g., FDA documentation standards), making deviation from established roles ethically suspect under rule-based moral systems. The underappreciated insight is that staying put is not passive inertia but active moral fidelity, resisting the cultural bias that equates change with growth.

Empathy Dividend

Move to user-experience research if cultivating human dignity through direct engagement is your primary ethical commitment. This shift embodies care ethics, a relational moral framework that emphasizes attentiveness to vulnerability and context. UX research, particularly in public-sector design such as disability-accessible voting systems, works through iterative empathy cycles in which moral value accrues from sustained listening to marginalized users. The overlooked truth within the common narrative of 'tech progress' is that technical writing’s efficiency gains often mask ethical distance, while UX’s slower, human-centered rigor produces an empathy dividend that redistributes interpretive power.

Epistemic Sovereignty

Choose the role where you retain epistemic sovereignty. AI-assisted technical writing at organizations like Palantir, where documentation directly shapes how clients interpret algorithmic outputs, gives writers unseen influence over decision-making frameworks. Most analysts overlook that technical writers in high-stakes AI deployments are not passive transcribers but active arbiters of what knowledge gets codified and trusted, because their documentation becomes the canonical source for user understanding. This hidden authority to define computational logic in human terms gives technical communicators control over how knowledge is interpreted, a form of power typically assumed to reside only with data scientists or UX researchers.

Affective Residue

Move to UX research if you seek to harness affective residue: the emotional and cognitive traces users leave in qualitative data. At companies like Mozilla, an open-source ethos amplifies the impact of the vulnerability users share in research sessions. Standard career advice ignores how UX researchers accumulate invisible equity through intimate exposure to user distress, confusion, or delight, which over time shapes product ethics and team empathy; this psychological ledger influences design decisions long after interviews end. Unlike the clean, procedural outputs of AI-assisted writing, this residual emotional insight becomes a private compass that informs moral positioning within tech and strengthens one’s capacity to advocate for humane systems.

Infrastructural Legibility

Remain in AI-assisted technical writing if you recognize that infrastructural legibility grants disproportionate leverage over innovation trajectories. In environments like AWS or TensorFlow, API documentation determines what engineers perceive as possible. Most dismiss technical writers as downstream explainers, but the structure and clarity of documentation define which features are used, trusted, or even seen as viable, effectively gating technological adoption. This unseen editorial control over what becomes legible within complex systems positions the writer as a silent architect of technical possibility, a role more structurally consequential than the visible empathy work of UX research.