Is Urban Planning Worth the Cost of Anonymity?

Analysis reveals 8 key thematic connections.

Key Findings

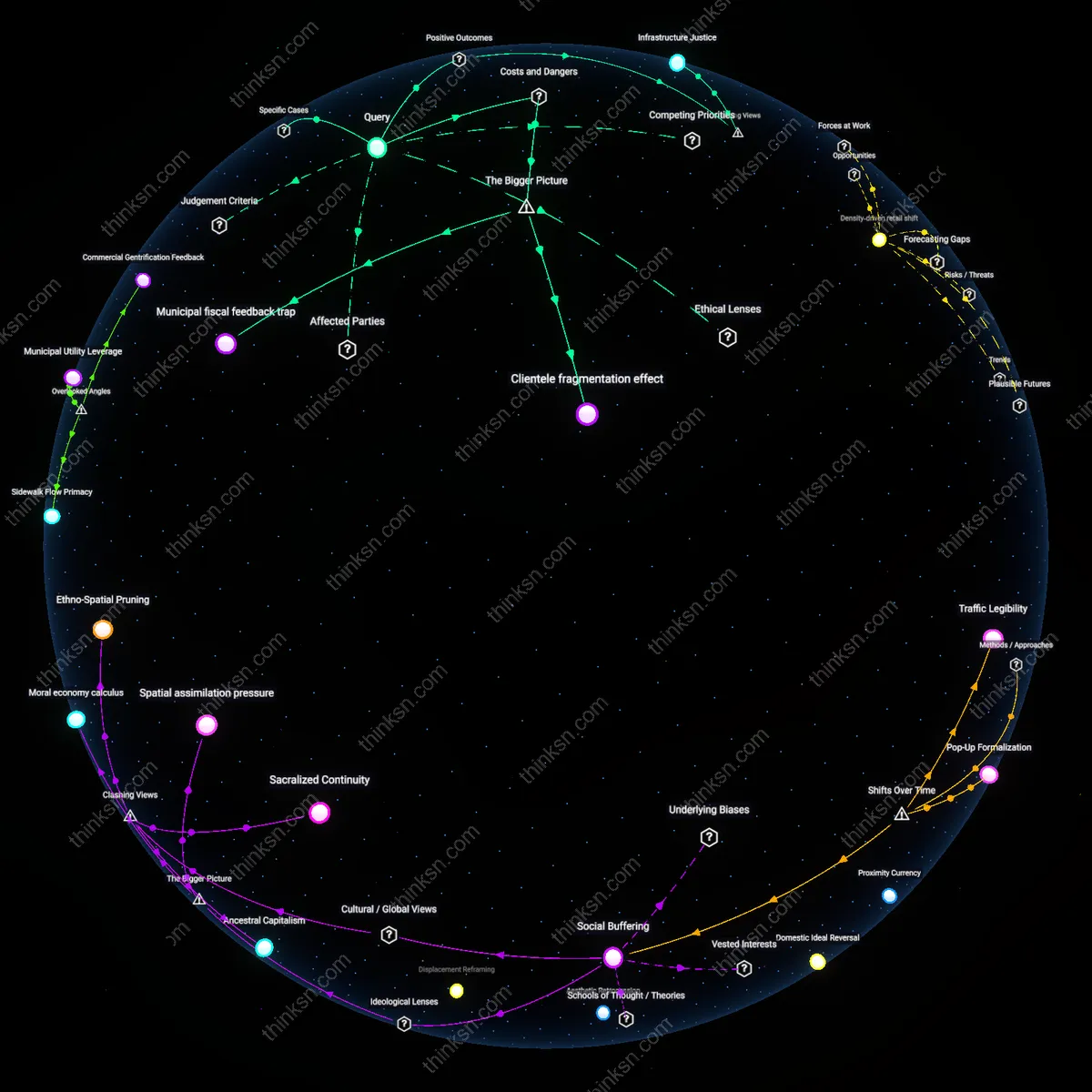

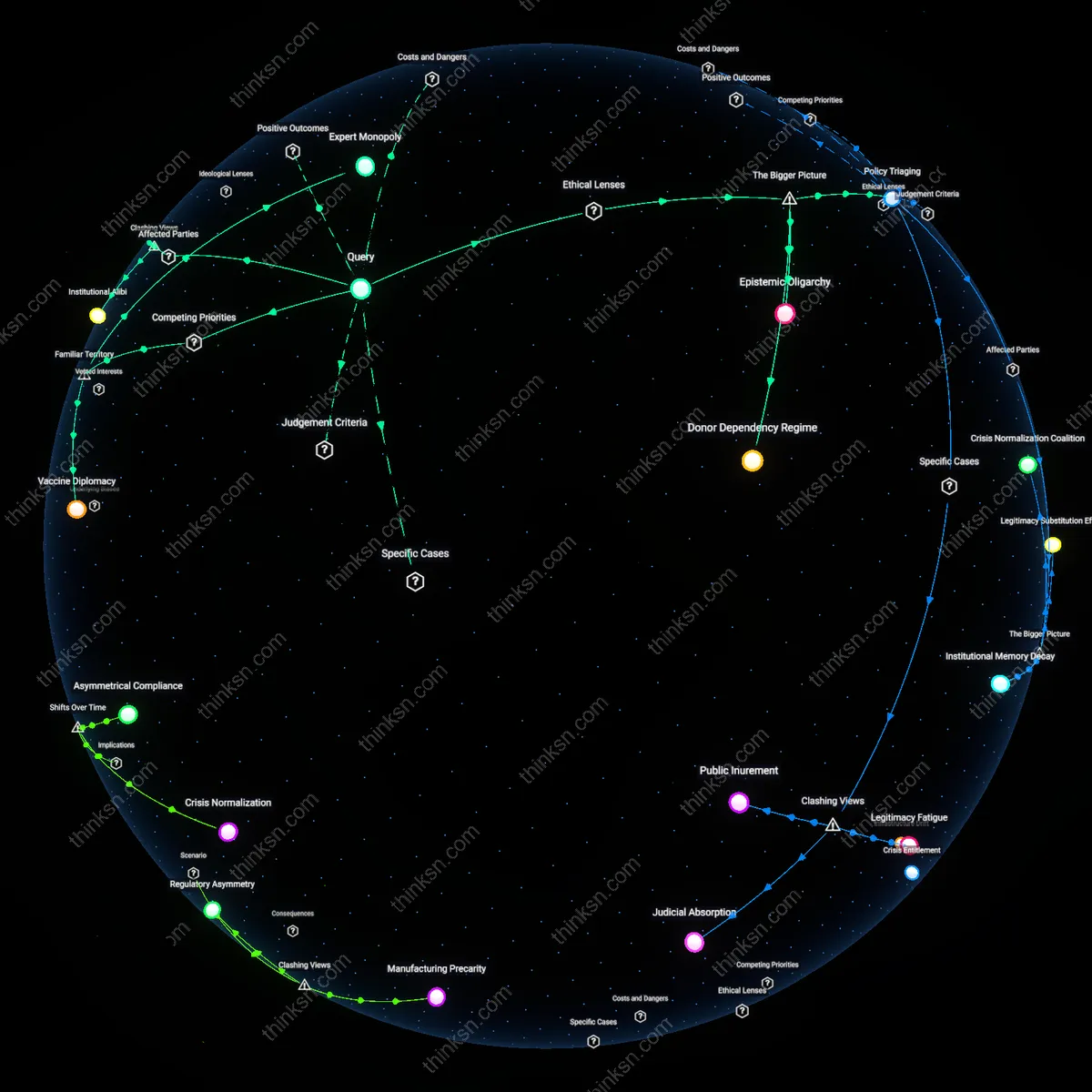

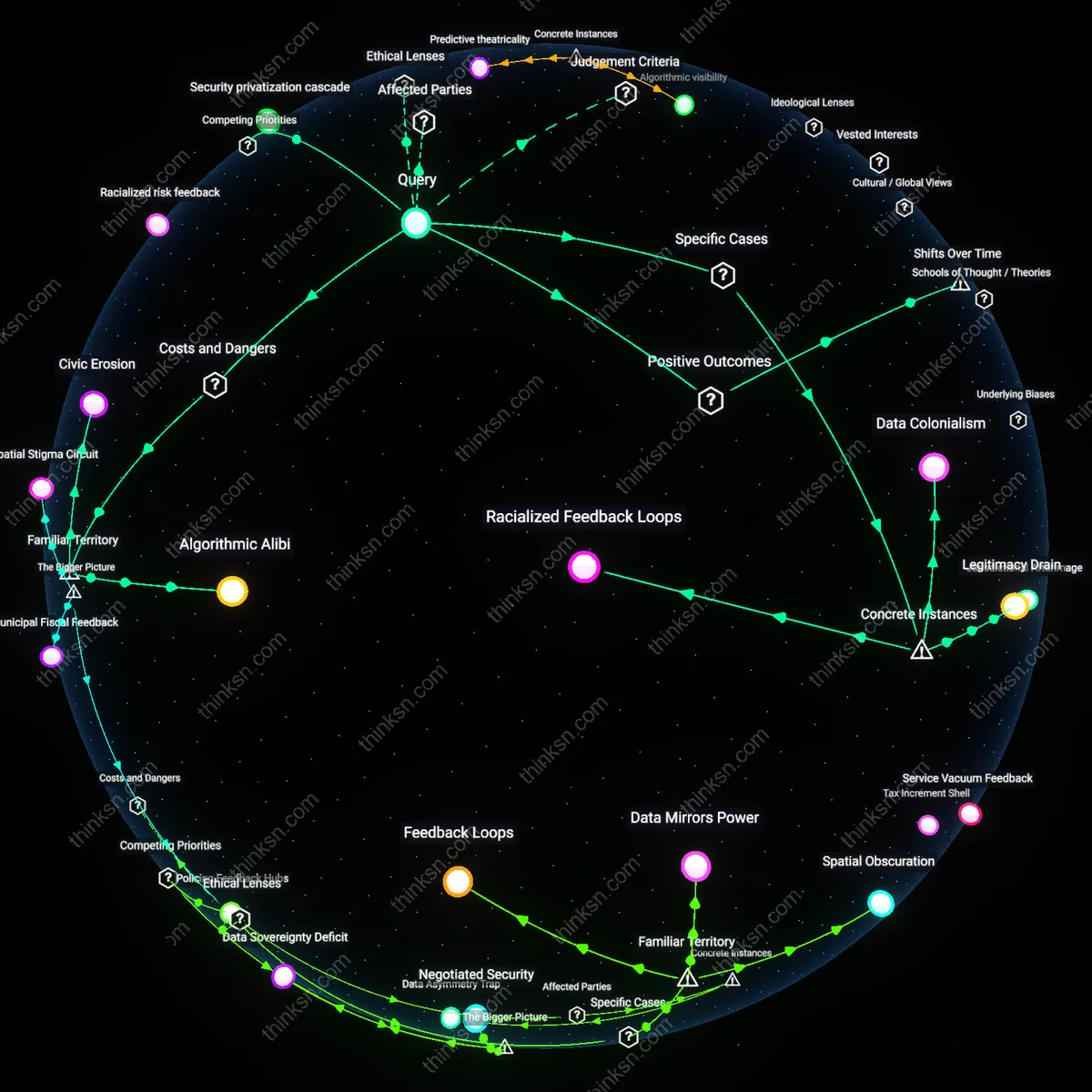

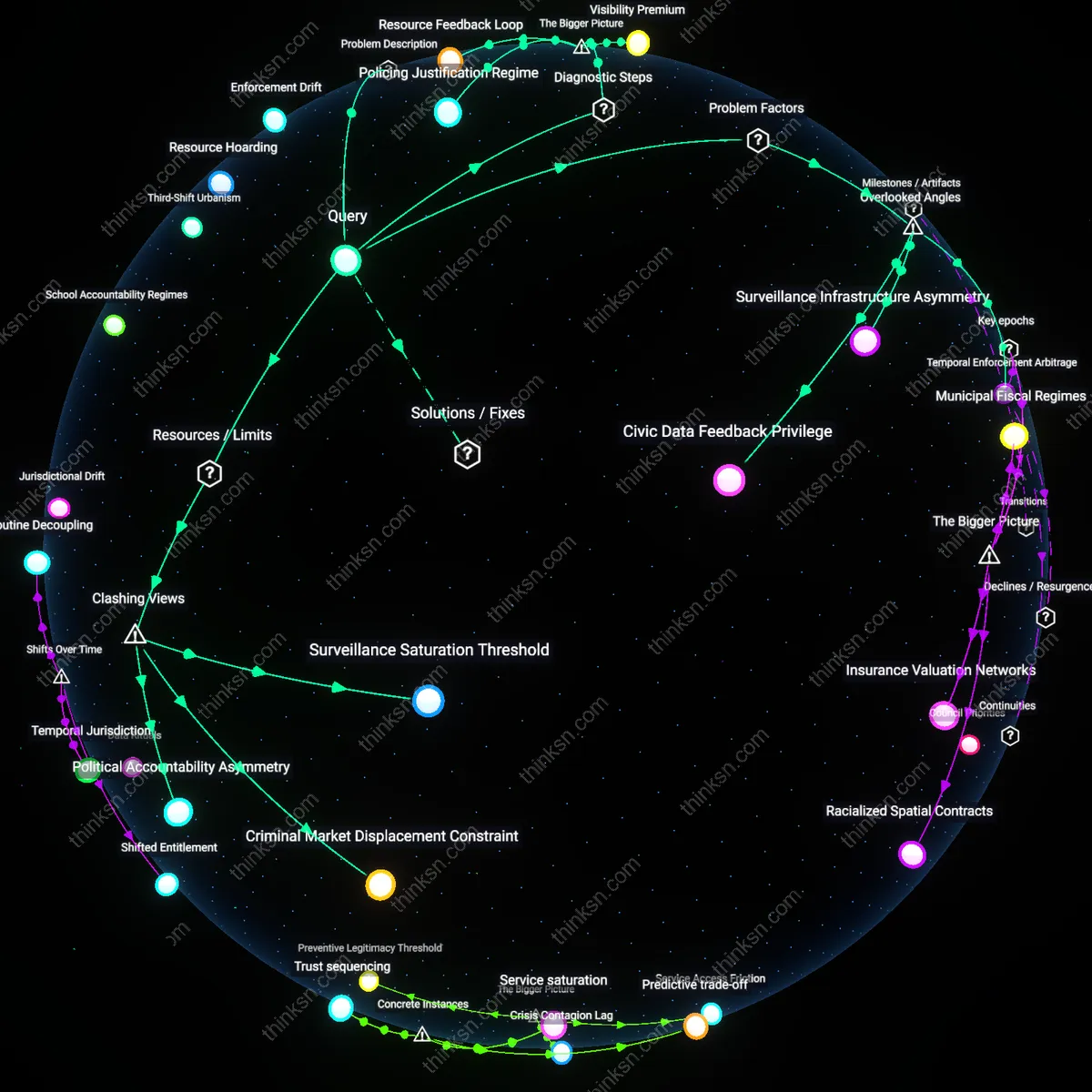

Infrastructure Feedback Loops

Using location data in urban planning carries a cost beyond lost anonymity, because integrating that data into municipal systems creates self-reinforcing feedback loops. These loops gradually reshape urban infrastructure to marginalize populations with irregular mobility patterns, such as undocumented residents, gig workers, and unhoused people. Their movements fall outside algorithmic norms, so public investment drifts away from their needs. The loops operate through adaptive traffic modeling and transit route optimization that prioritize high-density, predictable movement, which effectively renders irregular mobility invisible in long-term planning. The dynamic is rarely acknowledged because conventional equity assessments focus on static demographics rather than on the fluid exclusion produced when infrastructure learns from data. The non-obvious mechanism is that urban systems do not just reflect mobility; they evolve to reward it, systematically eroding spatial justice over time.
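A toy simulation can make the loop concrete. The sketch below is hypothetical throughout: the two group names, their capture rates, and the rule that each cycle's service budget is split in proportion to observed trips are illustrative assumptions, not a model of any real transit agency.

```python
# Hypothetical toy model of a data-driven service-allocation feedback loop.
# Assumptions (illustrative only): every group has the same true travel demand;
# observed trips grow with the service a group receives and shrink with how
# poorly the location-data pipeline captures that group's movement; each
# planning cycle re-splits a fixed budget in proportion to observed trips.


def simulate(cycles: int, capture_rates: dict[str, float],
             budget: float = 100.0) -> list[dict[str, float]]:
    """Return the service allocation at cycle 0 and after each later cycle."""
    groups = list(capture_rates)
    # Cycle 0: the budget starts evenly split across groups.
    service = {g: budget / len(groups) for g in groups}
    history = [dict(service)]
    for _ in range(cycles):
        # Trips the data actually sees: service available times capture rate.
        observed = {g: service[g] * capture_rates[g] for g in groups}
        total = sum(observed.values())
        # The next allocation follows observed demand, not true demand.
        service = {g: budget * observed[g] / total for g in groups}
        history.append(dict(service))
    return history


if __name__ == "__main__":
    runs = simulate(10, {"regular": 0.9, "irregular": 0.6})
    for cycle, alloc in enumerate(runs):
        print(cycle, round(alloc["irregular"], 2))
```

With these assumed capture rates the irregular group's allocation after n cycles is 100 / (1 + 1.5^n), so it falls from 50 units to under 2 after ten cycles even though true demand never changes. The only input that differs between the groups is how visible they are to the data.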

Data Labor Asymmetry

The cost is also economic, because location data functions as unpaid labor input to urban planning. Individuals generate valuable behavioral traces through daily movement, such as commuting or shopping, yet receive neither compensation nor decision rights over how that data shapes the built environment, which creates a structural asymmetry in who extracts value. The asymmetry is mediated through public-private data partnerships, such as city collaborations with tech platforms like Google or with telecom providers, which treat aggregated mobility patterns as neutral inputs while obscuring their origin in collective human activity. The overlooked point is that the erosion of anonymity is not a side effect but a precondition of the data's usefulness, so the system depends on data produced by people who have no power over it. The key insight is that the urban data economy runs on invisible labor, which reframes privacy loss as economic exploitation rather than as a trade-off.

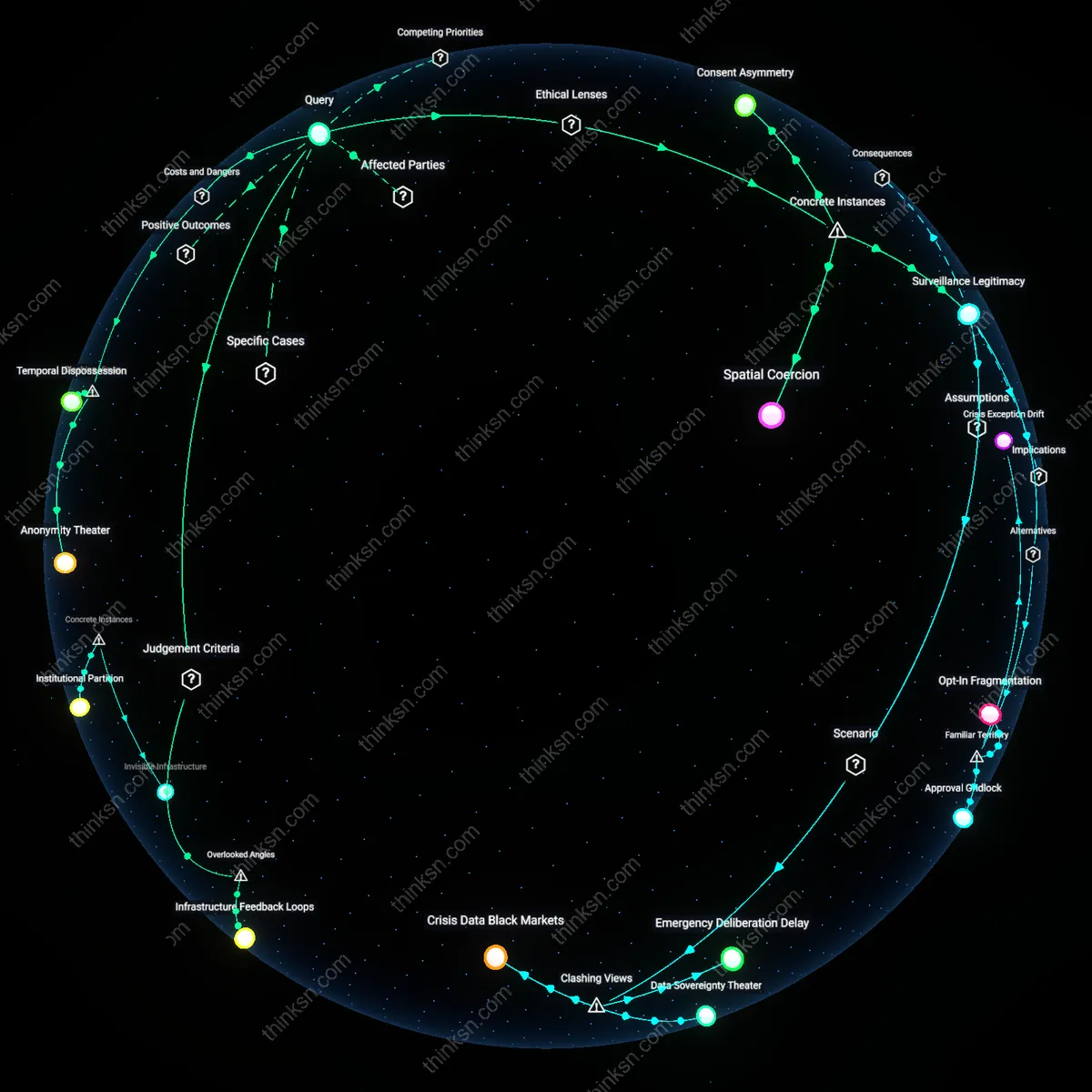

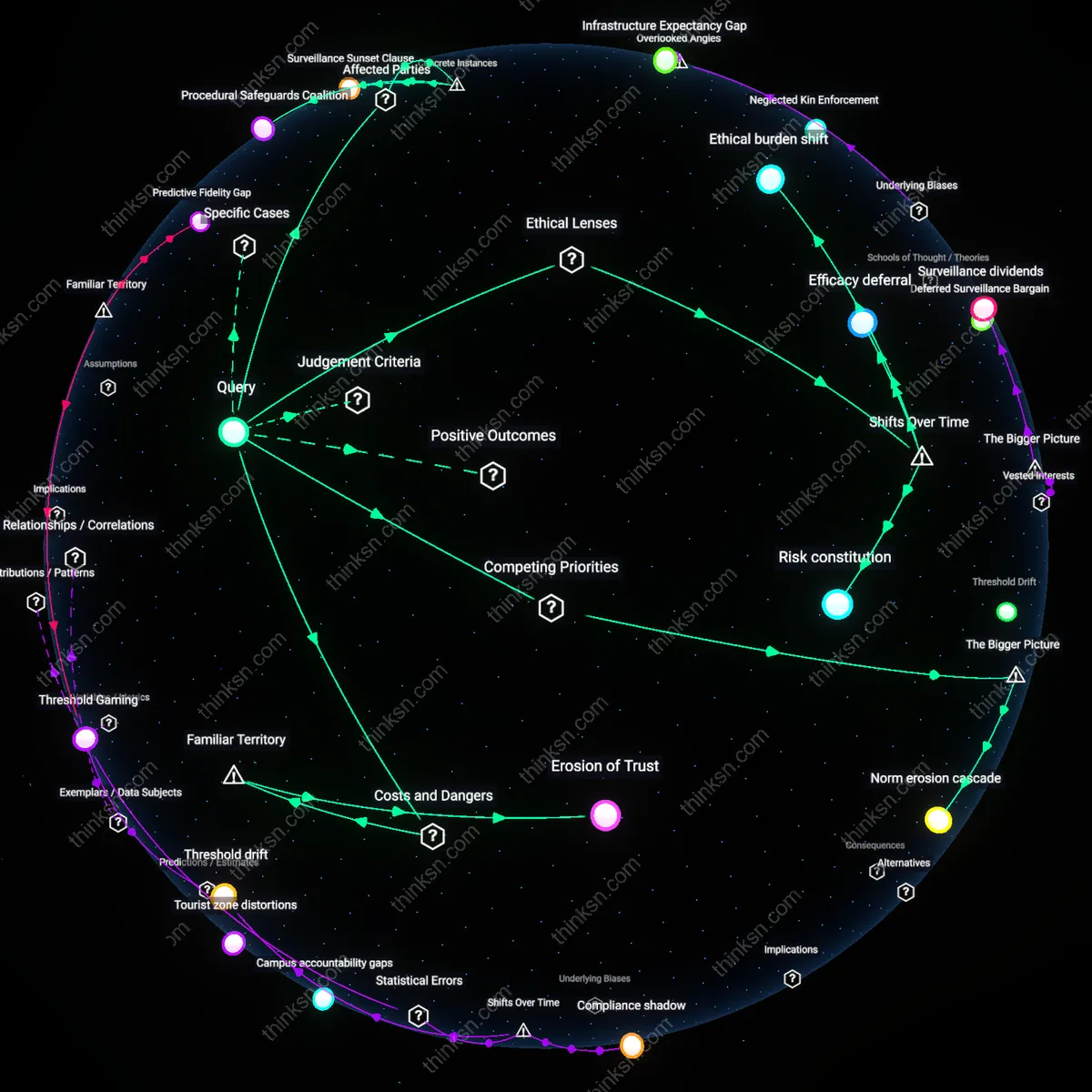

Anonymity Theater

The benefit to society from using location data in urban planning does not justify the erosion of individual anonymity, because the anonymization techniques used in public-sector data sharing are structurally performative rather than effective: they enable surveillance-adjacent misuse under the guise of privacy compliance. Municipal agencies and their private contractors routinely release "de-identified" mobility datasets that can be re-identified by combining publicly available information with the sparseness of spatio-temporal traces. A 2013 study of 15 months of mobile phone records covering 1.5 million people found that just four spatio-temporal points were enough to uniquely identify 95% of them. The resulting systemic risk comes not from malicious intent but from institutional reliance on statistical fictions of privacy, which normalize data exposure while certifying it as safe. What functions as privacy protection is, in practice, a ritualized justification for data extraction.
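The re-identification risk created by sparseness can be estimated directly. Below is a minimal sketch in the spirit of the "unicity" measure used in mobility privacy research: for each person, draw k points from their own trace and count how many traces in the whole dataset contain all k of them. The `(place, hour)` record format and the sample traces are hypothetical.

```python
import random

Point = tuple[str, int]  # (place, hour): a hypothetical coarse location record


def unicity(traces: dict[str, set[Point]], k: int, seed: int = 0) -> float:
    """Fraction of people singled out by k points drawn from their own trace."""
    rng = random.Random(seed)
    eligible = unique = 0
    for points in traces.values():
        if len(points) < k:
            continue  # too few points to draw k of them; skip this person
        eligible += 1
        # random.sample needs a sequence, so sort the set first.
        known = set(rng.sample(sorted(points), k))
        # How many traces are consistent with what an observer knows?
        matches = sum(1 for other in traces.values() if known <= other)
        if matches == 1:
            unique += 1
    return unique / eligible if eligible else 0.0


if __name__ == "__main__":
    traces = {
        "a": {("station", 8), ("cafe", 12), ("gym", 18)},
        "b": {("station", 8), ("office", 9), ("gym", 18)},
        "c": {("station", 8), ("cafe", 12), ("park", 17)},
    }
    for k in (1, 2, 3):
        print(k, unicity(traces, k))
```

Stripping names does not change this number at all; only coarsening places or times, or releasing fewer points per person, lowers it.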

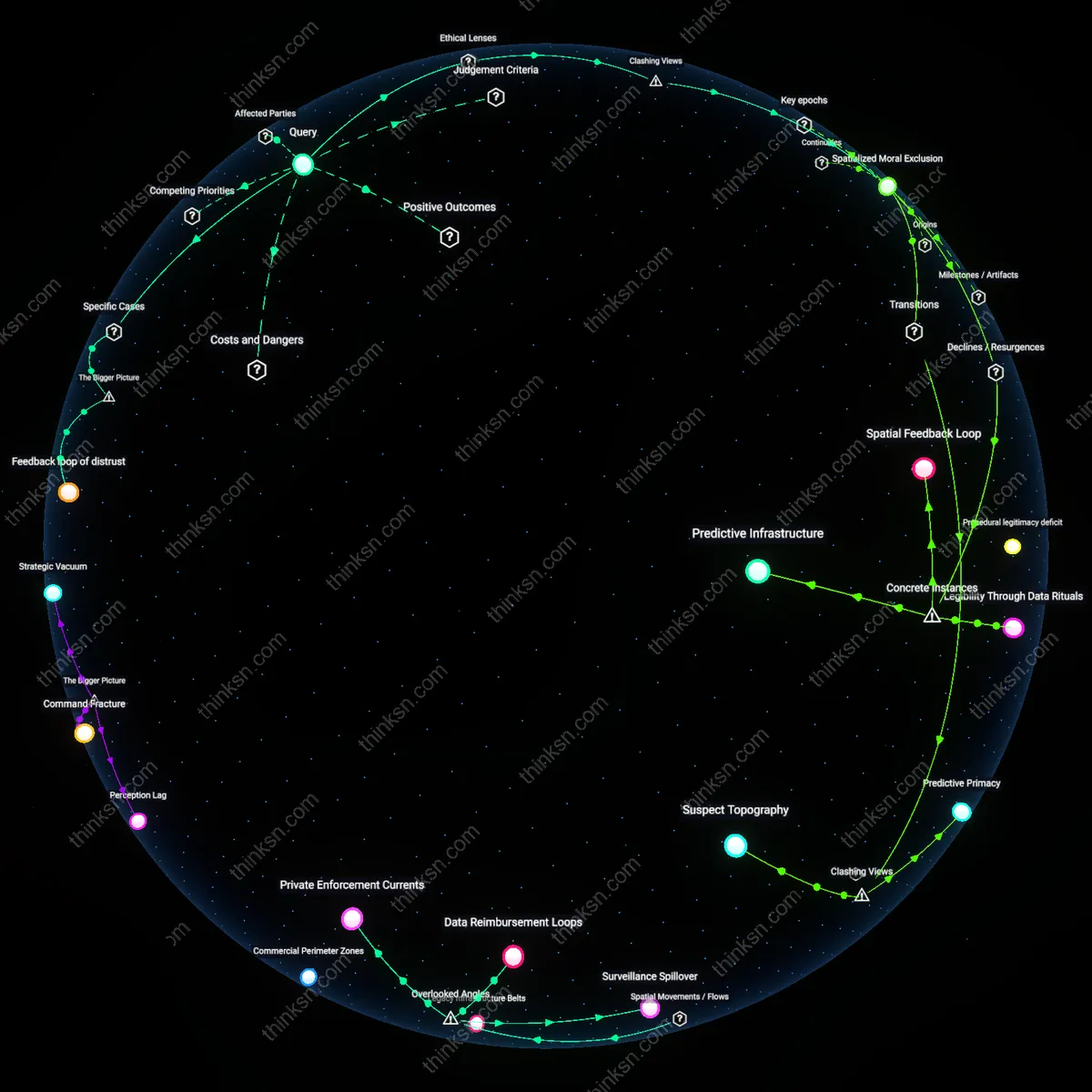

Temporal Dispossession

The benefit to society from using location data in urban planning does not justify the erosion of individual anonymity, because longitudinal aggregation turns episodic location traces into durable behavioral profiles that are weaponized in time rather than in space. Transportation departments and smart city platforms store mobility histories far beyond the planning cycle, enabling future governance actions, such as policing or insurance risk assessment, that individuals cannot anticipate or contest at the moment of collection. This drift in data use circumvents consent not through overt violation but through institutional memory: today’s traffic optimization becomes tomorrow’s grounds for social sorting. Anonymity is therefore not only eroded at the point of collection but foreclosed as a future possibility.
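The shift from episodic traces to a durable profile can be shown with a deliberately simple home-location heuristic. In this hypothetical sketch each ping is a `(day, hour, cell)` tuple, "night" is assumed to mean 00:00 to 05:59, and a home is inferred only once the same cell recurs on a minimum number of distinct nights; none of these choices come from the source.

```python
from collections import defaultdict
from typing import Optional

Ping = tuple[int, int, str]  # (day index, hour 0-23, cell id): hypothetical format


def infer_home(pings: list[Ping], min_nights: int = 3) -> Optional[str]:
    """Guess a 'home' cell once it recurs across enough distinct nights."""
    nights_seen: dict[str, set[int]] = defaultdict(set)
    for day, hour, cell in pings:
        if hour < 6:  # assumption: pings from 00:00 to 05:59 count as night
            nights_seen[cell].add(day)
    if not nights_seen:
        return None
    cell, days = max(nights_seen.items(), key=lambda item: len(item[1]))
    # One night proves little; retained nights accumulate into a profile.
    return cell if len(days) >= min_nights else None


if __name__ == "__main__":
    week = [(d, 2, "cell-17") for d in range(5)] + [(d, 13, "cell-03") for d in range(5)]
    print(infer_home(week[:2]))  # None: only two nights retained
    print(infer_home(week))      # cell-17
```

Retention is what makes the inference possible: if the raw pings were deleted after each day, no single day's data would ever reach the threshold.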

Spatialized Blame

The benefit to society from using location data in urban planning does not justify the erosion of individual anonymity, because mobility patterns are framed as neutral inputs when they in fact encode and reify pre-existing spatial inequalities under a veneer of algorithmic legitimacy. In cities like Los Angeles and Chicago, aggregated location data from mobile devices overrepresents affluent, tech-connected populations while rendering marginalized communities invisible or marking them as deviant. Planners who take such data at face value build infrastructure that amplifies displacement and criminalizes poverty under the guise of data-driven objectivity. This misrecognition is not an error but a systemic output: the data appears anonymized yet ends up assigning moral valence to space, converting statistical absence into policy neglect and turning correlation into a justification for exclusion.
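The sampling bias described above can be made concrete with a few lines of arithmetic. In this hypothetical sketch two districts have identical populations and identical travel, but devices are assumed to capture 80% of residents in one and 40% in the other; the district names and rates are illustrative, not drawn from Los Angeles or Chicago data.

```python
def demand_shares(residents: dict[str, int], capture_rate: dict[str, float],
                  reweight: bool = False) -> dict[str, float]:
    """Each district's share of estimated travel demand, from device-observed counts."""
    # Device-visible residents. Everyone is assumed to travel equally, so this
    # is proportional to the trips the data observes.
    counts = {d: residents[d] * capture_rate[d] for d in residents}
    if reweight:
        # Inverse-probability weighting: scale each count by 1 / capture rate.
        # This assumes the capture rate is known, which is hardest to establish
        # for exactly the districts the data under-counts.
        counts = {d: counts[d] / capture_rate[d] for d in counts}
    total = sum(counts.values())
    return {d: counts[d] / total for d in counts}


if __name__ == "__main__":
    people = {"hillside": 10_000, "flats": 10_000}
    rates = {"hillside": 0.8, "flats": 0.4}
    print(demand_shares(people, rates))
    print(demand_shares(people, rates, reweight=True))
```

In the naive estimate the flats receive one third of demand instead of one half, and nothing in the output signals the under-count; the district simply appears to need less service.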

Surveillance Legitimacy

The use of anonymized mobile phone data by New York's Metropolitan Transportation Authority (MTA) to redesign bus routes during the 2019 transit crisis legitimized ongoing location surveillance by framing privacy trade-offs as civic contributions under utilitarian governance. By aggregating commuting patterns derived from mobile-device location data, obtained through data-sharing agreements with private firms such as SafeGraph, planners optimized transit efficiency for millions of riders while operating outside warrant-based oversight, thereby embedding surveillance within routine administrative rationality. The case shows how emergency-driven urban interventions can normalize data appropriation when they are justified through consequentialist ethics, particularly when weighed against systemic public service failure. The non-obvious insight is that privacy erosion becomes politically palatable not through deception but through visible improvements in public welfare that recast surveillance as stewardship.

Consent Asymmetry

Sidewalk Labs’ Quayside smart city proposal in Toronto, abandoned in 2020 before it was built, set a precedent in which individual anonymity was structurally negated by retroactively defined public consent, justified under liberal-pluralist ideals of participatory governance. Despite extensive civic consultations, the plan to integrate location-tracking sensors for traffic and pedestrian flow modeling rested on institutional interpretations of implied public will rather than on opt-in individual permissions. Backed by the Waterfront Toronto development authority and framed as innovation in urban sustainability, the project treated aggregated geodata as collective property, shifting ethical accountability from personal rights to communal benefit. The underappreciated dynamic is that democratic legitimacy can be leveraged to bypass individual autonomy, especially when data practices are obscured within complex public-private governance frameworks.

Spatial Coercion

In Johannesburg, the deployment of automated number plate recognition (ANPR) cameras under the Strategic Traffic Law Enforcement Programme was justified as a crime-reduction tool that improved urban safety planning through real-time movement analysis. Funded by municipal security budgets and integrated with predictive policing algorithms, the system generated mobility profiles that disproportionately mapped Black and low-income neighborhoods, reinforcing spatial containment under the guise of neutral data analytics. Justified through a Rawlsian 'veil of ignorance' logic in post-apartheid urban policy, the program advanced societal benefit claims while entrenching historical patterns of surveillance. The critical revelation is that location data systems can replicate coercive geographies even when technically anonymized, as urban planning becomes a vector for structural discrimination masked as efficiency.
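Why "technically anonymized" plate data still yields mobility profiles can be shown with a salted hash, a common pseudonymization step. The sketch below is hypothetical: the read format, camera names, plate strings, and salt are invented, and nothing here describes how the Johannesburg system actually stores its data.

```python
import hashlib
from collections import defaultdict
from typing import Optional

SALT = b"hypothetical-secret-salt"  # illustrative only


def pseudonymize(plate: str) -> str:
    """Replace a plate with a stable token: the same plate always maps to the same token."""
    return hashlib.sha256(SALT + plate.encode("utf-8")).hexdigest()[:16]


def build_profiles(reads: list[tuple[str, str, int]]) -> dict[str, list[tuple[int, str]]]:
    """Group (plate, camera, hour) reads by token; no plate is stored, yet trips still link."""
    profiles: dict[str, list[tuple[int, str]]] = defaultdict(list)
    for plate, camera, hour in reads:
        profiles[pseudonymize(plate)].append((hour, camera))
    return {token: sorted(visits) for token, visits in profiles.items()}


def reverse_token(token: str, candidate_plates: list[str]) -> Optional[str]:
    """Whoever holds the salt can re-identify a token by hashing candidate plates."""
    for plate in candidate_plates:
        if pseudonymize(plate) == token:
            return plate
    return None


if __name__ == "__main__":
    reads = [("AB12CDGP", "cam-north", 7), ("XY34ZWGP", "cam-east", 7),
             ("AB12CDGP", "cam-centre", 8), ("AB12CDGP", "cam-north", 18)]
    for token, visits in build_profiles(reads).items():
        print(token, visits)
    print(reverse_token(pseudonymize("AB12CDGP"), ["XY34ZWGP", "AB12CDGP"]))
```

Because the token is stable, every camera read for the same vehicle joins a single trajectory; and because short plate formats can typically be enumerated, the mapping can be reversed by anyone holding the salt.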