Bureaucratic Inscription

Biometric systems first excluded populations not through technological failure but by aligning with colonial administrative logics that privileged legible, sedentary subjects over mobile or stateless ones. In British India and French West Africa, fingerprinting and bodily measurement were used to fix identities within tax and labor regimes. Nomadic groups, untaxed women, and lower-caste communities became invisible not through oversight but because administrators deliberately left them unclassified. This established a precedent in which exclusion was a function rather than a flaw: biometric infrastructures were designed to sort populations for governance, not to include them. Today’s gaps in facial recognition and iris scanning repeat this colonial script, in which the state defines who counts by deciding who must be counted.

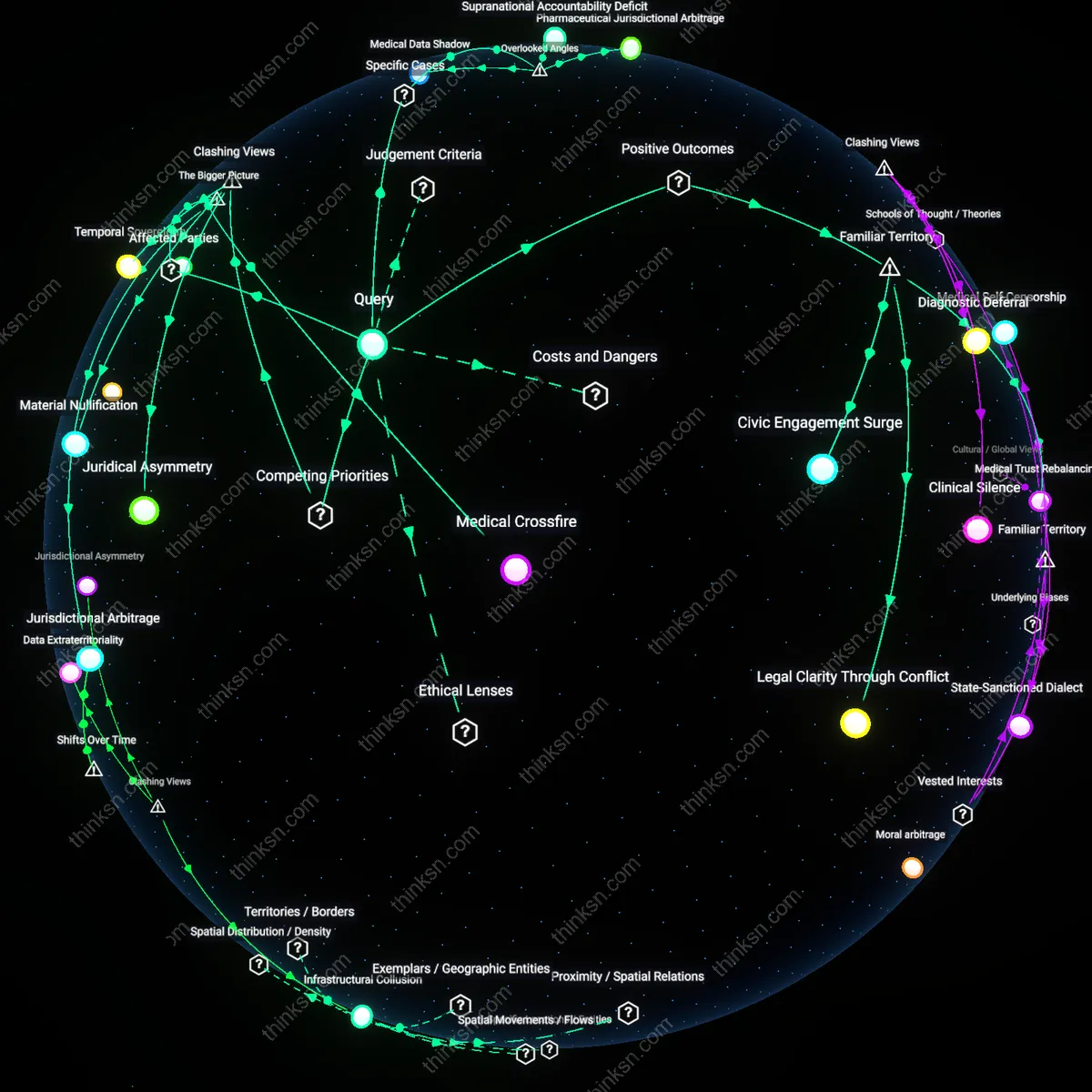

Threshold Evasion

The earliest biometric systems in 20th-century border controls and welfare programs did not simply fail to include marginalized people. They created thresholds of bodily standardization that certain bodies could not meet, which made the exclusion of those bodies lawful. In Nazi Germany and later at US immigration checkpoints, biometric categorization relied on physiognomic norms that pathologized non-normative bodies, including those of disabled people, ethnic minorities, and gender-nonconforming individuals. These systems elevated a specific regime of legibility, defining what counted as a 'readable' face or fingerprint, such that deviation meant erasure. Exclusion was therefore not a breakdown of accuracy but the fulfillment of a design intended to filter out bodies deemed socially disruptive or undeserving.

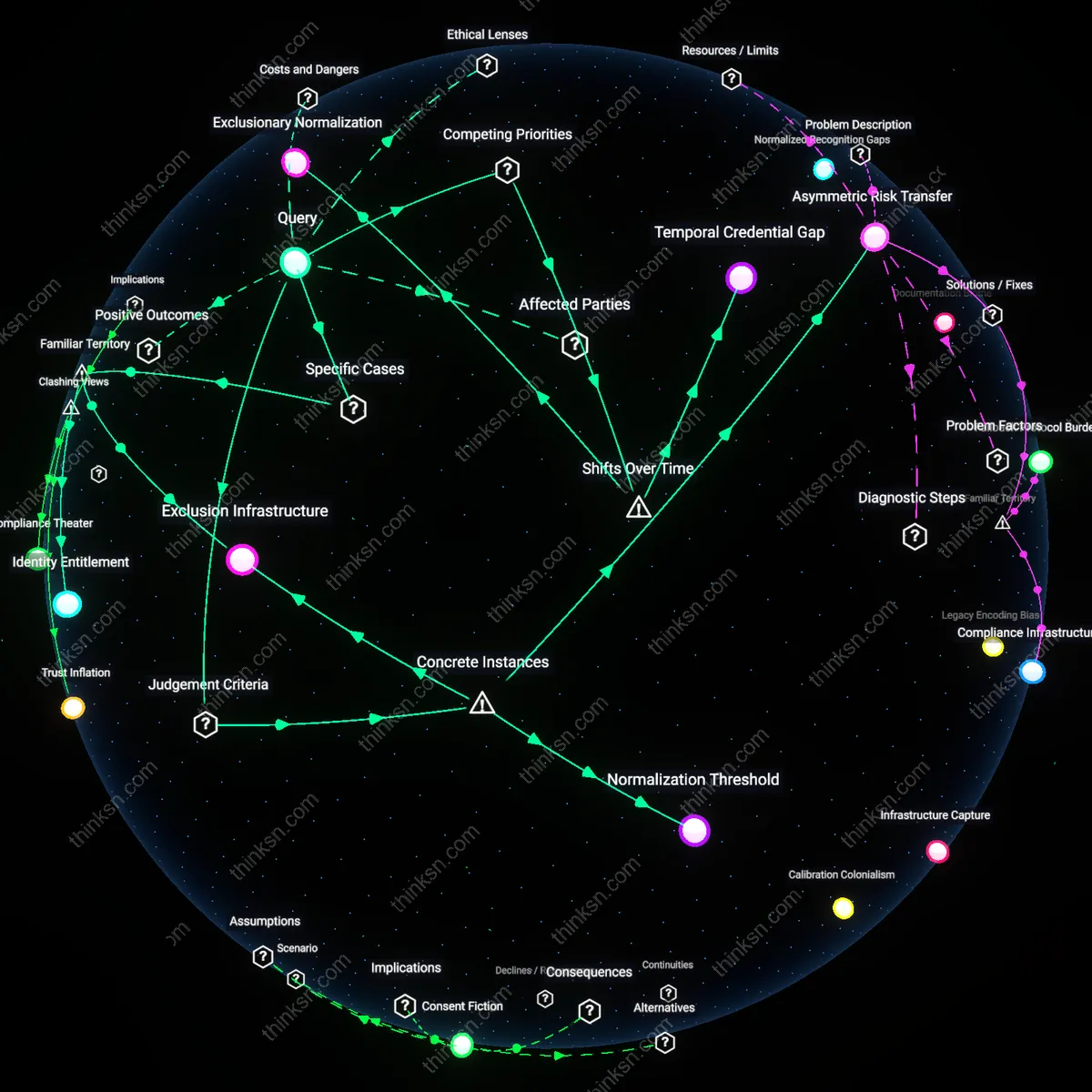

Consent Fiction

Biometric inclusion today hinges on a false equivalence between consent and access. The construct is rooted in post-1980s humanitarian systems in which refugees in Jordan or Kenya 'agreed' to iris scans in exchange for food rations, reinforcing a neoliberal framework of data-as-currency. These systems, funded by international NGOs and Western governments, framed biometric enrollment as voluntary when refusal meant starvation, and so normalized biometric sacrifice among the displaced. The overlooked mechanism is the redefinition of bodily integrity from a right into a negotiable asset, producing a global underclass trained to barter biometrics for survival. This reframes contemporary exclusion not as absence from databases but as coerced entry under asymmetrical terms, a soft compulsion that masks structural violence as choice.

Legacy Encoding Bias

Biometric systems initially designed using narrow demographic datasets entrenched exclusion by normalizing specific physiognomic traits as the default, which inscribed historical racial and colonial categorization frameworks into technical standards. Standardization bodies like ISO/IEC and early facial recognition developers at organizations such as MITRE and NEC treated Eurocentric facial morphology as the implicit baseline. Error rates spiked for darker-skinned individuals not because of inherent technical limits but because the pivot from manual to automated identification reproduced past bureaucratic exclusion mechanisms under the guise of technological neutrality. This shift matters because it transferred the authority of exclusion from overtly discriminatory officials to seemingly objective algorithms, obscuring the continuity of racial sorting in governance systems. The underappreciated mechanism is that technical interoperability requirements lock in early biases across generations of systems.

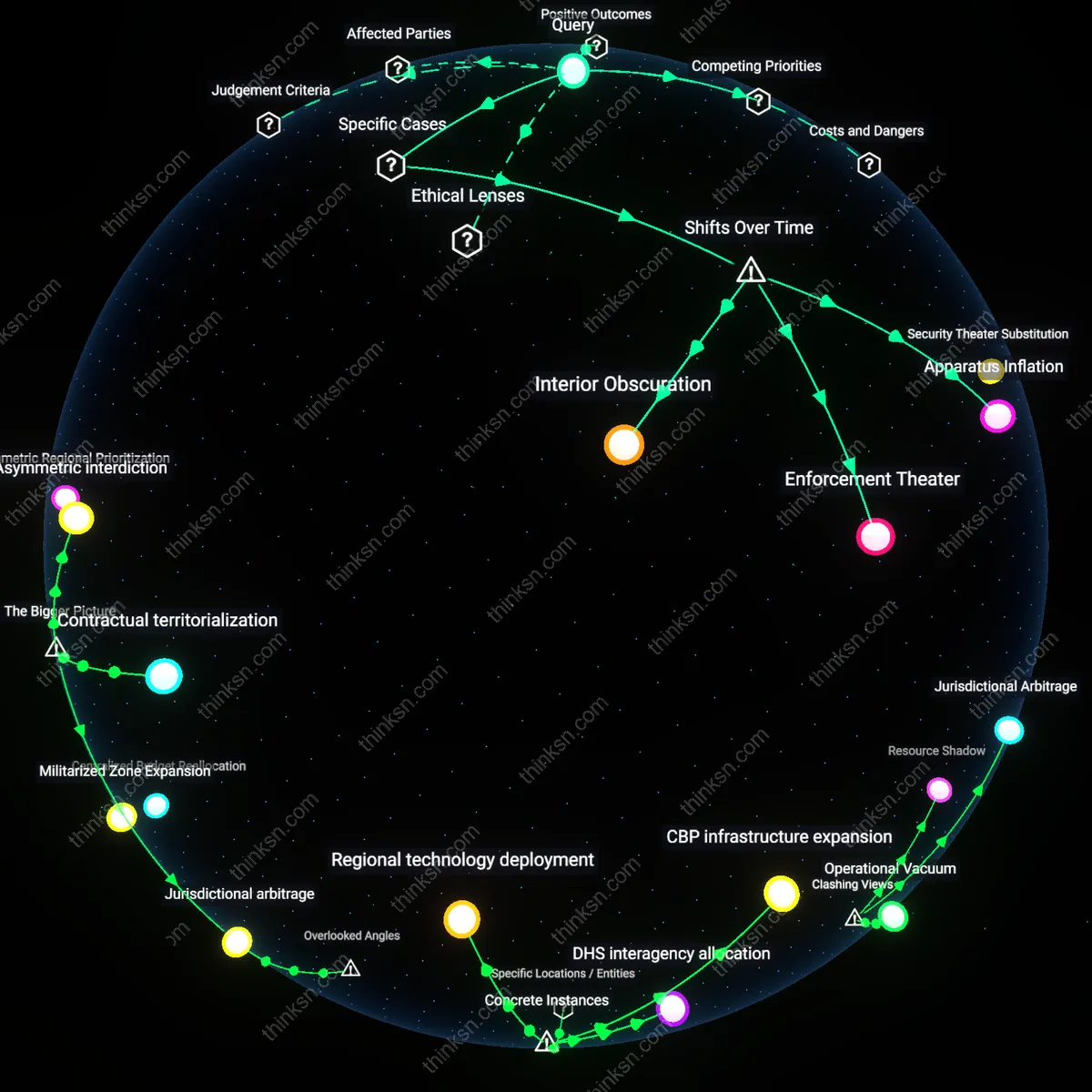

Threshold Governance

The pivot from identity verification as a human-administered process to automated pass-fail authentication recalibrated inclusion as a function of statistical confidence thresholds set by system designers in corporate or state labs, rather than of individual discretion. This structural shift, codified in systems deployed at borders (e.g., U.S. Customs and Border Protection’s biometric exit program) or in banking APIs, embeds actuarial logic into access decisions. Refugee populations whose facial features have been altered by trauma or malnutrition systematically fall below acceptable match thresholds, not because the data is flawed but because the system was built to minimize false positives (wrongly accepted matches) at the expense of false negatives (wrongly rejected genuine users). The underappreciated consequence is that inclusion today depends less on identity per se than on alignment with precomputed statistical norms, making threshold-setting a hidden site of policy power operated by engineers and procurement contracts rather than legislators.
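The trade-off between false positives and false negatives can be made concrete with a minimal sketch. It assumes a hypothetical matcher that returns a similarity score between 0 and 1; the `Attempt` type, the `error_rates` function, and every score below are invented for illustration and do not come from any deployed system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Attempt:
    score: float   # similarity score in [0, 1] from a hypothetical matcher
    genuine: bool  # True if the person really is the enrolled identity


def error_rates(attempts, threshold):
    """Return (false_match_rate, false_non_match_rate) at a given threshold."""
    genuine = [a for a in attempts if a.genuine]
    impostor = [a for a in attempts if not a.genuine]
    # A genuine user scoring below the threshold is wrongly rejected.
    fnmr = sum(a.score < threshold for a in genuine) / len(genuine)
    # An impostor scoring at or above the threshold is wrongly accepted.
    fmr = sum(a.score >= threshold for a in impostor) / len(impostor)
    return fmr, fnmr


# Made-up scores: six genuine attempts and four impostor attempts.
ATTEMPTS = (
    [Attempt(s, True) for s in (0.95, 0.88, 0.82, 0.74, 0.66, 0.58)]
    + [Attempt(s, False) for s in (0.62, 0.55, 0.41, 0.30)]
)

for t in (0.5, 0.7, 0.9):
    fmr, fnmr = error_rates(ATTEMPTS, t)
    print(f"threshold={t:.1f}  FMR={fmr:.2f}  FNMR={fnmr:.2f}")
```

With these made-up scores, raising the threshold from 0.5 to 0.7 removes every false match but rejects a third of the genuine users. The matcher is unchanged in both cases; only the threshold decides who bears the error.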

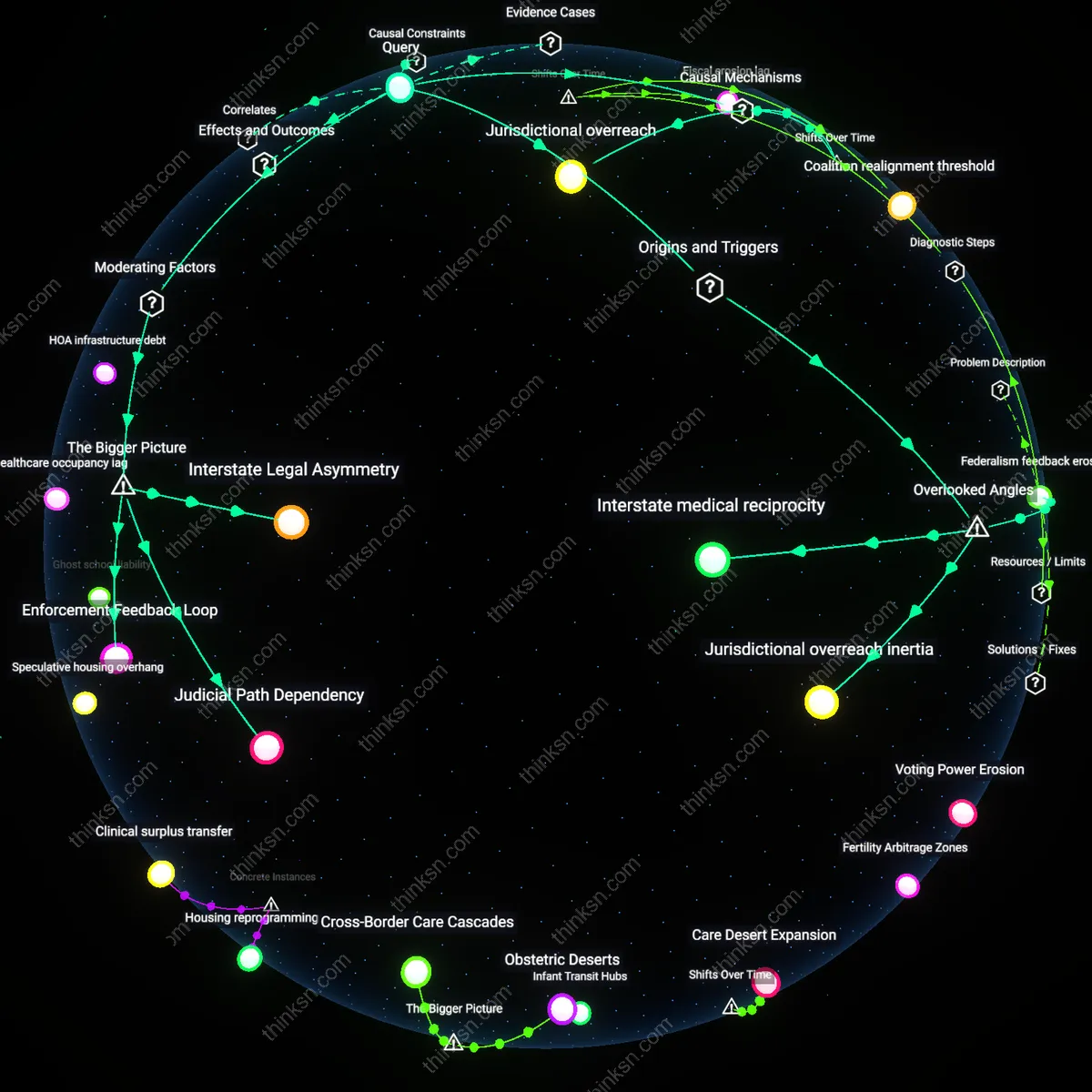

Infrastructure Capture

Early adoption of fingerprint and iris-based biometrics in development programs such as India’s Aadhaar established a path dependency in which exclusion became institutionalized through irreversible investments in centralized identification infrastructure backed by public-private partnerships. Once UIDAI (Unique Identification Authority of India) and technology vendors like L-1 Identity Solutions locked in large-scale deployment, the pivot from voluntary enrollment to welfare access conditional on biometric authentication transformed temporary technical limitations (e.g., fingerprint degradation among manual laborers) into permanent governance constraints. The overlooked dynamic is that international development agencies (e.g., the World Bank) and fintech investors enabled this capture by framing biometric IDs as efficiency solutions, embedding infrastructural assumptions that now keep alternative, less exclusionary models from gaining political or financial traction.

Racial Default Encoding

The 1960s FBI fingerprint classification system excluded non-white populations from its training sample, producing a technical standard that assumed white physiological norms as universal. The standard left out the fingerprint ridge density patterns of Black, Indigenous, and Latinx populations, and that omission was later carried over into digital biometric databases like IAFIS. The result was a racial default embedded in automated identity verification, one that persists in facial recognition false match rates today. The system’s design treated whiteness not as a racial category but as a technical baseline, making racial exclusion invisible to algorithmic audit while structuring downstream disparity. This reveals how early data curation choices become locked in as operational neutrality, rendering difference a failure state rather than a legitimate variation.

Infrastructure Refusal

In 2017, the U.S. Department of Homeland Security’s facial recognition system for airport screening failed to recognize transgender travelers because binary sex classifications were hardcoded into the biometric matching algorithm, a design inherited from driver’s license and passport systems that assume static, binary gender. This technical refusal reflects a broader institutional pattern in which biometric infrastructure actively denies recognition to non-normative identities by treating variation as error. The refusal is structural rather than incidental: the system requires conformity to function, and so produces invisibility as a condition of non-conformance. This demonstrates how biometric exclusion operates through infrastructural inflexibility, not merely data gaps.

Template Inertia

Biometric systems initially excluded populations with atypical physiological traits because early fingerprint and iris templates were calibrated on narrow demographic samples, particularly military-aged men in Global North countries. This technical path dependency persists because legacy template standards like ISO/IEC 19794 became entrenched in global interoperability frameworks, forcing modern systems to maintain a backward compatibility that silently perpetuates exclusion at the data structure level. Most analyses focus on algorithmic bias or dataset diversity, but the rigidity of template design goes unnoticed. The predefined shape and range of biometric data that a system accepts acts as a hidden gatekeeper, filtering out those whose bodies deviate from mid-20th-century Eurocentric norms. Biometric exclusion is thus not only reproduced through machine learning but hardwired into the pre-processing layers of enrollment infrastructure.
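One way to picture a template acting as a gatekeeper is an enrollment check that rejects a capture before any matching algorithm runs. The sketch below is an illustration under stated assumptions: the field names, the numeric bounds, and the `enroll` function are hypothetical and are not taken from ISO/IEC 19794 or from any real enrollment system.

```python
from dataclasses import dataclass

# Hypothetical bounds chosen for illustration only.
MIN_MINUTIAE = 12   # fewest ridge features the assumed template will store
MAX_MINUTIAE = 80   # most ridge features the assumed template will store
MIN_QUALITY = 40    # assumed capture-quality floor on a 0-100 scale


@dataclass(frozen=True)
class Capture:
    minutiae_count: int  # ridge features detected in the fingerprint image
    quality: int         # capture quality score, 0-100 (assumed scale)


def enroll(capture):
    """Return (accepted, reason). Runs before any matcher sees the data."""
    if not MIN_MINUTIAE <= capture.minutiae_count <= MAX_MINUTIAE:
        return False, "minutiae count outside template range"
    if capture.quality < MIN_QUALITY:
        return False, "quality below enrollment floor"
    return True, "ok"


# A worn fingerprint with few detectable ridges is refused at the template
# stage, regardless of how well any downstream algorithm might perform.
print(enroll(Capture(minutiae_count=9, quality=55)))
print(enroll(Capture(minutiae_count=34, quality=72)))
```

In this sketch, retraining or replacing the matcher would change nothing for the first capture, because the refusal happens in the fixed bounds of the template rather than in the algorithm.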

Calibration Colonialism

Modern biometric systems continue to fail on darker skin tones not because of intrinsic technological limits but because sensor calibration protocols originated in Western labs using predominantly light-skinned test subjects. Those protocols embedded a racialized baseline into hardware performance benchmarks, and the baseline persists even when algorithms are retrained. This material bias in optical and thermal sensors is rarely scrutinized because industry certification focuses on software accuracy, not hardware sensing thresholds. The underappreciated dynamic is that sensor manufacture, not just data or code, encodes exclusion, which shifts accountability from data scientists to equipment engineers and standards bodies like NIST, which historically overlooked variation in melanin reflectance. This surfaces a deeper lineage in which colonial observational practices evolved into technical norms governing whose physiological signals are considered legible by machines.

Legacy Exclusion Protocols

Early fingerprint classification systems like the Henry System embedded racialized assumptions about physiological difference that became foundational to forensic and immigration biometrics, privileging certain hand morphologies while misclassifying others. These protocols were standardized in colonial administrations and later adopted by U.S. and European border control, mechanically disqualifying individuals with worn or atypical dermal ridges—common among laborers—through technical thresholds disguised as neutrality. The non-obvious truth is that the bias wasn’t an artifact of digitization but baked into the analog logic of categorization itself, which assumed a normative bodily standard.

Normalized Recognition Gaps

The rollout of facial recognition in post-9/11 airport security infrastructure treated white male faces as the operational baseline, leading to systematically higher false rejection rates for women and people of color that were dismissed as 'edge cases' rather than systemic flaws. This normalization, codified in datasets like the FERET program and embedded in TSA trial logs, reinforced a feedback loop where repeated failures did not trigger recalibration but instead redefined who counted as recognizably legitimate. The underappreciated dynamic is how routine operational tolerances for error became social tolerances for exclusion, making invisibility administratively invisible.
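A small, purely illustrative calculation shows how an operational error tolerance can absorb a group-level failure. The group labels, trial counts, and the 5% tolerance below are invented assumptions, not figures from FERET or from TSA records.

```python
from collections import defaultdict

TOLERANCE = 0.05  # assumed acceptable overall false rejection rate


def false_rejection_rates(trials):
    """trials: iterable of (group, accepted) pairs for genuine users only.

    Returns (overall_rate, {group: rate}).
    """
    totals = defaultdict(int)
    rejects = defaultdict(int)
    for group, accepted in trials:
        totals[group] += 1
        if not accepted:
            rejects[group] += 1
    overall = sum(rejects.values()) / sum(totals.values())
    by_group = {g: rejects[g] / totals[g] for g in totals}
    return overall, by_group


# Invented trial log: group A dominates the traffic, group B is a minority.
TRIALS = (
    [("A", True)] * 88 + [("A", False)] * 2
    + [("B", True)] * 7 + [("B", False)] * 3
)

overall, by_group = false_rejection_rates(TRIALS)
print(f"overall={overall:.3f} within tolerance: {overall <= TOLERANCE}")
for group, rate in sorted(by_group.items()):
    print(f"group {group}: {rate:.3f}")
```

In this made-up log the overall rate sits exactly at the tolerance, so the system passes its own check, while group B is rejected in 3 of 10 attempts. Reporting only the aggregate is what allows such a gap to be filed as an edge case.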

Documentation Biome

India’s Aadhaar biometric ID project formalized the link between state recognition and bodily data by requiring iris and fingerprint scans for access to welfare, yet millions were denied services when their biometrics failed—particularly the elderly and manual workers whose physical changes defied database stability. This ecosystem of exclusion emerged not from technical failure but from the merging of colonial record-keeping impulses with digital permanence, turning transient bodily variation into permanent disqualification. What remains hidden is how the very push for inclusive documentation reproduced marginality by treating biometric consistency as a prerequisite for personhood.