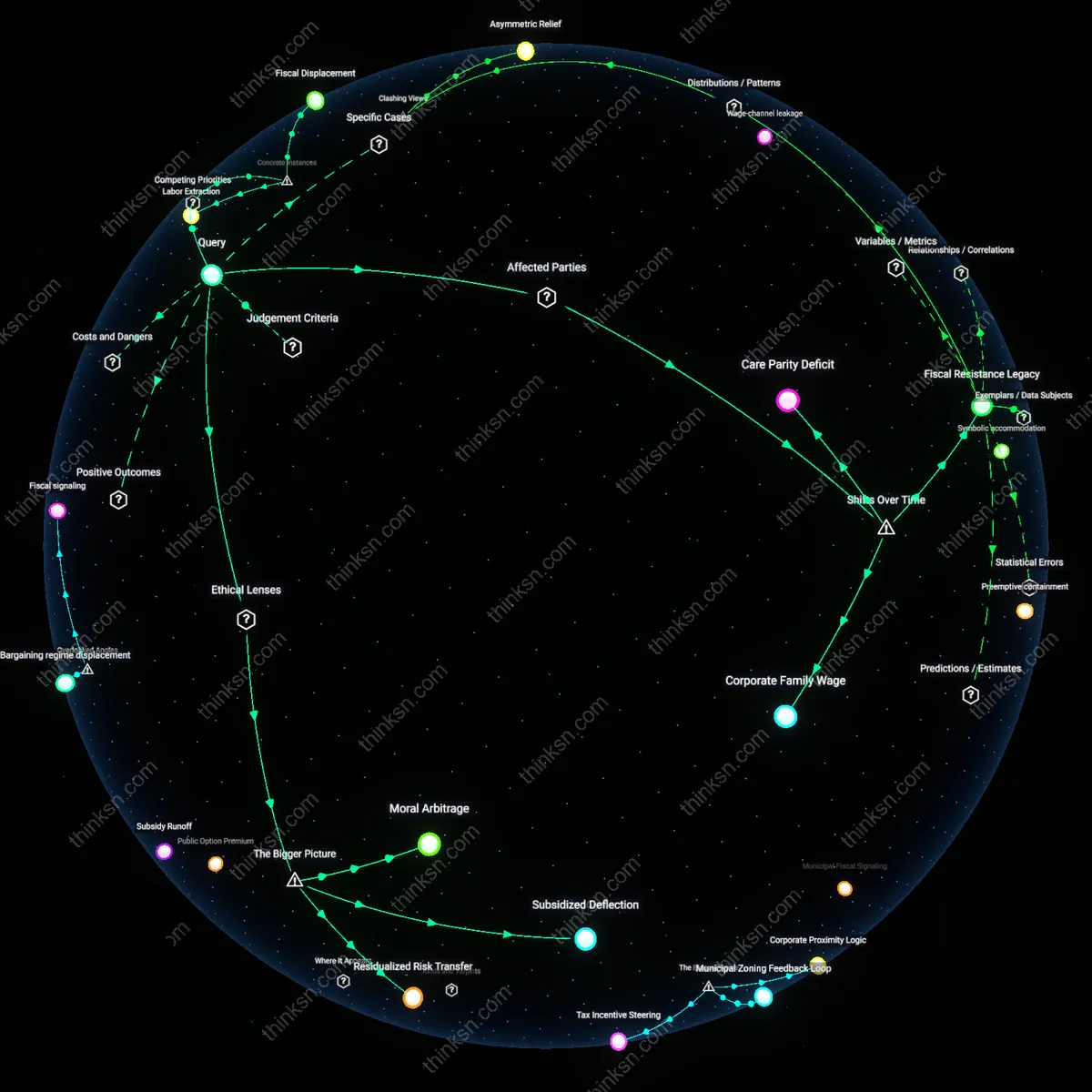

Precarity Lock-in

The rise of gig workplaces transformed confidentiality agreements in settlements from discrete legal compromises into structural features that reproduce worker precarity by dissolving the link between collective exposure and systemic reform. Gig drivers in California, for example, settled misclassification suits while forfeiting the right to publicly challenge algorithmic wage suppression. Enabled by federal preemption of state labor laws and the erosion of class-action avenues, these settlements function not as endings but as maintenance routines for platform capitalism, in which the suppression of disclosure sustains the very conditions that produce grievances. What goes unnoticed is that confidentiality does not just conceal abuse; it reorganizes time, preventing past harms from becoming shared reference points for future resistance.

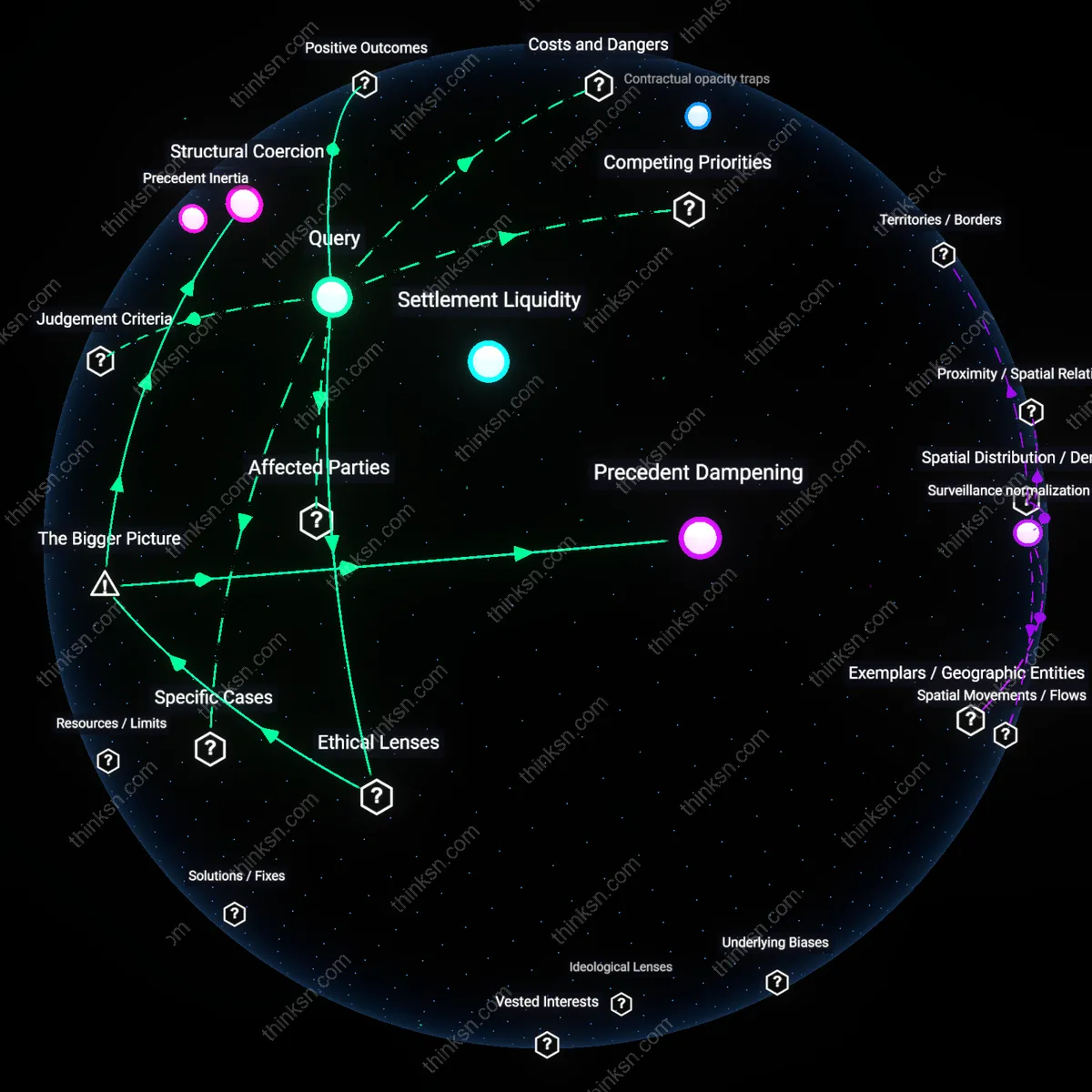

Contractual opacity traps

Confidentiality in gig worker settlements intensified not as a privacy safeguard but as a structural mechanism to conceal algorithmic management defaults, locking workers into unknowing compliance. Platforms like Uber and DoorDash embed non-disclosure clauses in arbitration agreements that prevent collective sense-making about how scheduling algorithms penalize cancellations or optimize labor costs through deactivation threats, making each dispute appear isolated and idiosyncratic. This opacity sustains a feedback loop where workers cannot trace patterns of coercion across cases, allowing companies to maintain control without formal employment obligations. What is overlooked is that confidentiality functions not primarily to protect trade secrets but to mask systemic operational cruelties embedded in code—rendering algorithmic discipline invisible and legally unchallengeable in aggregate.

Surveillance normalization debt

The expansion of surveillance in gig work reshaped confidentiality by treating worker monitoring data as proprietary inputs in settlement design, thereby shifting the burden of proof onto workers while undermining their testimony. Companies like Lyft and Instacart use granular location logs, app interaction timestamps, and performance scores to discredit worker claims before disputes arise, framing deviations from expected behavior as voluntary breaches. Confidentiality clauses then formalize this asymmetry by silencing worker counter-narratives that might expose how surveillance metrics are gamed to justify punitive scheduling or exclusion. The overlooked dynamic is that surveillance generates a form of evidentiary debt: accumulated data asymmetries that make resistance appear irrational or isolated, so that confidentiality feels like a personal compromise rather than systemic suppression.

Silent Compliance Pact

Confidentiality in gig worker settlements became a passive enforcement tool as companies institutionalized surveillance and algorithmic scheduling, turning nondisclosure into an expected norm rather than a negotiated condition. Platforms like Uber and DoorDash embedded arbitration clauses in onboarding agreements, automatically binding workers to secrecy when disputes arose, which minimized public scrutiny of labor practices. This shift made confidentiality function not as privacy protection but as a means of maintaining systemic silence. The non-obvious insight is that workers do not actively agree to secrecy at the moment of a dispute. Instead, they inherit it through sign-up agreements framed as routine formalities, making resistance feel futile rather than forbidden.

Operational Invisibility Loop

As gig platforms expanded real-time tracking and predictive scheduling, confidentiality evolved from a legal term in settlements to a behavioral byproduct of constant monitoring, where workers self-censored to avoid deactivation. Since algorithmic management penalizes deviations—such as discussing pay or organizing breaks—workers internalize secrecy as job-preserving conduct, not contractual obligation. This reframes confidentiality not as a signed agreement but as enforced discretion emerging from a feedback loop between surveillance intensity and job precarity, revealing how familiar notions of privacy erode under the guise of operational neutrality.

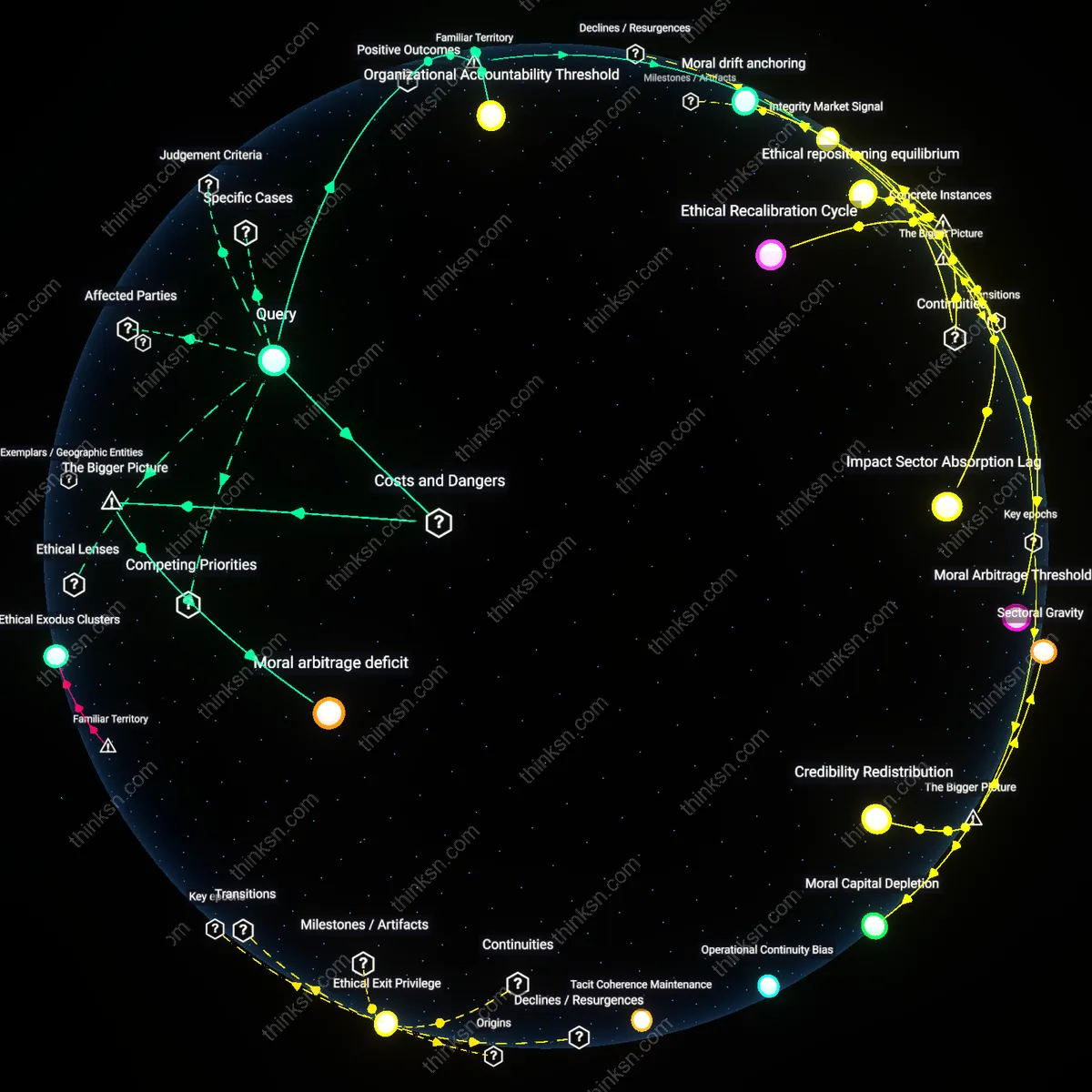

Normalization Drain

The repetition of identical settlement terms across thousands of gig workers diluted confidentiality from a protective shield into a procedural default, mirroring how arbitration clauses became invisible through ubiquity. As courts upheld these terms en masse and media coverage shifted from individual lawsuits to systemic critiques, the public stopped seeing confidentiality as a legal mechanism and began treating it as an inevitable cost of gig work. The underappreciated consequence is that familiar outrage over secrecy faded not because the issue resolved, but because constant exposure to non-disclosure outcomes drained its emotional and political salience, turning protest into passive expectation.

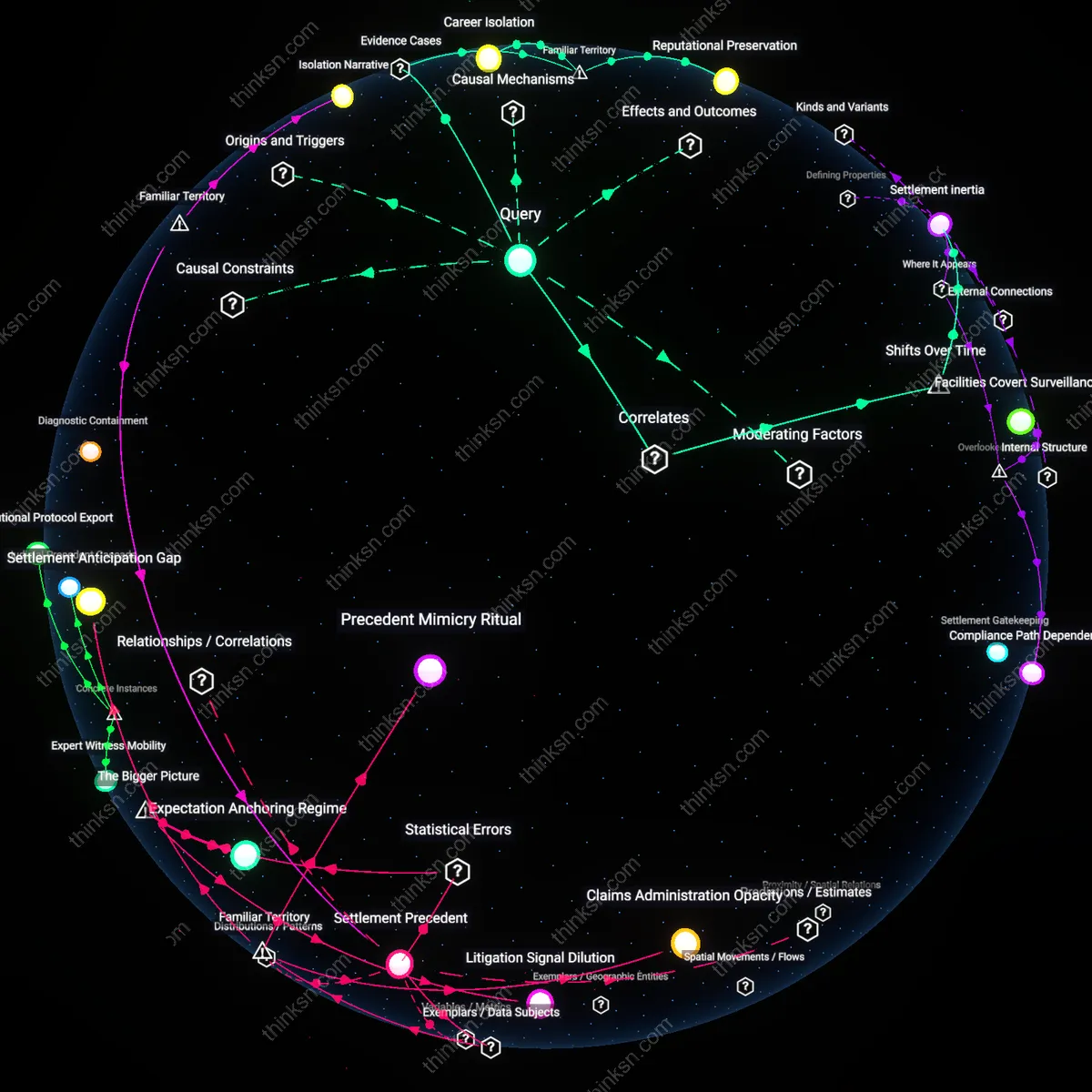

Algorithmic NDA Enforcement

Uber’s 2017 internal policy mandating automated suppression of driver dispute details through its claims management system directly tied confidentiality to algorithmic oversight, embedding nondisclosure as a condition of dispute resolution within its digital platform infrastructure. This policy required drivers to accept binding arbitration and silence on penalty of deactivation, enforced through the same app interface used for work scheduling, making confidentiality an operational layer of algorithmic control rather than a standalone legal agreement. The mechanism fused labor discipline with data governance, treating worker speech as a breach of system integrity—revealing that nondisclosure evolved not merely as legal protection but as a structural component of real-time platform governance. What is underappreciated is that the enforcement mechanism itself—automated, embedded in the workflow—rendered confidentiality invisible and frictionless, normalizing silence through design rather than overt coercion.

Surveillance-Based Waiver Triggers

In 2020, Amazon Flex’s updated Terms of Use introduced a clause automatically revoking driver access upon any public commentary about working conditions, where monitoring occurred via geofenced delivery zones and app-based behavior tracking that logged metadata patterns associated with protest coordination. This shift tied confidentiality not to signed agreements alone but to behavioral compliance measured through operational surveillance systems—making the act of speaking out retroactively invalidate prior settlement terms through automated detection. The dynamic reframed confidentiality as a revocable privilege contingent on continuous data obedience, transforming settlement outcomes into perpetually enforceable contracts monitored by logistics algorithms. The non-obvious insight is that the artifact—the Terms of Use update—wasn’t merely contractual but calibrated to exploit the worker’s dependence on uninterrupted app access, weaponizing scheduling control as a disciplinary tool.

Controlled Disclosure Pathways

DoorDash’s 2019 implementation of a proprietary ‘Resolution Center’ forced gig workers into a closed-loop digital interface for all payout disputes, where any settlement required using the platform’s in-app form that disabled external communication and routed all data through internal compliance logs. This artifact centralized dispute resolution within a surveilled architecture, making confidentiality the default operational state—workers could not exit the system to seek third-party legal or public support without forfeiting compensation. The system operated by treating information leakage as a technical failure, positioning the gig platform as the sole legitimate interpreter of labor grievances. What remains underappreciated is that this did not simply restrict speech but replaced the legal arena with a privatized, data-capturing tribunal where confidentiality was engineered into the user journey itself.