Free Cloud Storage: Whose Data Risks Political Manipulation?

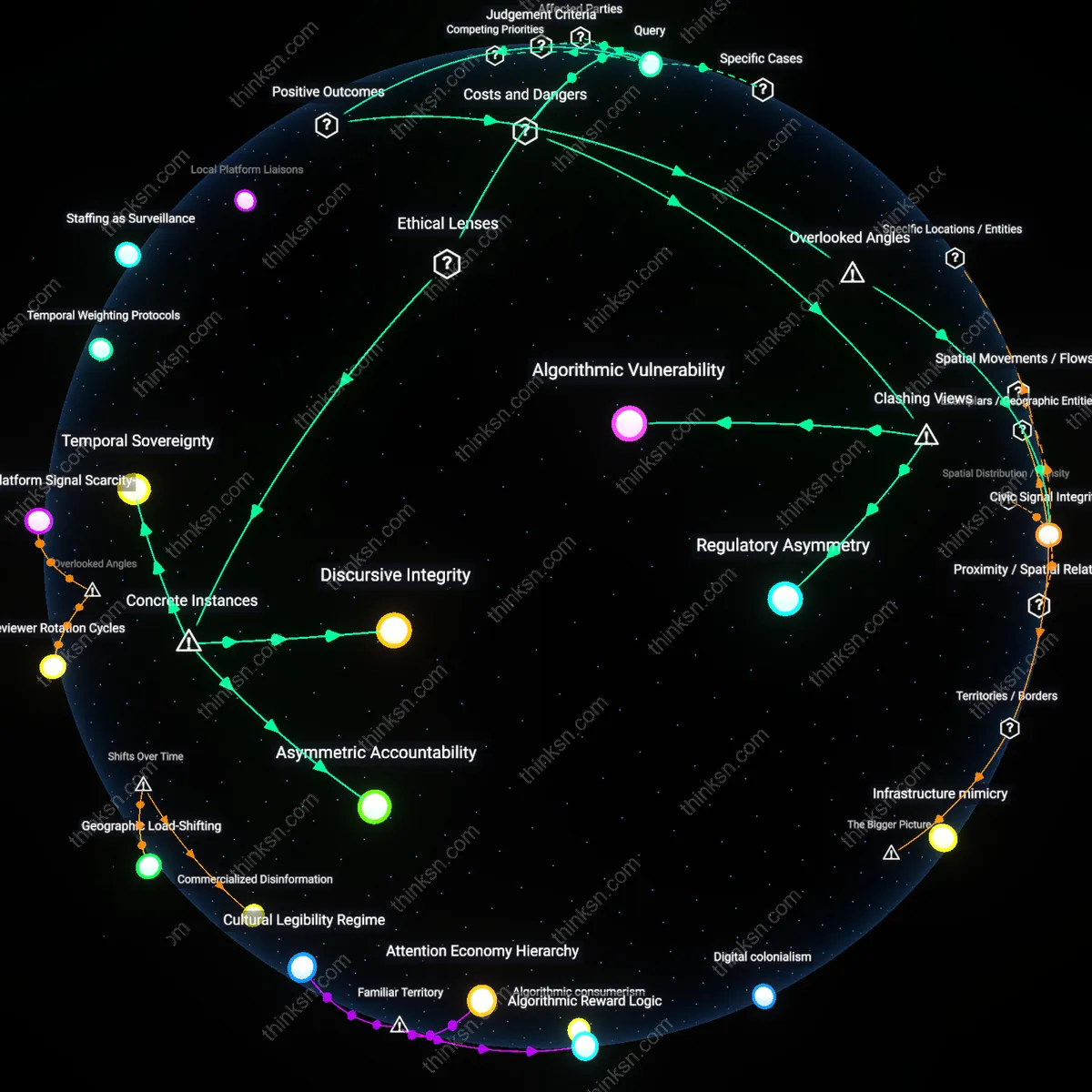

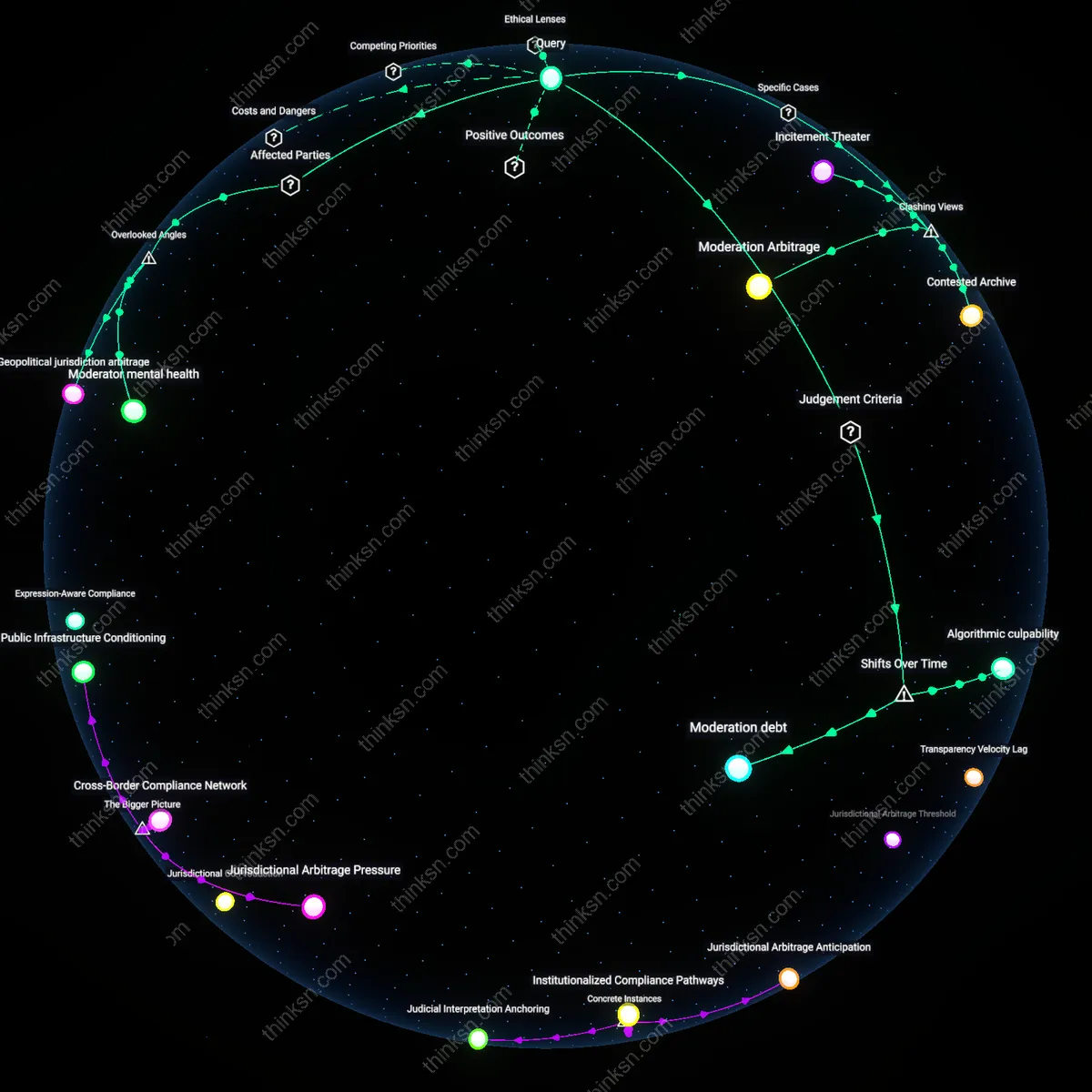

The analysis identifies eight key thematic connections.

Key Findings

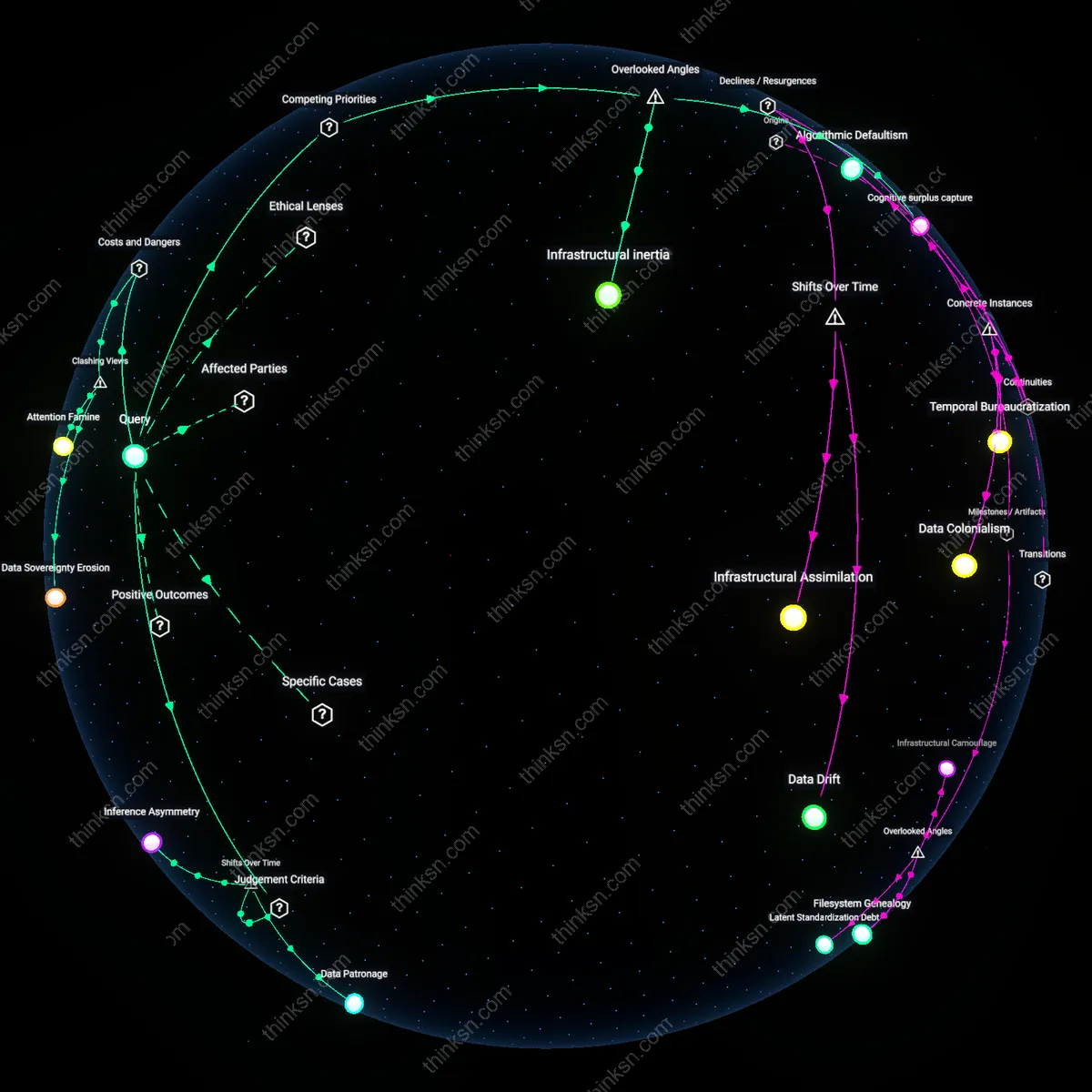

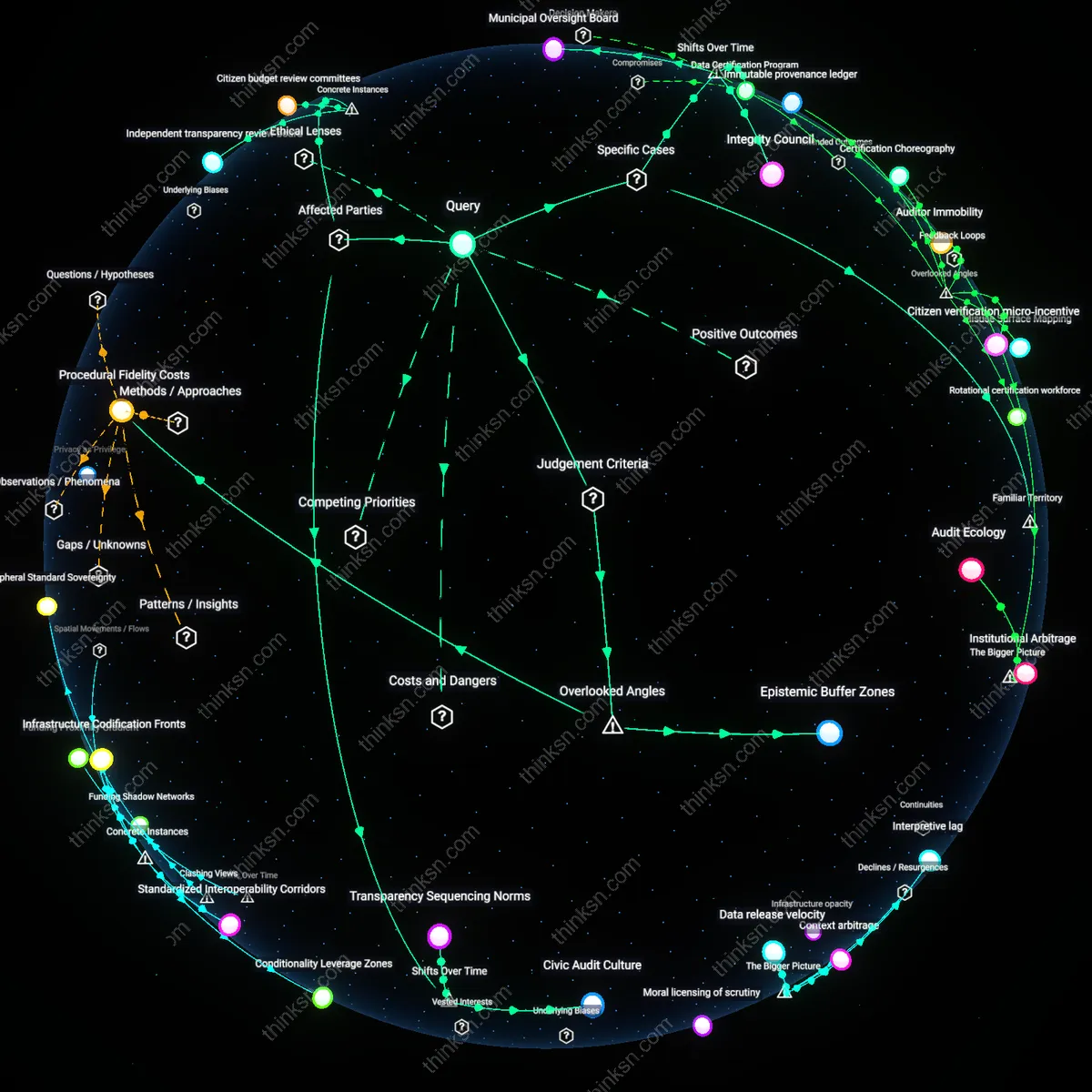

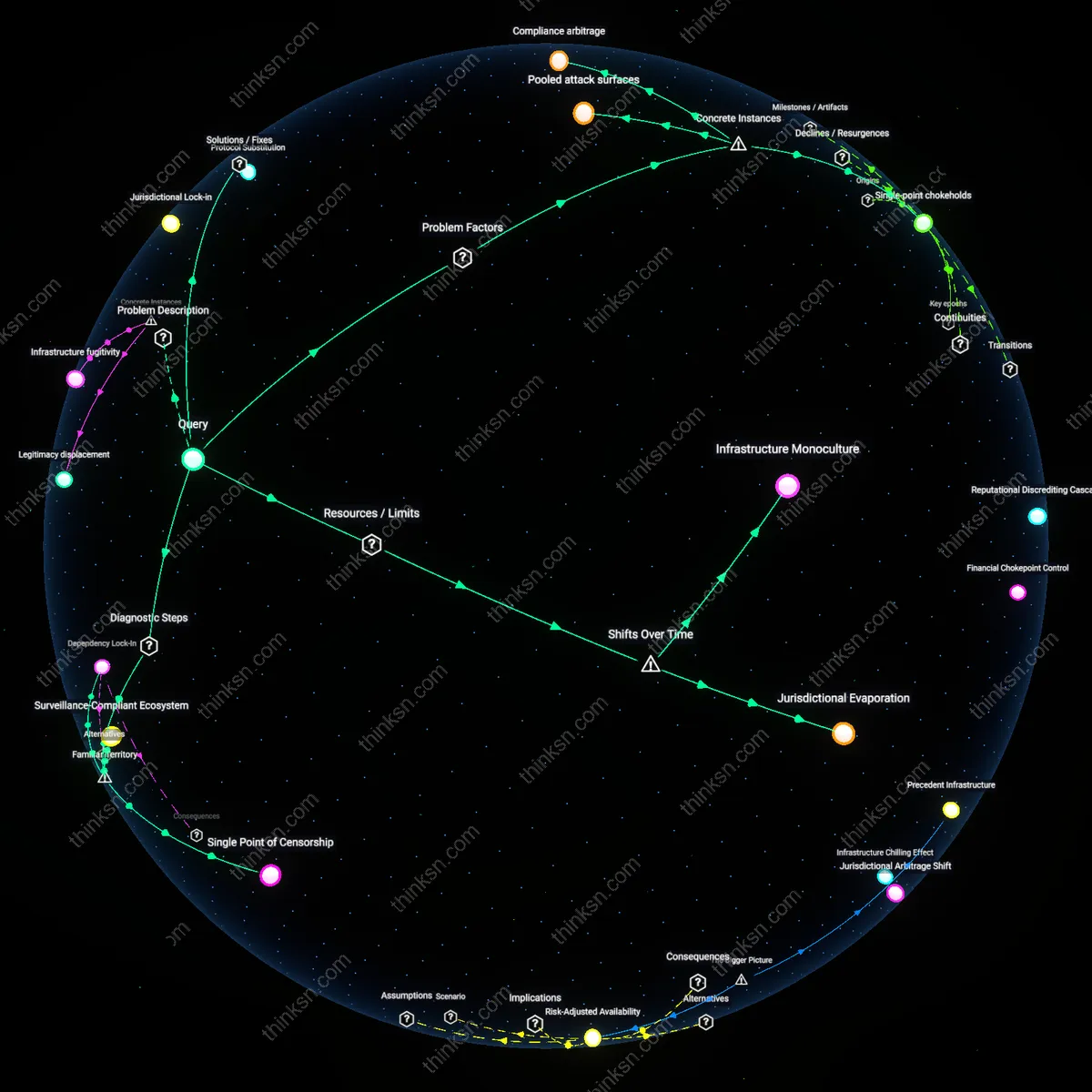

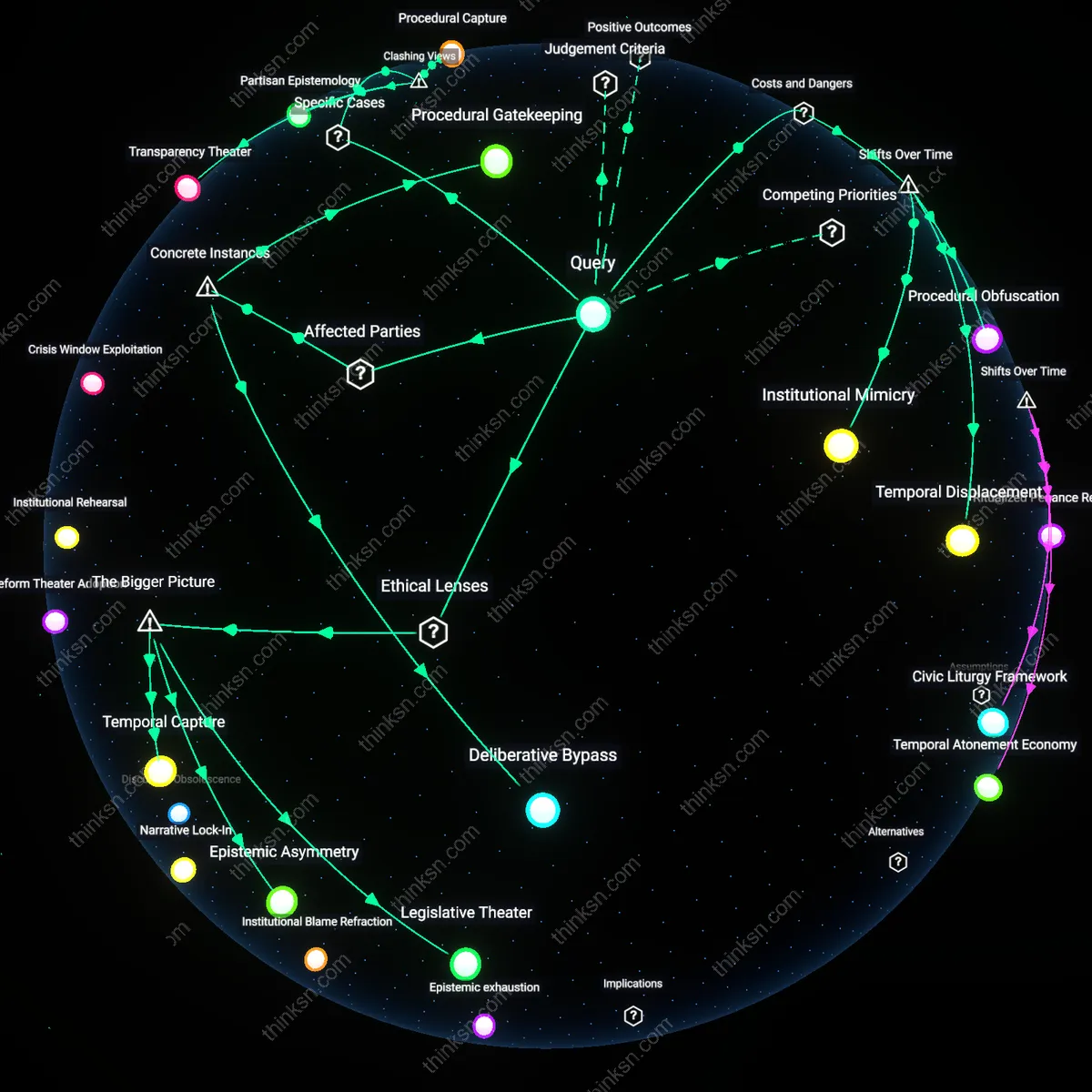

Data Patronage

Free cloud storage should be regulated as a public utility. Since the 2010s it has shifted from a personal utility to infrastructure for mass data aggregation, embedding user dependency in opaque corporate ecosystems where access is exchanged for behavioral data that can be repurposed through algorithmic profiling. This mechanism mirrors historical utility capture, in which essential services become vectors of control under the guise of convenience. It shows how sovereignty is now negotiated through digital dependency rather than direct state action.

Consent Obsolescence

Users must be granted dynamic data withdrawal rights. The post-2016 evolution of cloud platforms, from static repositories to real-time data synthesis engines, has rendered informed consent functionally meaningless: data once shared in isolation becomes politically actionable only when aggregated and recontextualized over time. This shift exposes consent as a ritual of legitimacy rather than a mechanism of control, and it shows how the temporal recomposition of data undermines foundational privacy paradigms.

Inference Asymmetry

Political exposure from aggregated cloud data should be mitigated by limiting third-party inference rights. The transition from user-generated content platforms (pre-2012) to AI-driven behavioral prediction systems (post-2018) has decoupled data collection from intent, enabling actors such as political consultancies to exploit metadata patterns that are never visible to the individual. Harm therefore arises not from what users disclose but from what can now be inferred at scale, which exposes a structural imbalance between predictive power and individual agency.

Data Sovereignty Erosion

Free cloud storage accelerates the de facto transfer of national data governance to transnational tech firms, enabling political manipulation not through targeted disinformation but through systemic exclusion of public institutions from oversight of critical information infrastructure. Governments unable to regulate or access data concentrated in foreign-owned cloud ecosystems lose leverage over electoral processes, public discourse, and civic identity formation—particularly in postcolonial states reliant on U.S.-based platforms. This structural dependency, masked as consumer convenience, entrenches digital neocolonialism by rendering state sovereignty contingent on corporate compliance. The non-obvious risk is not misuse of data per se, but the quiet dismantling of democratic accountability through infrastructural asymmetry.

Attention Famine

The real danger of free cloud storage lies not in data aggregation for manipulation but in how it perpetuates an attention economy that starves democratic deliberation of cognitive resources, making populations more susceptible to manipulation irrespective of data exploitation. By enabling limitless storage and seamless content delivery, cloud platforms eliminate natural friction in information consumption, fostering compulsive engagement that depletes the mental bandwidth necessary for critical political judgment. Political manipulation thus thrives not because data is weaponized, but because users are cognitively exhausted before they even encounter it. The overlooked mechanism is not surveillance, but the industrial-scale depletion of reflective capacity under conditions of information abundance.

Infrastructure Co-option

Political manipulation emerges less from intentional data mining than from the repurposing of free cloud storage systems by authoritarian regimes to retroactively reengineer historical narratives through centralized control of digitized cultural and bureaucratic records. When governments adopt cloud services for public administration, they inadvertently place archival memory—birth records, legal documents, educational histories—under the operational control of platforms subject to foreign jurisdiction and algorithmic opacity. This enables gradual, automated revisionism where access to the past is filtered through privately governed systems optimized for efficiency, not truth preservation. The underappreciated risk is not surveillance capitalism, but the silent colonization of collective memory by infrastructure designed for scalability, not fidelity.

Infrastructural Inertia

Prioritizing free cloud storage entrenches state dependency on privately owned digital infrastructure, which over time diminishes public-sector capacity to maintain sovereign data ecosystems. This occurs as governments outsource storage and computation to global platforms like Google Cloud or AWS, inadvertently ceding operational control over citizen data flows to firms whose governance models are opaque and whose incentive structures are misaligned with democratic accountability. The non-obvious risk is not the data breach or surveillance event itself, but the gradual erosion of the institutional ability to exit these systems when political manipulation emerges, because migrating petabytes of aggregated data across jurisdictions or ownership models becomes technically and fiscally prohibitive. This structural lock-in is rarely factored into policy cost-benefit analyses, which treat cloud access as a reversible, temporary convenience rather than a path-dependent commitment that reshapes state sovereignty.

Cognitive Surplus Capture

Free cloud storage acts as a subsidy that converts user attention and behavioral compliance into invisible inputs for training political AI systems. The act of organizing personal files or syncing calendars generates labeled, time-stamped data used to refine models that later categorize populations by ideological predictability. This occurs through backend machine learning pipelines at firms like Alibaba Cloud or Dropbox, which repurpose routine digital hygiene as training data for sociopolitical classification. The overlooked mechanism is not surveillance but participation: users, mistaking convenience for neutrality, unknowingly contribute cognitive labor to systems that will eventually sort them into political risk categories. This transforms the privacy-security trade-off into a labor extraction model, where the 'free' tier functions as a data plantation harvesting user cognition for downstream governance applications.