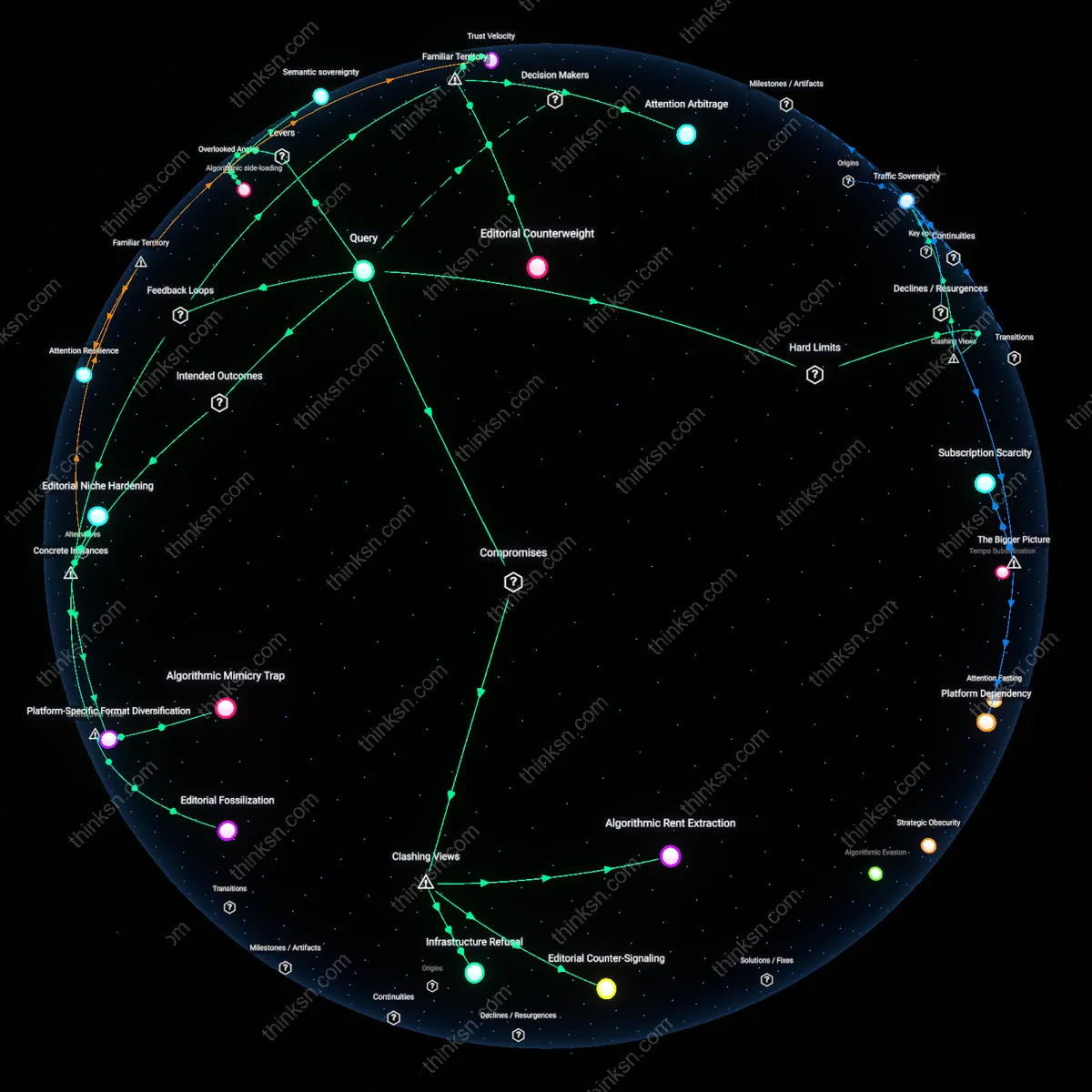

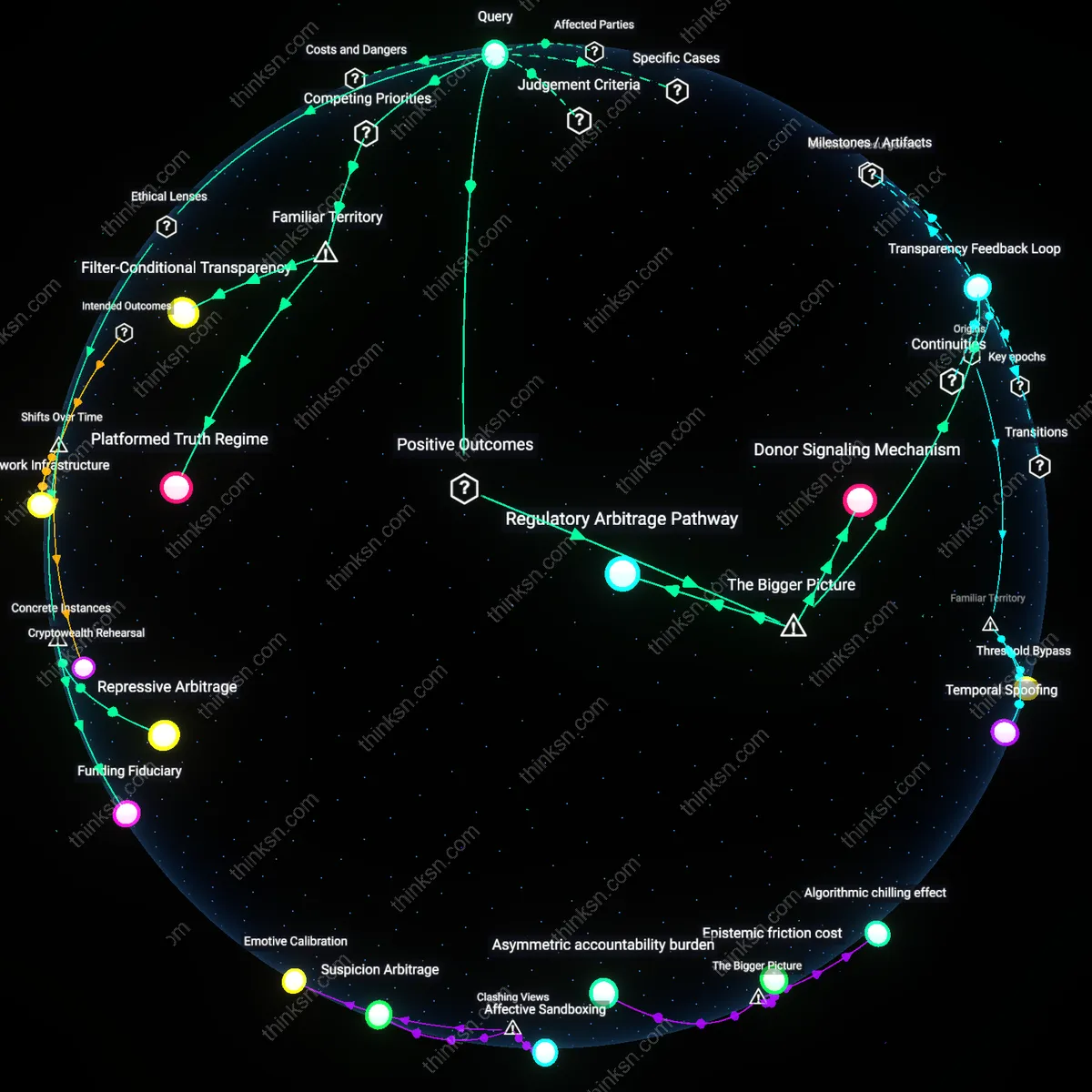

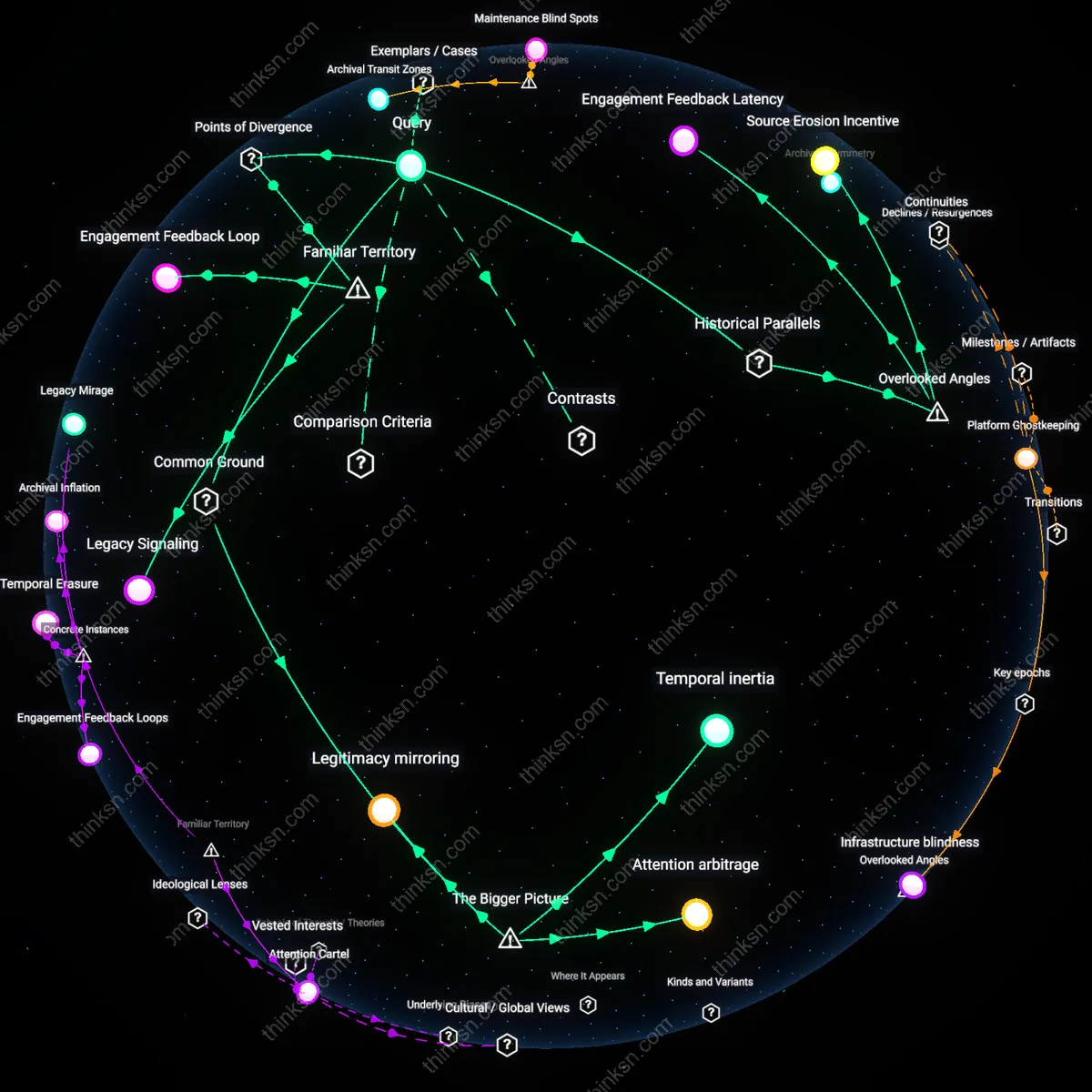

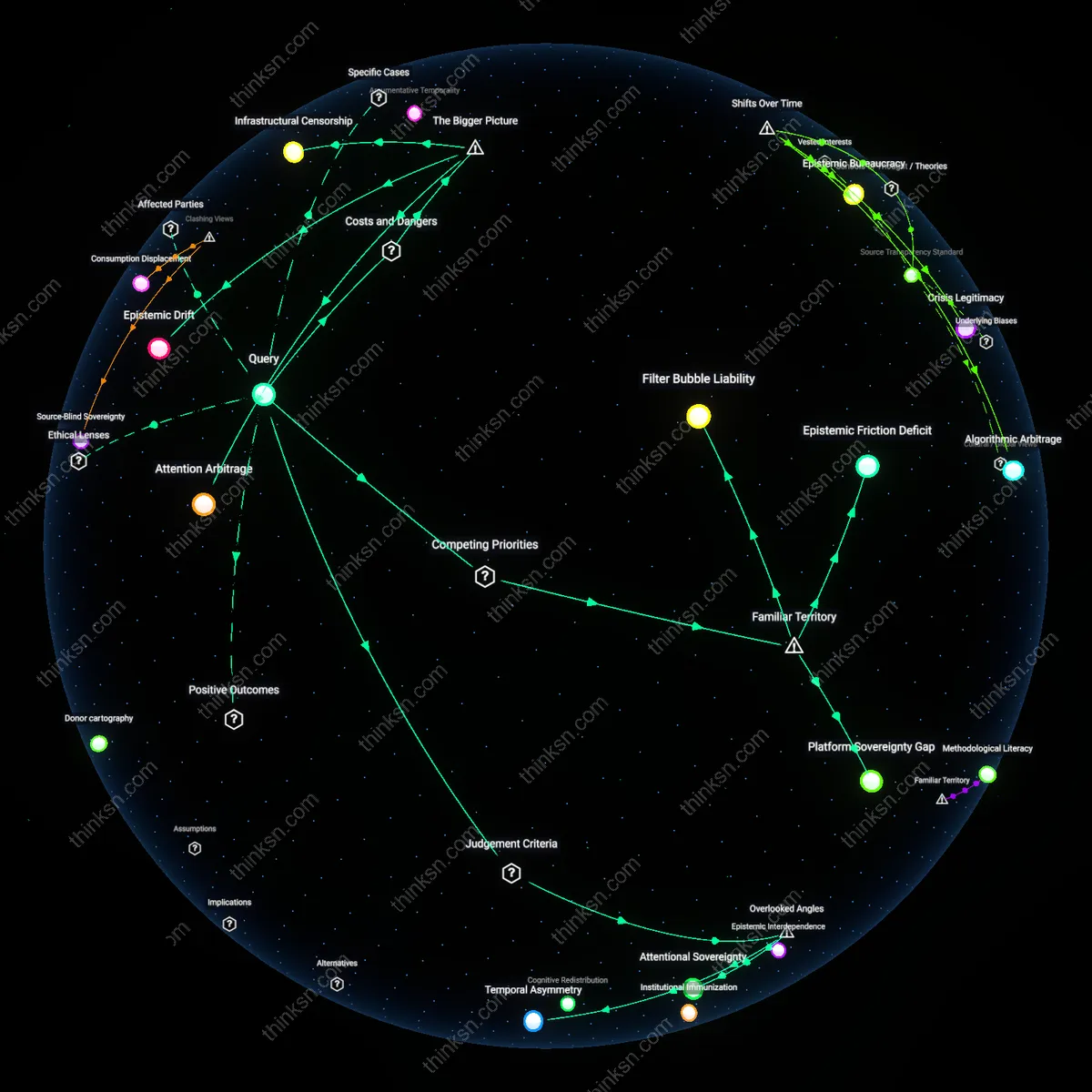

Is Relying on One Search Algorithm Threatening News Independence?

Analysis reveals 15 key thematic connections.

Key Findings

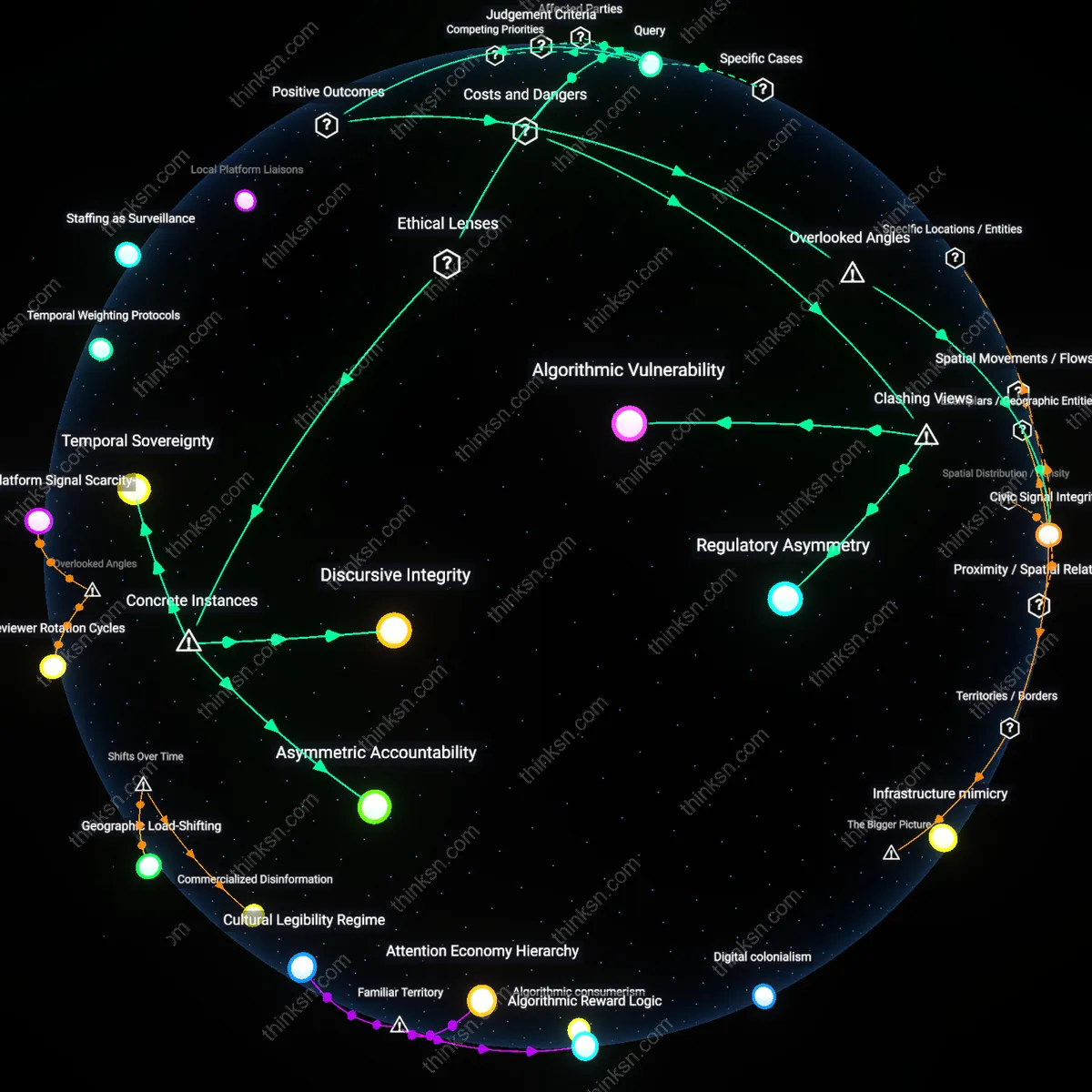

Algorithmic Arbitrage

A small news outlet can maintain editorial independence by strategically gaming the search algorithm’s engagement metrics without aligning with its ideological incentives. Journalists and editors exploit known ranking triggers—such as headline structure, keyword density, and meta-description timing—not to compromise content integrity, but to reroute algorithmic amplification toward stories they deem editorially vital; this operates through reverse-engineering SEO patterns from dominant outlets while preserving dissident narratives beneath compliant packaging. What is non-obvious is that algorithmic dependency does not necessitate ideological concession—search platforms reward predictability, not alignment—enabling editorial resistance to thrive in the shell of compliance.

Traffic Sovereignty

A small news outlet can preserve editorial independence only by rejecting the premise that search-driven traffic must be maximized, instead capping algorithmic exposure at a threshold that avoids dependency. Editors set technical and editorial limits—such as disabling SEO metadata beyond a certain reach or rotating publishing domains—so that no single algorithm can become the sole arbiter of survival. This functions through deliberate under-monetization and audience fragmentation, prioritizing reader loyalty over scale. What challenges the dominant view is the assertion that independence requires not adaptation to the algorithm, but enforced irrelevance to it—revealing that survival in the attention economy may depend on refusing its core logic.

Algorithmic Side-Loading

A small news outlet should develop alternate distribution channels that mimic the algorithmic logic of dominant platforms but operate independently through syndicated email digests curated by audience behavior patterns. By reverse-engineering engagement signals—such as time-on-page and sharing velocity—into a lightweight recommendation engine for newsletters, the outlet gains reach without ceding control to external algorithms. This leverages user behavior data as a tactical asset rather than a byproduct, inserting editorial curation into algorithmic logic—a dimension typically obscured by platform opacity. The overlooked dynamic is that algorithmic dependence stems less from technological disparity than from passive data consumption; actively repurposing engagement metrics subverts extraction-based dependencies.
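The idea of turning engagement signals into a lightweight newsletter recommender can be sketched roughly as follows. This is a minimal illustration, not a description of any outlet's actual system: the `StoryStats` fields, the 60/40 weighting, the normalization caps, and the `editor_boost` multiplier are all assumptions introduced here to show how editorial curation could sit inside the scoring logic.

```python
from dataclasses import dataclass


@dataclass
class StoryStats:
    """Hypothetical per-story engagement record collected by the outlet itself."""
    slug: str
    median_seconds_on_page: float
    shares_last_hour: int
    editor_boost: float = 1.0  # assumed editorial multiplier; 1.0 means neutral


def score(story: StoryStats, max_seconds: float = 300.0, max_shares: float = 50.0) -> float:
    """Blend time-on-page and sharing velocity, then apply the editorial weight.

    Both signals are capped and scaled to the range 0..1 so neither dominates.
    The 0.6 / 0.4 weights are illustrative assumptions.
    """
    dwell = min(story.median_seconds_on_page / max_seconds, 1.0)
    velocity = min(story.shares_last_hour / max_shares, 1.0)
    return story.editor_boost * (0.6 * dwell + 0.4 * velocity)


def pick_digest(stories: list[StoryStats], size: int = 3) -> list[str]:
    """Return the slugs of the highest-scoring stories for the next email digest."""
    ranked = sorted(stories, key=score, reverse=True)
    return [s.slug for s in ranked[:size]]


stories = [
    StoryStats("zoning-investigation", 240, 5),
    StoryStats("viral-weather-clip", 60, 40),
    StoryStats("school-board-audit", 120, 10, editor_boost=1.5),
]
print(pick_digest(stories, size=2))
```

The `editor_boost` field is the point of the sketch: the ranking uses the same behavioral signals a platform would, but the newsroom decides how much weight its own priorities carry.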

Semantic Sovereignty

A small news outlet must assert control over metadata structuring—specifically schema.org tagging and on-page semantic markup—to shape how search algorithms interpret and prioritize its content. By customizing structured data to emphasize context, authorship, and editorial intent rather than click-optimized signals, the outlet influences algorithmic representation without altering headlines or angles. This shifts power to an infrastructural layer usually relegated to technical SEO teams, revealing that editorial independence includes syntactic control over how meaning is machine-readable. The non-obvious insight is that meaning is not just authored by journalists but co-constructed through backend code, making semantic design a covert editorial lever.
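As a rough illustration of what such markup might look like, the sketch below builds a schema.org `NewsArticle` description as JSON-LD and wraps it in a script tag for the page head. The helper names, the example values, and the choice of properties (`author`, `about`, and `publishingPrinciples` on the publisher) are assumptions made for illustration; which properties a given search engine actually reads is outside the scope of this example.

```python
import json


def news_article_jsonld(headline, author_name, date_published,
                        topics, publisher_name, principles_url):
    """Build a schema.org NewsArticle description that foregrounds
    authorship, subject context, and the outlet's stated editorial policy."""
    return {
        "@context": "https://schema.org",
        "@type": "NewsArticle",
        "headline": headline,
        "datePublished": date_published,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {
            "@type": "NewsMediaOrganization",
            "name": publisher_name,
            # Link to the outlet's published editorial standards.
            "publishingPrinciples": principles_url,
        },
        # Describe what the story is about, independent of the headline wording.
        "about": [{"@type": "Thing", "name": topic} for topic in topics],
    }


def as_script_tag(data):
    """Serialize to a JSON-LD script tag, escaping '<' so that string values
    cannot close the tag early."""
    payload = json.dumps(data, indent=2).replace("<", "\\u003c")
    return '<script type="application/ld+json">' + payload + "</script>"


article = news_article_jsonld(
    headline="Council delays vote on rezoning plan",
    author_name="Example Reporter",          # placeholder value
    date_published="2024-01-15",             # placeholder value
    topics=["Land use", "Local government"],
    publisher_name="Example Weekly",         # placeholder value
    principles_url="https://example.org/ethics",
)
print(as_script_tag(article))
```

Keeping the markup in a small function like this lets editors, not only SEO specialists, review what the page tells machines about a story.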

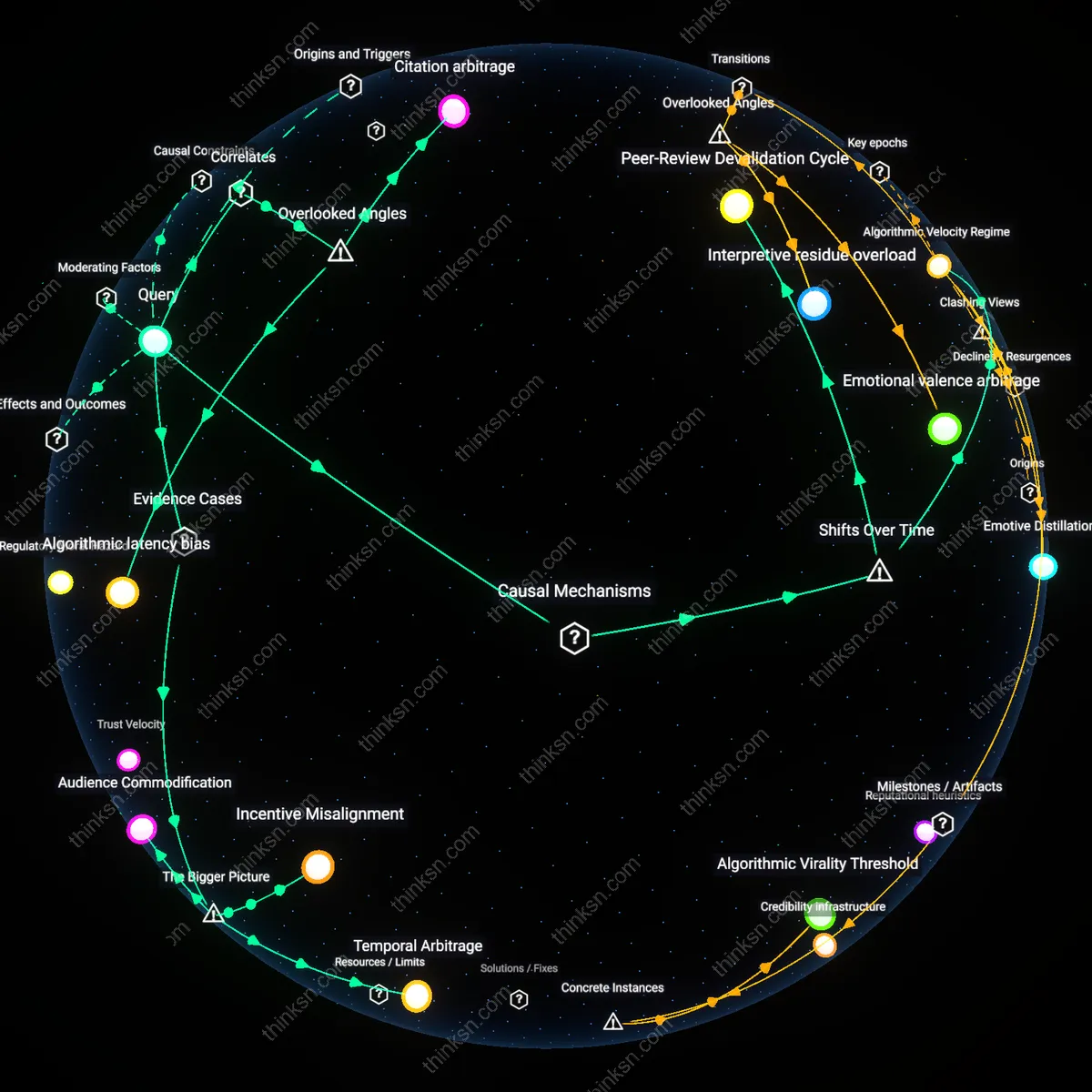

Algorithmic Mimicry Trap

A small news outlet can maintain editorial independence by decoupling its content strategy from search algorithm feedback loops through external validation mechanisms like nonprofit fellowships or academic partnerships. This breaks the reinforcing loop in which algorithmic favor increases traffic, traffic incentivizes algorithm-aligned content, and that content further entrenches dependence, a loop now dominated by outlets that mimic algorithmic preferences rather than serve the public interest. Historically, the shift from print-distributed curation (pre-2000s) to algorithmically mediated distribution (post-2010s) made editorial survival a function of machine readability rather than journalistic integrity, which reveals mimicry as systemic capture rather than adaptation. What's underappreciated is that outlets resisting this loop don't just preserve autonomy; they expose algorithmic mimicry as a developmental dead end, not an evolutionary adaptation.

Editorial Fossilization

By anchoring editorial priorities to historically rooted community roles, such as local accountability or investigative oversight, small outlets can resist algorithmic assimilation through balancing feedback from long-standing civic expectations. This works because legacy audience relationships, particularly in geographically bounded or identity-based communities (e.g., rural weeklies or ethnic press), generate counter-pressure when algorithm-driven content displaces mission-driven reporting, triggering corrective adjustments in editorial practice. The pivotal shift occurred between 2008 and 2015, when SEO-driven analytics displaced circulation-based metrics as the primary feedback mechanism, transforming audience responsiveness from a spatially anchored dynamic to a behaviorally extracted one. The residual state, editorial fossilization, emerges when independence is preserved not through resistance but through the inertial weight of institutional memory: change is costly and visibility is low, but coherence stays intact.

Traffic Diversification Threshold

A small news outlet sustains editorial independence only after crossing a traffic diversification threshold where no single algorithm contributes more than 40% of total referrals, achieved via coordinated platform pluralism—simultaneously cultivating email newsletters, podcast syndication, and social micro-communities. This shifts the system from a reinforcing loop (algorithmic traffic begets resource allocation, which optimizes for more algorithmic traffic) to a balancing one, where diminishing returns from algorithmic platforms trigger investment in owned or peer-based channels. The defining transition began around 2018, when Google’s algorithm updates systematically deprioritized pure content aggregators, forcing early-mover outlets to treat algorithmic traffic as volatile rather than foundational. The underappreciated insight is that independence is not a baseline condition but a phase change occurring only after structural redundancy is achieved, revealing algorithmic dependence as a developmental stage, not a permanent constraint.
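The threshold test described above is simple arithmetic, and a short sketch makes it concrete. The source names and visit counts below are made-up illustrations; only the 40% ceiling comes from the text.

```python
def referral_shares(referrals: dict[str, int]) -> dict[str, float]:
    """Convert raw referral counts per source into fractions of total referrals."""
    total = sum(referrals.values())
    if total == 0:
        return {}
    return {source: count / total for source, count in referrals.items()}


def sources_over_threshold(referrals: dict[str, int], threshold: float = 0.40) -> list[str]:
    """List every source whose share of referrals exceeds the threshold."""
    shares = referral_shares(referrals)
    return sorted(source for source, share in shares.items() if share > threshold)


# Hypothetical monthly referral counts for one outlet.
before = {"google_search": 5500, "email": 2500, "podcast": 1000, "social": 1000}
after = {"google_search": 3500, "email": 3000, "podcast": 1500, "social": 2000}

print(sources_over_threshold(before))  # ['google_search'] (55% of referrals)
print(sources_over_threshold(after))   # [] (largest share is 35%)
```

An empty list means no single source sits above the ceiling, which is the condition the paragraph treats as having crossed the diversification threshold.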

Attention Arbitrage

A small news outlet can balance algorithmic dependence and editorial independence by diversifying referral sources to reduce exposure to any single platform’s ranking logic. Editors and growth teams must actively distribute content across alternative channels, such as email newsletters, podcast appearances, or cross-platform social posting, not just to gain audience but to create feedback insulation when search algorithms shift suddenly. This counters the reinforcing loop where algorithmic visibility begets more traffic, which incentivizes editorial mimicry of algorithm-favored formats, further entrenching dependence. What’s underappreciated is that diversification isn’t just about audience growth: it structurally weakens the platform’s ability to dictate newsroom behavior by making attention less contingent on algorithmic approval.

Editorial Counterweight

A small news outlet should designate an internal role tasked explicitly with auditing content decisions against platform-driven incentives to preserve core editorial values. This person or committee reviews story selection, headlines, and formatting not for SEO compliance or algorithmic friendliness, but for alignment with the outlet’s mission, resisting the feedback loop where high-performing algorithmic content skews coverage toward sensational or repetitive topics. The mechanism operates through institutional memory and normative pushback, injecting friction into production cycles where speed and optimization would otherwise dominate. The non-obvious insight is that the most familiar solution—having principles—is ineffective unless embedded in a role that actively disrupts the reflex to chase algorithmic rewards.

Trust Velocity

A small news outlet can reposition trust with its audience as a faster-growing asset than algorithmic reach, thereby reducing the perceived cost of defying algorithmic incentives. By transparently explaining editorial choices—such as why a slow investigation is prioritized over a trending topic—the outlet builds audience loyalty that functions as a balancing loop against platform dependency, because trusted audiences seek the outlet directly rather than via search. This leverages the familiar idea that trust matters, but reframes it as a dynamic variable that can accelerate in response to deliberate signaling, not just accumulate passively over time. The underappreciated point is that trust isn’t just a shield—it can be operationalized as a growth metric that competes with algorithmic metrics like click-through rate.
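One way to make "trust as a growth metric" measurable is sketched below. It assumes, purely for illustration, that direct visits (readers who arrive without a search or social referral) are a usable proxy for trust, and it compares their average growth rate with that of search-referred visits. The function names and the sample figures are hypothetical.

```python
def mean_growth_rate(series: list[float]) -> float:
    """Average period-over-period growth of a series of visit counts.

    Periods that start from zero are skipped because growth is undefined there.
    """
    rates = [
        (current - previous) / previous
        for previous, current in zip(series, series[1:])
        if previous > 0
    ]
    return sum(rates) / len(rates) if rates else 0.0


def trust_velocity(direct_visits: list[float], search_visits: list[float]) -> float:
    """Positive when direct (trust-proxy) traffic grows faster than search traffic."""
    return mean_growth_rate(direct_visits) - mean_growth_rate(search_visits)


# Hypothetical weekly visit counts.
direct = [1000, 1100, 1210]   # grows 10% per week
search = [5000, 5000, 5000]   # flat

print(round(trust_velocity(direct, search), 4))
```

Tracking the difference rather than the raw totals matters here: search traffic can remain far larger in absolute terms while the trust proxy is still the faster-growing asset.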

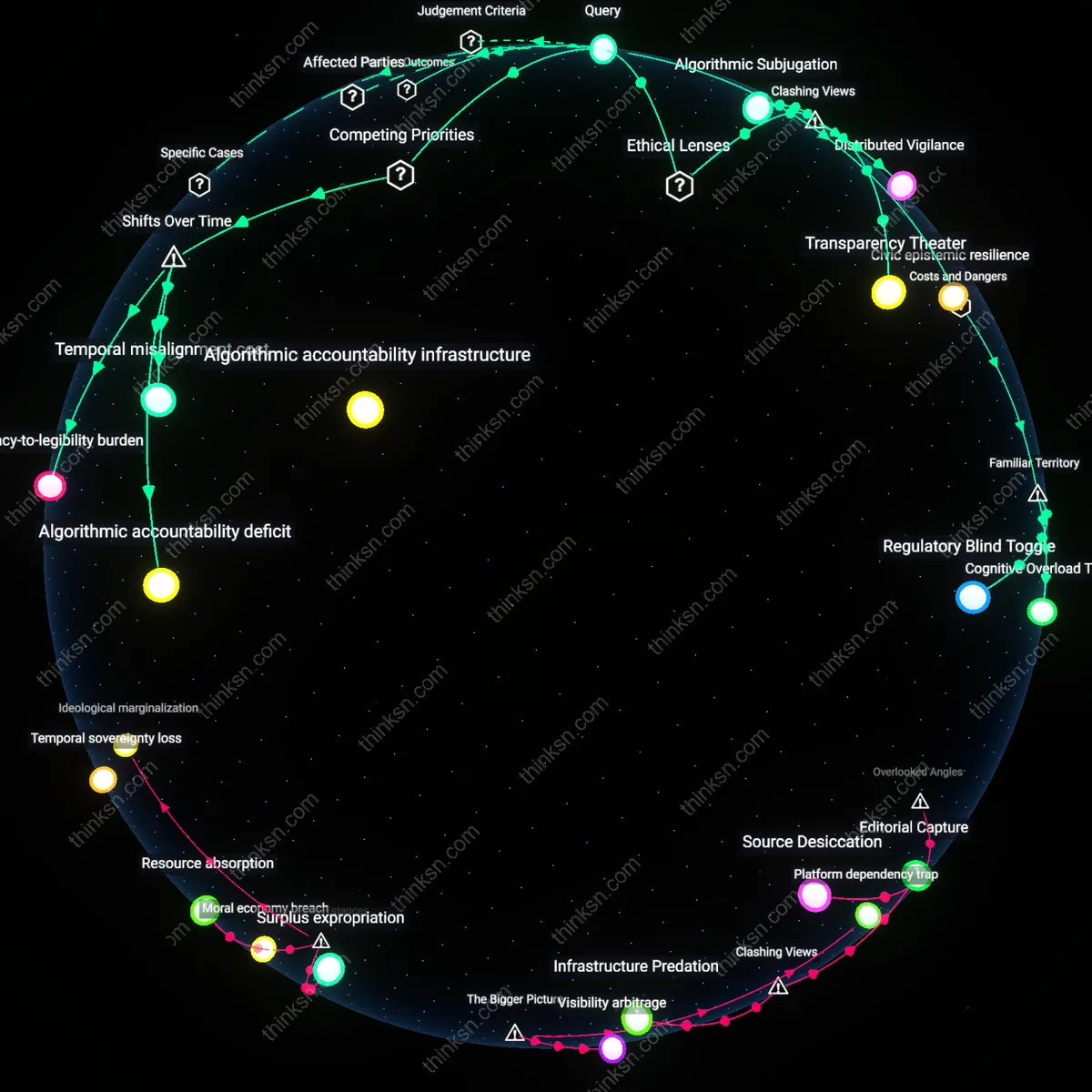

Algorithmic Rent Extraction

A small news outlet can preserve editorial independence by refusing to treat algorithmic platforms as primary distribution channels and instead treating them as secondary amplifiers of a self-owned audience base. This shift forces outlets to prioritize direct reader relationships through email newsletters or membership models, thereby reducing dependency on algorithmic whims; the real mechanism is not user acquisition but revenue decoupling from platform-mediated traffic. The non-obvious insight is that dependence on search algorithms functions less like a technical limitation and more like a form of rent extraction, where editorial compliance is the implicit lease payment for audience access—challenging the assumption that reach must be earned through platform alignment.

Editorial Counter-Signaling

Small news outlets can reclaim autonomy by deliberately producing content that performs poorly under dominant search algorithms but strengthens trust among niche, high-engagement audiences. By optimizing for semantic ambiguity, local context, or investigative depth—features that evade algorithmic classification—outlets create a signaling deficit for platforms while reinforcing editorial credibility with readers; this operates through a mechanism of strategic incomprehensibility. This approach disrupts the intuitive belief that visibility must be maximized algorithmically, revealing instead that obscurity can be weaponized as a marker of authenticity.

Infrastructure Refusal

The most effective way for a small outlet to maintain independence is to refuse integration with platform measurement and tracking infrastructures altogether, denying algorithmic systems the behavioral data they use to rank and optimize its content. Without click-tracking pixels, SEO metadata, or social sharing buttons, the content becomes invisible to optimization loops, forcing the outlet to distribute through direct or offline channels such as newsletters or community hubs. This act of infrastructural sabotage reveals that algorithmic dependence is not a content issue but a data extraction condition, which challenges the widespread assumption that visibility is inherently desirable or necessary for survival.

Platform-Specific Format Diversification

A small news outlet can reduce dependence on a single search algorithm by producing content in platform-specific formats that bypass algorithmic gatekeeping on any one channel. One example is the Houston Defender, which shifted from Google-dependent SEO to TikTok-native video explainers and WhatsApp newsletter drops targeting local Black communities, using platform-fragmented distribution to achieve measurable reach outside Google’s ecosystem. This strategy leverages the differing content valuations across platforms (TikTok rewarding engagement velocity, WhatsApp enabling direct subscriber access, and Google prioritizing domain authority) and thereby distributes audience acquisition risk. The non-obvious insight is that algorithmic dependence is mitigated not by resistance but by strategic pluralism across algorithmic regimes, each with distinct affordances and audience pathways.

Editorial Niche Hardening

A small news outlet can maintain editorial independence by intensifying its commitment to a narrowly defined, community-embedded editorial niche that is immune to algorithmic fluctuations. One example is The City in New York City, which abandoned broad SEO-driven topics in favor of hyperlocal investigative reporting on neighborhood land use and tenant rights, resulting in sustained subscriber growth and platform-agnostic audience loyalty despite Google algorithm updates. This works because algorithmic reach depends on generalizable engagement signals, whereas deep local expertise generates irreplaceable value that compels direct audience retention through email and membership. The overlooked mechanism is that independence is preserved not by avoiding algorithms but by rendering them irrelevant via uncontestable domain authority in a non-routinizable subject area.