Are Journalists Betting on One Search Engine?

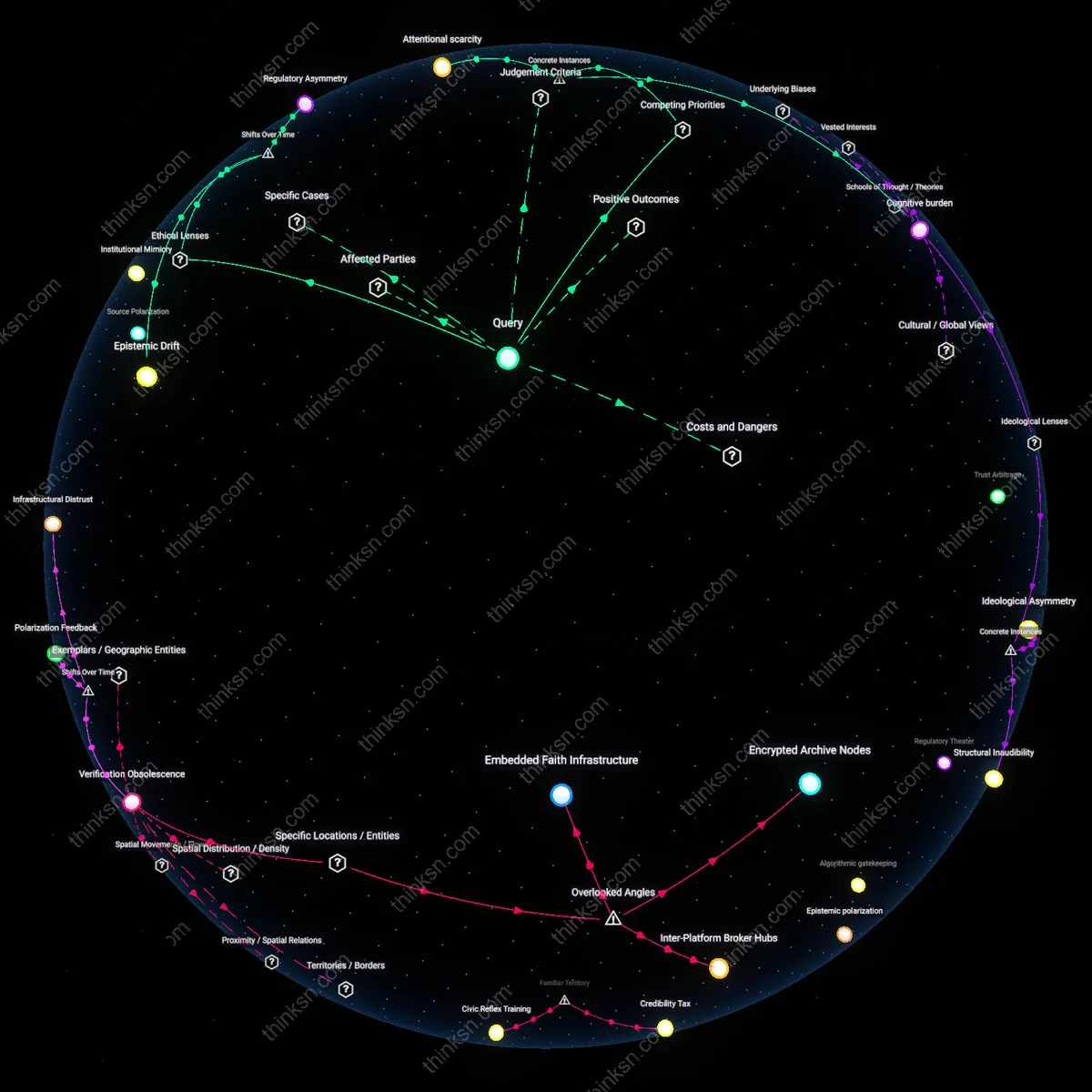

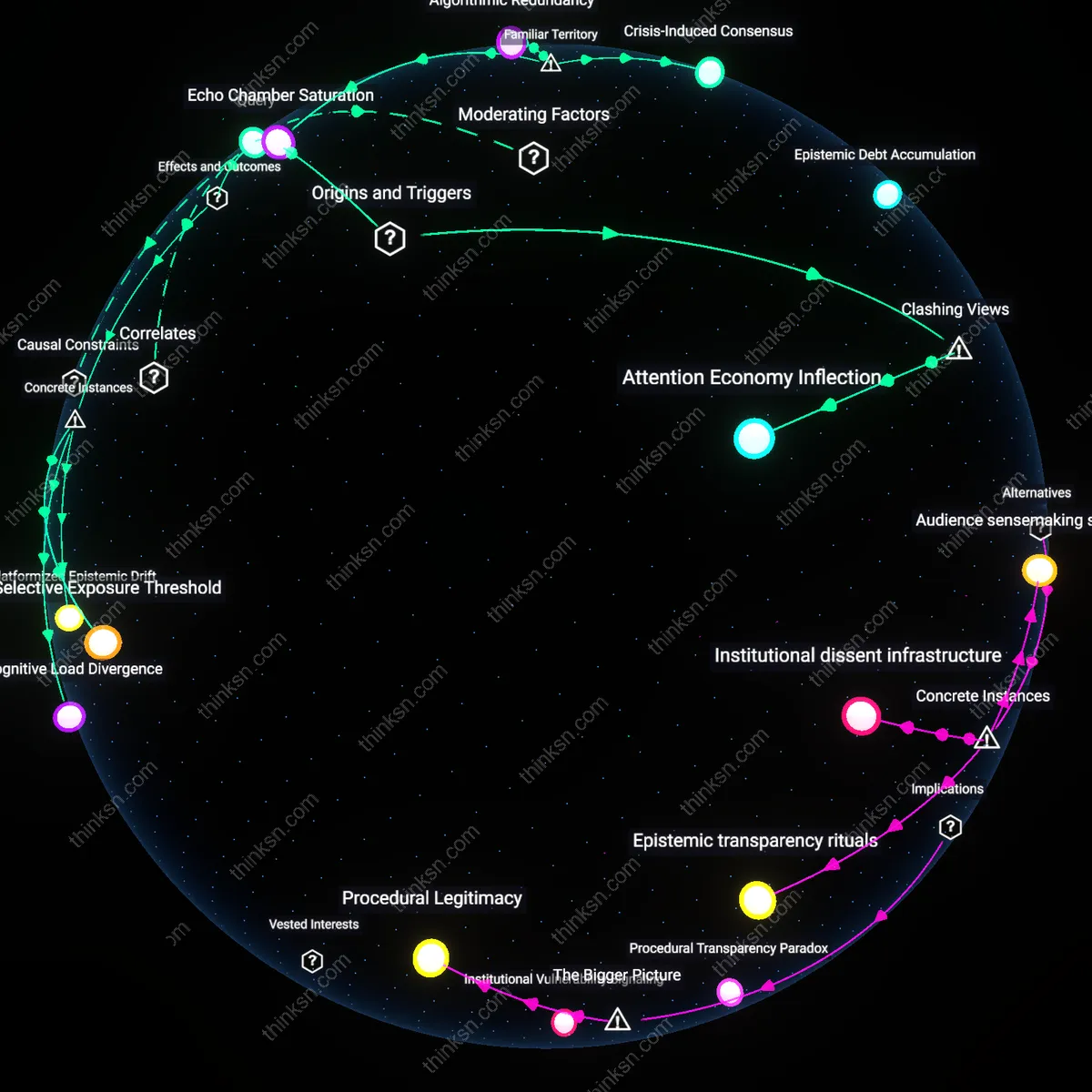

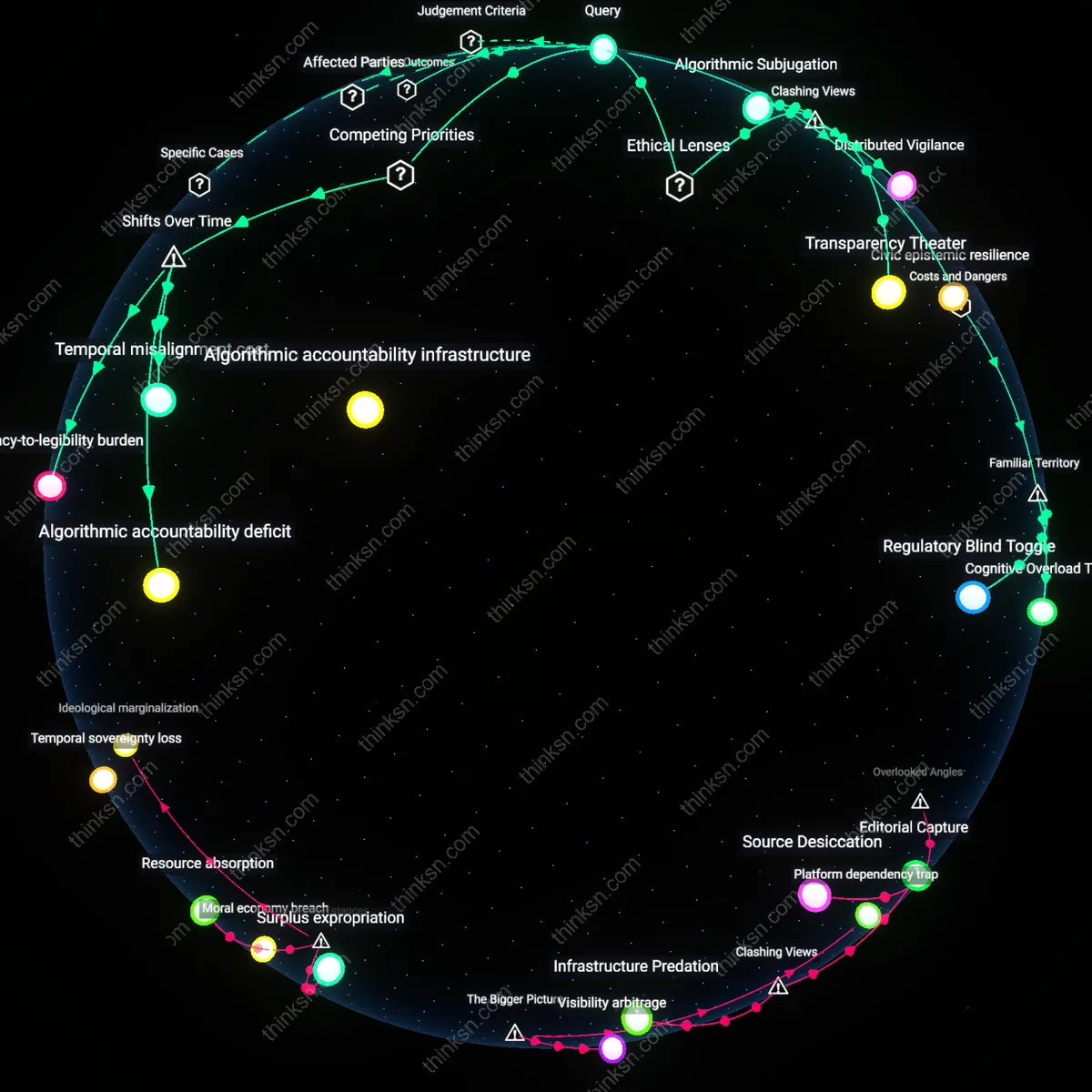

Analysis reveals 16 key thematic connections.

Key Findings

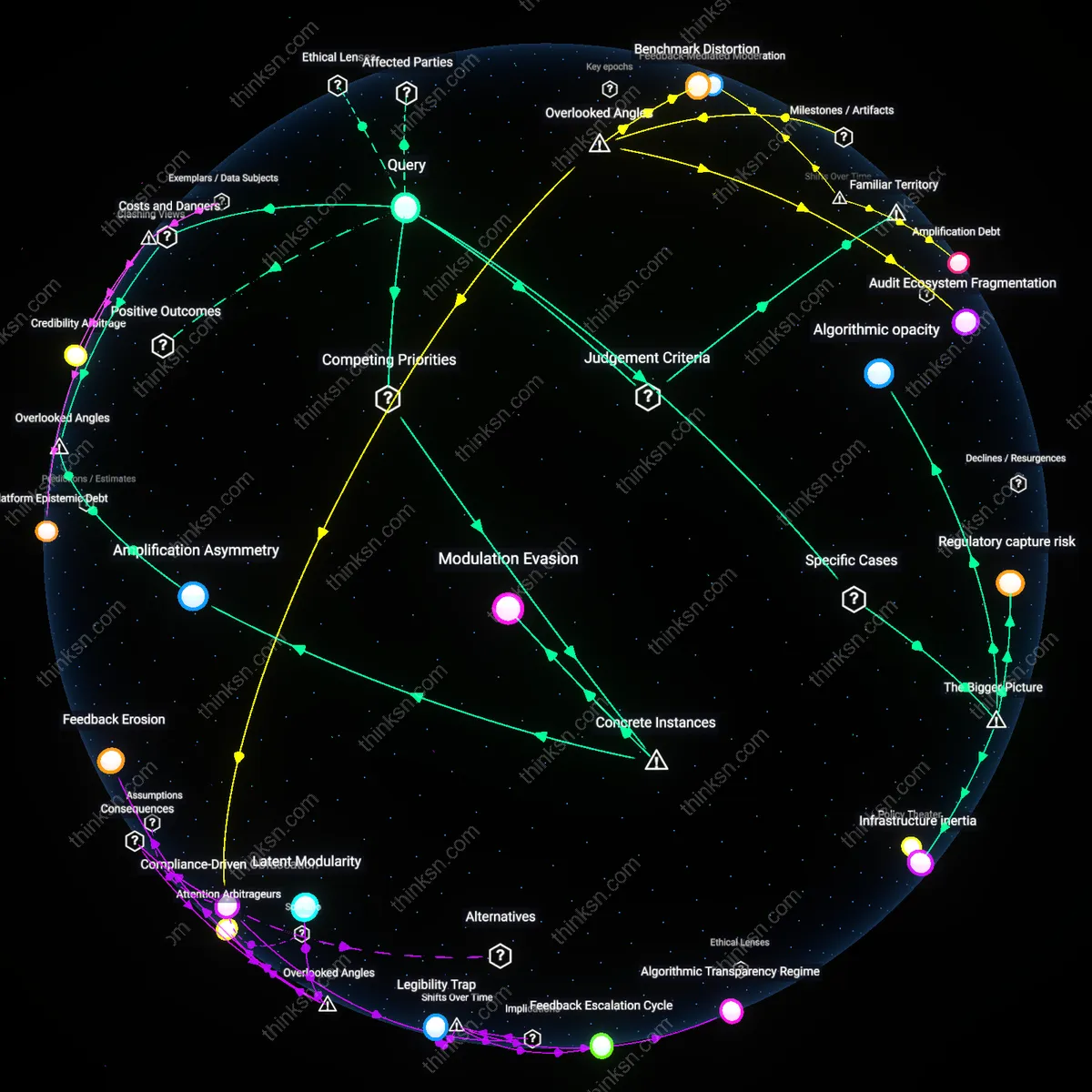

Algorithmic gatekeeping

Journalists can assess the risk of single-search-engine dependency by recognizing that algorithmic gatekeeping now controls access to public discourse, displacing older editorial and institutional filters. This shift, which accelerated after 2010 as Google became the primary referral source for news traffic, transferred responsibility for visibility from newspaper desks to opaque, engagement-driven ranking systems. The mechanism involves search personalization and data feedback loops that systematically deprioritize low-engagement or non-commercial dissenting content. The practical consequence is that journalists may unknowingly treat algorithmic invisibility as epistemic irrelevance. What is underappreciated is that this marks a historical transition from human-mediated judgment of newsworthiness to behaviorally tuned relevance, making bias structural rather than individual.

Epistemic narrowing

The risk of relying on one search engine must be measured against the historical compression of the public knowledge ecosystem that began in the late 2000s, when the decline of library-based research and regional media archives coincided with corporate search dominance. Journalists now navigate an information environment where market logic has replaced pluralistic inquiry as the organizing principle, and where search engines—focused on speed, scale, and monetizable content—exclude non-digital, oral, or marginalized sources that dissenting perspectives often rely on. The shift from polycentric knowledge systems (e.g., public media, university indexes, independent archives) to a singular commercial infrastructure means that the absence of perspectives is masked as neutral retrieval failure. This epistemic narrowing turns algorithmic bias into a silent censor of alternative worldviews, a transformation rarely acknowledged in journalistic practice.

Reputation arbitrage

Journalists face heightened risk when depending on a single search engine because algorithmic ranking now functions as a de facto reputation marketplace, rewarding content aligned with dominant metrics of popularity and penalizing outlier narratives—a dynamic solidified after 2015 with the rise of AI-curated results that amplify ‘trusted’ domains. In this system, dissenting views are marginalized not through overt suppression but through the economic disincentive of low visibility, as outlets conform to SEO norms optimized for Google’s evolving algorithms. The transition from indexing as public service to ranking as competitive positioning means that legitimacy is increasingly derived from algorithmic performance rather than truth or plurality. The underappreciated shift is that journalists now outsource credibility assessment to a system designed for traffic efficiency, not democratic accountability.

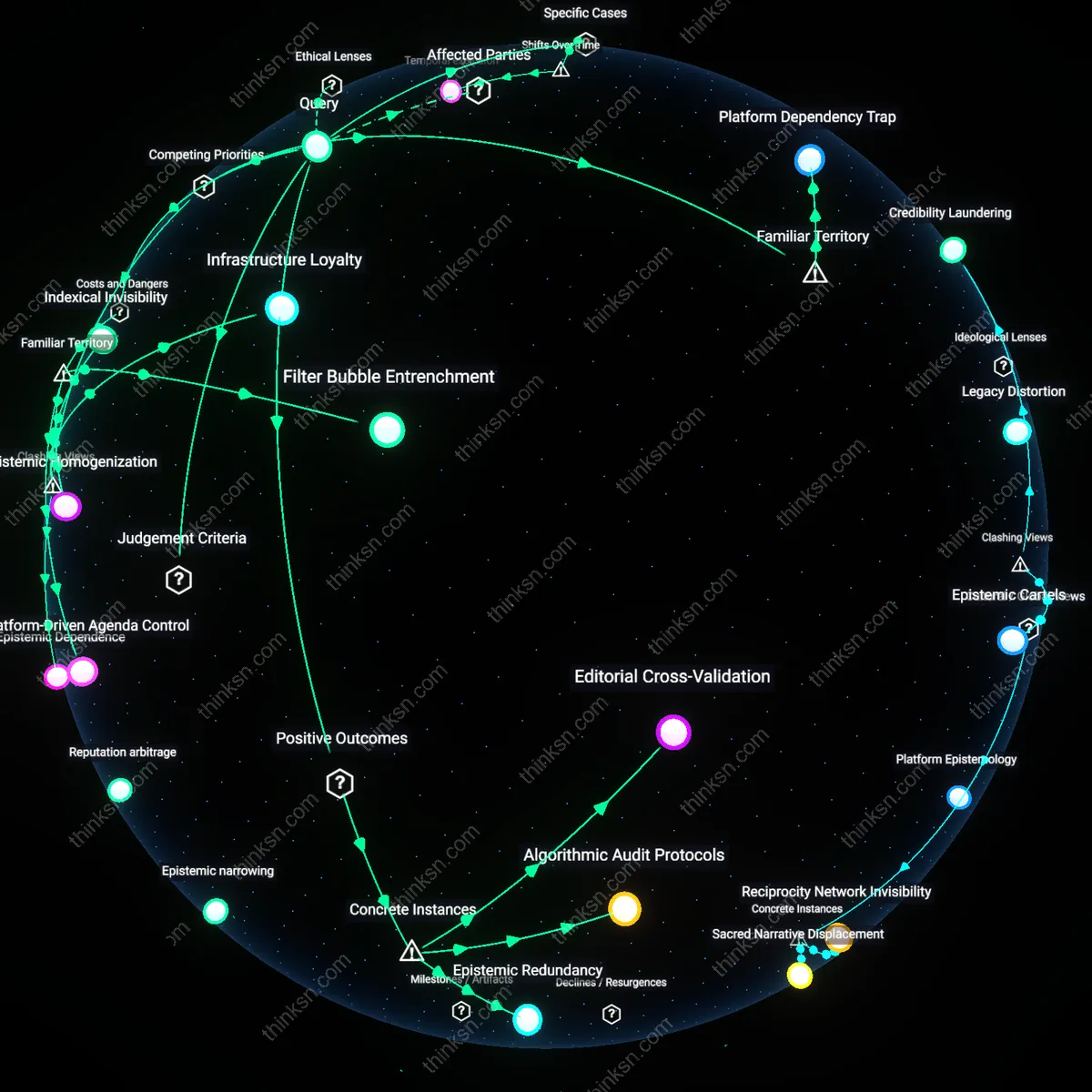

Editorial Cross-Validation

Journalists at The Guardian implemented parallel search queries across Google, DuckDuckGo, and regional archives when investigating the 2019 Hong Kong protests, uncovering suppression of pro-democracy hashtags in Google’s autocomplete and top results. This practice revealed that algorithmic pluralism in content discovery directly mitigates the invisibility of marginalized narratives by exposing differential indexing behaviors across platforms. The non-obvious outcome is that search engine diversity functions as a de facto editorial check, not just a technical preference.
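The parallel-query practice described above can be made measurable. The following is a minimal sketch, assuming the result URLs for one query have already been collected from each engine by hand or by whatever export a newsroom has available; the engine labels, the sample URLs, and the function names `domain` and `pairwise_overlap` are hypothetical and not taken from any outlet's actual workflow. It reduces each result list to a set of domains and reports the Jaccard overlap for every pair of engines, so a low score flags a query where the engines disagree about which sources exist.

```python
from itertools import combinations
from urllib.parse import urlsplit


def domain(url: str) -> str:
    """Return the lowercased host of a URL, without a leading 'www.'."""
    host = urlsplit(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def pairwise_overlap(results: dict[str, list[str]]) -> dict[tuple[str, str], float]:
    """Jaccard overlap of result domains for every pair of engines.

    `results` maps an engine label to the list of result URLs that engine
    returned for the same query. 1.0 means identical domain sets, 0.0 means
    no domain in common.
    """
    domains = {engine: {domain(u) for u in urls} for engine, urls in results.items()}
    overlap = {}
    for a, b in combinations(sorted(domains), 2):
        union = domains[a] | domains[b]
        shared = domains[a] & domains[b]
        # Two empty result lists are treated as identical.
        overlap[(a, b)] = len(shared) / len(union) if union else 1.0
    return overlap


# Hypothetical, hand-collected results for a single query.
sample = {
    "engine_a": ["https://www.example.com/story", "https://news.example.org/report"],
    "engine_b": ["https://example.com/other", "https://archive.example.net/item"],
}

for pair, score in pairwise_overlap(sample).items():
    print(pair, round(score, 2))
```

Comparing at the domain level rather than the URL level is a design choice: it asks whether the engines surface the same sources, not the same pages. A stricter comparison would skip the `domain` step and compare full URLs.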

Algorithmic Audit Protocols

Reporters from ProPublica used reverse-image searches and cache comparisons across Google and Bing during their 2016 investigation into discriminatory real estate ads, discovering that Google’s image indexing algorithm systematically omitted housing listings targeting Black neighborhoods due to engagement-based filtering. This technical discrepancy enabled the team to isolate algorithmic exclusion as a measurable variable in source selection. It suggests that systematic engine comparisons can function as an audit mechanism for detecting structural bias in data ingestion layers.
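One way to treat exclusion as a measurable variable is to log, across many queries, which sources one engine never returns even though another engine does. The sketch below is illustrative only and assumes the per-query results have already been gathered and reduced to source identifiers such as domains; the function name `audit_missing`, the engine labels, and the sample data are hypothetical and do not describe any published audit method.

```python
from collections import Counter


def audit_missing(queries: dict[str, dict[str, set[str]]], engine: str) -> Counter:
    """Count how often each source is absent from `engine` but present elsewhere.

    `queries` maps a query string to a mapping of engine label -> set of
    sources returned for that query. A source is counted once per query in
    which at least one other engine returned it and `engine` did not.
    """
    counts: Counter = Counter()
    for per_engine in queries.values():
        # Everything any *other* engine returned for this query.
        others = set().union(
            *(items for name, items in per_engine.items() if name != engine)
        )
        counts.update(others - per_engine.get(engine, set()))
    return counts


# Hypothetical audit log covering two queries and two engines.
log = {
    "query one": {
        "engine_a": {"a.example", "b.example"},
        "engine_b": {"a.example", "c.example"},
    },
    "query two": {
        "engine_a": {"a.example"},
        "engine_b": {"c.example", "d.example"},
    },
}

for source, n in audit_missing(log, "engine_a").most_common():
    print(source, n)
```

A source that is missing once may be noise. A source that is missing across most queries in the log is a candidate for manual follow-up, which is the point at which editorial judgment takes over from the tally.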

Epistemic Redundancy

During coverage of the 2013 Gezi Park protests in Turkey, where Google Trends data misrepresented grassroots sentiment due to state-coordinated search manipulation, independent journalists from Bianet cross-referenced social media APIs, peer-to-peer networks, and library digital archives to reconstruct protest timelines. Their work demonstrated that reliance on alternative indexing ecosystems preserved access to suppressed perspectives. The non-obvious insight was that decentralized information networks provide epistemic resilience not through superior accuracy but through structural independence from dominant platform logic.

Filter Bubble Entrenchment

Journalists risk normalizing skewed narratives by relying on a single search engine because its algorithm prioritizes engagement-driven content, amplifying mainstream or emotionally charged information while suppressing dissenting voices that don’t fit established user behavior patterns. This creates a feedback loop in which investigative leads from marginalized communities or opposition groups become systematically less visible. The mechanism operates through platform-specific personalization filters trained on aggregate user data, meaning even neutral searches return results shaped by what similar users engaged with, not by editorial balance or diversity of thought. Most journalists recognize the 'echo chamber' effect intuitively but underestimate how deeply infrastructural dependence on one indexing system codifies this bias into routine newsgathering, making exclusion appear as organic absence rather than algorithmic suppression.

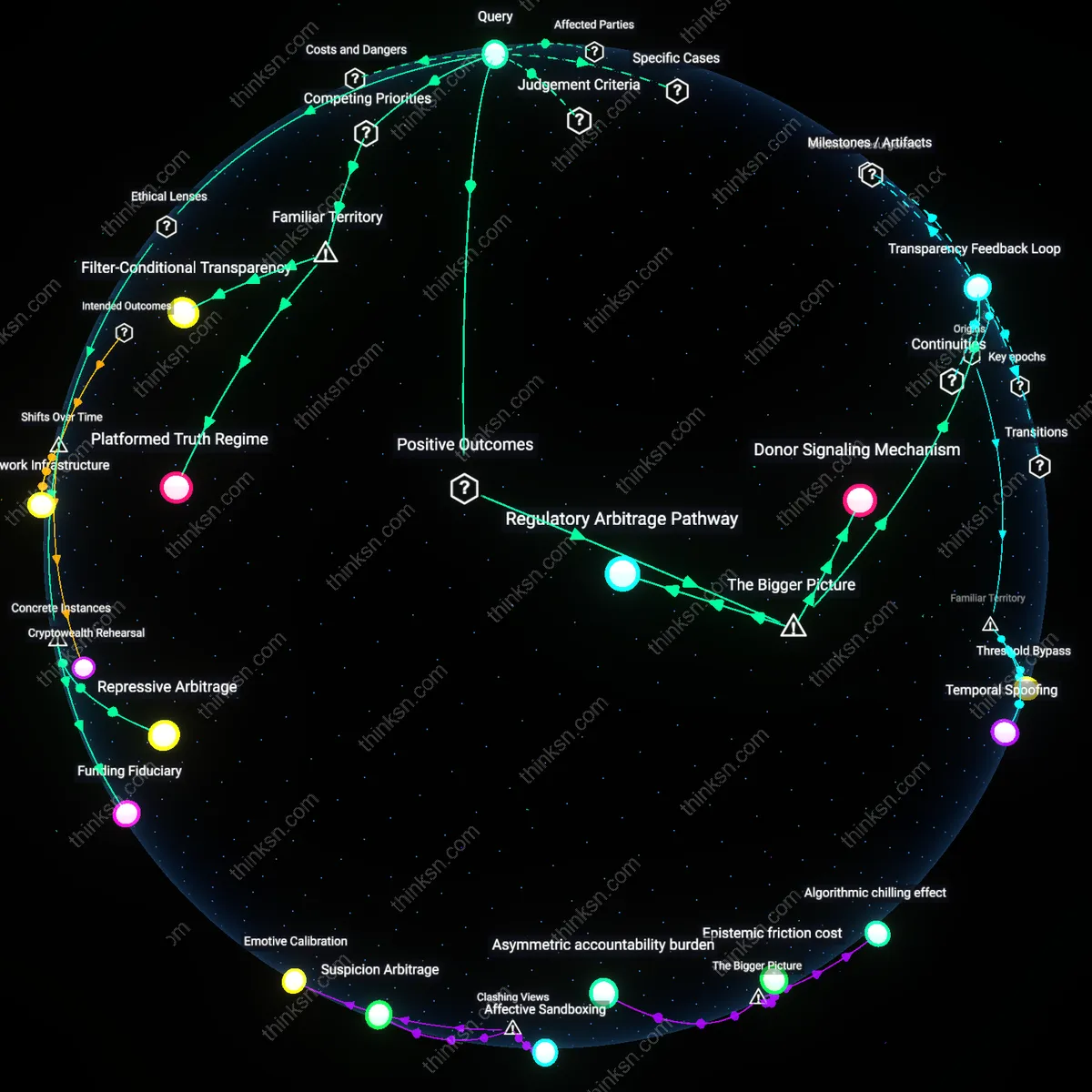

Platform-Driven Agenda Control

Dependence on one dominant search engine allows that platform’s operational priorities to implicitly set news agendas, as journalistic discovery becomes channeled through its ranking logic, which favors content from domains with high inbound-link authority—typically large institutions or legacy media—over grassroots or independent sources. Because search visibility determines which stories appear as viable leads, journalists unknowingly outsource agenda-setting to an entity optimizing for ad revenue and regulatory compliance, not democratic discourse. While it's common knowledge that platforms influence public attention, the underappreciated risk is that search dependency embeds corporate content policies directly into the story-generation pipeline, transforming algorithmic moderation into invisible editorial gatekeeping.

Epistemic Homogenization

When journalists across outlets use the same search engine for research, they converge on identical source pools and narrative frames, producing a uniformity of coverage that mimics pluralism but lacks genuine viewpoint diversity, especially when algorithmic rankings demote non-English, regionally hosted, or low-traffic sites expressing dissent. This homogenization emerges not from deliberate censorship but from synchronized reliance on a single discovery infrastructure whose ranking criteria reward conformity to dominant discourse patterns. Though media echo chambers are a familiar concern, the systemic cost of using homogenous tools—rather than diverse methodologies—is rarely acknowledged as a structural threat to epistemic resilience in journalism itself.

Search Gatekeeping

Journalists risk delegitimizing dissenting perspectives by relying on a single search engine because dominant platforms like Google prioritize content based on engagement and authority signals that systematically favor institutional sources, collapsing pluralism into algorithmically smoothed consensus. This mechanism privileges established media outlets and SEO-optimized actors while sidelining grassroots or counter-narrative producers, making visibility a function of algorithmic compliance rather than newsworthiness. The underappreciated reality is that what appears to be neutral discovery is actually editorial curation by proxy, one that most journalists accept as infrastructure but that functions as gatekeeping.

Platform Dependency Trap

Journalists cannot meaningfully audit or correct bias when their discovery workflow is locked into one search engine’s proprietary indexing and ranking logic, as with Google’s opaque ranking signals, including PageRank and BERT-derived relevance models, which can treat political dissidence as noise. This dependency creates a zero-sum trade-off between research efficiency and epistemic diversity, where speed and convenience displace deliberate pluralism in sourcing. The intuitive assumption that search engines are just tools obscures their role as invisible editors, leading journalists to mistake ease of access for comprehensiveness and thereby normalize blind spots as baseline coverage.

Epistemic Dependence

Journalists’ reliance on a single search engine entrenches epistemic dependence, where the capacity to identify credible dissenting perspectives is silently governed by proprietary ranking logic rather than editorial judgment. Google’s dominance in indexing public discourse means that its algorithmic norms, optimized for engagement, recency, and platform-specific signals, actively deprioritize content from marginalized or adversarial sources, even when such content meets journalistic thresholds of relevance. This creates a zero-sum trade-off between efficiency in content discovery and the diversity of viewpoints accessible to newsrooms, particularly those whose limited resources push them to skip manual sourcing. The non-obvious outcome is that editorial autonomy deteriorates not through overt censorship but via routinized adoption of algorithmically sanitized inputs.

Indexical Invisibility

Assessing risk requires recognizing that algorithmic bias does not merely distort visibility—it produces indexical invisibility, wherein dissenting perspectives are excluded from search results not due to low quality or obscurity but because they fail to conform to behavioral patterns that search engines reward. Platforms like Google prioritize content that generates sustained interaction, privileging formats and domains optimized for virality and data feedback loops, which marginal outlets criticizing power structures often lack the infrastructure to produce. Consequently, journalists depending on these systems mistake absence in search for absence in reality, conflating discoverability with legitimacy. This challenges the intuitive assumption that search engines act as neutral mirrors of public discourse, revealing instead how their technical architecture performs quiet epistemic gatekeeping.

Infrastructure Loyalty

The risk lies not in intentional bias but in the structural capture of journalistic practice by search infrastructure, which compels newsrooms to conform to algorithmic expectations to remain discoverable, an inversion of editorial independence described here as infrastructure loyalty. As news outlets increasingly craft content to rank well in Google Search, including headline formatting, keyword use, and publishing cadence, they simultaneously calibrate their epistemic priorities to those of the platform, reducing the likelihood they will surface or produce dissenting narratives that resist search optimization. This creates a feedback loop where journalistic assessment of perspective diversity becomes limited to what the ecosystem already validates, making resistance indistinguishable from irrelevance. The overlooked insight is that self-censorship emerges not from political pressure but from logistical integration into a dominant technical system.

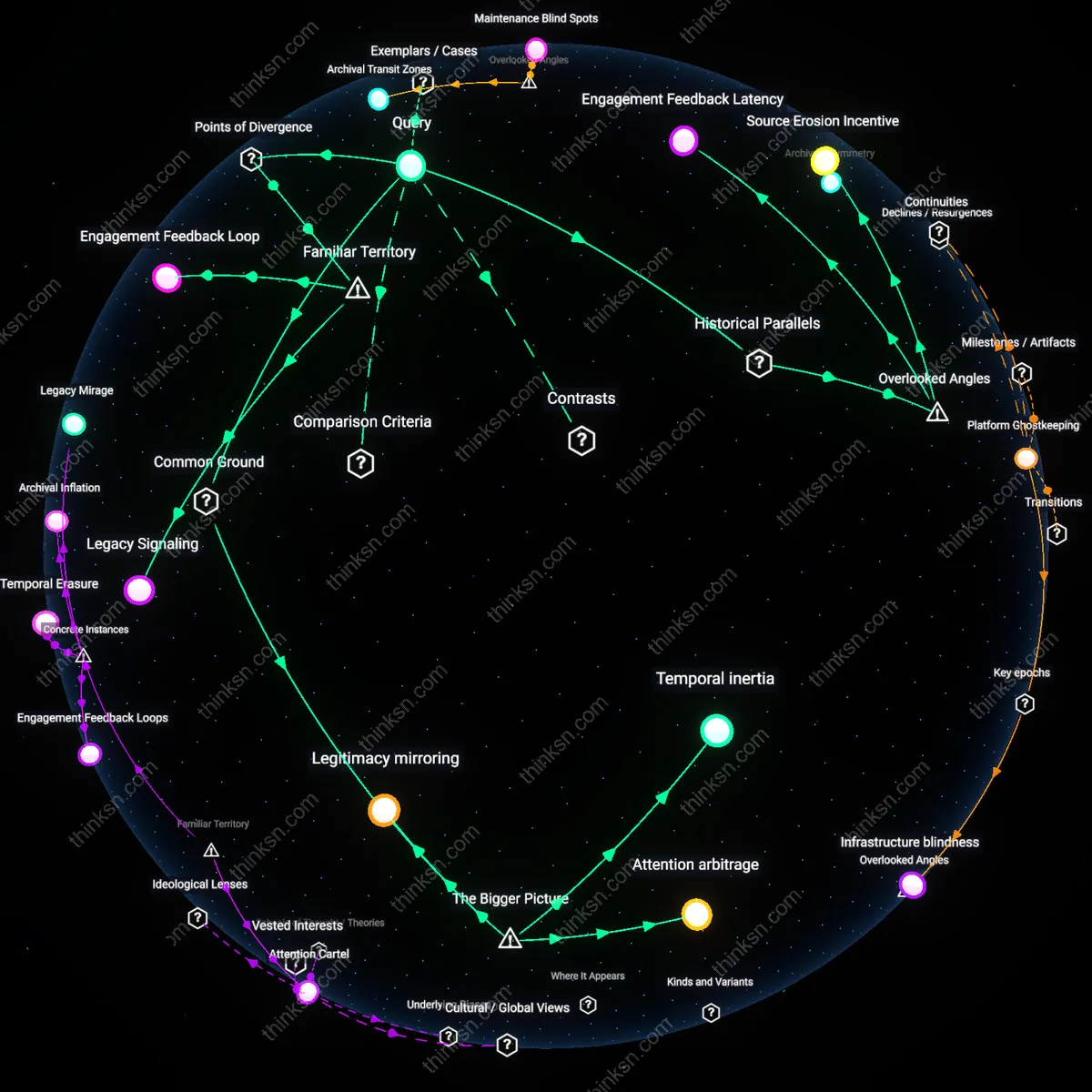

Temporal exclusion

Journalists can evaluate dependence risks by considering a parallel case from social distribution: post-2016 changes to Facebook's news feed algorithm, which began suppressing content from independent media like ProPublica and The Intercept, created a time-based asymmetry in visibility in which dissenting perspectives emerged too late to influence public debate. As engagement-driven ranking delayed the spread of investigative reports relative to viral misinformation, the temporal window for corrective narratives shrank, transforming algorithmic sequencing into a mechanism of epistemic delay. This shift, from viewing search as a static index to recognizing it as a dynamic scheduler of relevance, reveals that the core risk is not omission but the systematic postponement of dissent, which undermines its political utility even when it is eventually surfaced.

Ecological dependence

The reliance on a single search engine can be assessed through the collapse of digital pluralism at outlets like The Guardian following Google’s 2019 BERT update, which altered semantic interpretation in ways that favored technocratic and centrist language over radical or intersectional framing used by journalists covering racial justice. As the update recalibrated 'contextual understanding' around majority discourse patterns, stories employing terms like 'defund the police' were algorithmically isolated despite editorial intent, exposing how search dependence eroded the epistemic ecology of journalism itself. This transition—from pluralistic content distribution to a homogenized linguistic economy governed by a single platform’s interpretation model—demonstrates that the central risk is not bias in isolation but the erosion of conceptual diversity necessary for democratic contestation.