Is Your Privacy Worth Trading for Free Search Engine Convenience?

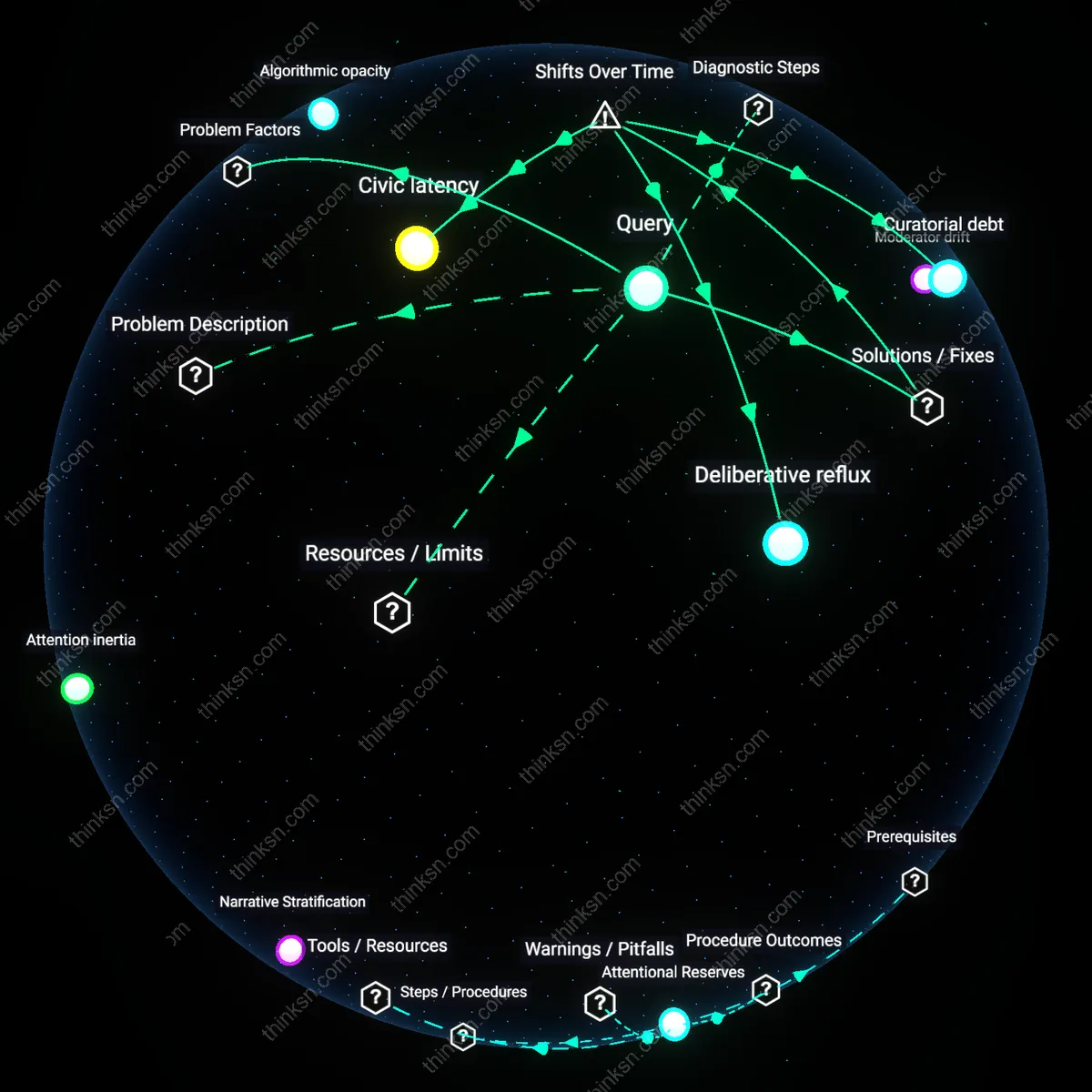

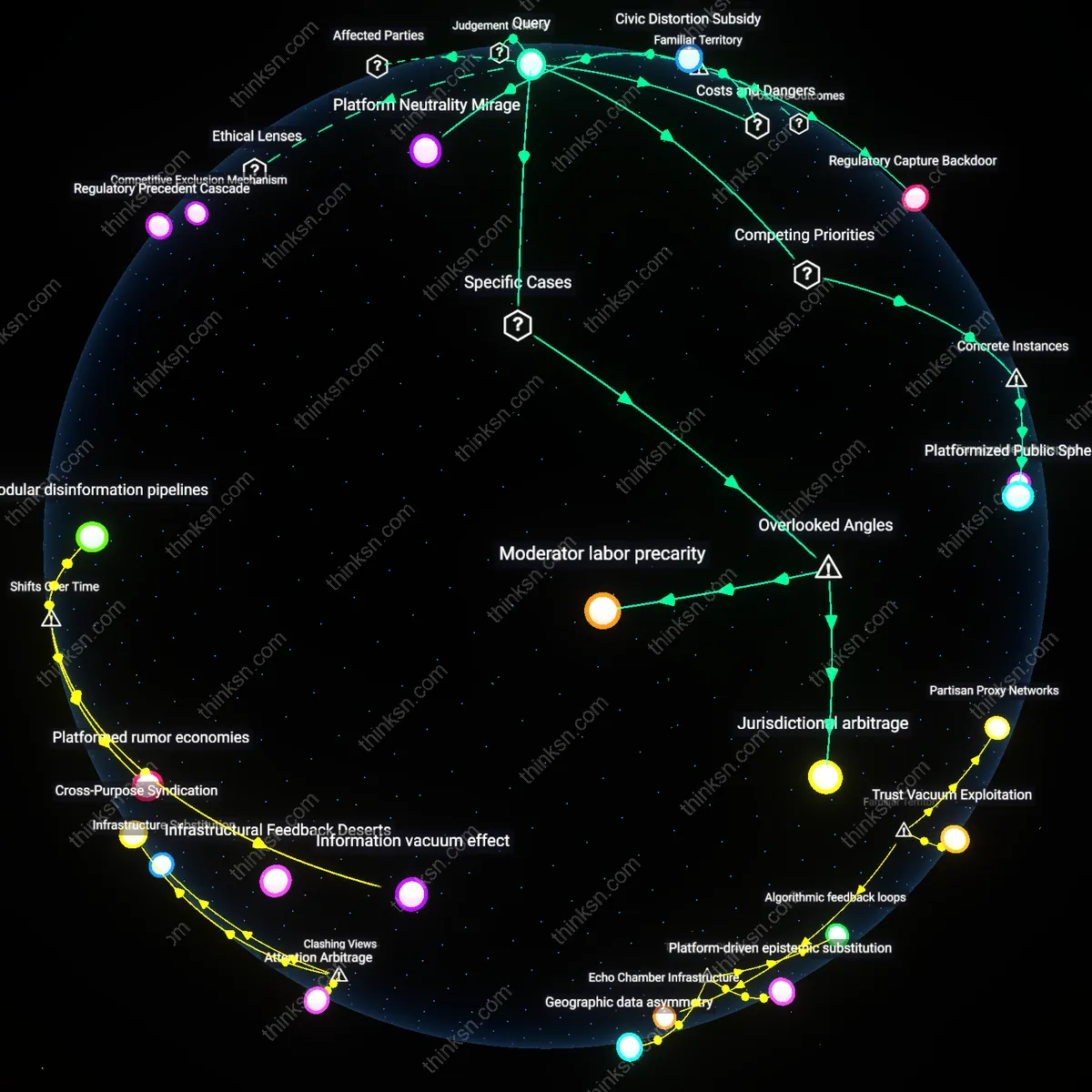

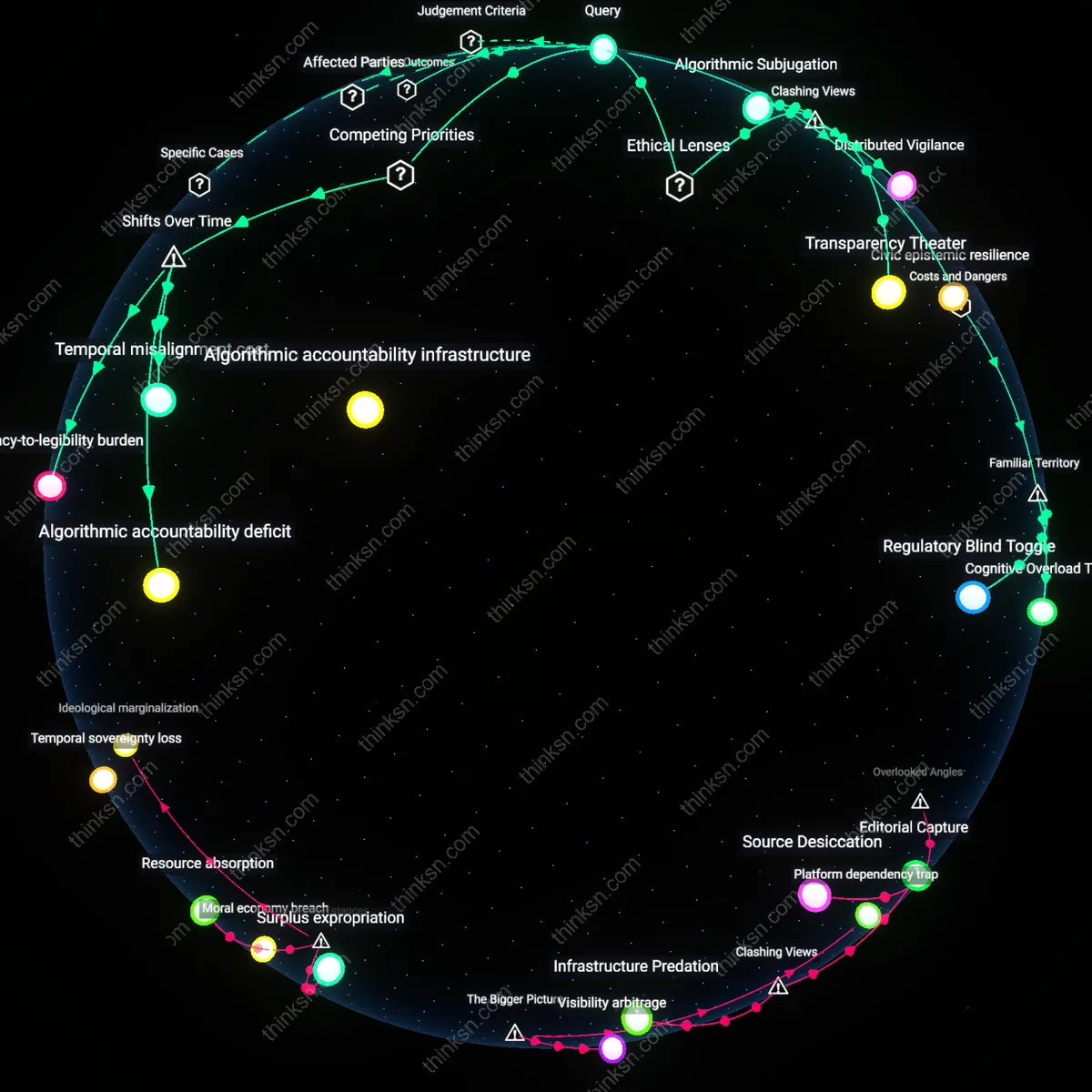

Analysis reveals 9 key thematic connections.

Key Findings

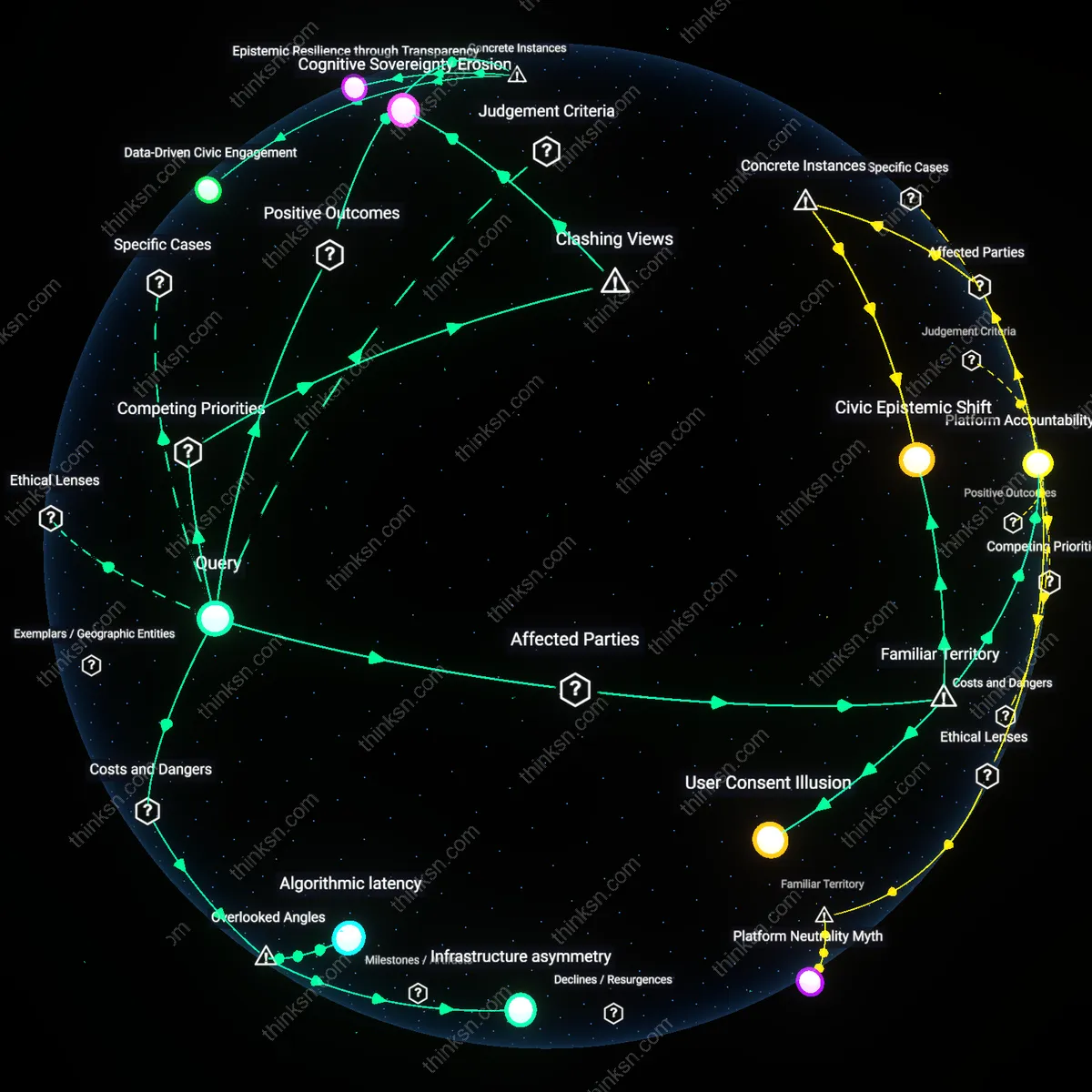

User Consent Illusion

Individuals believe they retain control over their personal data when using free search engines because they encounter consent prompts during sign-up or browsing. These interfaces suggest informed choice, but data aggregation, behavioral profiling, and real-time political targeting operate far beyond what users can practically understand or influence, especially given opaque terms of service and asymmetric power in digital markets. The non-obvious reality is that the illusion of consent masks systemic surveillance infrastructures in which members of the public are treated less as users than as data sources feeding predictive models used in electoral influence campaigns.

Platform Accountability Gap

Technology companies like Google and Microsoft bear responsibility for enabling data exploitation through their search platforms, yet they are not classified or regulated as political actors despite their systems enabling microtargeted disinformation during elections. Their profit model relies on behavioral tracking and ad-based revenue, which creates an inherent conflict between democratic integrity and shareholder returns. The underappreciated condition is that the legal and institutional frameworks governing media and elections still treat platforms as neutral tools, allowing them to avoid liability for downstream political manipulation fueled by their data ecosystems.

Civic Epistemic Shift

Democracies increasingly depend on digital infrastructures where search engines shape public understanding by prioritizing certain information flows, often amplifying polarizing or sensational content due to engagement-driven algorithms. Citizens, political parties, and even intelligence agencies now operate within a terrain where belief formation is indirectly governed by private corporate systems trained on mass behavioral data. The overlooked consequence is that the very basis of shared reality—long taken for granted in democratic discourse—has become a byproduct of commercial data extraction, making political manipulation not an anomaly but a structural possibility embedded in everyday search behavior.

Data-Driven Civic Engagement

The use of personalized search data by Google during the 2008 Obama campaign enabled hyper-targeted voter outreach that increased youth registration and turnout in swing states. By analyzing search behavior patterns, campaign strategists identified information gaps and tailored messaging to address specific concerns about policy, registration, and voting logistics. This mechanism transformed passive data collection into a civic participation engine, revealing how commercial data infrastructures can be repurposed for democratic mobilization when aligned with public interest goals. The non-obvious insight is that mass data aggregation, often criticized for manipulation risks, can simultaneously serve as a civic asset when governance norms and transparency are prioritized.

Epistemic Resilience through Transparency

After the 2016 U.S. election, Google expanded its Transparency Report, which predates that election, to cover political advertising, and its ‘Elections Hub’ provided visibility into search trends, ad funding, and political content moderation during electoral cycles in democracies like India and Brazil. This allowed journalists, researchers, and civil society to independently verify the spread of misinformation and track state-linked disinformation campaigns. The system turned proprietary user data into a public accountability tool, demonstrating that search platforms can counter political manipulation by exposing their own data flows. The underappreciated outcome is that data retention, when accompanied by public-facing transparency mechanisms, can strengthen collective epistemic defenses rather than erode them.

Behavioral Insight for Public Health

During the 2009 H1N1 pandemic, Google Flu Trends used anonymized, aggregated search queries to estimate regional flu activity faster than traditional CDC surveillance systems, which lagged because they depended on clinical reporting. The system tracked spikes in symptom-related searches, such as 'flu symptoms' or 'fever remedy', to map infection rates in near real time, enabling local governments to allocate medical resources more efficiently. Although the model was later revised after it overestimated flu prevalence, and Google stopped publishing its estimates in 2015, the initiative showed that aggregated user data from free search engines can generate early-warning public health intelligence. The core insight often missed in privacy debates is that ambient data collection can yield societal-scale early-response capabilities when ethically anonymized and operationally constrained.
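To make the idea of "spikes in symptom-related searches" concrete, here is a minimal sketch of spike detection over weekly query counts. It uses a simple trailing-baseline rule and is not the model Google Flu Trends actually used; the function name `find_spikes`, the four-week baseline, the threshold, and the sample counts are all illustrative assumptions.

```python
from statistics import mean, stdev


def find_spikes(weekly_counts, baseline_weeks=4, threshold=2.0):
    """Return indices of weeks whose count sits more than `threshold`
    sample standard deviations above the mean of the preceding
    `baseline_weeks` weeks. All parameters are hypothetical defaults."""
    spikes = []
    for i in range(baseline_weeks, len(weekly_counts)):
        baseline = weekly_counts[i - baseline_weeks:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # Flat baseline: treat any increase as a spike.
            if weekly_counts[i] > mu:
                spikes.append(i)
            continue
        if (weekly_counts[i] - mu) / sigma > threshold:
            spikes.append(i)
    return spikes


# Made-up weekly counts of an aggregated query such as "flu symptoms".
counts = [100, 104, 98, 102, 101, 180, 240, 110]
print(find_spikes(counts))  # prints [5, 6]
```

Note that the rule only ever sees aggregate counts per week, never individual queries, which is the kind of operational constraint the paragraph above has in mind.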

Infrastructure Asymmetry

Free search engines entrench a structural dependency where democratic institutions lack parity in data access compared to private platforms, weakening their capacity to anticipate or respond to manipulation. Governments attempting to monitor disinformation or protect electoral integrity operate without real-time behavioral datasets that companies like Google collect at scale, creating a feedback void in public oversight. This asymmetry is rarely acknowledged in ethics debates, which focus on corporate abuse rather than systemic blindness in governance—yet it explains why regulatory responses are chronically reactive. The residual imbalance isn't just about privacy violations; it's about the state being systematically deprived of the very intelligence needed to defend collective self-determination.

Algorithmic Latency

Political manipulation via search data often emerges not from immediate exploitation but from the delayed reuse of behavioral patterns years after collection, when users have no recollection of the original queries or context. Platforms store vast troves of ostensibly mundane searches—on health, relationships, or local events—that later serve as training data to model psychological vulnerabilities during elections or crises. This latency effect escapes conventional privacy frameworks, which assume harm requires contemporaneous misuse, yet it enables manipulation that is both retroactive and unchallengeable. The overlooked risk is that today’s benign query becomes tomorrow’s weapon, not by intent but by archival persistence and recombinant analysis.

Cognitive Sovereignty Erosion

The ethical peril of data-collecting search engines lies less in surveillance than in the gradual displacement of individual reasoning by algorithmically curated epistemic environments, as seen in the 2016 U.S. election and the 2019 Philippines midterms, where search autocompletion and result ranking subtly reshaped voters’ perception of factual consensus. This mechanism operates through recursive feedback loops: users trust search engines as objective tools, yet their outputs are calibrated to past behavior, which reinforces ideological contours without overt coercion. The dominant framing of 'informed citizens' assumes cognitive autonomy, but evidence indicates that persistent algorithmic shaping undermines the very capacity for independent judgment. The cost of seamless access, in other words, is not privacy lost but self-determination overwritten.