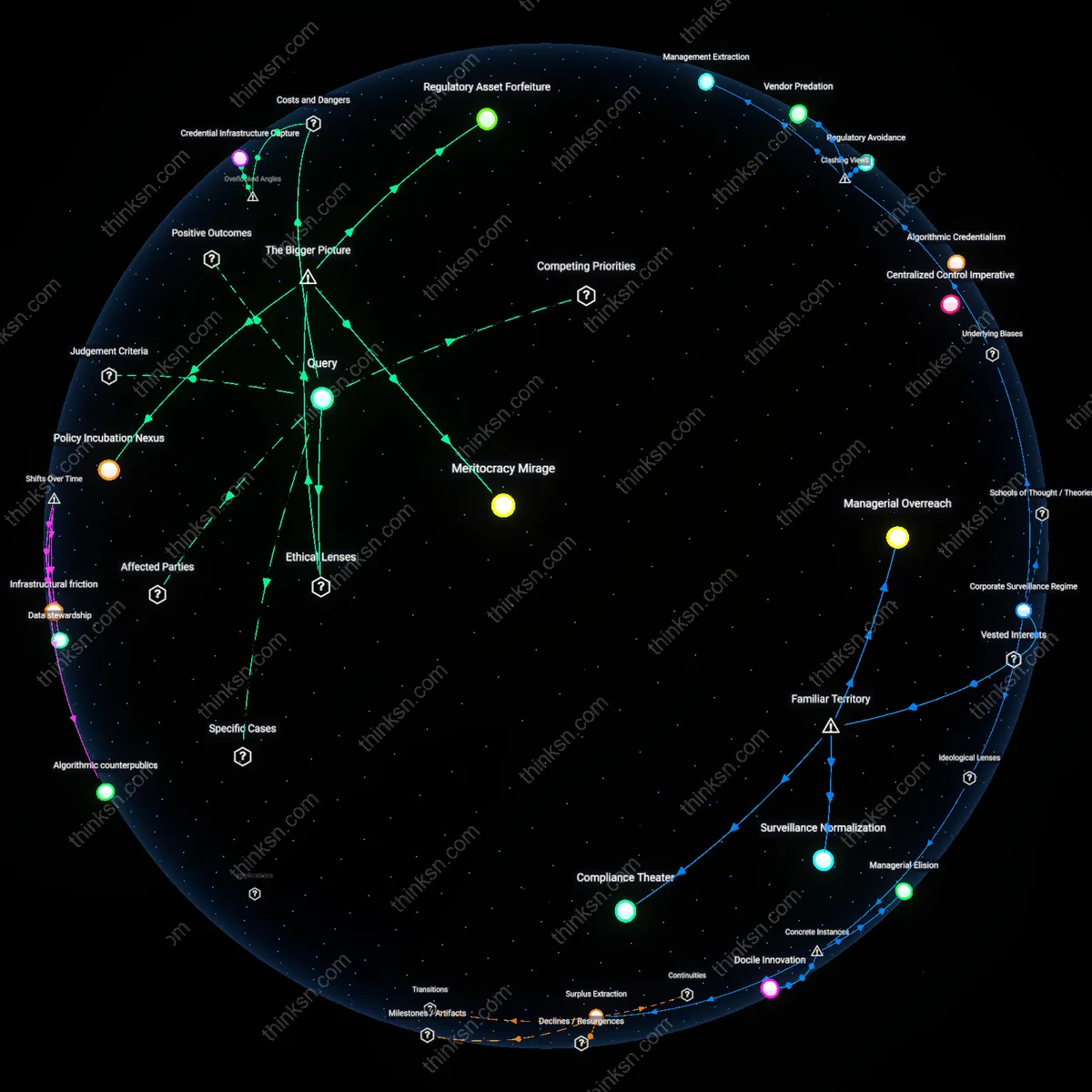

Does AI Workforce Law Help Workers or Corporate Interests?

Analysis reveals 5 key thematic connections.

Key Findings

Credential Infrastructure Capture

U.S. 'AI-ready' workforce legislation enables politically connected private training companies to shape the underlying technical standards of digital credentialing systems, thereby locking in long-term revenue streams while obscuring worker outcomes. These firms influence the design of interoperable badge systems, blockchain-based certifications, and API structures that determine how skills are defined and verified—creating a de facto monopoly on validation that benefits vendors, not workers. This dimension is overlooked because scrutiny focuses on program efficacy or funding allocation, not the governance of the digital infrastructure that determines which skills 'count' and who certifies them. The non-obvious mechanism is not fraud or incompetence, but the quiet privatization of credential architecture.

Workforce Data Exhaust Exploitation

AI-ready workforce programs generate vast troves of granular behavioral data—learning patterns, click-through rates, assessment responses—that private contractors collect and retain under ambiguous data rights clauses, enabling them to refine predictive models for profit-driven labor platforms. This data exhaust becomes a strategic asset repurposed for workforce surveillance or algorithmic staffing tools, enriching contractors while workers lose control over how their digital labor traces are used. Most oversight focuses on training quality or job placement rates, ignoring data as a residual output; the overlooked danger is that the legislation functions as a state-subsidized data extraction pipeline masked as upskilling.

Regulatory Asset Forfeiture

Citizens cannot reliably discern whether U.S. 'AI-ready' workforce legislation serves workers because the rulemaking process is structured to prioritize industry-defined 'readiness' metrics over labor outcomes, enabling private training firms with lobbying access to shape accreditation standards. This occurs through the Department of Labor’s reliance on public-private partnerships like Skillful and IBM’s P-TECH, where curriculum benchmarks are co-developed by corporations that profit from certification mandates. The non-obvious consequence is that worker interests are procedurally submerged beneath neutral-sounding administrative criteria, making exploitation appear as inclusion.

Meritocracy Mirage

Citizens are systematically misled about whose interests workforce legislation serves because the discourse is framed through neoliberal human capital theory, which equates worker 'upskilling' with economic justice while ignoring structural unemployment driven by automation. This ideological framing, embedded in legislative narratives from the CHIPS and Science Act to state-level AI task forces, rationalizes public funding of private training platforms whose business models depend on perpetual reskilling. The underappreciated reality is that meritocratic language functions as a legitimation mechanism for redistributing public wealth to edtech firms under the guise of equity.

Policy Incubation Nexus

Workers’ interests are structurally invisible in 'AI-ready' legislation due to the geographic and institutional alignment between federal workforce grants and privately operated innovation hubs like those in the National Network for Regional Innovation, where proposals are evaluated by actors embedded in venture capital and tech philanthropy. These intermediaries—such as the Brookings Institution's Metro Program or MITRE Corporation—operate as policy incubators that preselect 'viable' training models, excluding union-led or community-based alternatives. The unnoticed consequence is that political connection is institutionalized not through bribery or corruption, but through epistemic authority over what counts as 'innovative' worker development.

Deeper Analysis

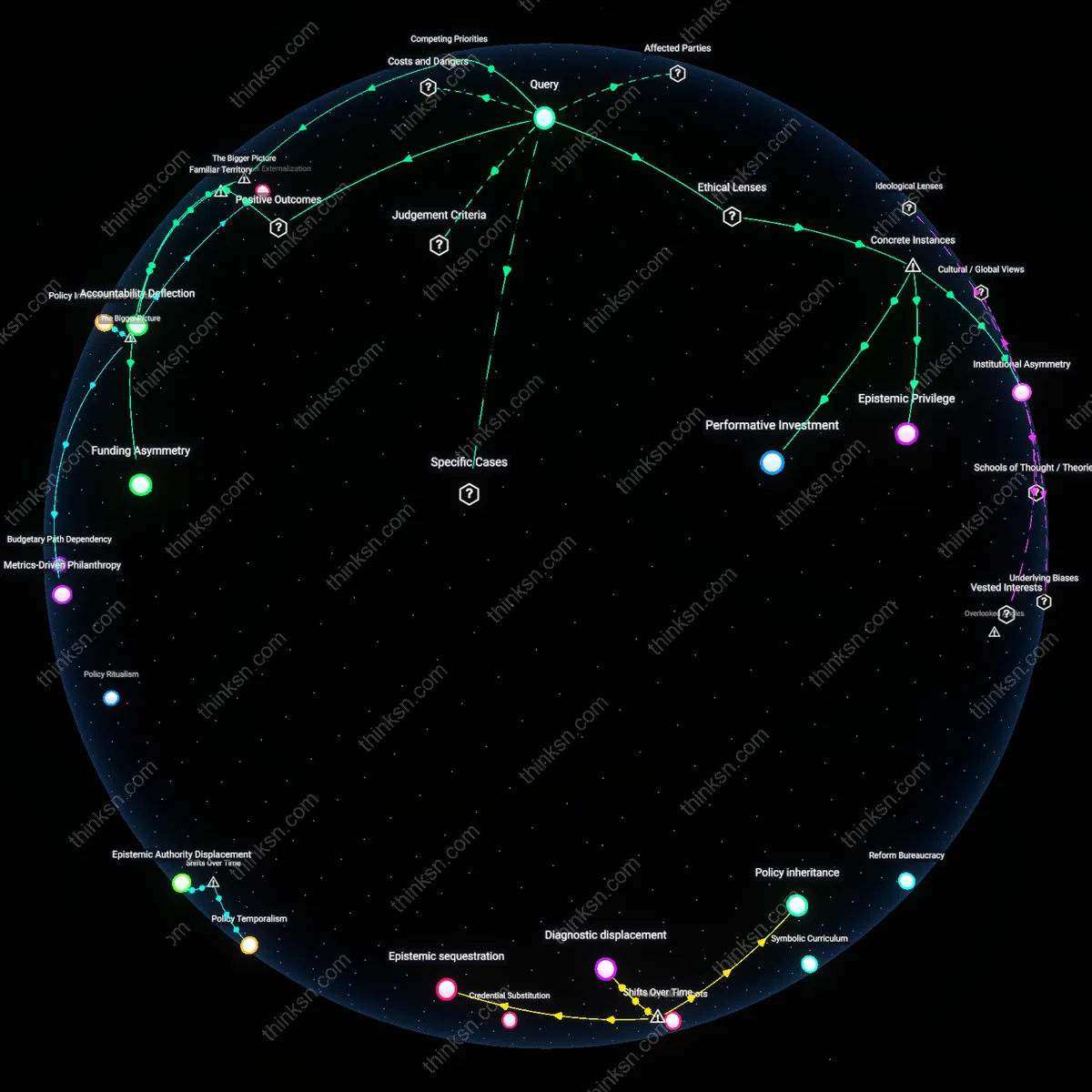

Who benefits when worker data from AI training programs gets turned into tools that predict job performance or track employees?

Corporate Surveillance Regime

Employers benefit when worker data from AI training programs is repurposed into performance prediction tools because they gain unprecedented control over labor through continuous behavioral monitoring. This shift allows management to codify productivity into algorithmic benchmarks, enforce compliance via real-time tracking, and preempt dissent by identifying at-risk employees before attrition or protest occurs. The non-obvious consequence within this familiar frame of workplace efficiency is that surveillance becomes a structural feature of employment itself, shifting power decisively toward capital by normalizing the erosion of privacy as a condition of work.

Data Extractive Infrastructure

Technology vendors benefit when worker data is transformed into AI-driven performance tools because they monetize human labor traces through scalable SaaS platforms sold to enterprises. These firms exploit a feedback loop where employee behavior collected during training programs becomes proprietary datasets used to refine predictive models that are then leased back to employers. The underappreciated reality beneath the familiar narrative of innovation is that the infrastructure itself—built by AI companies—extracts value from workers without compensation, turning lived labor experience into assetizable data streams.

Capital Erosion Resistance

Corporations benefit when worker data from AI training programs is repurposed into performance prediction tools because it enables management to preemptively identify and neutralize emergent labor solidarity by individualizing risk assessments. This operates through HR analytics systems that flag behavioral patterns associated with dissent or organizing, transforming collective workforce dynamics into atomized productivity metrics under the guise of efficiency—thus preserving managerial control. The non-obvious mechanism is not mere surveillance, but the systematic erosion of workers’ capacity to build trust and organize by rendering solidarity statistically anomalous, which reinforces capital’s structural resistance to labor’s power recomposition.

Technocratic Legitimacy Surplus

AI developers and consulting firms benefit when employee data is converted into performance-tracking tools because they position themselves as neutral arbiters of organizational efficiency, thereby expanding their market reach into internal corporate governance. This is enabled by a demand among executives for data-driven decision-making that appears objective, which licenses third-party vendors to embed their algorithms deep within HR infrastructures. The underappreciated dynamic is how these actors generate a surplus of technocratic legitimacy—where algorithmic recommendations are treated as self-evident truths—allowing them to shape workplace norms without formal authority.

Inequity Deferral Mechanism

Shareholders benefit when AI systems use worker training data to predict performance because such tools allow firms to externalize the costs of underperformance onto individual employees while retaining the financial gains from optimized labor allocation. This functions through performance-linked pay adjustments and automated attrition systems that align labor costs with real-time productivity benchmarks, all justified by the AI’s purported accuracy. The overlooked consequence is that systemic inequities—such as biased training data or unequal access to development resources—are deferred rather than resolved, making the tool not a solution but a procedural shield against structural reform.

Corporatized Meritocracy

American tech firms like Amazon benefit when worker data from AI training programs becomes performance prediction tools, as seen in its warehouse monitoring systems that algorithmically track productivity metrics like time-per-task and penalize workers deemed inefficient. This system operates through real-time data extraction and automated disciplinary workflows embedded in operations across U.S. fulfillment centers, reinforcing a cultural ideal of quantified self-improvement while shifting accountability from management to individuals. The underappreciated dynamic is how this reframes labor rights as personal performance failures, masking structural exploitation under the guise of objective evaluation.

State-Directed Obedience

Local governments running China’s social credit pilot programs, in cities like Rongcheng, benefit directly when employee data is repurposed through AI to assess job performance, because corporate workforce analytics are integrated into broader governmental frameworks for social control. Here, private-sector data collection by firms such as Alibaba-linked platforms feeds into regional scorekeeping systems that reward or restrict citizens’ mobility, loans, and employment based on behavioral metrics, including workplace conduct. The non-obvious consequence is the seamless fusion of corporate HR tools with state surveillance, where worker tracking serves not just profit but political stability, reflecting a Confucian-inflected collectivist ethic instrumentalized by the party-state.

Guilt-Laden Surveillance

In Catholic-majority Poland, the adoption of AI performance trackers by Western-owned call centers—such as those operated by Deutsche Telekom in Katowice—benefits local supervisors trained to view employee inefficiency as moral shortcoming, leveraging a cultural association between work ethic and personal virtue rooted in post-socialist religious norms. The mechanism is managerial discretion amplified by algorithmic alerts that flag deviations from script adherence or break times, which are then interpreted through a moral lens of diligence and penance rather than systemic design. This reveals how technocratic tools are silently rewired within workplaces shaped by sacramental views of labor, where data-driven oversight intensifies internalized guilt rather than collective resistance.

Managerial Epistocracy

Corporate executives and HR technocrats benefit as AI systems repurpose worker data into performance prediction tools because these tools extend their oversight capabilities under the legitimizing guise of algorithmic objectivity, a shift that accelerated after 2010 with the rise of people analytics in firms like Google and IBM. What is underappreciated is that this marks a break from mid-20th-century personnel management, where evaluations relied on structured but human-mediated review. Algorithmic outputs now create feedback loops that systematically marginalize worker autonomy and embed managerial judgment within opaque models, producing a new form of authority insulated from appeal.

Data Enclosure Regime

Venture-backed AI startups and cloud infrastructure providers benefit when employee data from training programs is productized into performance analytics because they capture value from labor processes they do not directly manage, a dynamic that crystallized during the platformization of enterprise software between 2015 and 2020. Unlike earlier eras of industrial psychology, when assessments remained internal to firms, today’s modular AI tools treat behavioral data as a tradable asset. Third-party vendors can externalize the costs of data extraction while corporations outsource accountability, revealing a quiet enclosure of workplace behavior into privatized data markets.

Predictive Labor Stack

Global gig platforms such as Uber and Amazon Logistics benefit by integrating AI-driven employee tracking tools trained on aggregated worker data because these systems allow for the real-time modulation of labor supply based on forecasted performance thresholds, a capacity that emerged decisively after 2020 as machine learning models shifted from post-hoc analysis to prescriptive orchestration. What distinguishes this phase from earlier surveillance regimes is not merely intensified monitoring but the temporal compression of feedback: behavioral data from training is no longer descriptive but generative of future work design. Such systems effectively pre-empt dissent or deviation through algorithmic routinization, stabilizing a new infrastructural layer in contingent labor management.

Contractor Precarity

Private staffing agencies benefit when worker data from AI training programs is repurposed into performance prediction tools because they use algorithmic outputs to justify excluding gig and temporary workers from long-term contracts, leveraging ostensibly neutral metrics to mask structural labor segmentation. These agencies operate under contractual obligations to deliver 'high-performing' workers to corporate clients, and AI-generated performance scores provide a defensible rationale for cycling out marginally ranked individuals without triggering labor protections. The non-obvious mechanism lies in how algorithmic classifications are not just used to rank but actively shape eligibility for formal employment pathways—transforming data derived from low-wage workers into tools that reinforce their own exclusion. This dynamic reveals how AI systems function as bureaucratic shields, allowing intermediaries to evade responsibility while maintaining plausible deniability through technical objectivity.

Infrastructure Opacity

Cloud infrastructure providers benefit when worker data is transformed into behavioral prediction tools because the complexity and opacity of their machine learning platforms create vendor lock-in, where firms depend on proprietary data pipelines that obscure how raw inputs become operational outputs. Companies like AWS or Microsoft Azure profit not from the insights generated but from the sustained computational demand of training, retraining, and deploying performance models across global workforces—turning human behavior into a recurrent revenue stream via data processing cycles. What is overlooked is that the financial incentive lies less in the accuracy of predictions than in the continuous consumption of compute resources required to sustain the illusion of progress. This shifts the locus of benefit from decision-makers to the underlying digital architecture, where value is extracted through obscurity and scale rather than transparency or fairness.

Compliance Deferral

Internal corporate legal teams benefit when employee-monitoring AI tools are built on worker-derived training data because these tools generate audit trails that preemptively satisfy regulatory expectations around performance management, even when outcomes are biased or inaccurate. By deploying systems that appear to follow data-driven protocols, legal departments can defer liability related to discrimination or wrongful termination claims, framing decisions as emergent from algorithmic analysis rather than managerial discretion. The underappreciated dynamic is that the primary function of these tools is not to improve productivity but to produce procedural legitimacy—creating a bureaucratic buffer between human judgment and organizational risk. This transforms AI not into a managerial instrument but into a ritualized shield against oversight, where the appearance of objectivity becomes more valuable than its substance.

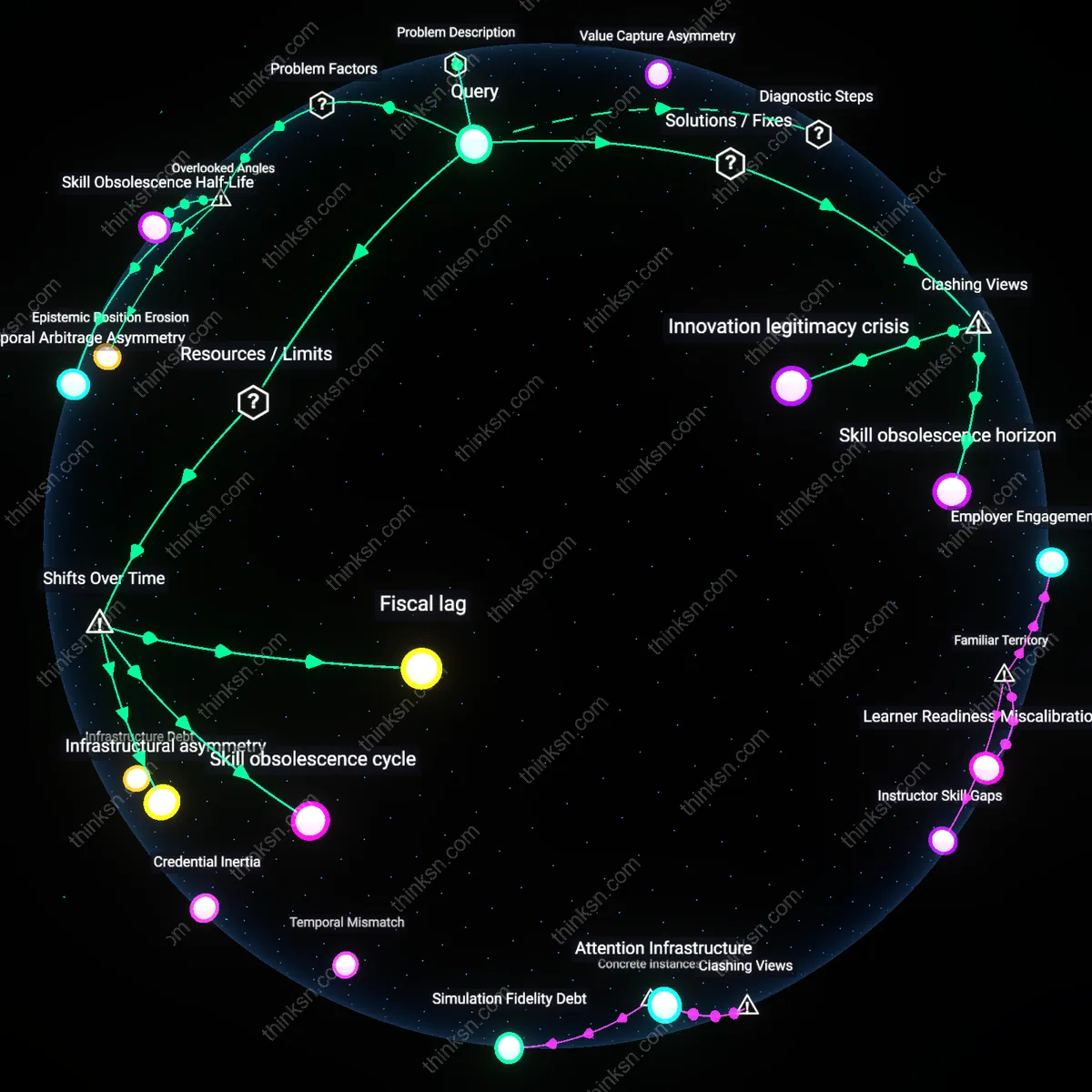

Who ends up benefiting when AI training programs track workers' behavior and turn it into performance scores?

Managerial Elision

Amazon warehouse supervisors in Kent, Washington, used AI-generated performance scores in 2019 to justify firing workers for low productivity. The system tracked movement speed but excluded injury risks and workflow obstructions, privileging operational efficiency over worker well-being. This mechanism erases context from behavioral data, allowing authority to standardize accountability while shielding management from scrutiny. The primary beneficiaries are therefore mid-level enforcers of corporate discipline, who gain defensible, algorithmic cover for discretionary power.

Surplus Extraction

In 2021, Uber’s AI in Amsterdam optimized driver routes and ratings to compress idle time, effectively increasing the number of billable trips per hour without raising base pay and thereby converting behavioral data into intensified labor rhythms. This real-time recalibration of performance norms operates through algorithmic speedup, a process that transfers value from drivers to the platform by monetizing every adjustment to behavior. It exposes how capital appropriates granular behavioral surplus under the guise of neutral performance metrics.

Docile Innovation

By 2018, 'Social Credit System' pilots in Rongcheng, China, assigned citizens point-based scores using surveillance and behavior tracking. Higher scores unlocked access to public housing or faster government services, effectively rewarding self-regulation through algorithmic reputation. This system incentivizes preemptive conformity, in which individuals internalize governance and innovate their own compliance. The state thus benefits not through direct coercion but by outsourcing discipline to the ambition of the governed.

Centralized Control Imperative

Leadership classes in East Asia’s authoritarian management cultures benefit most when AI training programs track workers’ behavior, because persistent hierarchies and top-down oversight norms amplify the legitimacy of algorithmic surveillance. In countries like South Korea and China, where workplace loyalty and organizational harmony are culturally prioritized, these leaders deploy AI performance scores not merely to optimize efficiency but to reinforce institutional authority. This alignment between technology and pre-existing power structures shows how AI becomes a vehicle for institutional discipline rather than individual development, a nuance often obscured in Western meritocratic narratives. What results is the institutional reinforcement of managerial dominance through culturally legitimate surveillance.

Data Extractivism

Transnational tech firms headquartered in Silicon Valley benefit when AI training systems mine worker behavior, because raw behavioral data becomes a fungible asset in global AI markets. These companies exploit regulatory asymmetries—collecting granular behavioral logs in regions with weak labor privacy laws while refining models that later generate outsized returns in wealthy, automated sectors. This dynamic functions through a supply chain logic where Global South labor practices feed AI systems deployed primarily in the Global North, turning everyday work routines into training data without compensating the source. The non-obvious outcome is not improved performance but the accumulation of behavioral capital, revealing a new form of digital colonialism rooted in latent data extraction.

Algorithmic Credentialism

Western professional elites benefit when AI quantifies worker behavior, because performance scores become new markers of legitimacy in credential-driven labor markets. In countries like the U.S. and U.K., where advancement depends on measurable outcomes and auditability, HR departments and middle managers use AI-generated metrics to depoliticize promotion decisions, even when those metrics distort actual contribution. This creates a self-sustaining cycle where scoring systems justify managerial reliance on algorithmic outputs, ultimately privileging those who already navigate institutional norms successfully. The overlooked mechanism is not bias in the algorithm but the systemic displacement of qualitative judgment by quantifiable proxies, which institutionalizes a new technocratic aristocracy.

Management Extraction

Corporate managers benefit when AI training programs track workers' behavior because surveillance-derived performance scores shift accountability away from structural inefficiencies and onto individual employees, particularly in call centers and warehouse logistics, where algorithmic oversight is embedded in scheduling and discipline systems. This mechanism recasts workforce resistance or high turnover as personal underperformance, making systemic control appear as meritocratic optimization. The non-obvious shift is that operational data regimes privilege managerial narratives over labor realities.

Vendor Predation

AI software vendors, especially HR tech startups and surveillance analytics firms, benefit most from behavior tracking because the expansion of quantified performance metrics creates recurring revenue through licensing, integration, and 'insight' dashboards sold back to employers. This dynamic turns workplace monitoring into a self-justifying product cycle in which the vendor, not the worker or even the employer, dictates what performance means. It exposes how the rhetoric of efficiency often serves to inflate the vendor’s market reach under the cover of organizational improvement.

Regulatory Avoidance

Labor regulators and legal enforcement bodies are structurally disincentivized to challenge AI-driven performance scoring. This allows employers to delegate decision-making to opaque systems that technically comply with anti-discrimination laws while producing discriminatory outcomes, so the benefit accrues to companies that use algorithmic ambiguity to shield management from liability. The non-obvious reality is that compliance theater enabled by AI obfuscation becomes a strategic asset, undermining worker appeals by reframing bias as computational neutrality.

Managerial Overreach

Corporations benefit when AI training programs track workers’ behavior and assign performance scores because it allows executives and middle managers to standardize oversight with algorithmic justification. This system replaces nuanced human judgment with quantified metrics, enabling faster disciplinary actions, optimized labor pacing, and reduced bargaining power for employees, especially in the retail, warehousing, and customer service sectors. The non-obvious consequence, hidden behind familiar assumptions about efficiency, is that the very design of these tools reinforces hierarchical control by redefining worker autonomy as a risk factor rather than a right.

Surveillance Normalization

Tech developers and investors benefit when worker behavior is tracked and scored because continuous monitoring creates recurring data flows essential for refining and selling AI models. The mechanism operates through SaaS platforms and HR tech ecosystems—like those from Workday or HireVue—that monetize behavioral analytics under the guise of fairness and objectivity. While the public habitually associates performance scoring with meritocracy, the overlooked outcome is the entrenchment of surveillance as standard workplace infrastructure, transforming employee conduct into a perpetual training set.

Compliance Theater

Government regulators and corporate compliance officers benefit when AI systems track worker performance because the appearance of data-driven accountability reduces legal and reputational risk. These scores are used to demonstrate due diligence in investigations or audits, particularly in highly regulated industries like banking or healthcare, where oversight agencies expect tangible metrics. The underappreciated reality is that these systems often prioritize documentation over actual performance, fostering a ritual of measurement that satisfies procedural expectations without improving outcomes.

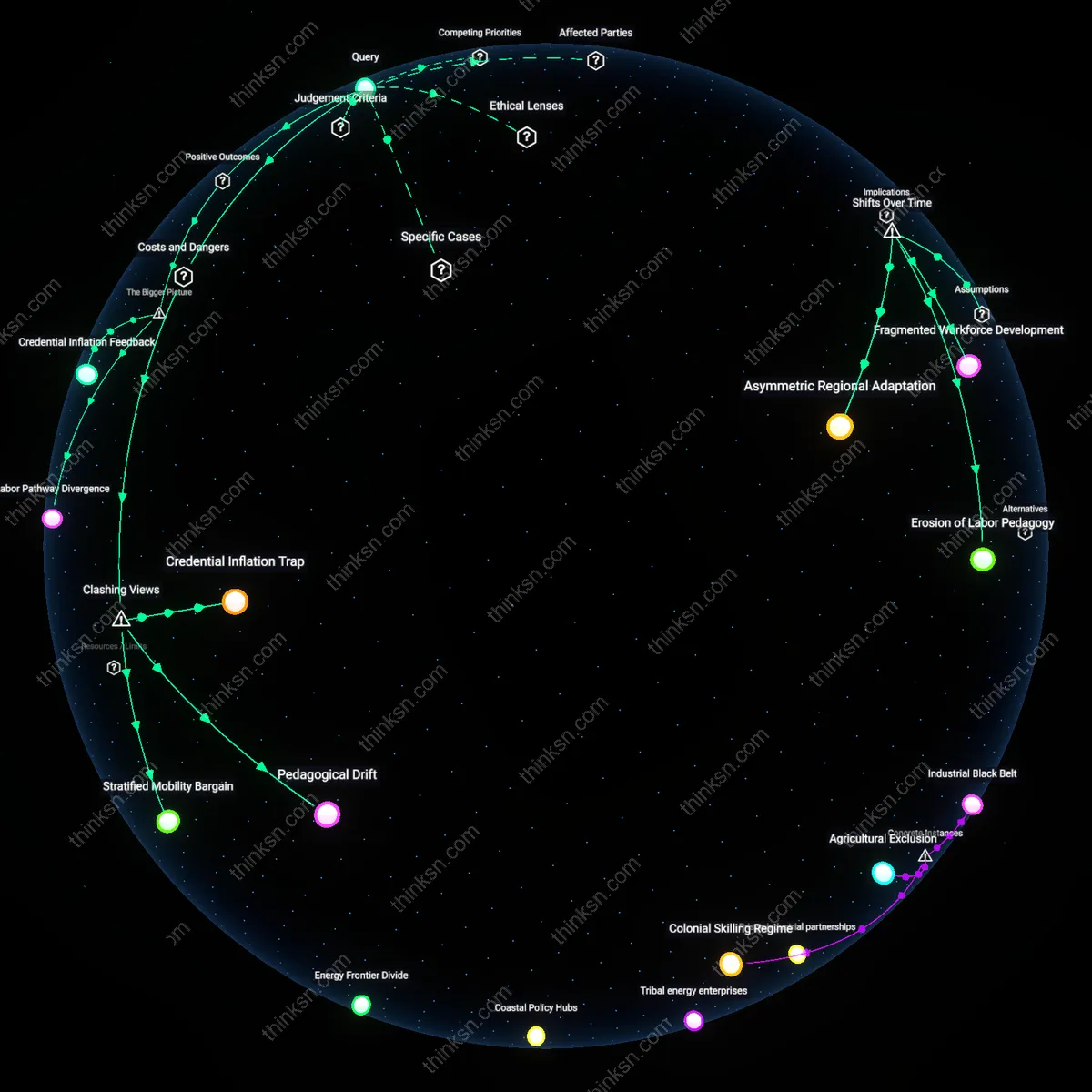

What would happen if worker advocacy groups developed their own tools to interpret employee data, using them to challenge management's algorithmic decisions?

Data Stewardship

Worker advocacy groups would gain data stewardship by independently interpreting employee data, a capacity historically monopolized by employers since the rise of industrial human resources systems in the mid-20th century. As algorithmic management expanded post-2010 across gig platforms and service firms, raw workforce data became the foundation for scheduling, performance reviews, and terminations, yet remained inaccessible to workers. By developing forensic data tools modeled on labor statistics methodologies, unions like the IWGB, or researchers collaborating with organizations such as Data & Society, could reverse-engineer personnel outcomes and expose biased attrition patterns in firms like Amazon or Uber. This shift marks a rupture from the Taylorist assumption that only management can define productivity, revealing how data control entrenches power asymmetries long assumed natural.

Algorithmic Counterpublics

Algorithmic counterpublics would emerge as worker-led collectives use open-source analytics to dispute corporate algorithmic logic, transforming workplace dissent from isolated grievances into coordinated technical interventions. Unlike the shop-floor resistance of the 1970s, which relied on bodily presence and sabotage, today’s labor challenges target decision-making code, as when NYC delivery couriers reverse-engineered routing apps to document wage theft. By reframing algorithmic opacity as a collective action problem solvable through shared data literacy and tool-building, advocacy groups dissolve the post-1980 neoliberal fiction of the autonomous digital laborer, exposing how platform rule systems function as covert management policy rather than neutral technology.

Infrastructural Friction

Management’s reliance on seamless algorithmic governance would face infrastructural friction when worker-developed tools expose misalignments between stated policies and actual system behaviors, a vulnerability amplified since enterprise AI systems scaled in the 2020s. When retail workers’ cooperatives in California used scraping tools to log inconsistencies between promised shift stability and automated scheduling outputs from Kronos software, they revealed not random glitches but systemic prioritization of inventory KPIs over labor law compliance, a divergence invisible without parallel data capture. This friction does not just challenge outcomes. It disrupts the assumption, prevalent since the 1990s, that integrated HRIS platforms operate as unified, rational systems, unveiling instead layered, conflicting scripts shaped by competing organizational imperatives.
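The parallel data capture described above can be illustrated with a minimal Python sketch. It assumes workers keep their own log of promised shifts and compare it against what the scheduling system actually assigned. The `Shift` record, its field names, and both helper functions are hypothetical illustrations, not part of any real scheduling product or of the tools used in the case mentioned.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Shift:
    """One scheduled shift (hypothetical record layout)."""
    worker_id: str
    day: date
    start_hour: int
    end_hour: int


def find_schedule_changes(promised, actual):
    """Pair each promised shift that was altered or dropped with what replaced it.

    Returns a list of (promised_shift, actual_shift_or_None) tuples.
    Assumes at most one shift per worker per day.
    """
    actual_by_key = {(s.worker_id, s.day): s for s in actual}
    changes = []
    for p in promised:
        a = actual_by_key.get((p.worker_id, p.day))
        if a != p:  # hours differ, or the shift vanished (a is None)
            changes.append((p, a))
    return changes


def instability_rate(promised, actual):
    """Fraction of promised shifts that were altered or dropped."""
    if not promised:
        return 0.0
    return len(find_schedule_changes(promised, actual)) / len(promised)
```

A rate like this says nothing about why a shift changed. It only gives workers an independent count to set beside the employer's stated stability policy, which is the kind of divergence the paragraph argues is otherwise invisible.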

Latent Compliance Debt

When worker groups build interpretive tools, they inadvertently expose latent compliance debt in corporate data systems—hidden gaps between stated personnel policies and actual algorithmic enforcement. Union-developed diagnostics, for example, may reveal that a company’s promotion algorithm systematically downweights shift coverage history, a factor managers claim to value but the system fails to track. Unlike audits, which verify adherence to existing rules, these tools uncover implicit contractual breaches buried in the misalignment between HR rhetoric and machine logic. This dimension is overlooked because compliance is typically assessed ex ante through policy documentation, not ex post through reverse-engineered behavioral outcomes, meaning legal exposure accumulates silently until worker-led analysis makes it actionable.
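One way such a diagnostic might look, as a rough Python sketch: compare promotion rates between workers who do and do not exhibit a factor that management says it values, and flag factors that show no positive gap. The record fields (`covered_shifts`, `tenure_over_2y`, `promoted`), the function names, and the 0.05 threshold are all assumptions made for illustration. The comparison is purely descriptive and does not establish how any real promotion algorithm weighs its inputs.

```python
def promotion_rate_gap(records, factor):
    """Promotion rate among workers with `factor` minus the rate among those without.

    `records` is a list of dicts with boolean fields (hypothetical layout).
    Returns None when either group is empty.
    """
    with_factor = [r["promoted"] for r in records if r[factor]]
    without_factor = [r["promoted"] for r in records if not r[factor]]
    if not with_factor or not without_factor:
        return None
    return sum(with_factor) / len(with_factor) - sum(without_factor) / len(without_factor)


def flag_unrewarded_factors(records, stated_factors, min_gap=0.05):
    """List stated factors whose observed promotion-rate gap falls below `min_gap`."""
    flagged = []
    for factor in stated_factors:
        gap = promotion_rate_gap(records, factor)
        if gap is not None and gap < min_gap:
            flagged.append((factor, gap))
    return flagged


# Illustrative data only: tenure tracks promotion, shift coverage does not.
records = [
    {"covered_shifts": True, "tenure_over_2y": True, "promoted": True},
    {"covered_shifts": True, "tenure_over_2y": False, "promoted": False},
    {"covered_shifts": False, "tenure_over_2y": True, "promoted": True},
    {"covered_shifts": False, "tenure_over_2y": False, "promoted": False},
]
flagged = flag_unrewarded_factors(records, ["covered_shifts", "tenure_over_2y"])
```

A flagged factor is a prompt for further questions, not proof of a breach: a small gap can also come from confounding or small samples, so a real analysis would need far more data and controls than this sketch.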