Digital IDs for Voting: Balancing Convenience and Privacy Risks?

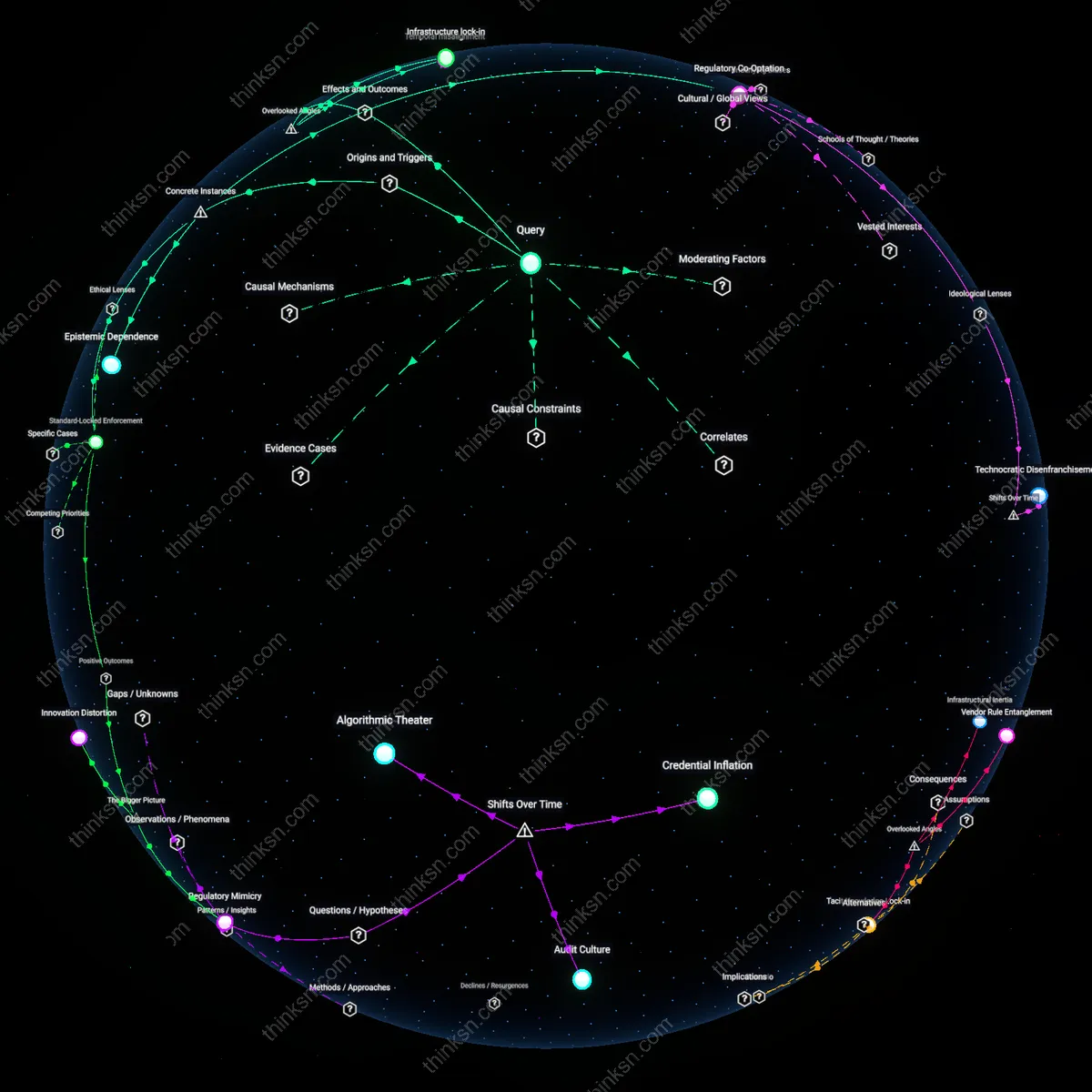

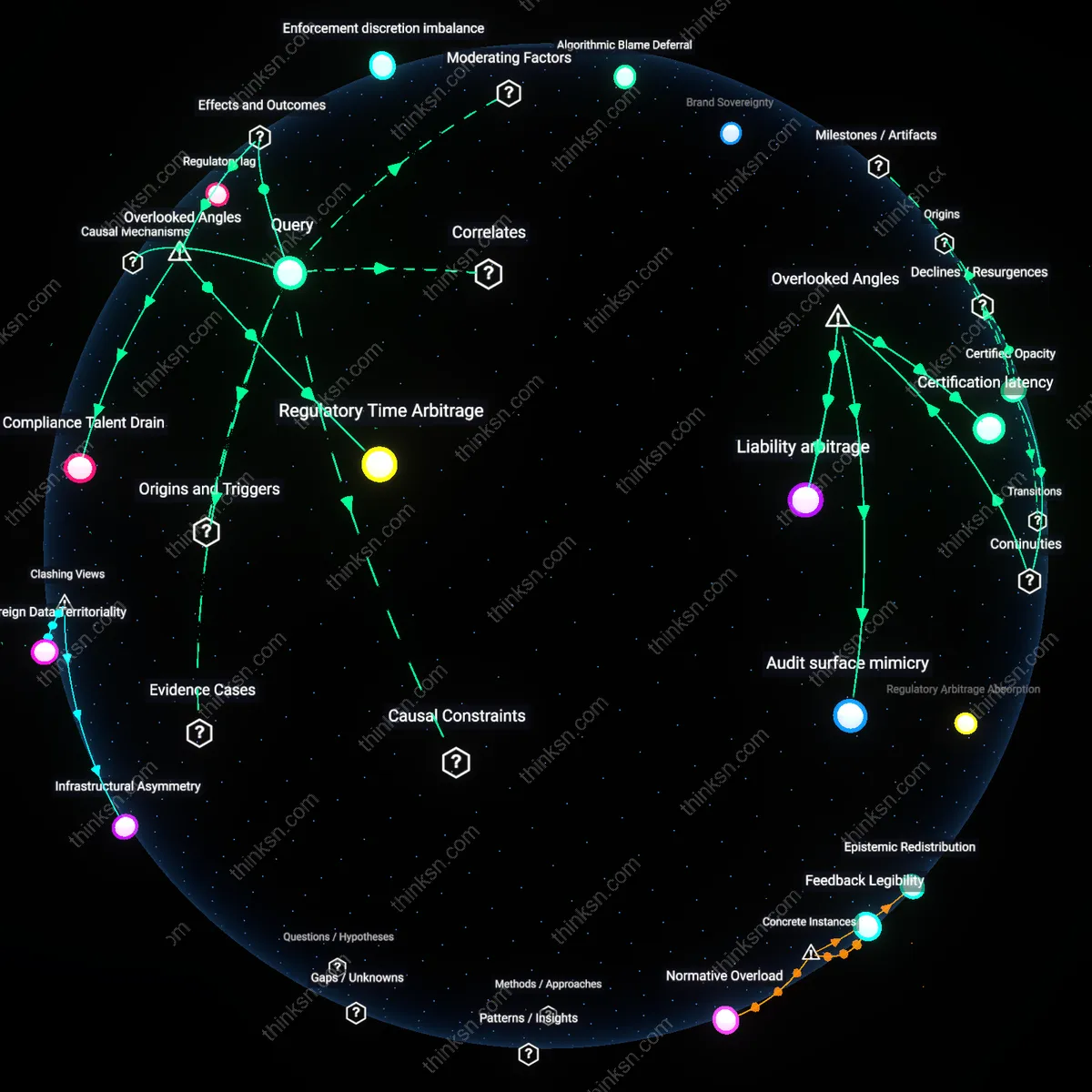

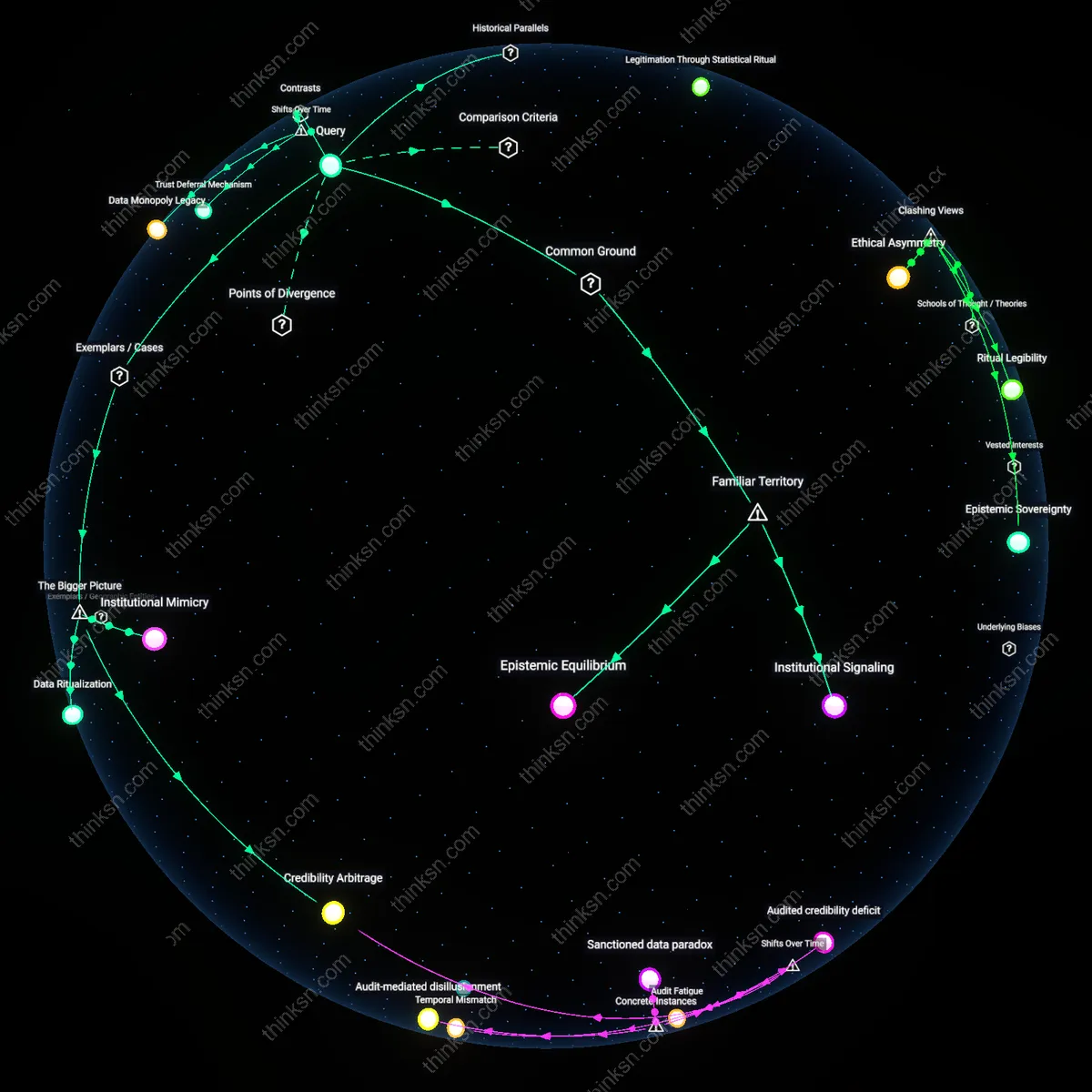

The analysis reveals five key thematic connections.

Key Findings

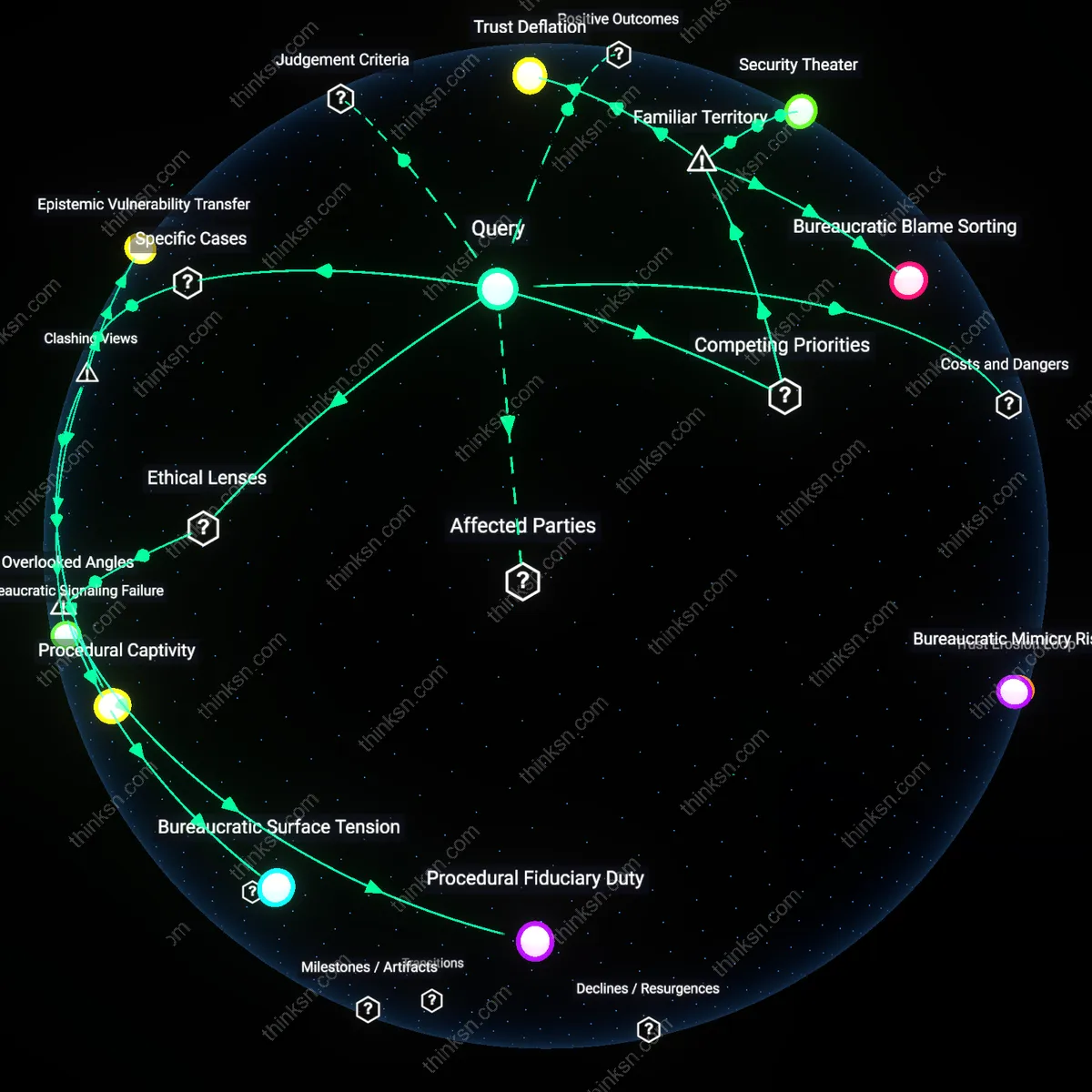

Data Trust Externalization

Citizens can evaluate the convenience-risk trade-off of digital ID systems by observing how much authority independent oversight bodies have to audit data access patterns across government agencies. When judicial or statutory data trusts, such as the overseers of Estonia’s X-Road, are granted real-time monitoring authority and public reporting mandates, they turn asymmetric power relations into accountable data flows, so users can trust the system’s integrity without needing technical expertise. This mechanism is analytically significant because it shifts risk assessment from individual judgment to institutional credibility. It draws on the pressure for bureaucratic transparency that arises in digitally centralized states, where service efficiency depends on public participation.
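To make the idea of auditing data access patterns concrete, here is a minimal sketch of the kind of summary an oversight body might publish. Everything in it is an assumption for illustration: the `AccessEvent` record, its fields, the agency names, and the `summarize_access` function are hypothetical and do not describe X-Road or any real system.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AccessEvent:
    """One hypothetical record of an agency reading a citizen's data."""
    agency: str
    citizen_id: str
    legal_basis: Optional[str]  # None means no justification was recorded


def summarize_access(events):
    """Count total reads per agency and reads with no recorded legal basis."""
    totals = Counter(e.agency for e in events)
    unjustified = Counter(e.agency for e in events if e.legal_basis is None)
    return {
        agency: {"total": totals[agency], "unjustified": unjustified[agency]}
        for agency in sorted(totals)
    }


# Illustrative, made-up log entries (not real data).
events = [
    AccessEvent("tax_office", "c1", "tax audit"),
    AccessEvent("tax_office", "c2", None),
    AccessEvent("health_board", "c1", "treatment"),
]
report = summarize_access(events)
```

A published summary of this kind would let a non-expert see at a glance which agencies read personal data and how often they did so without a stated reason, which is the sense in which oversight substitutes institutional credibility for individual technical judgment.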

Service Interdependence Leverage

Citizens gain evaluative power over digital ID systems when essential services like healthcare or banking are integrated conditionally, that is, only once privacy safeguards have been demonstrated. Because citizens depend on these services, governments must maintain public trust to ensure the system is actually adopted. In India, the Aadhaar ecosystem sustains legitimacy not through consent alone but through the functional necessity of the ID for accessing subsidized food rations and filing taxes, which in turn pressures authorities to limit overt profiling to avoid systemic rejection. This dynamic shows how user reliance on convenience can become a political counterweight to abuse, embedding accountability in the infrastructure of daily life rather than relying on legal oversight alone.

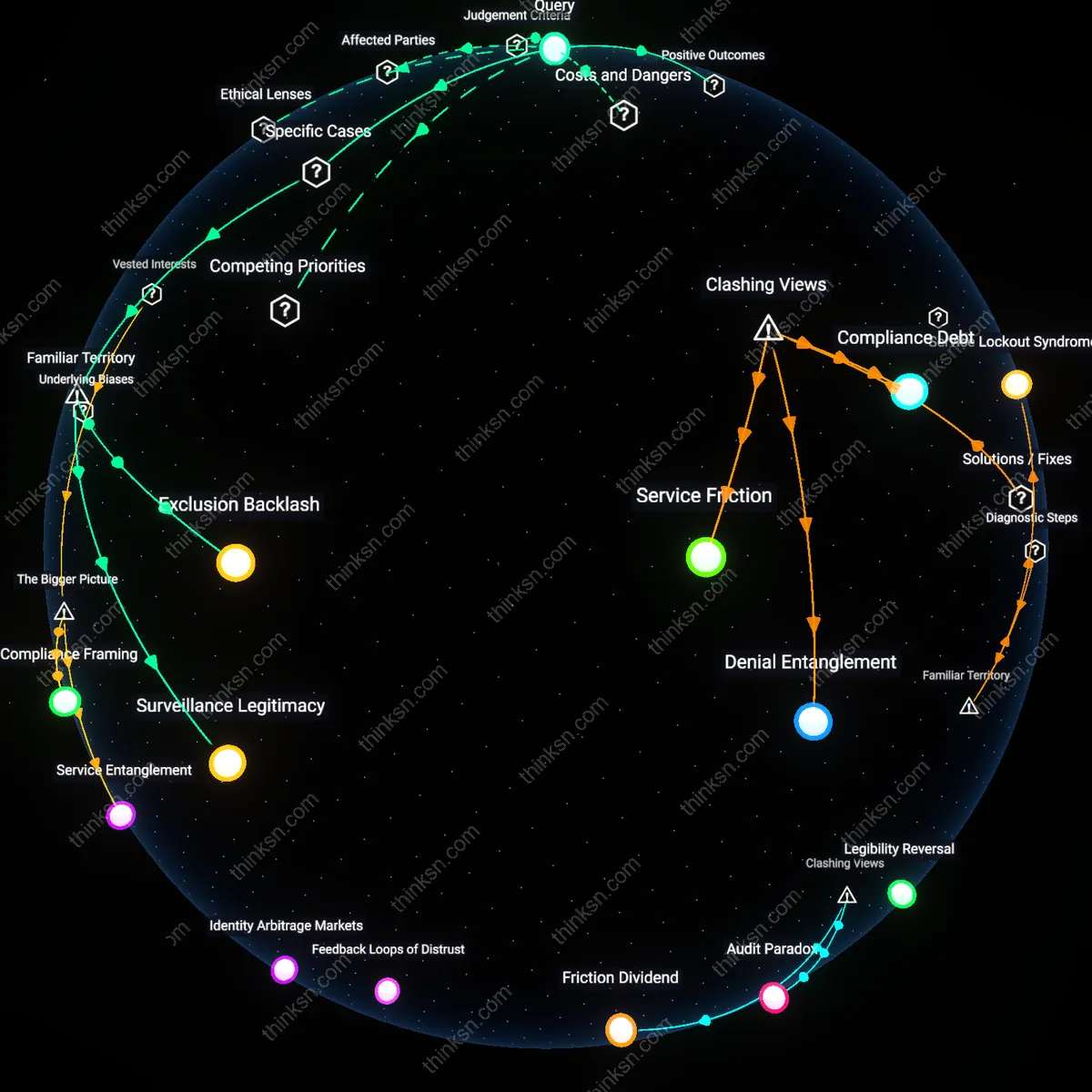

Surveillance Legitimacy

Citizens evaluate the trade-off by observing whether trusted public institutions, like Estonia’s e-Residency program, maintain transparency in data access protocols, because visible judicial oversight and audit logs make the system’s integrity legible to users. The mechanism, which combines routine publication of state access requests with algorithmic accountability panels, creates an illusion of control that mirrors due process norms, even when the state retains ultimate discretion. What is underappreciated is that this perceived procedural fairness, rather than any actual restriction on data use, becomes the primary metric of trust in high-functioning digital ID systems.

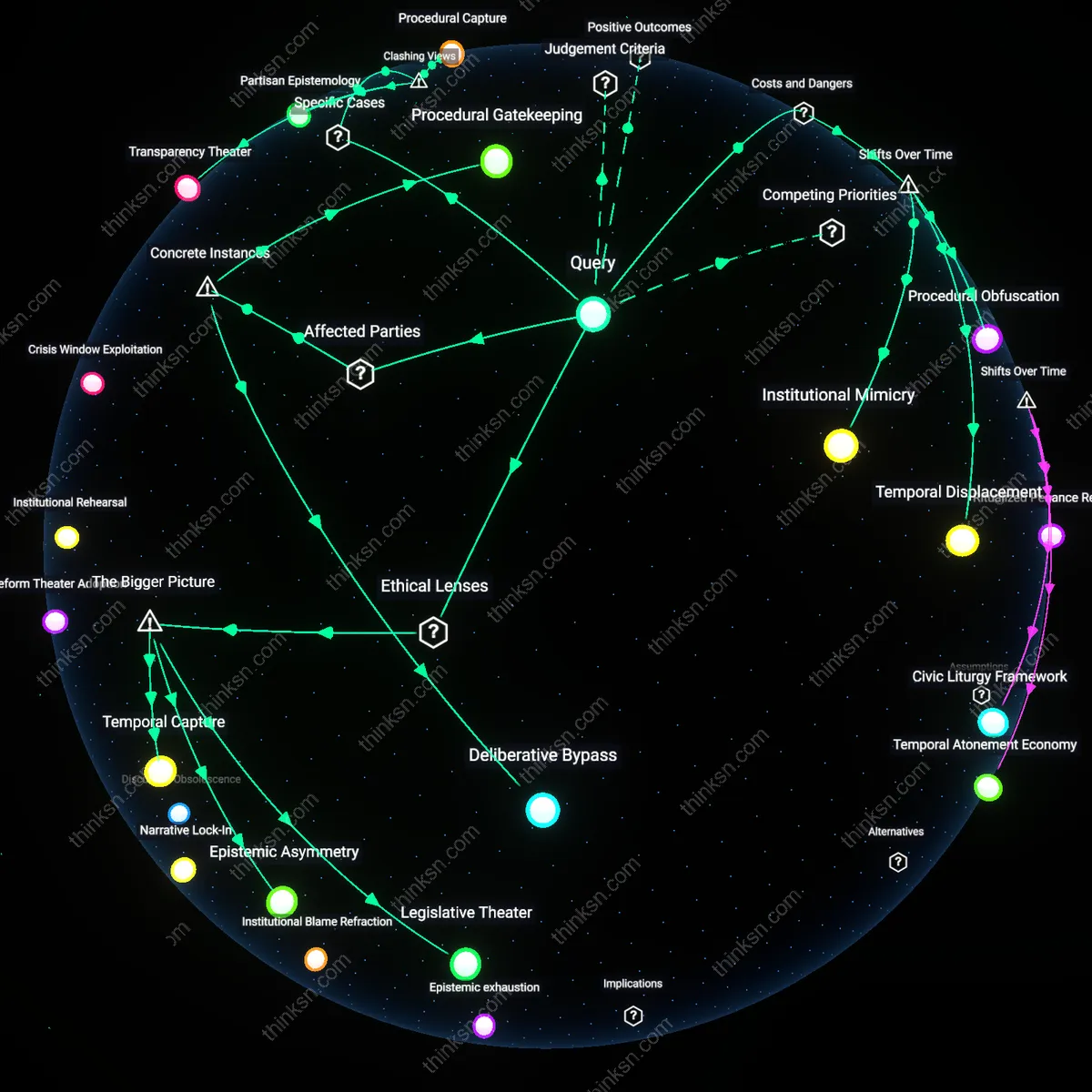

Exclusion Backlash

Citizens infer risk from visible cases of denial or restriction, such as India’s Aadhaar-linked welfare rejections in rural communities, because systemic errors in biometric verification directly disrupt access to essential services. The mechanism here is automated authentication failure in low-infrastructure areas, which shows how unequal technical reliability creates de facto political marginalization even without intentional profiling. What is underappreciated is that citizens often conflate technical inequity with intentional surveillance, treating malfunction as evidence of deliberate disenfranchisement.

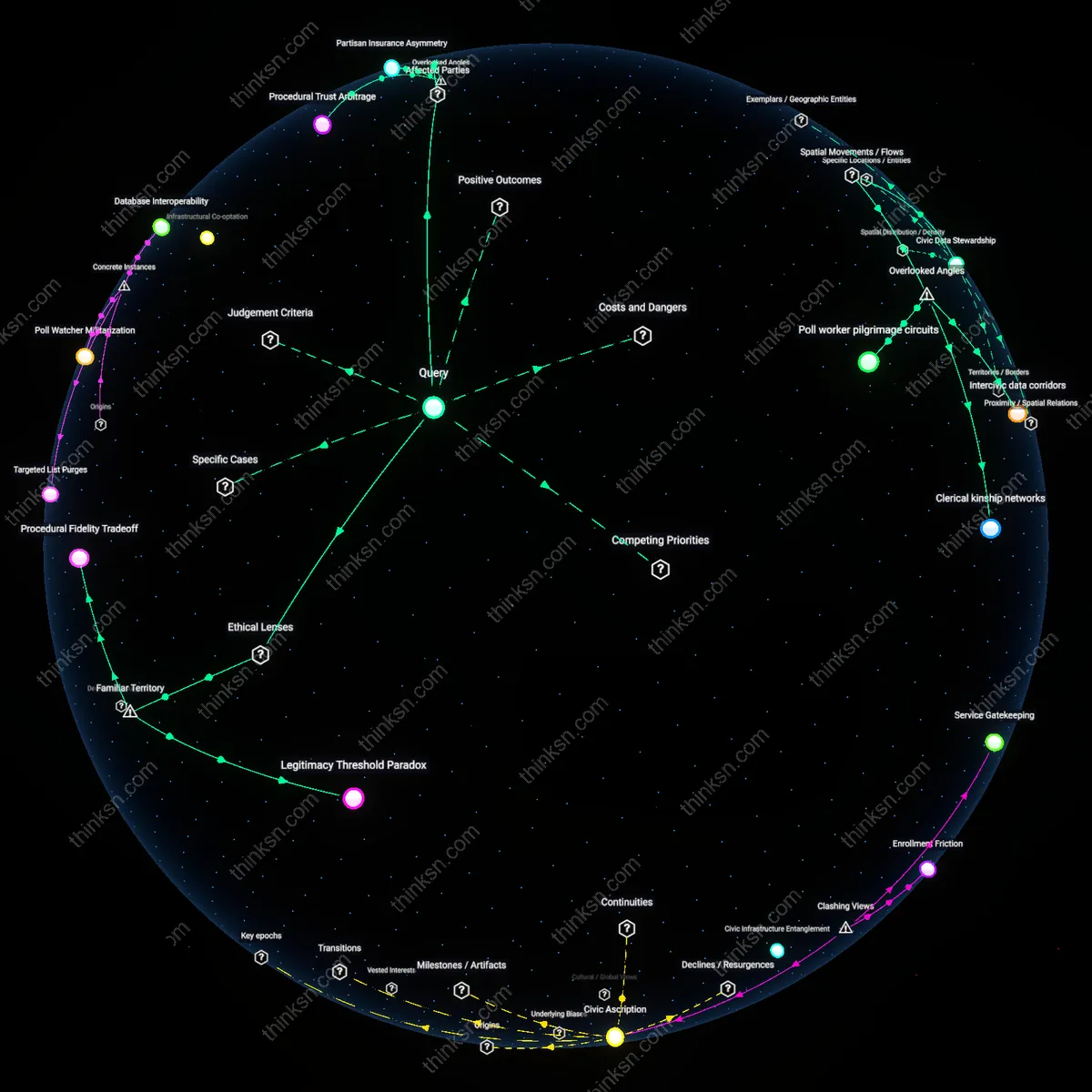

Function Creep

Citizens gauge danger by observing mission expansion, like China’s Social Credit System evolving from financial scoring to behavioral monitoring through integration with local digital ID platforms, because initial adoption for convenience normalizes infrastructure that is later repurposed for control. The mechanism is interoperability between civil service databases and public behavior tracking, which enables the incremental normalization of surveillance under the guise of efficiency. What is underappreciated is that the greatest risk is not overt profiling but the silent redefinition of ‘public benefit’ to require continuous digital compliance.