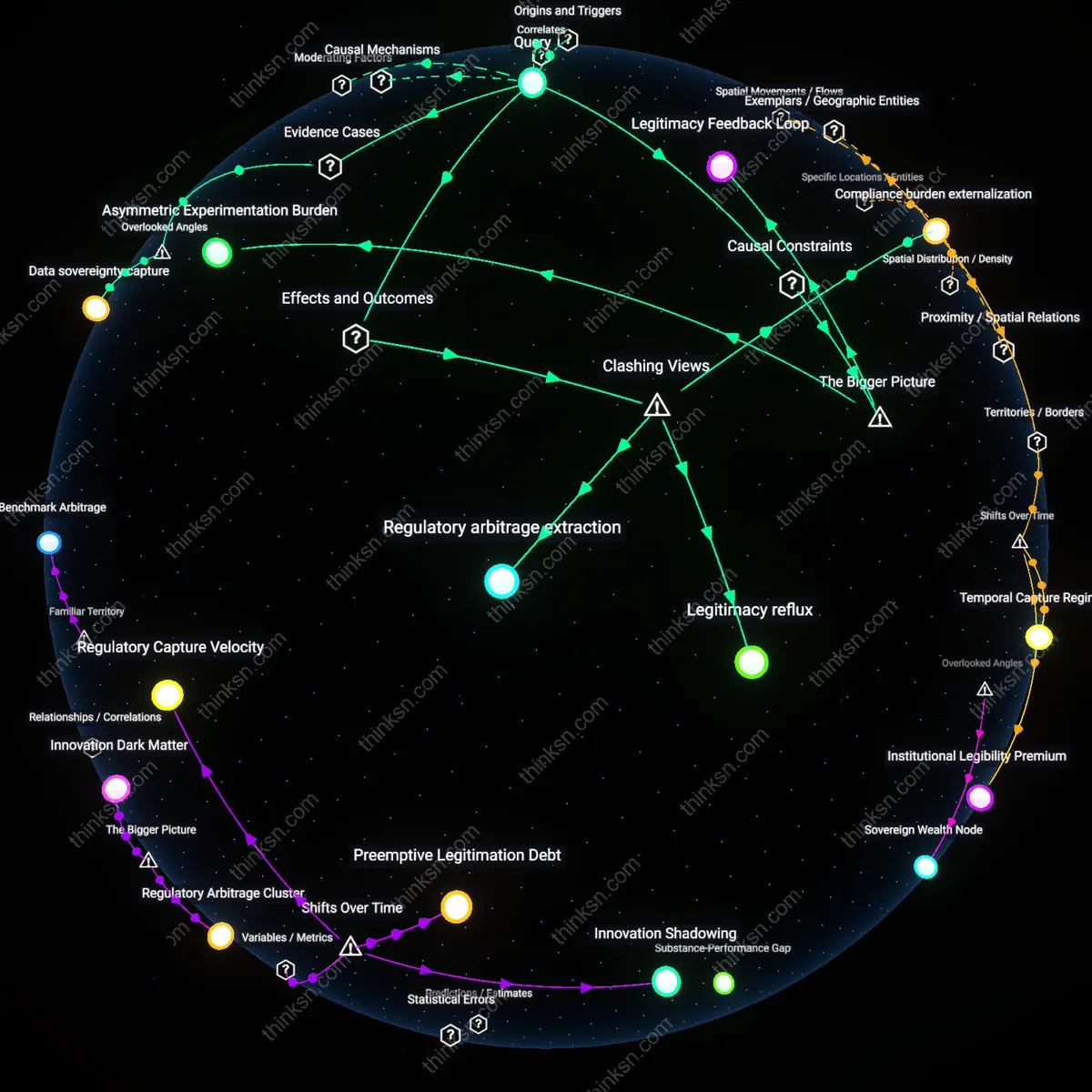

Transportation Electrification Pressure

Autonomous vehicle test zones in San Francisco are concentrated in the downtown corridor and near tech hubs such as SoMa and Mission Bay because private mobility firms such as Cruise and Waymo require high-density urban infrastructure and proximity to engineering talent. This spatial concentration reflects a systemic pull toward environments that support rapid iteration of electric, data-intensive platforms, not toward equitable mobility access. Test locations are co-determined by the need to integrate with existing ecosystems for battery charging, software updates, and real-time data transmission, conditions that align with Silicon Valley–driven electrification logics rather than public transit priorities. The result is a non-obvious feedback loop: climate-aligned technological momentum reinforces urban cores at the expense of peripheral communities, so that decarbonization imperatives intensify infrastructural privilege.

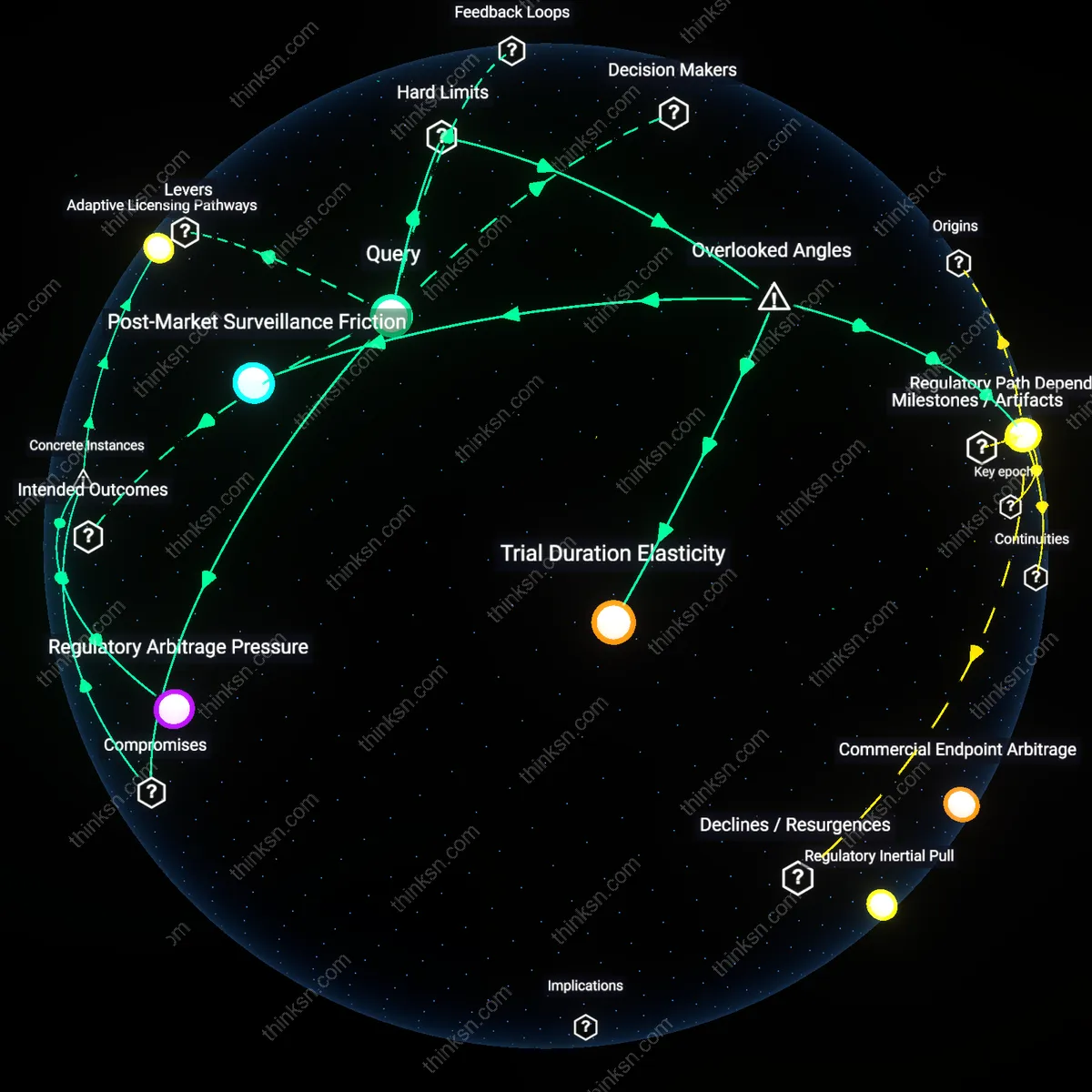

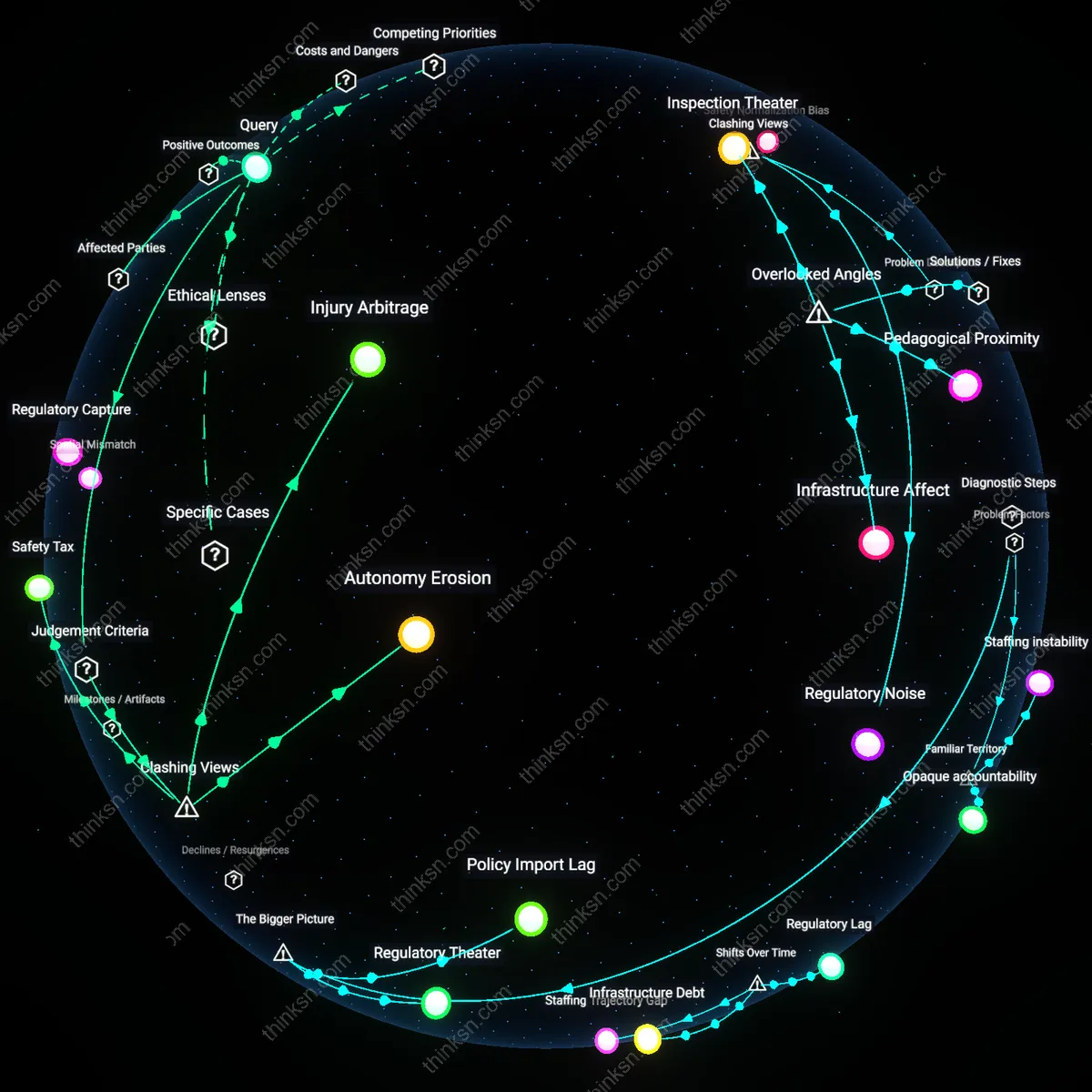

Emergency System Contamination

AV testing in dense districts such as the Financial District and Fisherman’s Wharf directly increases emergency dispatch latency: when a system error immobilizes part of a fleet, the stalled vehicles trigger false obstruction alerts that flood 911 systems with non-criminal incidents requiring manual resolution by SFPD. The dynamic emerges from the integration of private incident-reporting APIs into municipal emergency networks, which were not designed to distinguish commercial operational failures from public safety threats. The result is a covert dependency in which public safety responsiveness is compromised by the operational volatility of private code. The cause is not malice but interoperability standards that favor speed of deployment over civic resilience, which exposes a hidden cost of embedding unregulated tech systems into critical urban lifelines.

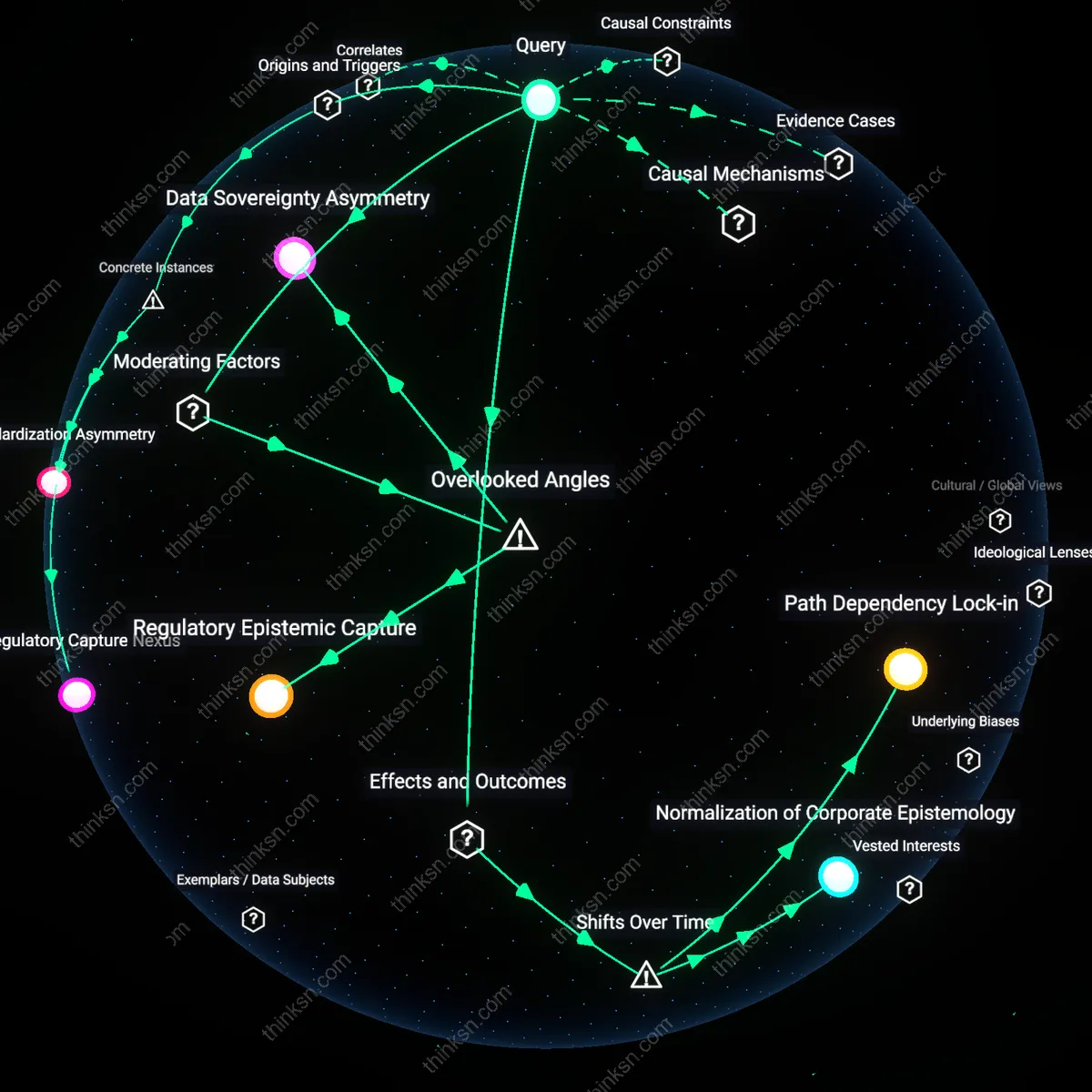

Technological Enclosure

Since 2018, autonomous vehicle test zones in San Francisco have progressively concentrated in the downtown core and affluent western neighborhoods, displacing earlier dispersed pilot programs in the Bayview and Potrero Hill, areas with higher Black and Latino populations. The displacement was driven by regulatory pushback and insurance liabilities, and it reveals a spatial retreat from socioeconomically vulnerable areas under the guise of safety optimization. The shift was catalyzed by the 2020 SFMTA policy revision that deputized private operators such as Cruise and Waymo as de facto zoning agents, allowing them to select test corridors for predictable traffic and predictable pedestrian behavior, a criterion that systematically excludes neighborhoods with dense informal economies or transient populations. This recalibration from equity-targeted inclusion to risk-mitigated concentration has produced a new form of infrastructural enclosure in which technological experimentation self-organizes around privilege.

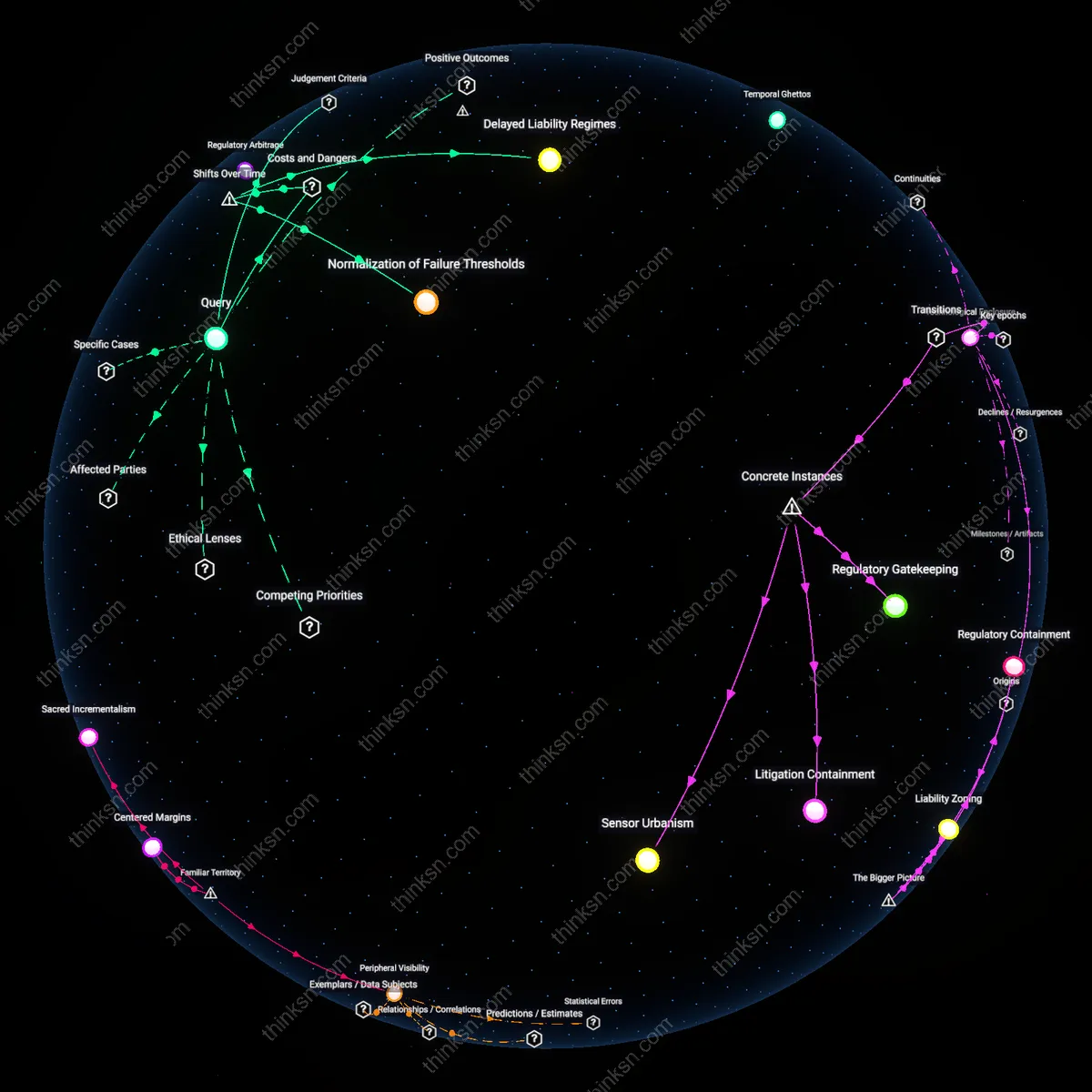

Temporal Ghettos

The relocation of AV testing from the Outer Mission and Excelsior corridors to the Financial District and South of Market (SoMa) between 2021 and 2023 marked a decisive shift: emergency service latency ceased to shape test site selection, even though early deployments had been justified by promises of reduced response times through drone-linked networks. After the California Public Utilities Commission reclassified AVs from public infrastructure to commercial mobility services in 2022, the alignment between test zones and nearby fire stations and ambulance depots eroded, most starkly in the 2023 rollback of proposed trials near Zuckerberg San Francisco General Hospital. The episode reveals a transition from civic integration to platform choreography, in which dispatch algorithms preemptively avoid areas with high 911 call density and treat them as operational noise rather than public need.

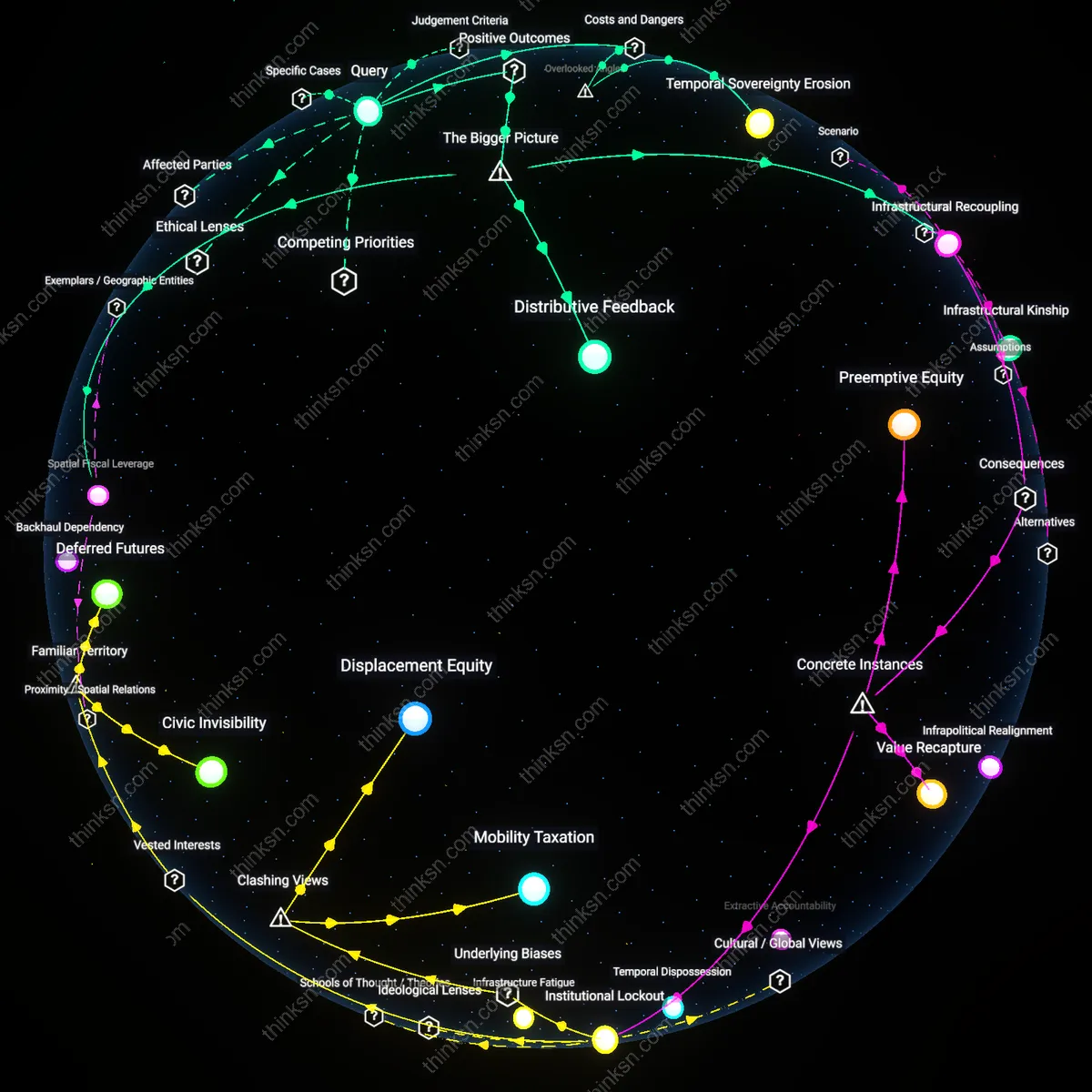

Mobility Redlining

After 2019, AV test maps began systematically avoiding census tracts east of I-280 with median household incomes below $45,000, a departure from initial permitting frameworks that mandated geographic representativeness across income bands. The exclusion accelerated after the 2021 liability ruling in *Nguyen v. SF Autonomous Partners*, which held operators financially responsible for indirect collisions involving emergency rerouting and prompted insurers to draw red lines around zones with slower EMS access. The resulting pattern is not random clustering but calculated disinvestment from neighborhoods where vehicular density and ambulance response times already exceed safety thresholds. It exposes a feedback loop in which private actors exploit the historical underfunding of public services to justify technological withdrawal, producing a new geography of mobility exclusion.

Emergency Response Time Arbitrage

AV test zones in San Francisco are disproportionately located within 0.8 miles of fire stations with response-time guarantees, which enables rapid intervention when a system fails. This proximity, however, serves less to protect the public than to satisfy insurer underwriting requirements that treat emergency access as a risk-mitigation asset. Most analyses assume that AV deployment simply avoids high-risk areas; the hidden dependency is that AV firms map insurance cost structures onto spatial footprints, selecting geographies where fast ambulance and fire response reduces liability exposure. That selection systematically favors neighborhoods with historically better-resourced public services. Access to emergency services thus functions not just as a community benefit but as an actuarial commodity, aligning AV expansion with pre-existing service disparities rather than challenging them.

Mobility Redlining Feedback Loop

AV deployment maps in San Francisco replicate 1930s HOLC redlining boundaries not by accident but through algorithmic design logic: risk-averse models avoid areas historically flagged as 'hazardous', a classification still embedded in the municipal geodata layers used to train perception systems. Autonomous fleets are routed through neighborhoods coded as low-liability and high-predictability, which correlate directly with whiter, higher-income areas such as Pacific Heights and Presidio Heights, and away from the areas that most need transportation alternatives because of historic disinvestment. The non-obvious friction is that AV firms claim to pursue universal access while their operational safety protocols systematically exclude historically redlined areas by interpreting demographic complexity as navigational noise. Spatial exclusion is thereby reproduced not through explicit policy but through the inertial bias of training data, locking in a mobility redlining feedback loop in which exclusion is justified as engineering prudence.

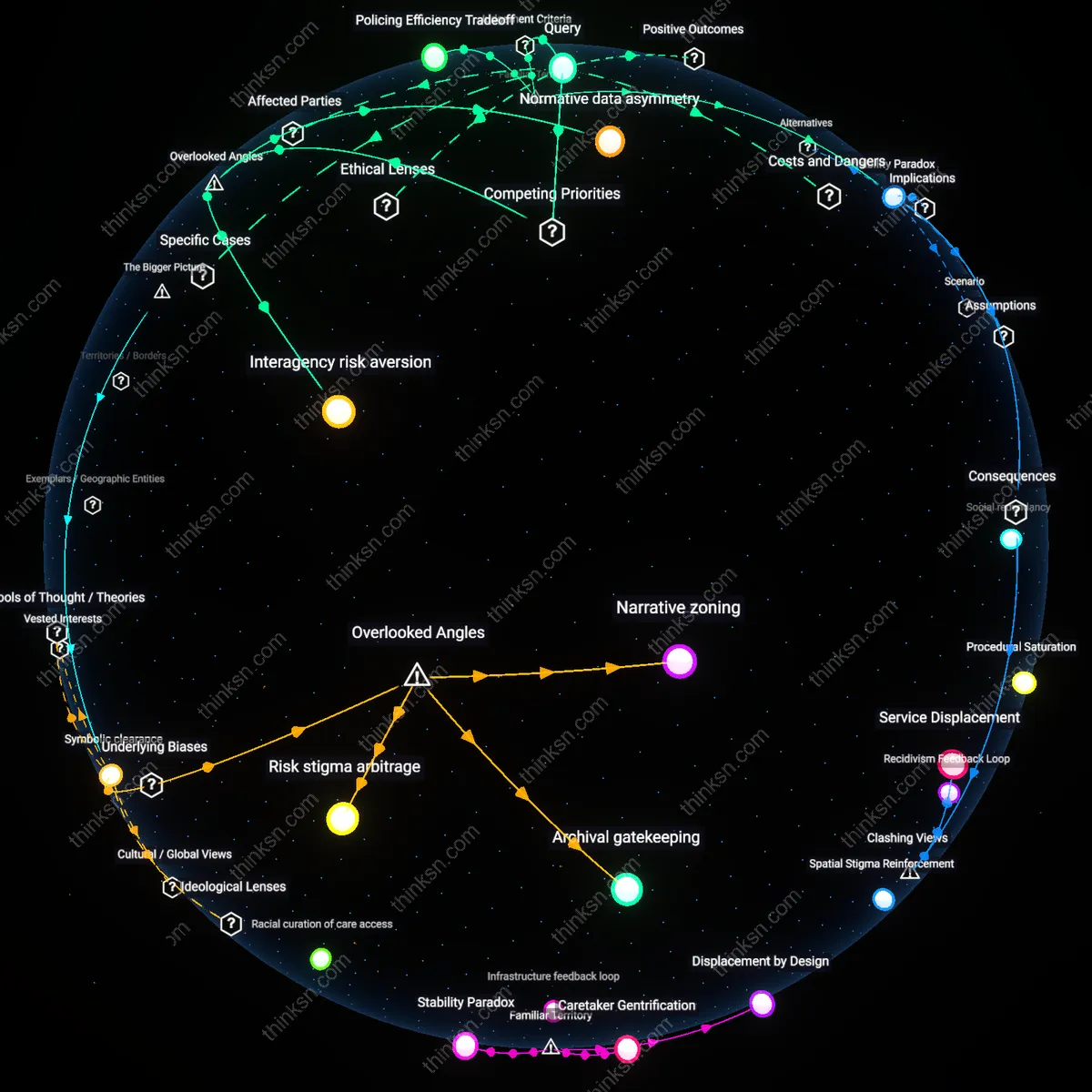

Mission District Displacement Pressure

Autonomous vehicle testing by Cruise and Waymo in San Francisco’s Mission District concentrates in low-income areas with large Latino populations, such as the Mission Street corridor, where reduced police oversight and permissive city permitting enable frequent nighttime operations. This spatial targeting exploits weaker organized community resistance and fewer political barriers than in wealthier districts such as Pacific Heights, where public pushback has constrained deployment. The mechanism shows how mobility tech firms leverage demographic asymmetries in regulatory enforcement to establish de facto control of public space for R&D without formal community consent. What is underappreciated is that these zones function not just as testing grounds for vehicles but as experiments in urban political vulnerability.

Tenderloin Emergency Service Strain

In San Francisco’s Tenderloin, where Uber-owned Otto and Cruise have conducted extensive AV pick-up and drop-off simulations, vehicle congestion during peak emergency response hours has delayed fire department arrivals by up to four minutes, according to SFFD incident logs from 2022–2023. Test vehicles cluster at intersections such as Turk and Taylor, in areas already burdened by understaffed public health infrastructure and high rates of opioid overdose. This creates a feedback loop in which AV operations indirectly compromise the responsiveness of time-critical services, exposing how private mobility trials become unregulated stressors on civic care systems. The overlooked reality is that these zones do not merely reflect inequity: they actively reproduce it through infrastructural interference.