Is Relational Data in Credit Scoring Discriminating Against Free App Users?

Analysis reveals 10 key thematic connections.

Key Findings

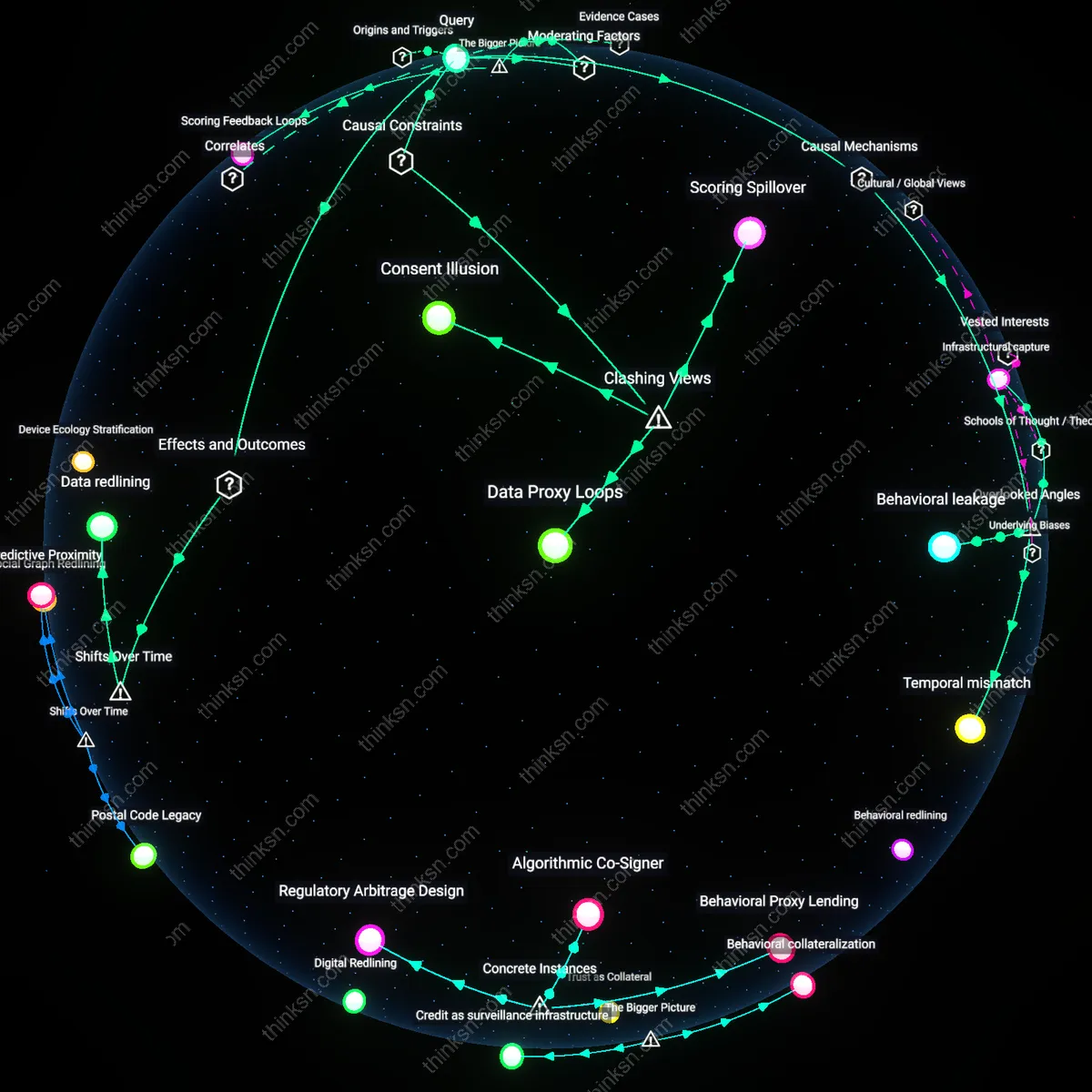

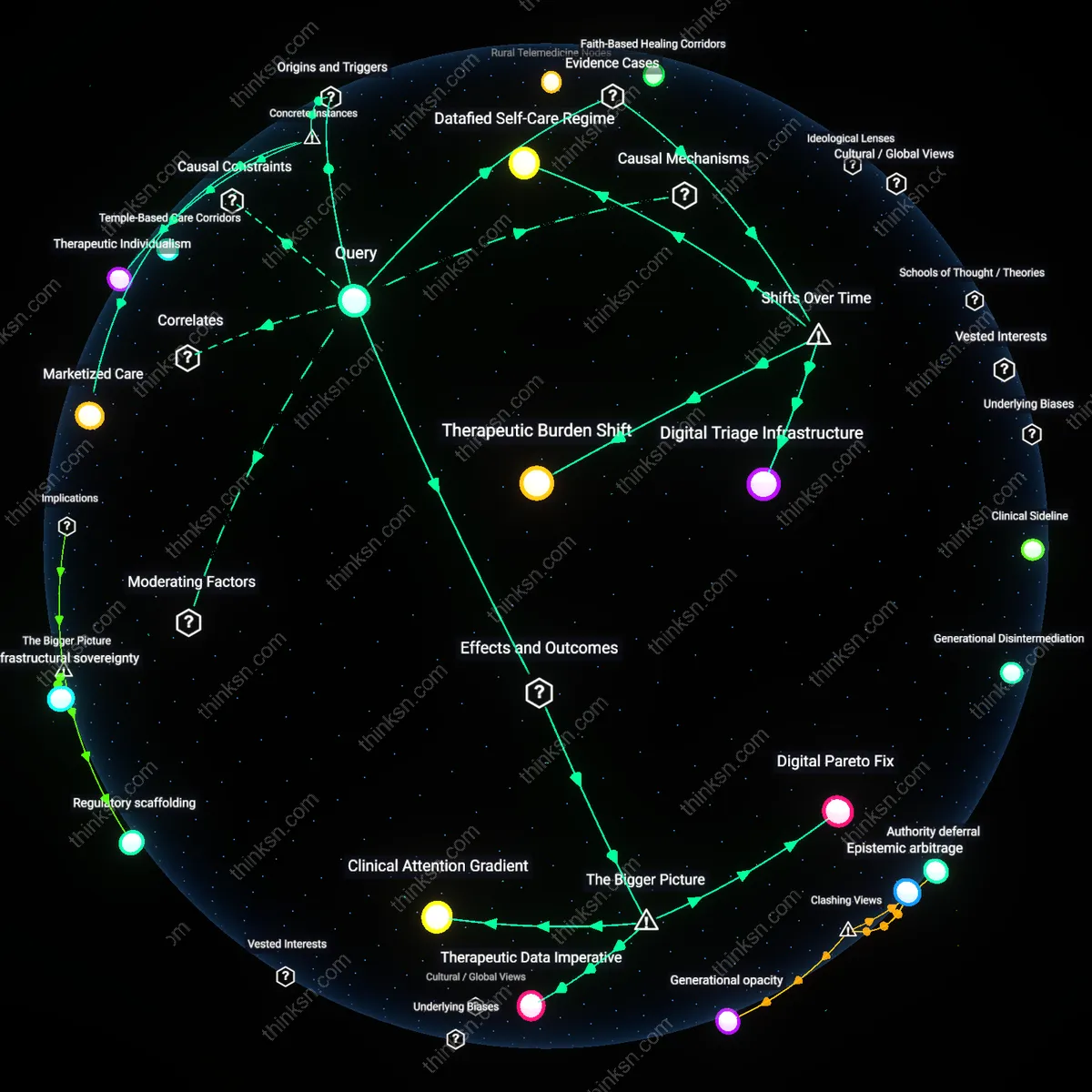

Behavioral Leakage

When free financial apps incorporate relational data, such as social network patterns, into credit-scoring algorithms, they assign financial risk based on behavioral proxies that are not directly linked to creditworthiness. App providers harvest metadata such as contact frequency, shared devices, and communication patterns with wealthier contacts to infer stability. The underlying heuristic, that associating with financially stable users implies similar trustworthiness, is codified in opaque models built by fintech firms in markets like Kenya and India, where formal credit histories are sparse. What this overlooks is that relational spillover produces 'behavioral leakage': routine interpersonal activity becomes a risk signal, and everyday sociability is reframed as a financial liability. The result distorts fairness by penalizing structurally embedded behaviors rather than individual intent.

Infrastructural Capture

Relational data pipelines in free financial apps become sites of infrastructural capture when third-party analytics firms, such as those providing SDKs embedded in apps like Cash App or Tala, bundle contact lists, messaging patterns, and geolocation co-occurrences into standardized feature vectors that are then used across platforms to assess credit risk. Because these modular data infrastructures are reused by multiple services, a single relational data point, like being frequently contacted by someone with delinquent accounts, can propagate silently from one service to the next without user awareness or recourse. This hidden standardization of social proximity as a credit proxy turns modular software dependencies into enduring risk exposures. Fairness audits rarely consider this dynamic, because they focus on model outputs rather than on the upstream data supply chain, which leaves infrastructural capture as a concealed source of systemic bias.

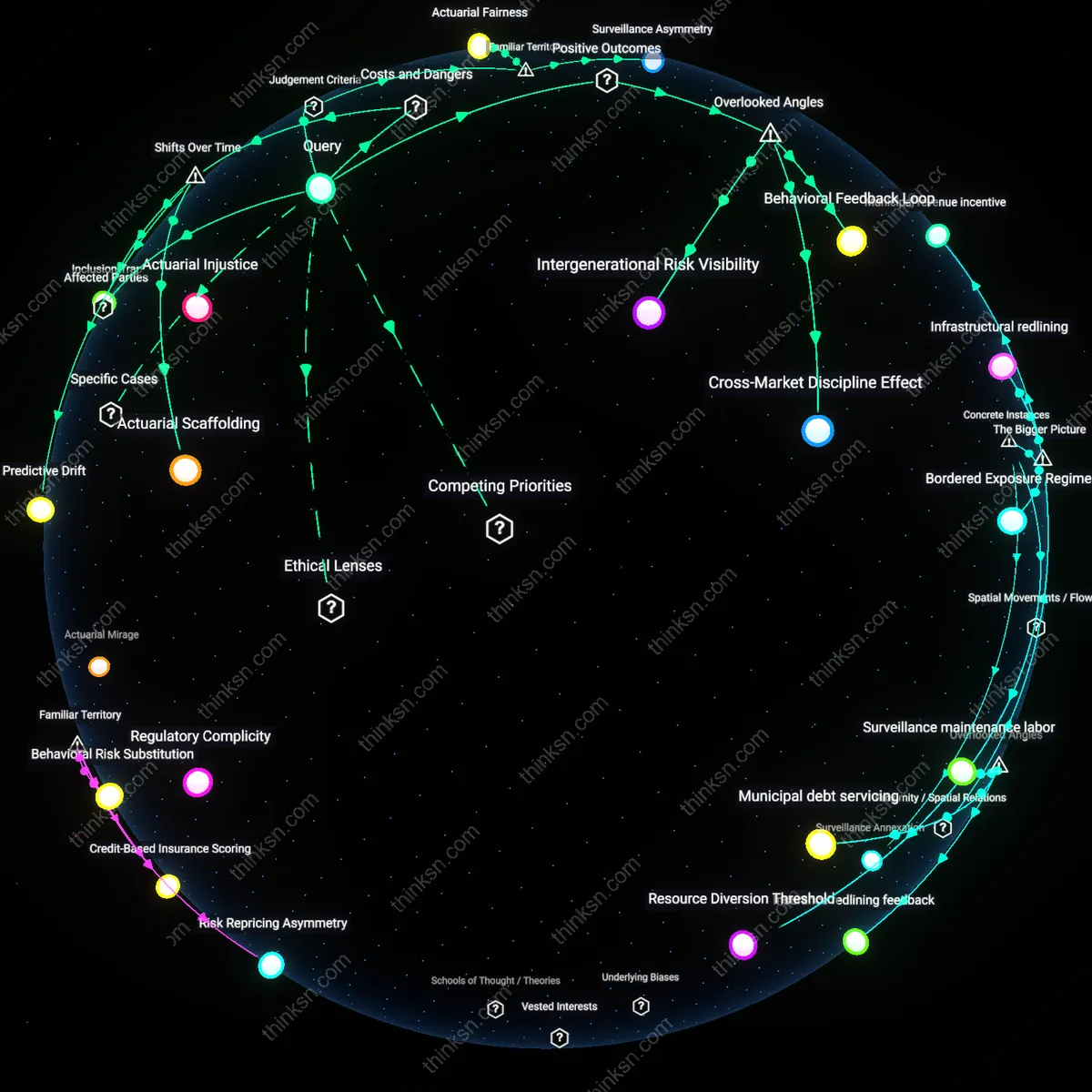

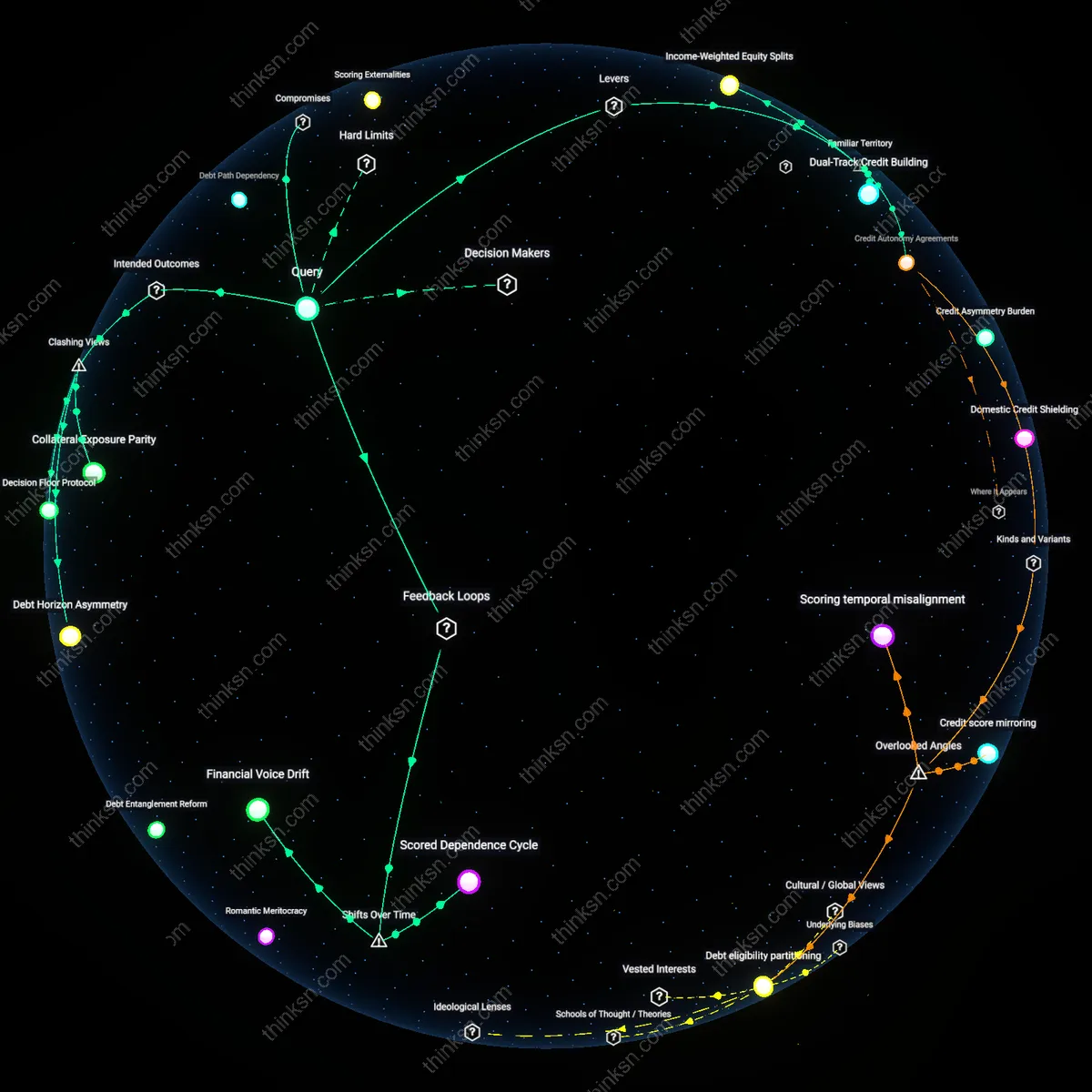

Temporal Mismatch

Relational data in mobile credit scoring imposes a temporal mismatch: it treats socially dynamic relationships as fixed financial attributes. Algorithms freeze social ties at a single point, such as the moment a user first invites a contact, and use that snapshot to generate long-lasting risk assessments that ignore how relationships evolve. Consider a user in the Philippines who receives a loan through a telco-backed fintech service based on their connection to a college roommate who later defaults. The model does not retroactively adjust for the relationship's dissolution or for growing social distance, so the user stays locked into an outdated network identity. This rigidity creates a lag between lived social reality and its algorithmic representation. It distorts accountability by binding financial outcomes to transient affiliations, and it challenges the assumption that relational data improves accuracy, since in practice it fossilizes context.
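
The gap between a frozen snapshot and a time-aware view of a tie can be made concrete with a small sketch. Everything here is hypothetical: the `Tie` record, the 50-point penalty, and the 180-day half-life are illustrative assumptions, not parameters from any real scoring system.

```python
from dataclasses import dataclass


@dataclass
class Tie:
    """One social tie as a scoring model might record it (hypothetical schema)."""
    contact_id: str
    contact_defaulted: bool
    days_since_last_interaction: int


def frozen_penalty(ties, penalty_per_default=50.0):
    """Snapshot model: a defaulted contact counts in full, however stale the tie."""
    return sum(penalty_per_default for t in ties if t.contact_defaulted)


def decayed_penalty(ties, penalty_per_default=50.0, half_life_days=180.0):
    """Decay model: a tie's weight halves for every half_life_days without contact."""
    total = 0.0
    for t in ties:
        if t.contact_defaulted:
            weight = 0.5 ** (t.days_since_last_interaction / half_life_days)
            total += penalty_per_default * weight
    return total


# Synthetic example: a roommate not contacted for two years, and a sibling.
ties = [
    Tie("former_roommate", contact_defaulted=True, days_since_last_interaction=720),
    Tie("sibling", contact_defaulted=False, days_since_last_interaction=3),
]

print(frozen_penalty(ties))   # 50.0
print(decayed_penalty(ties))  # 3.125, i.e. 50 * 0.5 ** (720 / 180)
```

Under these assumptions the frozen model charges the full penalty for a tie that has been dormant for two years, while the decayed model has discounted it to about a sixteenth of that. Decay does not make association-based scoring fair; it only shows how much of the penalty comes from ignoring time.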

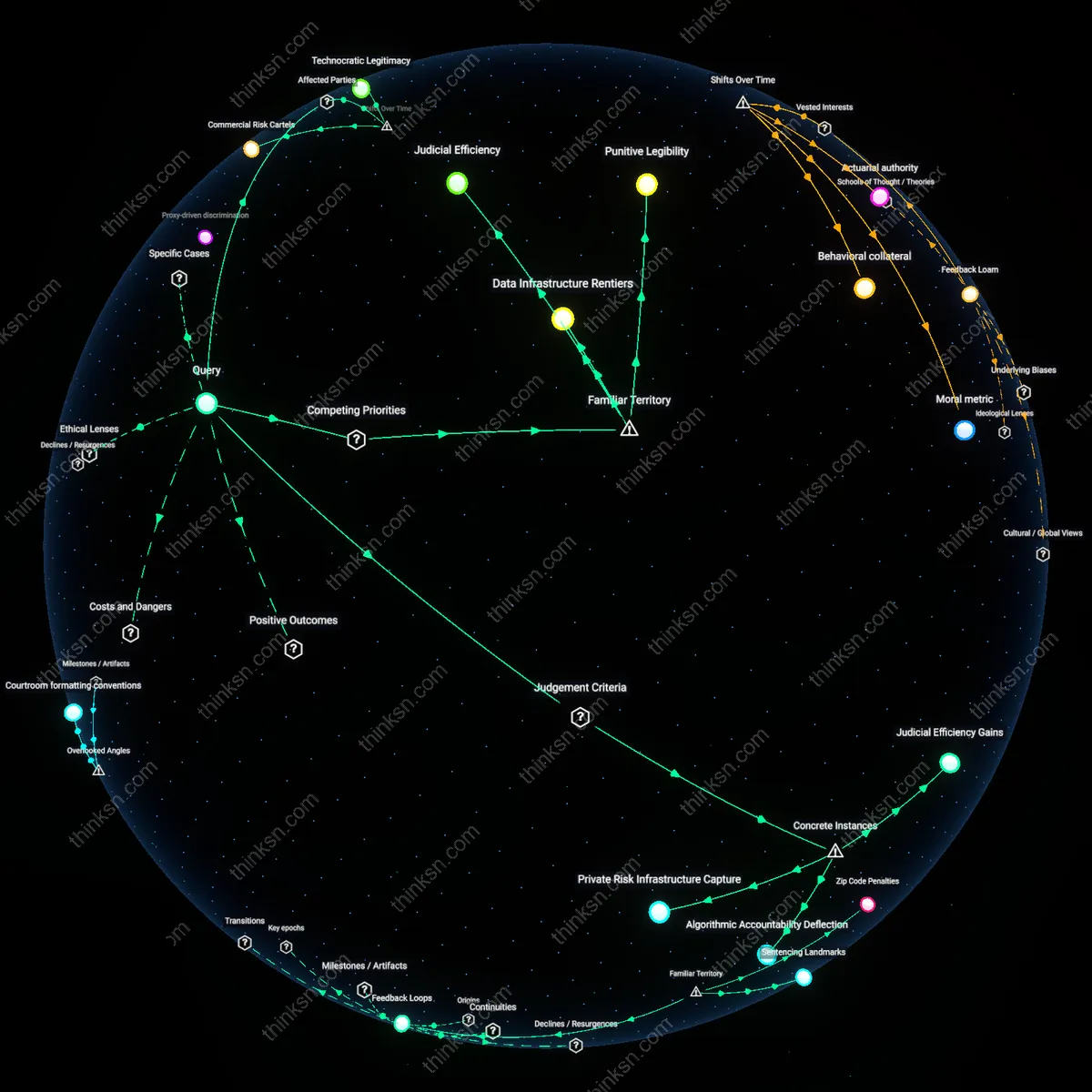

Predictive Displacement

Since the mid-2010s, the integration of relational data into credit algorithms has shifted risk assessment from individual financial behavior to networked associations, making users accountable for the economic patterns of their contacts. The assessment moves beyond income or repayment history: it uses metadata from social connections, such as frequent transactions with underbanked individuals or proximity to high-default geographies, to probabilistically downgrade creditworthiness. Operating within proprietary models used by fintech apps like Chime or Affirm, this mechanism evades traditional anti-discrimination safeguards by framing exclusion as statistical necessity rather than intentional bias. What is historically distinctive is the outsourcing of credit judgment from regulated banks to app-based platforms, where algorithmic logic normalizes indirect punishment through association and so alters the moral framework of financial responsibility.

Data Redlining

As free financial apps expanded after 2018 by monetizing user behavior through embedded credit scoring, they began replicating the geographic and racial exclusions of 20th-century redlining under a new technical guise, using relational proximity to disqualify users in structurally unequal neighborhoods. Unlike the explicitly race-based policies of mid-century lending, today's models use network density, device type, and app usage frequency to indirectly identify and penalize residents of historically marginalized areas like South Chicago or North Philadelphia. These inputs are formally race-neutral, but they correlate strongly with segregation patterns amplified by decades of disinvestment, so algorithms trained on relational data absorb spatial inequities and re-inscribe them as behavioral risk. The shift from explicit exclusion to opaque statistical filtering shows how regulatory lag lets old hierarchies persist through new data logic.
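
One way to see how a formally neutral input can act as a proxy is to compare approval rates across groups for a rule that never looks at group membership. The sketch below uses synthetic records and a hypothetical rule (approve when `network_density` is at least 0.5). The function names are illustrative, and the 0.8 threshold mentioned in the comments is the "four-fifths" screening heuristic from US employment guidance, borrowed here only as a familiar yardstick.

```python
def approve(record, min_density=0.5):
    """Hypothetical rule: it sees only network density, never the group label."""
    return record["network_density"] >= min_density


def approval_rate(records, group):
    """Share of applicants in `group` that the rule approves."""
    rows = [r for r in records if r["group"] == group]
    return sum(approve(r) for r in rows) / len(rows)


def adverse_impact_ratio(records, protected, reference):
    """Protected-group approval rate divided by reference-group approval rate."""
    return approval_rate(records, protected) / approval_rate(records, reference)


# Synthetic data: density is lower on average in neighborhood "B", for reasons
# (for example, disinvestment) that have nothing to do with repayment behavior.
records = (
    [{"group": "A", "network_density": d} for d in (0.8, 0.7, 0.9, 0.6, 0.4)]
    + [{"group": "B", "network_density": d} for d in (0.3, 0.5, 0.2, 0.6, 0.4)]
)

ratio = adverse_impact_ratio(records, protected="B", reference="A")
print(approval_rate(records, "A"))  # 0.8
print(approval_rate(records, "B"))  # 0.4
print(ratio < 0.8)                  # True: falls below the four-fifths yardstick
```

The rule contains no reference to neighborhood or race, yet the outcome gap is large, because the input it relies on is unevenly distributed across groups.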

Regulatory Lag

Loose oversight of fintech credit models allows unregulated data proxies to entrench bias in lending decisions made by free apps. Regulatory bodies like the Consumer Financial Protection Bureau lack updated authority to monitor how algorithms weight non-traditional relational data, such as social network patterns or behavioral metadata, collected by apps like Chime or Cash App. As a result, indirect proxies for race or class can skew risk assessments without formal accountability. The gap is systemically significant because it moves the determination of creditworthiness into opaque technical systems while sidestepping both the anti-discrimination guardrails of the Equal Credit Opportunity Act and the accuracy and dispute protections of the Fair Credit Reporting Act, statutes designed for traditional lenders and credit bureaus rather than app-based data ecosystems.

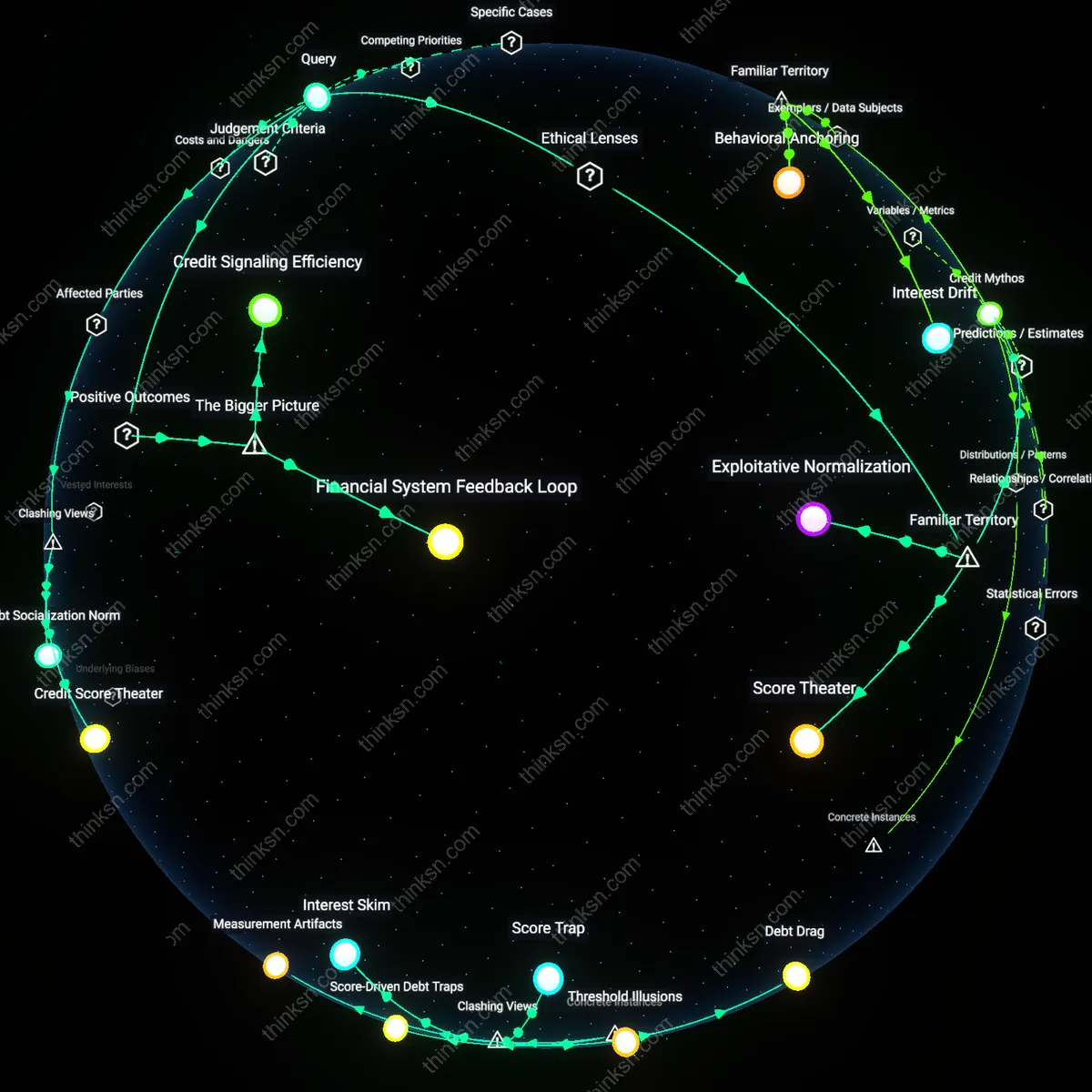

Scoring Feedback Loops

Relational credit data reinforces existing inequality by treating historically deprived networks as indicators of individual risk, not structural constraint. When algorithms interpret a user’s limited connections to banked individuals or frequent small transfers to kin as red flags, they pathologize survival behaviors common in unbanked communities, reducing approval odds and embedding past exclusion into future access. This mechanism is systemic in that it converts social capital deficits—themselves outcomes of redlining or wage stagnation—into algorithmic penalties, making the score both a mirror and an engine of inequality.
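
The "mirror and engine" dynamic can be illustrated with a toy simulation. All of it is assumed for illustration: the score formula, the cutoff, and especially the rule that an approval adds one banked tie while a denial removes one. No real scoring system is being described.

```python
def simulate(banked_ties, rounds=5, base=500, points_per_tie=20, cutoff=600):
    """Return a list of (score, approved) pairs, one per scoring round.

    Hypothetical dynamics: the score rises with the number of banked ties.
    An approval adds one banked tie and a denial removes one (never below zero).
    """
    history = []
    for _ in range(rounds):
        score = base + points_per_tie * banked_ties
        approved = score >= cutoff
        history.append((score, approved))
        if approved:
            banked_ties += 1
        else:
            banked_ties = max(banked_ties - 1, 0)
    return history


# Two synthetic users who start only two banked ties apart.
well_connected = simulate(banked_ties=6)
thinly_connected = simulate(banked_ties=4)

print(well_connected[0], well_connected[-1])      # (620, True) (700, True)
print(thinly_connected[0], thinly_connected[-1])  # (580, False) (500, False)
```

A 40-point initial gap grows to 200 points after five rounds, because each decision feeds the very input that drives the next one.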

Data Proxy Loops

Relational data in credit scoring harms users less through direct bias than by letting financial apps work around consumer protection laws designed for credit decisions, which embeds social connections as secondary risk proxies. Fintech platforms in the United States increasingly rely on network behaviors, such as payment patterns within app-based social groups, to infer creditworthiness. This practice largely escapes scrutiny under the Equal Credit Opportunity Act, whose enforcement centers on credit decisions rather than on pre-scoring data harvesting. The result is a feedback loop in which race- or class-correlated relational patterns become structural inputs without triggering legal scrutiny, making discrimination invisible to existing regulatory frameworks. The non-obvious risk is not a flawed algorithm but the lawful absence of oversight at the data ingestion stage, which lets relational proxies enter scoring systems undetected.

Consent Illusion

Users of free financial apps are exposed to discrimination not because they unknowingly surrender data, but because the design of consent flows in app interfaces structurally prevents them from refusing relational data collection without losing core functionality. In some peer-focused budgeting and bill-splitting apps operating in the EU, users must link social contacts to use basic features, and privacy notices obscure the fact that these connections are later used to model risk. Feature bundling of this kind bypasses the intent of GDPR's purpose limitation principle. Granular opt-outs are often buried or non-existent, which renders consent performative rather than operational. This challenges the dominant view that user awareness or transparency can mitigate harm, and it shows that compliance theater sustains exploitative data flows even under strong privacy regimes.

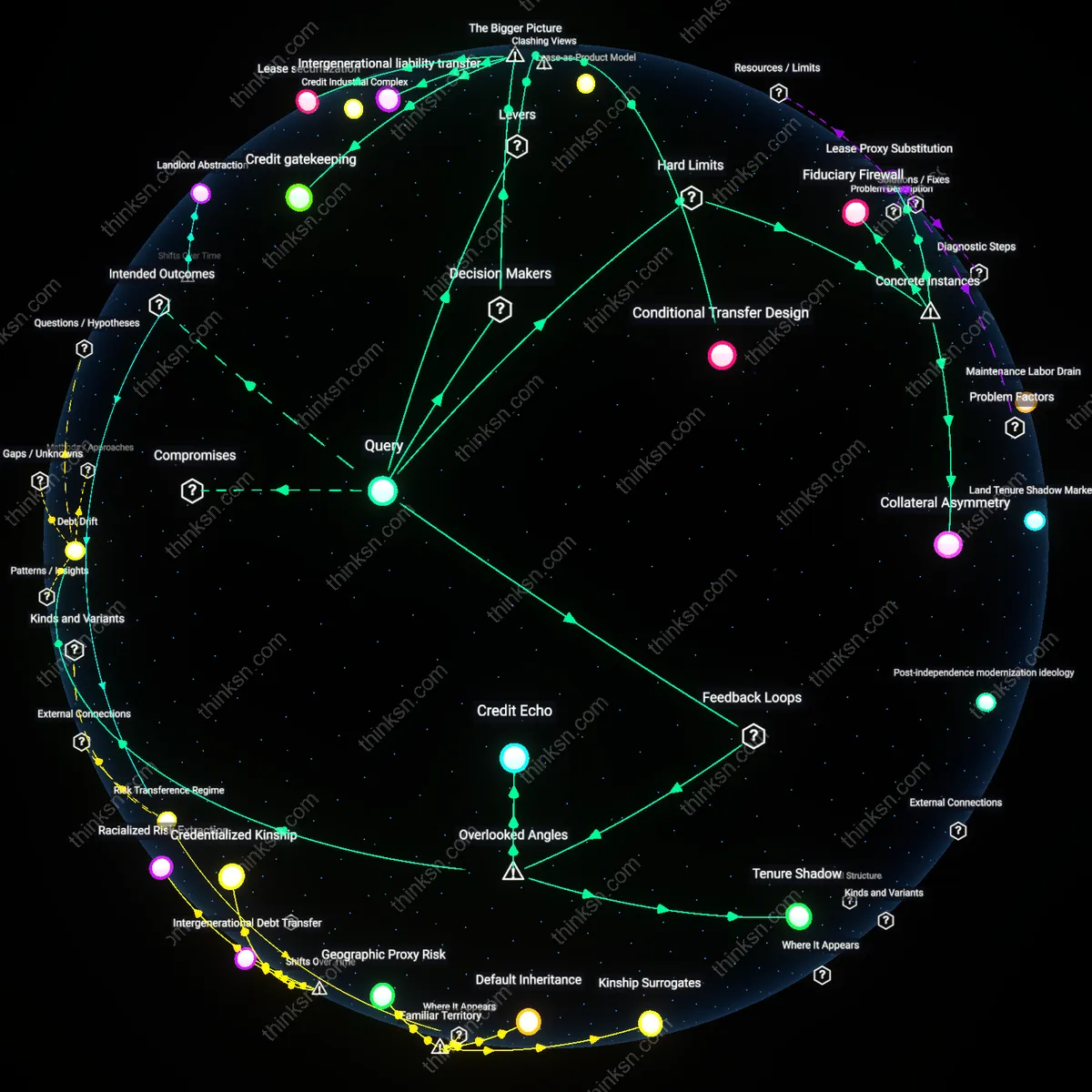

Scoring Spillover

Discrimination risk from relational credit scoring stems not from individual profiling but from the redistribution of financial liability onto third parties who never consented to be scored, which turns passive social ties into silent guarantors. When apps in countries like India use WhatsApp group transactions to assess members' credit risk, a person's score can degrade because of others' behavior, such as a fellow group member defaulting, even if that person has a perfect repayment history. The effect is an unregulated, invisible form of co-signing enforced through algorithmic networks, in which risk is pooled without contractual awareness. The non-obvious reality is that discrimination here is not vertical (lender to user) but lateral: it reshapes accountability across informal networks in ways that evade both contract law and anti-discrimination statutes.
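
The lateral mechanism described above can be sketched in a few lines. The names, group structure, and 40-point penalty are all hypothetical; the point is only to show how one member's default can lower the scores of co-members who did nothing.

```python
def spillover_scores(own_scores, groups, defaulted, penalty=40):
    """Apply a per-defaulter penalty to every other member of a shared group.

    own_scores: member -> score based only on that member's own history
    groups:     group name -> list of members
    defaulted:  set of members who have defaulted
    A member's own default is not penalized here; only the spillover is modeled.
    """
    scores = dict(own_scores)
    for members in groups.values():
        defaulters = {m for m in members if m in defaulted}
        for m in members:
            hits = len(defaulters - {m})  # co-members who defaulted
            scores[m] -= penalty * hits
    return scores


# Synthetic example: asha shares a rent-splitting group with ravi, who defaults.
own = {"asha": 750, "ravi": 700, "meera": 720}
groups = {"rent_split": ["asha", "ravi"], "family": ["asha", "meera"]}

result = spillover_scores(own, groups, defaulted={"ravi"})
print(result)  # {'asha': 710, 'ravi': 700, 'meera': 720}
```

In this toy setup asha loses 40 points despite an unchanged repayment record, while meera, who shares no group with ravi, is unaffected. Asha never agreed to guarantee anything, which is what makes the liability lateral rather than contractual.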