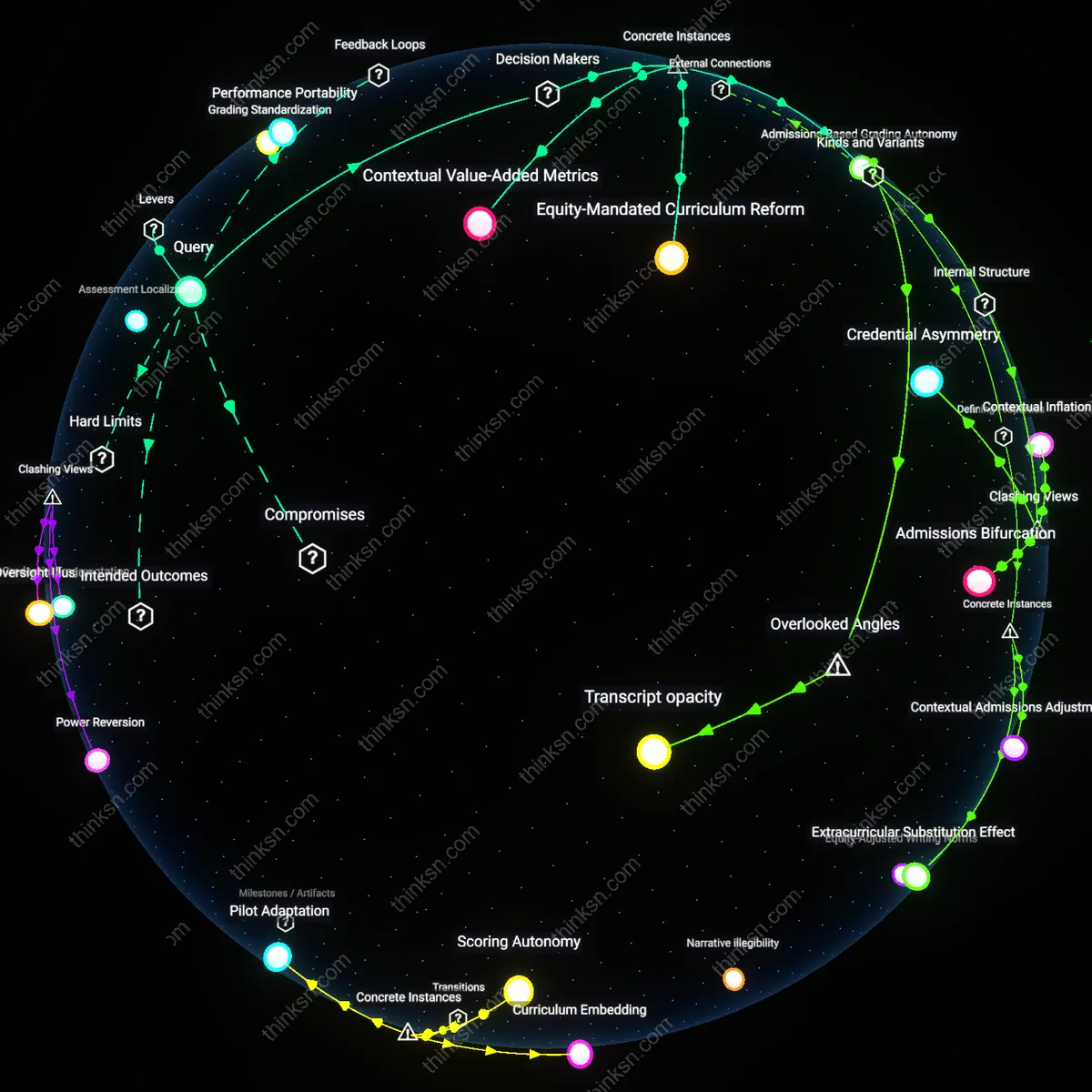

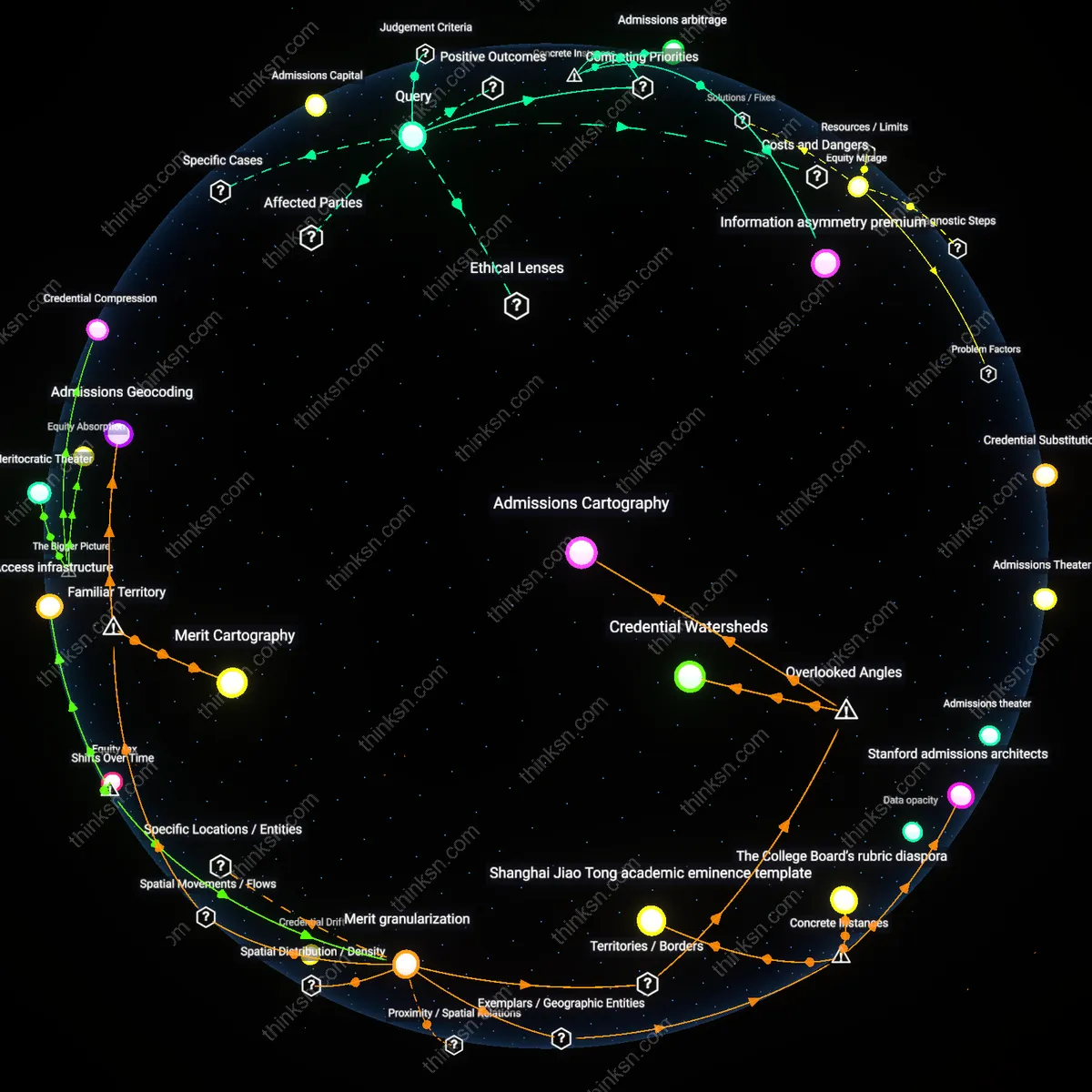

Does Reducing Bias With AI Credit Scoring Risk Perpetuating Segregation?

Analysis reveals 10 key thematic connections.

Key Findings

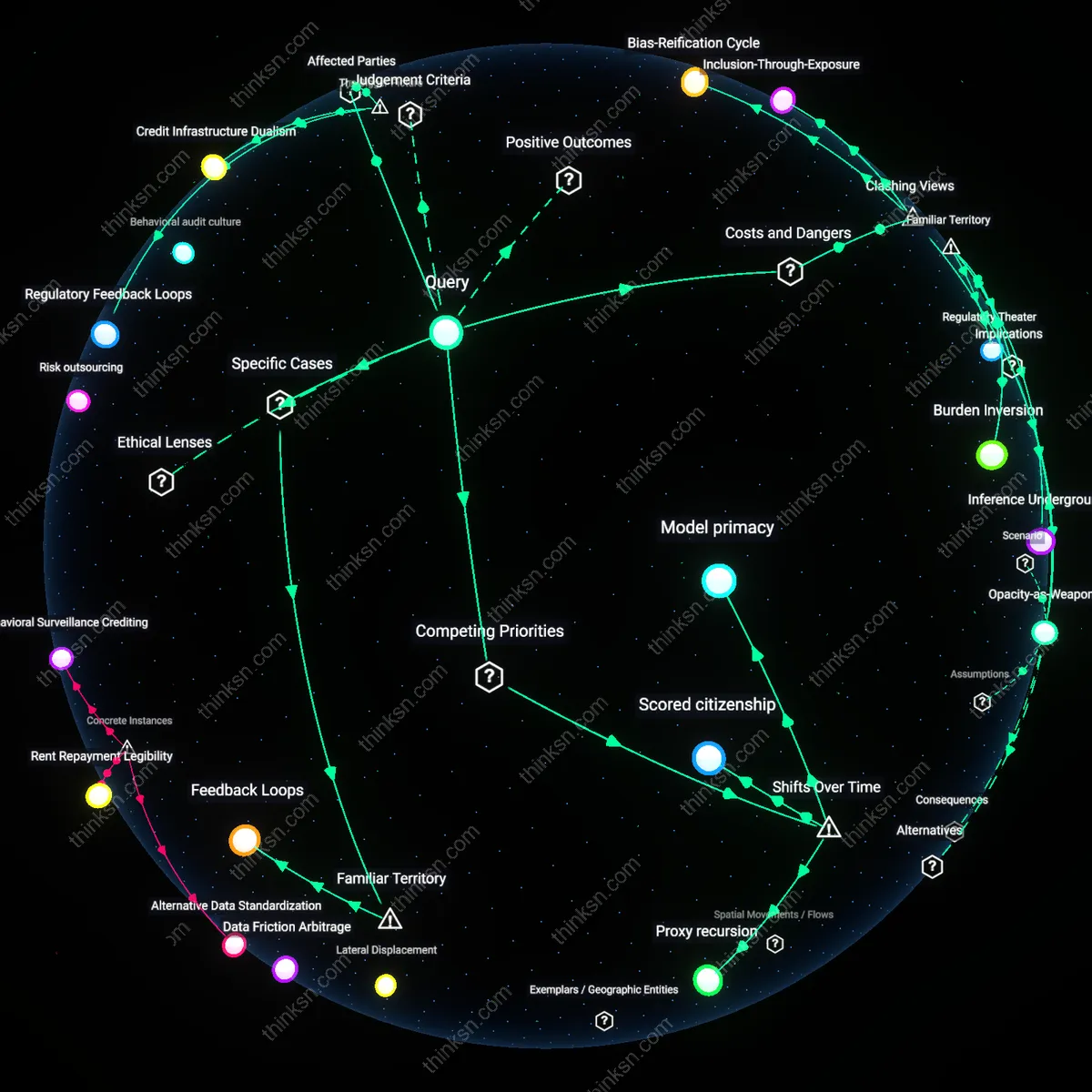

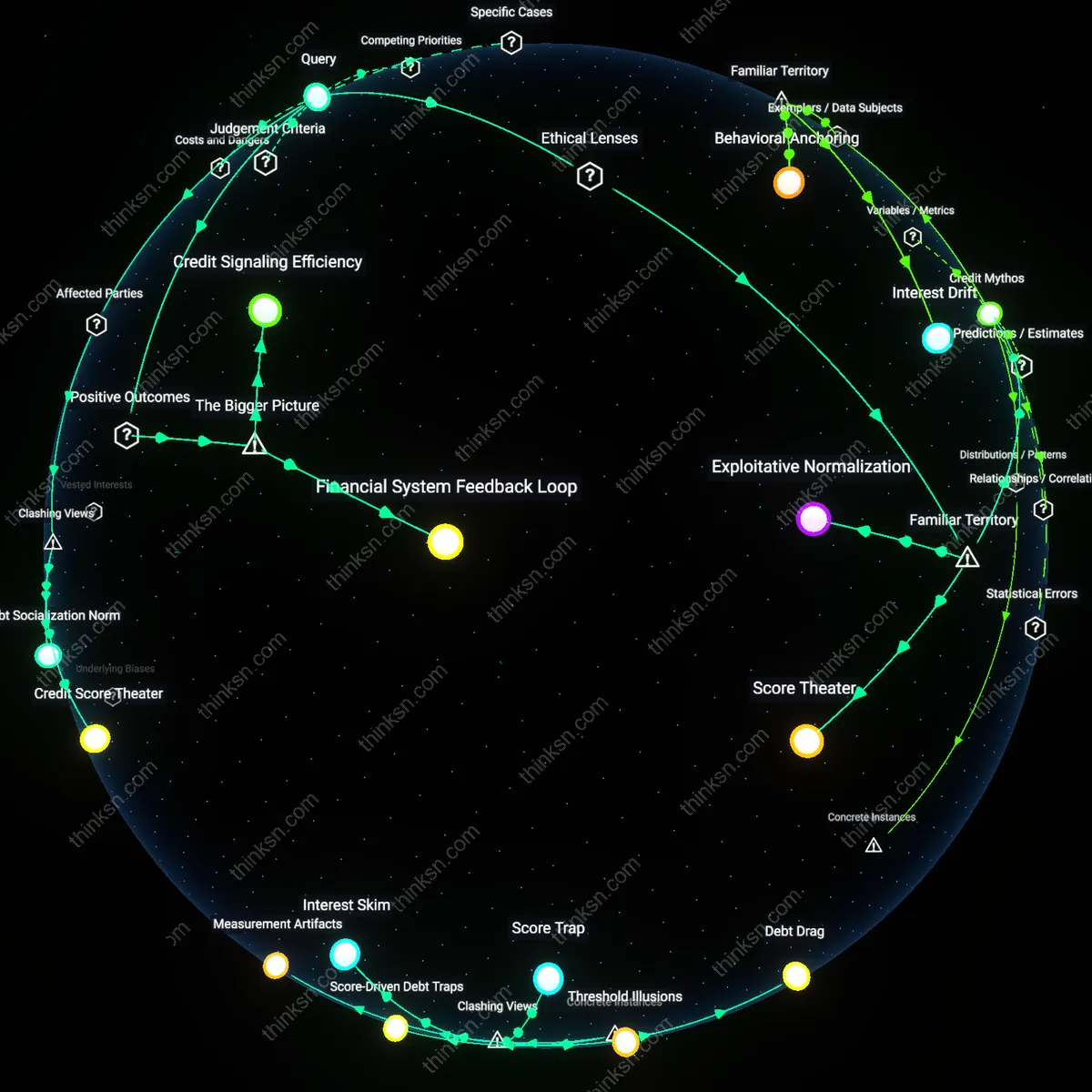

Regulatory Feedback Loops

Independent algorithmic audits mandated by financial regulators can align AI credit scoring with equity goals by forcing transparency without stifling innovation. Regulatory bodies like the CFPB or EBA can require version-controlled model disclosures from fintech lenders and banks, enabling third-party analysts to detect bias in training data or feature weighting, particularly in ZIP-code-based proxy variables that correlate with race or class. This works because regulatory enforcement creates a feedback loop: noncompliance triggers public scrutiny and financial penalties, which gives firms an incentive to adjust their models preemptively. Regulation therefore does not merely constrain private-sector AI systems; through iterative compliance it can actively shape how they evolve, turning oversight into a dynamic governance mechanism rather than a static barrier.
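As a rough illustration of what a third-party audit of disclosed decisions might compute, the sketch below compares approval rates across groups (for example, ZIP code clusters) against a reference group. The function names, the input shape, and the 0.8 threshold are assumptions made for this example; the threshold is borrowed from the "four-fifths" rule of thumb in US employment-selection guidance and is not a lending-law standard stated in this analysis.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return {group: approval rate} from (group_label, approved) pairs."""
    counts = defaultdict(lambda: [0, 0])  # group -> [approved, total]
    for group, approved in decisions:
        counts[group][0] += int(approved)
        counts[group][1] += 1
    return {g: a / n for g, (a, n) in counts.items()}


def adverse_impact_ratios(decisions, reference_group):
    """Divide each group's approval rate by the reference group's rate."""
    rates = approval_rates(decisions)
    ref = rates[reference_group]
    if ref == 0:
        raise ValueError("reference group has no approvals")
    return {g: r / ref for g, r in rates.items()}


def flag_disparities(decisions, reference_group, threshold=0.8):
    """List groups whose ratio falls below the (assumed) threshold."""
    ratios = adverse_impact_ratios(decisions, reference_group)
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)
```

An auditor could run `flag_disparities` on each disclosed model version and track whether the flagged set shrinks over successive compliance cycles. A ratio alone does not establish discrimination; it only marks where closer review is warranted.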

Credit Infrastructure Dualism

Expanding public credit repositories with alternative data—such as utility payments or rental history—can counterbalance opaque private AI models by creating a transparent, inclusive baseline scoring system. Institutions like the Federal Reserve or municipal credit unions can administer these repositories, drawing from data sources that underbanked populations regularly generate but are typically excluded from commercial models. This functions because public infrastructure operates outside profit-driven data extraction logics, allowing for deliberate inclusion and algorithmic accountability. The underappreciated dynamic is that dual credit ecosystems—one public, one private—can create competitive pressure for fairness, where transparent public benchmarks expose discriminatory departures in proprietary systems.

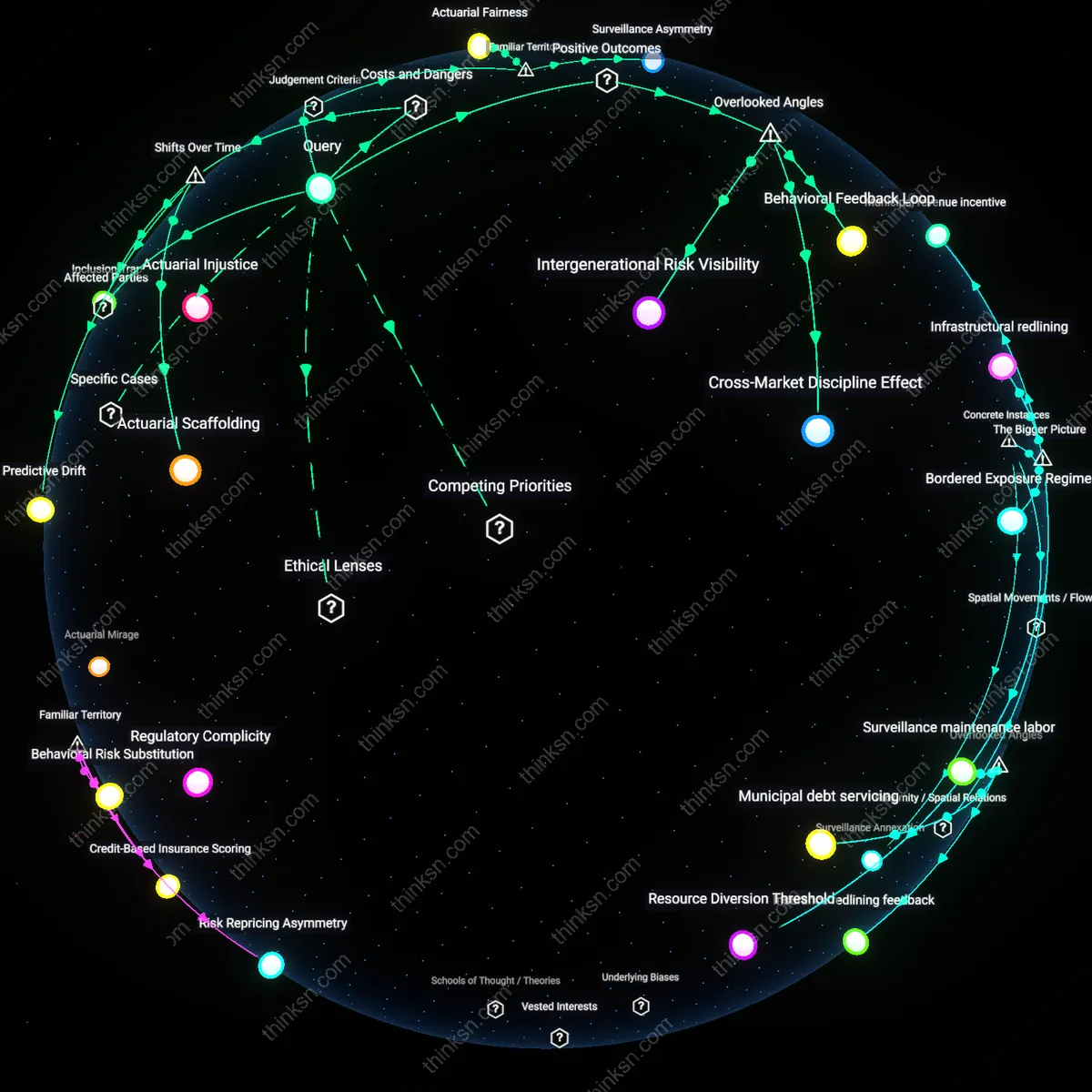

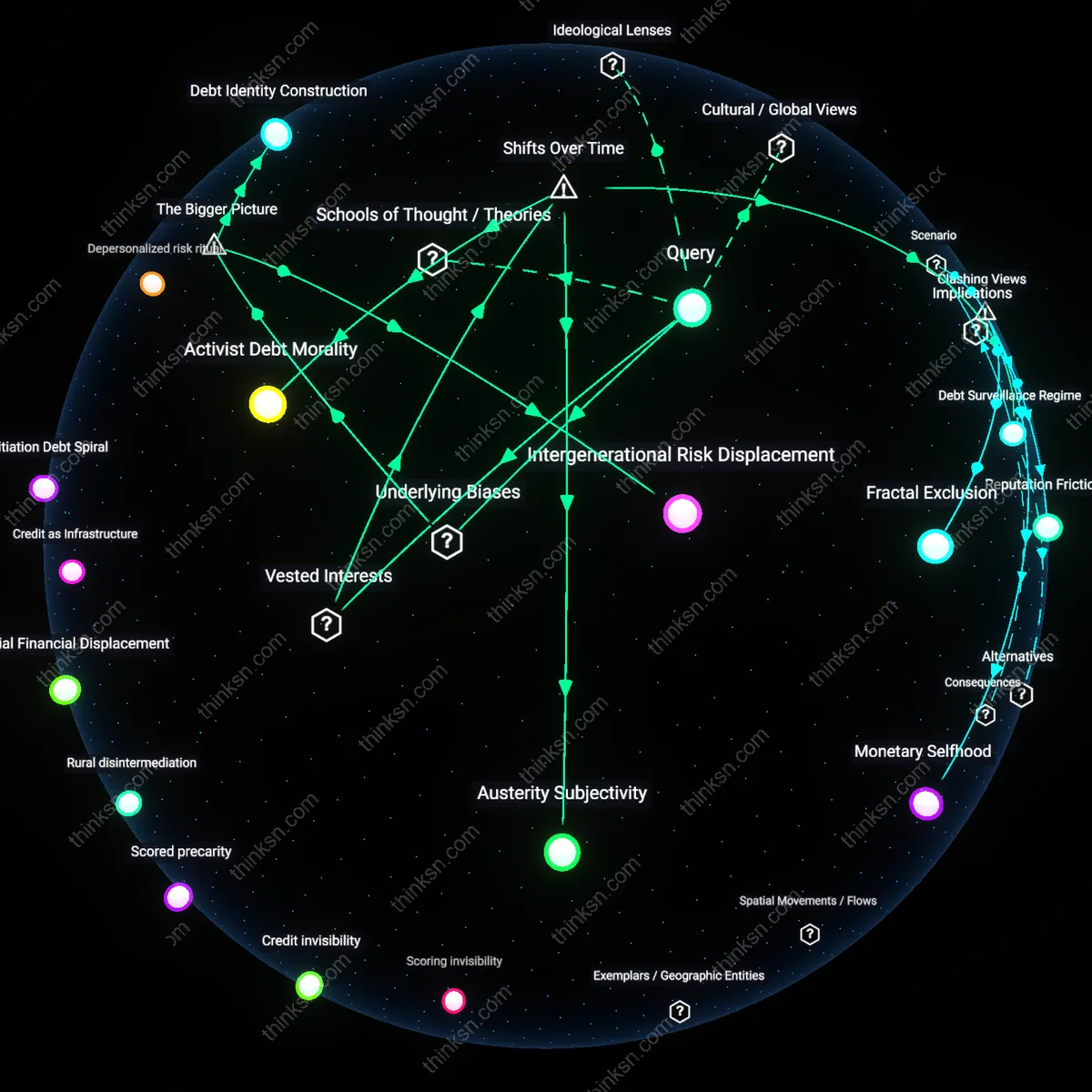

Feature Engineering Inheritance

AI credit models deepen segregation when they repurpose legacy financial variables—like 'length of employment' or 'credit utilization ratio'—that encode historical disadvantage into new decision frameworks. Major lenders and fintech developers rely on these variables because they are validated by decades of actuarial practice, but their continued use assumes past stability in labor and housing markets, which no longer holds for gig workers or marginalized renters. This perpetuates bias not through overt discrimination but through seemingly neutral technical choices that inherit structural inequities as 'features.' The critical insight is that algorithmic bias is less about data opacity than about the unrecognized sociotechnical lineage of variable selection—where engineering conventions silently embed exclusion.

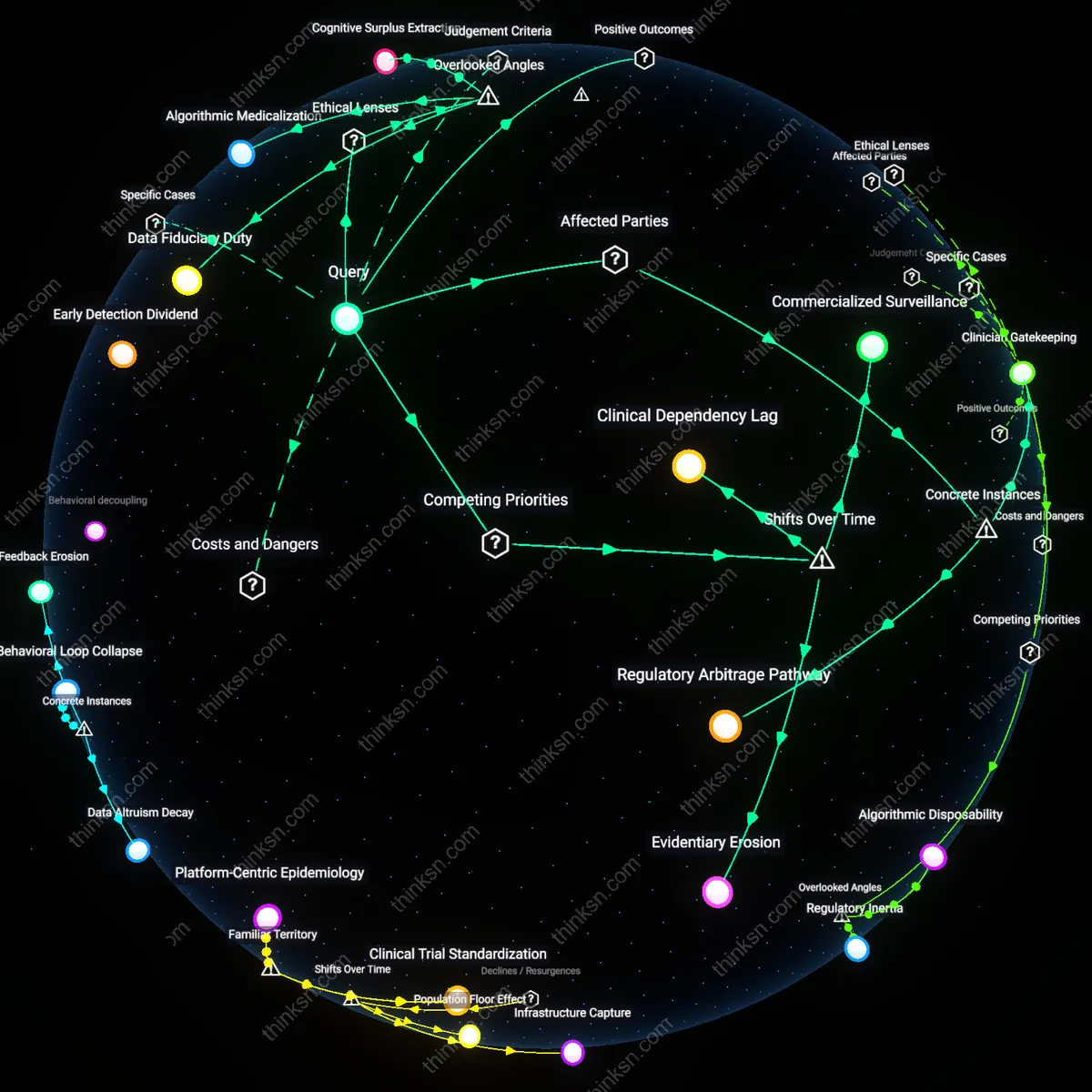

Opacity-as-Weapon

AI-driven credit scoring deepens socioeconomic segregation not despite its opacity but because its opacity is weaponized by financial institutions to bypass regulatory scrutiny while maintaining plausible deniability for discriminatory outcomes. Institutions such as fintech lenders in the U.S. Sun Belt exploit the complexity of black-box models to justify risk-based pricing that disproportionately rejects applicants from historically redlined ZIP codes, masking structural exclusion under the veneer of algorithmic neutrality—thereby transforming lack of transparency into a deliberate compliance loophole. This dynamic reveals that algorithmic opacity is not a technical flaw but a strategic liability shield, reframing transparency efforts as secondary to power asymmetries in data governance.

Bias-Reification Cycle

The deployment of AI in credit scoring entrenches bias by reifying historical inequities under the guise of statistical optimization, where machine learning systems trained on decades of discriminatory lending data—such as those used by major U.S. banks in subprime auto lending—automatically replicate racial and class disparities under the logic of risk prediction. By treating past exclusion as a stable input for future decisions, these systems convert social injury into financial risk metrics, making segregation appear statistically rational rather than politically contestable. This challenges the notion that better data or models can correct bias, exposing instead how statistical objectivity becomes a mechanism for legitimizing hierarchy.

Inclusion-Through-Exposure

The push for AI-driven credit inclusion actually increases vulnerability among marginalized populations by extending financial reach not through empowerment but through targeted extraction, as seen in the rollout of algorithmic microloan platforms in Nairobi’s informal settlements. These systems lower entry barriers not to uplift but to capture data and surplus from previously unbanked groups, using real-time transaction monitoring and social graph tracking to enforce repayment—transforming inclusion into a regime of hyper-surveillance and debt discipline. This inverts the progressive narrative around financial access, revealing that broader scoring coverage often means deeper entrapment in extractive financial ecosystems.

Model Primacy

Prioritize public auditing of training data over deployment speed to expose how post-2008 financial deregulation enabled unmonitored feedback loops between credit algorithms and housing markets. Regulators, fintech firms, and data brokers now treat model stability as a technical necessity, masking how historical redlining data—repurposed in AI systems after 2010—becomes legitimized through predictive accuracy metrics, obscuring its role in reproducing spatial inequality. This shift from rule-based scoring to dynamic learning systems since the 2010s reveals that opacity is not a flaw but a functional feature that resists external interpretation while adapting to market shocks, making transparency efforts reactive rather than preventative.

Scored Citizenship

Treat creditworthiness as an enforced social contract by recognizing how welfare-to-work reforms in the 1990s transitioned into algorithmic behavioral conditioning after 2015, particularly in municipal financial inclusion programs. Cities like Chicago and Atlanta began linking access to public transit subsidies and utility billing to AI credit scores around 2017, transforming what was once a financial assessment tool into a mechanism of civic participation. This post-welfare state trajectory shows that reducing bias in scoring models does not diminish segregation but instead reconfigures it around conduct-based inclusion, where marginalized populations must perform financial visibility to receive basic services, normalizing surveillance as a condition of belonging.

Proxy Recursion

Constrain the use of indirect indicators in AI models by tracing how fair lending laws from the 1970s were circumvented after 2012 through the rise of alternative data, such as grocery spending or mobile phone usage, as proxies for credit risk. Fintech lenders like Upstart and Affirm justified these inputs as bias-reducing innovations, yet their adoption coincided with a shift from income-based lending to behavioral inference systems that re-aggregate disadvantage along racial and geographic lines. The turn to machine learning after the 2008 crisis enabled deeper layers of proxy logic: each attempt to remove explicit variables like race led to more cryptic recombinations of socioeconomic signals. Algorithmic segregation thus emerges not from static models but from iterative proxy refinement over time.
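One simple way to check whether an alternative-data input behaves as a proxy is to measure how strongly it tracks a protected attribute that the model never sees. The sketch below does this with a plain Pearson correlation against a 0/1 group indicator. The names `pearson` and `proxy_report`, the feature names in the usage note, and the 0.5 cutoff are all hypothetical choices for illustration.

```python
import math


def pearson(xs, ys):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant column carries no signal
    return cov / math.sqrt(var_x * var_y)


def proxy_report(features, protected, cutoff=0.5):
    """Return {feature: r} for features whose |r| with the 0/1
    protected indicator meets the (assumed) cutoff."""
    scores = {name: pearson(vals, protected) for name, vals in features.items()}
    return {name: r for name, r in scores.items() if abs(r) >= cutoff}
```

For example, `proxy_report({"grocery_spend": [...], "phone_hours": [...]}, protected)` would return only the inputs that correlate strongly with group membership. A pairwise check like this misses the recombinations described above, where several individually weak signals jointly reconstruct the removed variable; a fuller audit would test how well all inputs together predict the protected attribute.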

Feedback Loops

Regulate opaque AI credit models by embedding audits within the financial institutions that actively deploy them, such as Upstart, in lending markets where repayment data is fed back into algorithmic weights. That retraining tightens a cycle in which historical disparities in approval rates become future risk thresholds, even if the initial model design appears neutral. Most public debate centers on fairness in code rather than on how operational data flows reinforce exclusion through learning loops, which makes the recursive nature of model refinement the underappreciated core of segregation risk.
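The learning loop described here can be sketched as a toy, deterministic simulation. It assumes a lender that keeps one estimated repayment rate per group, approves a group only when that estimate clears a cutoff, and observes repayment only for loans it approved. Every name and number below (`simulate`, `cutoff`, `prior_weight`, `exploration`) is a hypothetical chosen for illustration and does not describe any real lender's system.

```python
def simulate(true_rate, initial_estimate, rounds=20, applicants=100,
             cutoff=0.80, prior_weight=50.0, exploration=0.0):
    """Return a list of (approval_share, estimate) per round.

    Outcomes are expected values, so the run is fully deterministic.
    `exploration` is the share of below-cutoff applicants approved anyway,
    a stand-in for an audit-driven intervention (an assumption).
    """
    estimate = initial_estimate
    weight = prior_weight  # how much evidence backs the current estimate
    history = []
    for _ in range(rounds):
        share = 1.0 if estimate >= cutoff else exploration
        approved = applicants * share
        repaid = approved * true_rate  # only approved loans yield data
        estimate = (estimate * weight + repaid) / (weight + approved)
        weight += approved
        history.append((share, estimate))
    return history
```

With `exploration=0.0`, a group that starts below the cutoff is never approved, so no repayment data arrives and its estimate never moves, even when its true repayment rate matches the favored group's. Setting a small positive `exploration` lets evidence accumulate until the estimate crosses the cutoff, which is one way to picture what an embedded audit would need to change in the data flow rather than in the code.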